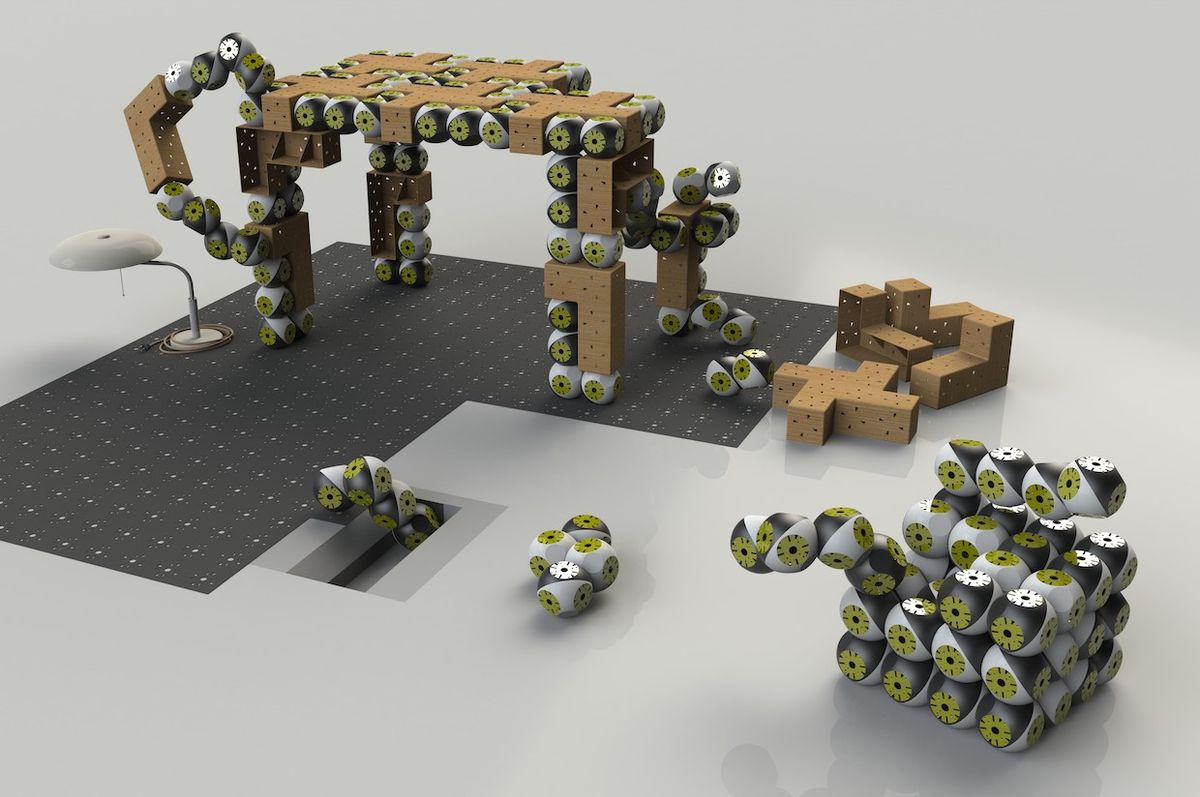

For the last decade-ish, EPFL’s Roombots have been modularizing their way towards becoming the only piece of furniture you’ll ever need. These little squarish roundish robotics modules, which can move around and latch onto each other, can collaboratively form chairs, tables, or whatever else you need or want. The idea is that you’d invest in a pile of Roombots, the pile size being proportional to the number of people and animals in your house, and then whatever bits of furniture you desire would be dynamically created (and then “destroyed”) through the intelligent and autonomous cooperation of your Roombots pile on an as-needed basis.

Roombots are a very compelling idea, especially for those of us who have small apartments. Like, I have a dining room table and four chairs. If I want to have more than a couple people over for dinner, they’d better bring their own chairs, because I don’t have anywhere for them to sit. If my furniture was Roombots, though, my bed could just disassemble itself to make more chairs when I needed them. Or, I could store a bunch of extra Roombot modules in my closet, and bring them out when needed.

In a new Roombots paper, researchers from EPFL’s Biorobotics Laboratory, led by Professor Auke Ijspeert, have demonstrated some practical (although still very research-y) swarm transformations, while also experimenting with how Roombots can interact with existing furniture to give it new capabilities—chairs that follow you, chairs that flee from you, and tables that can pick objects up off the floor.

Here are some examples of what the most recent swarm of Roombots can do:

The video shows a combination of system-level autonomous behaviors for complex coordinated tasks, and manual control to demonstrate specific hardware capabilities or new functionality that’s still in the proof-of-concept stage. For example, the chair formation video is all autonomous (except for that little bit of human nudging), as are the user following, avoidance, and hand tracking, using a ceiling-mounted Kinect and an external computer. The Roombots moving the coffee table are controlled manually, and the bottle opening is a combination of user control and motion primitives.

The Roombots themselves have evolved quite a bit over the last five years. The internal mechanics have been redesigned, with a new low-backlash gearbox, new connection mechanism, and new electronics. Also new are the gripper system, LEDs, spotlight, and proximity sensors. And most importantly, the number of active Roombot modules has increased from just two (the minimum you need to experiment with the basic functionality of docking and undocking and movement) to 13, which is enough to do much more exciting things with.

While Roombots (and other modular robots) have a lot of potential advantages, including relatively low per-module cost, robustness via the easy replacement of malfunctioning modules, and unmatched versatility, they’re also much harder to design and program than unitasking robots, and using modular robots is inevitably a compromise—you can almost always design a dedicated robot that’s better at a specific task than your modular robots could ever be. But where adaptability is important, in situations where you can’t necessarily know in advance what your robots will need to do or when their task changes all the time, modular robots like Roombots are (the researchers hope) worth the extra hassle.

Part of what the Roombots project is working on now is exploring through physical testing exactly what a bunch of Roombots modules can do, and whether adding some tools to the otherwise homogeneous module swarm could enable useful new capabilities. The most ambitious is likely object manipulation, which is quite a challenge for robots like these. The EPFL researchers managed to cram a small jamming gripper into a single hemisphere of a Roombots module, which is able to pick up most small, rigid objects that are within its reach. Not a lot is within the reach of a single module, but that’s where the swarm nature of Roombots comes in, because it’s easy to make the gripper module the end of an arbitrarily long (and mobile!) arm of Roombots to increase its workspace.

A lot of this paper seems to just be about the EPFL folks messing around with the Roombots to see what’s possible, what’s practical, and what needs more work, which honestly seems like a fantastic reason to publish a robotics paper. They’re still working on getting the modules to connect to each other robustly, as well as trying to manage the deformation that occurs when Roombots form larger structures, but the hope is that more completely modeling the physics of the Roombots could lead to better autonomy. It should also be noted that the current generation of Roombots, despite being able to self-assemble into things like tables and chairs, can’t actually support all that much weight, and that sitting on a Roombots chair would likely crush it. A complete redesign will likely be necessary for Roombots to move towards real world applications, and the researchers are already thinking about upgrades that include vision systems, distributed control, and even an “artificial skin” for safe human interaction.

For more details, we spoke via email with Simon Hauser and Mehmet Mutlu from EPFL.

IEEE Spectrum: Adaptive furniture is a unique application for modular robots—why are you focusing on it?

Simon Hauser and Mehmet Mutlu: Furniture is only one possible application for Roombots. Other applications could include reconfigurable space technologies (on a space station, satellite, or planetary station), reconfigurable factory lines, reconfigurable working and conference spaces, interactive art pieces, and edutainment. But we like the idea of assistive furniture because it helps people to imagine what can be done with this technology by placing it in their daily lives, and also because we feel that a person with limited mobility could benefit a lot by having a whole robotic environment (including furniture) that is assistive. We feel that is an area that is under-explored, since many roboticists focus on making multi-purpose robotic servants.

While the question of modularity can be philosophical in nature, the upshot for us is this: If you want a robot that excels at one thing, modularity is the wrong way to go. The strength of modularity shows when different tasks are asked or functions are required (although each function will be executed less optimally than a dedicated design). The question now becomes, where can we find situations where different functions are needed, and where sacrificing performance is acceptable? This is, in our opinion, a very hard question to answer, but we think reconfigurable furniture has the potential to fall into this category, while at the same time tackling an ever growing space problem in urban areas. Within this framework, we can explore all the capabilities of Roombots (self-reconfiguration, mobility, manipulation, interaction, interface) with direct links to real-world applications.

Why make Roombots this particular size? Could you make them bigger or smaller?

In principle, it would be interesting to explore other scales. Much smaller (similar to the robots in Big Hero 6) would be great, but would be difficult. Larger robots would be much simpler technically. It depends on the applications.

Usually, we can say that smaller sounds better, if the same functionality can be built into a smaller package. But we also wanted some flexibility within the modules to be able to add more features as needed. For certain applications such as furniture, actually having a single module that’s bigger, perhaps around the size of a real stool, can be more practical. However, bigger modules would make in-lab experiments with dozens of modules harder (we would need much more space), would be more expensive to produce, and would be strong or heavy enough to raise safety concerns, so additional safety features would be needed. It would mean significant engineering development rather than quicker proof-of-concept research. The current size is also big enough to pack strong motors and batteries, while small enough to be easily maintainable.

Manipulation seems particularly challenging for Roombots. Why is it important to implement?

Being able to manipulate objects, to modify the environment, is a fundamental capability expected from many robots. But the core idea of Roombots is creating modules that can do everything. And manipulation is also needed to do everything! For assistive furniture, a useful ability would be for the robot to pick up an object that has fallen on the ground (like a remote control, glasses, or a pen) and bring it to a person in a wheelchair. The modules might also be able to bring a glass of water, or a book.

It’s 10 or 20 years from now and all of my furniture is Roombots. Can you describe what a day might be like for me?

Ten years is still early, but 20 to 50 years from now…

You wake up in a bed made out of Roombots modules. While you are washing your face and brushing your teeth, the Roombots bed self-reconfigures into a table and a chair to get ready for your breakfast. While at work, you invite a few friends to come over for beers and a board game later. So your furniture at home rearranges itself into a sofa and a coffee table. While the humans are setting up the game, the Roombots table goes to the kitchen and fetches a few beers and snacks and brings them to the living room. Meanwhile, a Roombots spotlight climbs up onto the ceiling to create a more immersive atmosphere for the board game using its directional, colorful spotlight and LED rings. While playing the game, a human drops a board game piece to the floor. Roombots manipulators attached to the coffee table collaborate to pick it up from the floor and put it back on the board. And finally, while guests are leaving, Roombots create the bed again to finish the day.

A different scenario could be imagined for a person in a wheelchair with an elderly parent. The furniture could help with transitions from the bed to the wheelchair. The furniture could move out of the way when the user crosses their apartment, and then return to its original place. A mobile stool could follow the parent and remain close when they take a few steps in case they get dizzy. In case of emergency, like a fall, a Roombot stool could approach the person on the ground to allow a voice activated phone call and to provide support for standing up.

These are just some ideas; we have many more!

Going forward, do you feel like the biggest challenges for developing Roombots (and other modular robots) will be in hardware or in software?

It’s hard to separate hardware and software in robotics. But, considering the fact that we can do more in simulation and less with the real hardware, we can say that there are more hardware challenges. On the software side, a big challenge that remains to be solved is how to create simple user interfaces for novice users. We have tried different options: gesture recognition, play-dough for constructing structures, augmented reality, and keyboard interactions, but so far only with expert users.

What are you working on next?

The project is on hold until we get new funding. One proposal under review is to use Roombots as building blocks for designers. Another dream would be to push further the assistive scenario above for a person in a wheelchair, and to equip a test apartment to evaluate pros and cons of different possible solutions. And we would love to collaborate with anyone interested, including artists, designers, and other roboticists. There are still many things to explore!

“Roombots extended: Challenges in the next generation of self-reconfigurable modular robots and their application in adaptive and assistive furniture,” by S. Hauser, M. Mutlu, P.-A. Léziart, H. Khodr, A. Bernardino, and Auke Ijspeert from EPFL and IST Lisbon, is published in Robotics and Autonomous Systems.

[ EPFL ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.