Machine learning, which now powers speech recognition, computer vision, and more, could prove even more powerful when run on quantum computers. Now a new study finds that the strange quantum phenomenon known as entanglement, which Einstein dubbed “spooky action at a distance,” might help remove a major potential roadblock to implementing quantum machine learning.

Quantum computers can theoretically prove more powerful than any conventional computer on a number of tasks, such as finding a number’s prime factors, a problem whose difficulty underpins much of the modern encryption currently protecting banking and other secure data. The more components known as qubits that are linked together in a quantum computer through entanglement (wherein the states of multiple particles remain correlated regardless of how far apart they are), the greater its computational power can grow, in an exponential fashion.
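To give a rough sense of that exponential growth, the short Python sketch below counts how many complex amplitudes a classical computer would need to store to describe the state of a given number of qubits. The function names and the 16-bytes-per-amplitude figure (two 64-bit floats) are illustrative assumptions, not details from the study.

```python
def statevector_amplitudes(n_qubits: int) -> int:
    """Number of complex amplitudes describing a general n-qubit pure state."""
    return 2 ** n_qubits


def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Classical memory needed to store that state.

    Assumes each amplitude is kept as two 64-bit floats (16 bytes).
    """
    return statevector_amplitudes(n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 20, 30, 40, 50):
        gib = statevector_bytes(n) / 2 ** 30
        print(f"{n:2d} qubits: {statevector_amplitudes(n):,} amplitudes, {gib:,.6f} GiB")
```

Each added qubit doubles the count, which is why simulating even a few dozen entangled qubits strains conventional machines.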

Scientists are still researching the specific problems for which quantum computing might have an advantage over classical computing. Recently, they have begun exploring whether quantum computing might help boost machine learning, the field of AI that investigates algorithms that improve automatically through experience.

One potential application of quantum machine learning is simulating quantum systems, for instance, chemical reactions that might yield insights leading to next-generation batteries or new drugs. This might entail creating models of the molecules of interest, having them interact, and using experimental data on how the actual compounds interact as training data to help improve the models.

A potential major stumbling block that quantum machine learning may face is the so-called “no free lunch” theorem. The theorem suggests any machine learning algorithm is as good as, but no better than, any other when their performance is averaged over many problems and sets of training data.
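The classical version of this idea can be checked by brute force on a toy problem. The Python sketch below is an illustration only, not the study's method: it enumerates every possible yes/no labeling of eight inputs, shows each learner the labels of the first five, and scores its guess on the remaining three. The two learners and all names here are hypothetical choices made for the demonstration.

```python
from itertools import product


def majority_learner(train_labels):
    """Predict whichever label was more common in training (ties go to 0)."""
    ones = sum(train_labels)
    return 1 if 2 * ones > len(train_labels) else 0


def always_one_learner(train_labels):
    """Ignore the training data entirely and always predict 1."""
    return 1


def average_off_training_accuracy(learner, n_points=8, n_train=5):
    """Average accuracy on unseen points over ALL possible target functions."""
    correct = 0
    total = 0
    # Each tuple is one possible target function: a label for every input.
    for target in product((0, 1), repeat=n_points):
        train_labels = target[:n_train]
        test_labels = target[n_train:]
        prediction = learner(train_labels)
        correct += sum(1 for y in test_labels if y == prediction)
        total += len(test_labels)
    return correct / total


if __name__ == "__main__":
    print(average_off_training_accuracy(majority_learner))
    print(average_off_training_accuracy(always_one_learner))
```

Averaged over every possible target, both learners score exactly one half on the unseen inputs, since for any training labels the unseen labels are equally likely to be anything. A learner that looks sensible gains no edge over one that ignores the data unless the set of problems is restricted.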

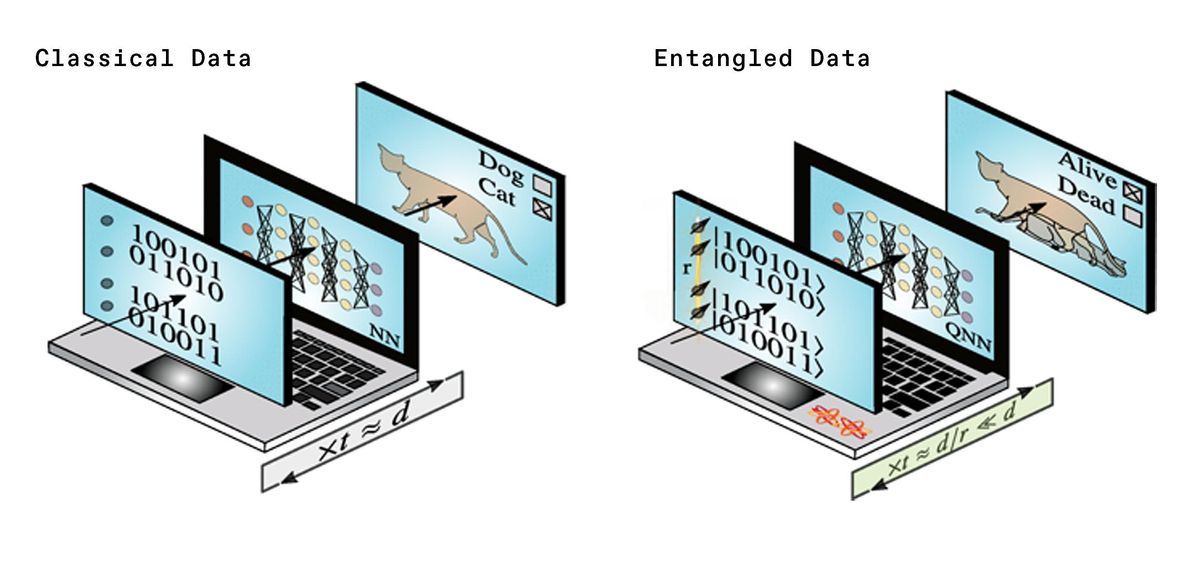

A consequence of the no-free-lunch theorem is that a machine-learning algorithm’s average performance depends on how much data it has, suggesting the amount of data ultimately limits machine learning’s performance. This raised the possibility that in order to model a quantum system, for example, the amount of training data that a quantum computer might need would grow exponentially as the modeled system became larger. This could potentially eliminate the edge that quantum computing could have over classical computing.

Now scientists have discovered a way to eliminate this exponential overhead using a newfound quantum version of the no-free-lunch theorem. Their findings, verified using quantum-hardware startup Rigetti’s Aspen-4 quantum computer, suggest that adding more entanglement to quantum machine learning can lead to an exponential reduction in the training data required.

Specifically, the researchers suggested entangling additional qubits with the quantum system that a quantum computer aims to model. This extra set of “ancilla” qubits can help the quantum machine-learning circuit interact with many quantum states in the training data at the same time. As such, a quantum machine learning circuit may experience a speedup even with relatively few ancillas.
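One textbook way to see why an entangled ancilla can stand in for many separate training states is the Choi-Jamiołkowski correspondence: if a system is maximally entangled with an ancilla of the same size, applying an unknown operation to the system alone leaves a single output state whose amplitudes spell out the whole operation. The pure-Python sketch below illustrates this for one qubit, using a Hadamard gate as a stand-in for the unknown process. It is not the procedure from the study, and every function name is a hypothetical choice for the example.

```python
import math


def kron(a, b):
    """Kronecker product of two square matrices given as nested lists."""
    return [[a_ij * b_kl for a_ij in a_row for b_kl in b_row]
            for a_row in a for b_row in b]


def apply(matrix, vector):
    """Matrix-vector product."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def maximally_entangled_state(d):
    """(1/sqrt(d)) * sum_i |i>_system |i>_ancilla as a flat list of amplitudes."""
    amp = 1 / math.sqrt(d)
    return [amp if i == j else 0.0 for i in range(d) for j in range(d)]


def recover_operation(output_state, d):
    """Read the operation back out of the single entangled output state."""
    scale = math.sqrt(d)
    return [[scale * output_state[k * d + i] for i in range(d)] for k in range(d)]


s = 1 / math.sqrt(2)
unknown_process = [[s, s], [s, -s]]      # Hadamard gate, standing in for the unknown
identity = [[1.0, 0.0], [0.0, 1.0]]      # nothing is done to the ancilla

# Act on the system half only; the ancilla is left untouched.
output = apply(kron(unknown_process, identity), maximally_entangled_state(2))
recovered = recover_operation(output, 2)

if __name__ == "__main__":
    for row in recovered:
        print([round(x, 4) for x in row])
```

Without the ancilla, learning the same two-by-two matrix would take outputs for more than one input state. In this idealized picture, the entangled input probes every basis state at once.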

“Trading entanglement for training states could give huge advantages for training certain types of quantum systems,” says study coauthor Andrew Sornborger, a physicist at Los Alamos National Laboratory, in New Mexico.

Sornborger cautions that it can prove extremely difficult to entangle ancilla qubits with the quantum systems used in the experiments needed to supply training data. Still, “as long as it is not exponentially difficult in some sense to create entanglement, then we stand to benefit,” he says.

One potential futuristic application of this work is what the researchers call “black box uploading.” “Wouldn’t it be cool to be able to learn a model of a quantum experiment, then study it on a quantum computer without having to do repeated experiments?” Sornborger says.

For example, if the atom smashers at CERN, the largest particle physics lab in the world, entangled the protons being collided together with the detectors used to analyze them and with an extraordinarily powerful quantum computer (on the order of a billion billion qubits), scientists could have a way to directly analyze the Standard Model, currently the best explanation for how all the known elementary particles behave.

“This is the sort of possibility that we’ve begun to contemplate in the quantum machine-learning context,” Sornborger says.

The scientists detailed their findings 18 February in the journal Physical Review Letters.

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.