As extreme weather events go, droughts—like the one that singed Russia’s wheat crop two summers ago and the one that engulfed the United States in July—are about as tricky as it gets. Unlike hurricanes and tornadoes, drought does not have an obvious start or end. In fact, there isn’t even a clear definition for it, making it hard to measure and monitor, let alone predict. But with better observations of the earth, oceans, and atmosphere and improvements in computer modeling, scientists think they’ll be able to foresee the chances of drought up to a decade in advance, and better predict droughts that arise suddenly or last longer than a few months.

Today, for most parts of the world, scientists can forecast drought with reasonable confidence only about three months ahead.

“Forecasting drought is an inexact science,” says Brian Fuchs, a climatologist at the National Drought Mitigation Center (NDMC) at the University of Nebraska–Lincoln. “Drought is typically characterized by slow onset and slow recovery. To pick up signals of something that happens over weeks or months is hard for computer models.”

Drought involves a seemingly inexhaustible list of factors—from local ones such as groundwater level, stream flow, soil moisture, and vegetation, to large-scale global weather patterns such as El Niño and La Niña. All of these change over different time periods, from days to decades, and many are tied to each other. Global warming tops off the chaotic mix.

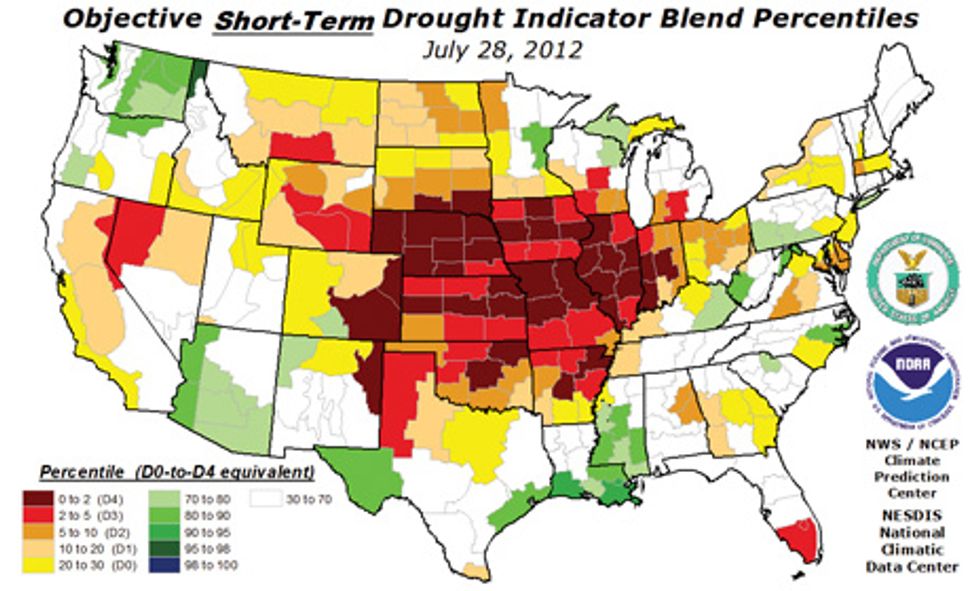

Nevertheless, scientists in the United States have produced some of the most sophisticated tools to monitor and predict drought. Resource planners and policymakers rely mainly on the U.S. Drought Monitor, an online map of dryness that has been updated weekly since it was unveiled in 2000 by the Department of Agriculture, the National Oceanic and Atmospheric Administration (NOAA), and the NDMC. The monitor combines several mathematical indices calculated by feeding computer models with temperature, precipitation, and soil moisture data. The physical data comes from NOAA’s polar-orbiting satellites and on-the-ground temperature, rain, and snow gauges. “The U.S. leads in drought monitoring,” Fuchs says. “Many other countries are trying to emulate the U.S. Drought Monitor.”
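The article does not say which indices the monitor combines. As a loose illustration of what a precipitation-based dryness index can look like, the sketch below computes a standardized anomaly: how far a current rainfall total sits from the historical average, measured in standard deviations. The function name and the rainfall figures are hypothetical and chosen only for illustration; this is not the Drought Monitor's actual method.

```python
from statistics import mean, stdev


def standardized_anomaly(history_mm, current_mm):
    """Return how many standard deviations current_mm lies from the historical mean."""
    if len(history_mm) < 2:
        raise ValueError("need at least two historical values")
    spread = stdev(history_mm)
    if spread == 0:
        raise ValueError("historical values have no variation")
    return (current_mm - mean(history_mm)) / spread


# Hypothetical July rainfall totals (mm) for one location; not real observations.
july_history_mm = [80.0, 95.0, 70.0, 110.0, 85.0, 90.0, 100.0, 75.0]
july_now_mm = 40.0

z = standardized_anomaly(july_history_mm, july_now_mm)
print(f"standardized anomaly: {z:.2f}")  # a negative value means drier than usual
```

A single number like this captures only one ingredient. A real index would also have to account for temperature, soil moisture, and the time scale over which the deficit builds up.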

While monitoring has been done for decades, forecasting drought is still in its infancy. Today, it blends science and art, and the only place it’s done on a national scale is at NOAA’s Climate Prediction Center (CPC) in Camp Springs, Md. Twice every month, meteorologists there subjectively produce the U.S. Seasonal Drought Outlook, which predicts conditions for the next three months.

To create the Drought Outlook, scientists mix data from the Drought Monitor with soil moisture information and the CPC’s three-month forecast of temperature and rainfall. They also incorporate current climate conditions along with past heat and precipitation.

The temperature and rainfall outlook comes from NOAA’s climate model, which runs on an IBM Power6 supercomputer and takes half a day to finish one simulation run. The model, which was unveiled seven years ago, couples ocean and atmosphere models that previously had to be run separately, explains Dan Collins, a forecaster at the CPC. The newer, improved version that went into operation this year also accounts for factors such as the melting of sea and land ice and changing levels of carbon dioxide in the atmosphere.

Collins says that more-powerful computers would make it possible to include more-detailed physics and more climate system components. “You want to better simulate the way in which clouds are formed or water evaporates from soil,” he says. Greater computing prowess would also mean that each simulation step would cover a shorter time and distance—a few minutes and a few kilometers as opposed to the hours and tens of kilometers used now. This would improve the ability to capture smaller, more rapid changes in temperature and precipitation.
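To see why finer steps demand so much more computing power, consider a rough back-of-the-envelope estimate. The sketch below assumes that the work grows with the number of horizontal grid cells times the number of time steps, and that everything else (vertical levels, work per cell) stays fixed. The specific resolutions are hypothetical stand-ins for "tens of kilometers and hours" versus "a few kilometers and a few minutes," not the CPC's actual settings.

```python
def relative_cost(old_dx_km, new_dx_km, old_dt_min, new_dt_min):
    """Estimate how many times more grid-cell updates a finer run needs.

    Assumes cost scales with (horizontal cells) x (time steps) and that
    the number of vertical levels and the per-cell work stay unchanged.
    """
    horizontal_factor = (old_dx_km / new_dx_km) ** 2  # two horizontal dimensions
    time_factor = old_dt_min / new_dt_min             # more steps per simulated day
    return horizontal_factor * time_factor


# Hypothetical values for illustration only.
factor = relative_cost(old_dx_km=50.0, new_dx_km=5.0, old_dt_min=60.0, new_dt_min=5.0)
print(f"roughly {factor:,.0f}x more cell updates")
```

Under these assumptions, shrinking the grid spacing tenfold and the time step twelvefold multiplies the work by about 1,200, which hints at why such improvements wait on faster machines.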

Other advances expected in the coming years include more extensive ground observation networks and NOAA’s next-generation polar satellite, which is scheduled for launch in 2016 and will carry advanced visual and infrared imagers that produce data at higher temporal and spatial resolution. These will yield better temperature, precipitation, and soil moisture data, which will improve the accuracy of drought prediction, says Fuchs. Already, the Drought Monitor maps have become more refined over the past 12 years because of resolution improvements in satellite instruments, he says. What’s more, NASA is developing satellite-based instruments that are better at measuring soil moisture, he adds. The Drought Monitor currently relies on soil moisture values that computer models estimate indirectly from rainfall and temperature observations.

Collins anticipates that longer-term drought prediction could be possible five years from now. That’s when NOAA scientists expect to upgrade the climate model next, extending it to include decade-long climate fluctuations. They hope to do this by simulating known long-term ocean fluctuations such as the Atlantic multidecadal oscillation. Fuchs says, “I anticipate continuing to see drought monitoring and prediction evolve, and technology will be a driving factor.”

Prachi Patel is a freelance journalist based in Pittsburgh. She writes about energy, biotechnology, materials science, nanotechnology, and computing.