The global semiconductor shortage has made life tough for anyone using microcontrollers, with lead times now sometimes quoted in years. But there has been one bright spot: the US $4 Pi Pico, a microcontroller based on the new RP2040 chip. Not only does the RP2040 have lots of compute power, it hasn’t suffered the kind of shortages afflicting other chips. So when I decided to build a cheap DIY scintillating gamma spectrometer, it was the natural choice—although I didn’t realize I’d find myself navigating around teething problems of the sort that often affect a first-generation integrated circuit.

My interest in gamma-ray spectroscopy came from my physics studies. I find it fascinating that you can get so much information out of a single device. A gamma-ray spectrometer can be used like a Geiger counter with much better sensitivity, but unlike a Geiger counter, it lets you identify exactly which gamma-emitting radioisotopes are present, in amounts down to a picogram (or less). I started thinking about creating my own gamma-ray spectrometer when I saw the high price of even the cheapest commercially made devices. I wanted to see if I could make it easy and affordable to build a spectrometer.

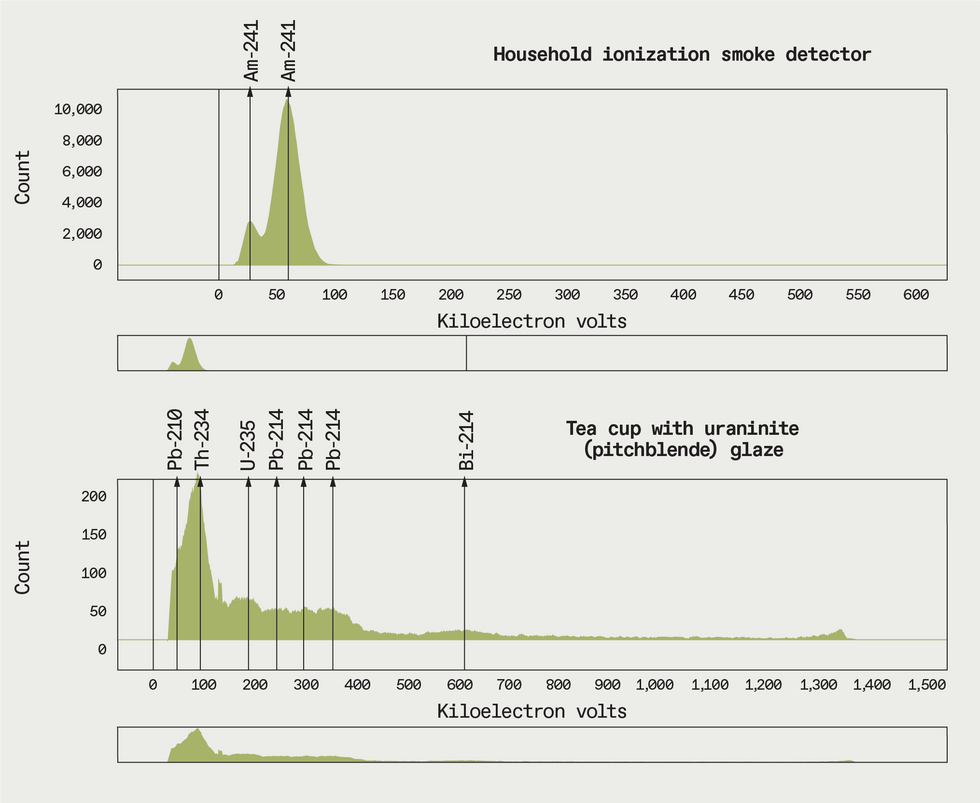

The first step was to choose the scintillator at the heart of the spectrometer. In a nutshell, a scintillator measures both the energy and intensity of a flux of gamma rays, thanks to a transparent crystal. A gamma ray produces a free electron in the crystal, and this electron’s energy is proportional to the gamma ray’s. As the electron moves through the crystal, it excites atoms. The atoms, in turn, emit visible photons, with the total number of photons emitted proportional to the energy of the exciting electron. Thus, by counting the number of photons, you can gauge the energy of the original gamma ray. Counting how many gamma rays you detect over time gives you the radiation’s intensity, and looking at the energies of the gamma rays gives you a spectral fingerprint of a radioisotope.
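To make the proportionality concrete, here is a minimal Python sketch, not the spectrometer’s actual firmware. It assumes each detected gamma ray has already been reduced to a single pulse-height number, and that a hypothetical constant, `kev_per_count`, converts pulse height to energy with no offset. The function name and the bin width are likewise illustrative.

```python
from collections import Counter


def summarize_events(pulse_heights, duration_s, kev_per_count, bin_width_kev=10.0):
    """Return (count rate in counts per second, energy spectrum).

    The spectrum maps the lower edge of each energy bin (in keV)
    to the number of events that fell into that bin.
    """
    # Intensity: how many gamma rays were detected per unit time.
    rate = len(pulse_heights) / duration_s

    # Spectrum: how those gamma rays are distributed in energy.
    spectrum = Counter()
    for height in pulse_heights:
        energy_kev = height * kev_per_count  # assumed strictly proportional
        bin_start = int(energy_kev // bin_width_kev) * bin_width_kev
        spectrum[bin_start] += 1
    return rate, spectrum


# Five made-up events recorded over 10 seconds.
rate, spectrum = summarize_events([100, 102, 331, 331, 330],
                                  duration_s=10, kev_per_count=2.0)
```

With a real detector, the conversion factor would come from calibrating against a source whose gamma-ray energy is known.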

The photon signal must be amplified to be detectable. Historically, this was done using a photomultiplier vacuum tube, but silicon photomultipliers (SiPMs) have become more common, and for my project they have a number of advantages, particularly in eliminating the need for a high-voltage power supply.

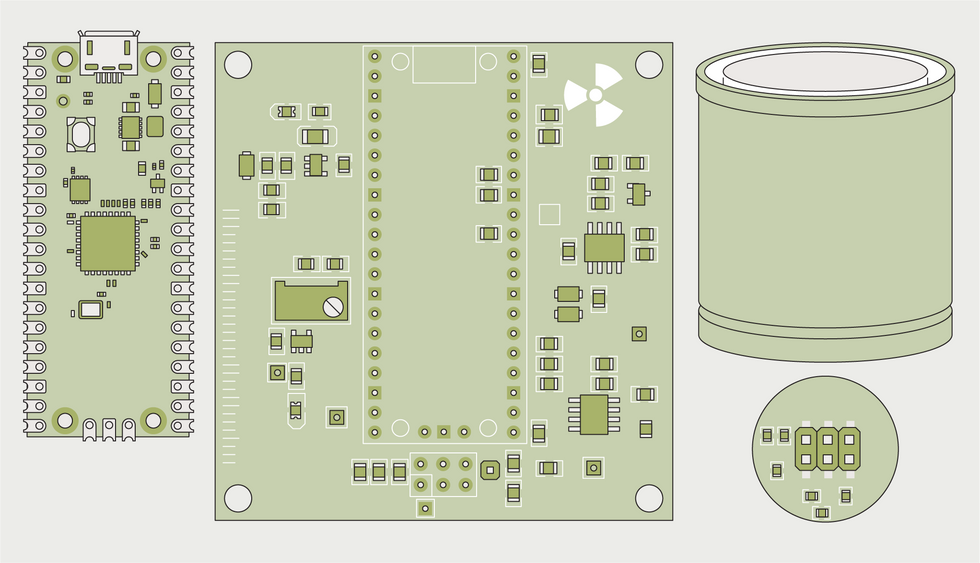

You can buy various used scintillator crystals on eBay fairly cheaply: I purchased a small sodium iodide crystal, 18 millimeters in diameter and 30 mm long, for about US $40. It came with a photomultiplier tube, which I removed and replaced with my SiPM, wrapping the assembly in black tape to prevent external light from leaking in and triggering the sensor.

The scintillator and SiPM plug into a carrier board, which has a DC/DC boost converter to convert 5 volts into the 29.3 V the SiPM needs. The carrier board also hosts the Pico microcontroller along with some other supporting circuitry, including an amplifier that increases the output voltage of the SiPM to a level that the Pico’s built-in analog-to-digital converter (ADC) can detect.

The ADC in the Pico’s RP2040 chip is a critical component, and on paper it looks very good. It has 12-bit resolution and can take measurements between 0 and 3.3 V at a rate of 500 kilosamples per second. But there’s a flaw lurking in the RP2040’s ADC.
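Those figures translate into a voltage step and a sampling interval with a little arithmetic. The sketch below assumes an ideal converter that divides the 3.3-V range into 2^12 equal steps; all of the names are illustrative.

```python
ADC_BITS = 12
V_REF = 3.3                  # full-scale input voltage, in volts
SAMPLE_RATE_HZ = 500_000     # 500 kilosamples per second

ADC_LEVELS = 2 ** ADC_BITS                 # 4,096 distinct output codes
LSB_VOLTS = V_REF / ADC_LEVELS             # size of one ideal step, about 0.8 mV
SAMPLE_PERIOD_US = 1e6 / SAMPLE_RATE_HZ    # time between samples, 2 microseconds


def code_to_volts(code):
    """Convert a raw ADC code to volts, assuming a perfectly linear ADC."""
    if not 0 <= code < ADC_LEVELS:
        raise ValueError("code outside the 12-bit range")
    return code * LSB_VOLTS
```

A real converter deviates from this ideal mapping, which is exactly where the trouble starts.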

I didn’t realize it existed until I started taking test spectra, writing software for the Pico that breaks up the ADC’s readings into 4,096 channels and counts the number of events in each channel over time. I noticed one channel kept reporting very high count values, creating a thin spike in my spectra. Puzzled, I took a four-hour background radiation measurement and discovered there were four problematic channels where the signal spiked unrealistically.
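The binning step can be sketched in a few lines of Python. This is an illustration rather than the code running on the Pico; it assumes each event arrives as one raw 12-bit ADC code, and `accumulate` is a hypothetical name.

```python
NUM_CHANNELS = 4096  # one channel per possible 12-bit ADC code


def accumulate(histogram, adc_codes):
    """Add one count to the channel matching each ADC code.

    Codes outside the valid range are ignored rather than raising an error.
    """
    for code in adc_codes:
        if 0 <= code < NUM_CHANNELS:
            histogram[code] += 1
    return histogram


histogram = [0] * NUM_CHANNELS
accumulate(histogram, [10, 10, 4095, 5000])  # the last code is out of range
```

Left running for hours, a histogram like this becomes the spectrum, and any channel that collects counts it should not have stands out as a spike.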

I started searching for what could be causing this and discovered I was not the first to run into problems with the ADC. A great website by Mark Omo, an EE who took it upon himself to investigate the problem, provides a detailed analysis, but in summary the issue is this: Ideally, an ADC chops the voltage range it can measure into a sequence of identically sized steps, producing a linear relationship between the input voltage and the numeric measurements it outputs. Of course, no ADC has a perfectly linear response across its measurement range, but the RP2040 has four spots where input voltages produce a wildly nonlinear response. In effect, an unusually wide range of voltages maps to a single output value at each of those spots, so the matching channel collects far more counts than its neighbors. This was the source of the mystery spikes in my spectra.
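One way to spot such codes in a measured spectrum, sketched here under assumptions: a genuine gamma peak from a sodium iodide detector spreads over many neighboring channels, so a single channel that towers over the median of its neighbors is suspect. The function name, window size, and thresholds below are illustrative choices, not values from Omo’s analysis or from the spectrometer’s software.

```python
from statistics import median


def find_spike_channels(histogram, half_window=8, factor=3.0, min_counts=20):
    """Return channels whose counts far exceed the median of nearby channels."""
    spikes = []
    n = len(histogram)
    for ch, counts in enumerate(histogram):
        lo = max(0, ch - half_window)
        hi = min(n, ch + half_window + 1)
        # Neighbors on both sides, excluding the channel under test.
        neighbours = histogram[lo:ch] + histogram[ch + 1:hi]
        if not neighbours:
            continue
        baseline = max(median(neighbours), 1)  # avoid a zero baseline
        if counts >= min_counts and counts > factor * baseline:
            spikes.append(ch)
    return spikes


# Synthetic flat background with four artificially inflated channels.
background = [100] * 4096
for ch in (512, 1536, 2560, 3584):
    background[ch] = 900
suspects = find_spike_channels(background)
```

Using the median rather than the mean keeps one inflated neighbor from dragging the baseline upward.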

Until the RP2040 is revised to fix this glitch, there’s not much you can do about it directly. Fortunately, with 4,096 channels, I could afford to employ the simplest software fix—just throwing away the measurements in the affected channels—without affecting the quality of the overall spectrum significantly.
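Discarding the affected channels might look like the following sketch. The channel list is a parameter; the four codes used in the example (512, 1536, 2560, and 3584) are the ones commonly reported for the RP2040, but treat them as an assumption and check them against your own background spectrum. Marking a dropped channel as `None` rather than zero is a design choice made here so that a plot can skip it instead of drawing a false dip.

```python
# Assumed list of suspect ADC codes; verify against a measured spectrum.
RP2040_SUSPECT_CODES = (512, 1536, 2560, 3584)


def drop_channels(histogram, bad_channels):
    """Return a copy of the histogram with the bad channels marked as None."""
    bad = set(bad_channels)
    return [None if ch in bad else counts
            for ch, counts in enumerate(histogram)]


raw = list(range(4096))  # placeholder data: channel n holds n counts
cleaned = drop_channels(raw, RP2040_SUSPECT_CODES)
```

Losing four channels out of 4,096 removes less than 0.1 percent of the spectrum.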

Controlling and getting data from the spectrometer can be done via a USB interface (which also provides the power needed to operate it). I wrote software that can accept serial commands to, for example, put the spectrometer into Geiger counter or energy-measurement modes, or upload a histogram of all the measurements taken since the last power-up. You can write your own code to communicate with the spectrometer, or use a Web app I created that also plots spectra. (A link to the Web app, along with full build details and PCB files, is available on GitHub.)

For the future, I hope to make the spectrometer hardware capable of using a wider range of SiPMs and scintillators, so that people can use whatever detectors they can find. I hope you join me in this fascinating hobby!

This article appears in the July 2022 print issue as “DIY Gamma-Ray Spectroscopy.”

Matthias Rosezky has a bachelor's degree in technical physics and is currently studying for a master's degree in physical energy and measurement engineering at the Vienna University of Technology.