In-hand manipulation is a skill that, as far as I’m aware, humans in general don’t actively learn. We just sort of figure it out by doing other, more specific tasks with our fingers and hands. This makes it particularly tricky to teach robots to solve in-hand manipulation tasks because the way we do it is through experimentation and trial and error. Robots can learn through trial and error as well, but since it usually ends up being mostly error, it takes a very, very long time.

Last June, we wrote about OpenAI’s approach to teaching a five-fingered robot hand to manipulate a cube. The method that OpenAI used leveraged the same kind of experimentation and trial and error, but in simulation rather than on robot hardware. For complex tasks that take a lot of finesse, simulation generally translates poorly into real-world skills, but OpenAI made their system super robust by introducing a whole bunch of randomness into the simulation during the training process. That way, even if the simulation didn’t perfectly match reality (which it didn’t), the system could still handle the kinds of variations that it experienced on the real-world hardware.

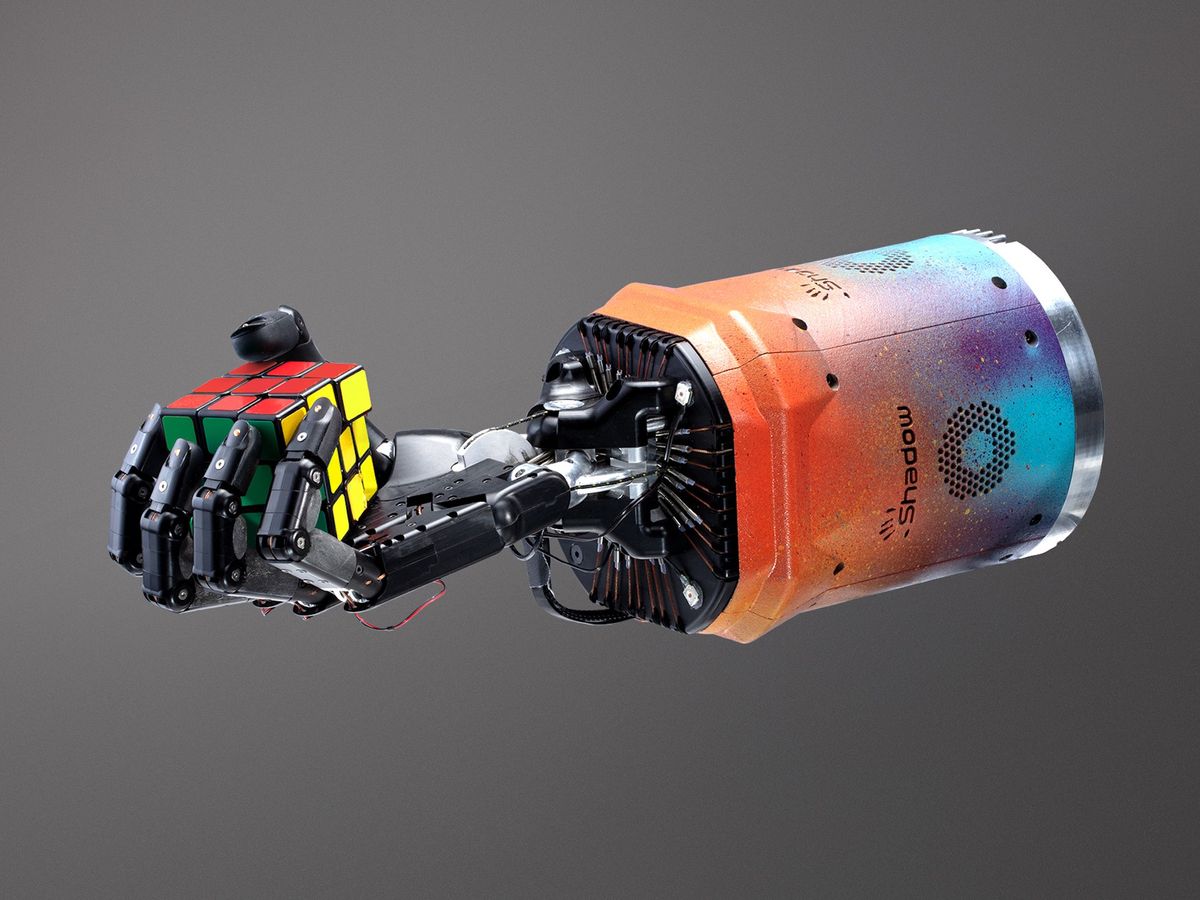

In a preprint paper published online today, OpenAI has managed to teach its robot hand to solve a much more difficult version of in-hand cube manipulation: single-handed solving of a 3x3 Rubik’s cube. The new work is also based on the idea of solving a problem using advanced simulations and then transferring the solution to a real-world system, or what researchers call “sim2real.” In the paper, OpenAI says the new approach “vastly improved sim2real transfer.”

The initial step was to break down the robot manipulation of the Rubik’s cube into two different tasks: (1) rotating a single face of the cube 90 degrees in either direction, and (2) flipping the cube to bring a different face to the top. Since rotating the top face is much simpler for the robot than rotating other faces, the most reliable strategy is to just do a 90-degree flip to get the face you want to rotate on top. The actual process of solving the cube is computationally straightforward, although the solution is optimized for the motions that the robot can perform rather than for the fewest steps.
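To make that decomposition a little more concrete, here is a minimal sketch of how a list of face turns could be translated into the two primitives described above (flip, then rotate the top face). This is not OpenAI's planner: the names `FLIPS`, `apply_flip`, and `plan_primitives`, the choice of two forward flips to reach the bottom face, and the signed quarter-turn convention are all assumptions made for illustration.

```python
# Positions (U, D, F, B, L, R) are fixed relative to the hand;
# the cube's faces are labels that move between positions when it is flipped.
# Each flip maps new position -> old position for the four faces that move.
FLIPS = {
    "F": {"U": "F", "B": "U", "D": "B", "F": "D"},  # front face comes to top
    "B": {"U": "B", "F": "U", "D": "F", "B": "D"},  # back face comes to top
    "L": {"U": "L", "R": "U", "D": "R", "L": "D"},  # left face comes to top
    "R": {"U": "R", "L": "U", "D": "L", "R": "D"},  # right face comes to top
}


def apply_flip(orientation, flip):
    """Return the new position -> face mapping after one 90-degree flip."""
    new = dict(orientation)
    for new_pos, old_pos in FLIPS[flip].items():
        new[new_pos] = orientation[old_pos]
    return new


def plan_primitives(moves):
    """Turn (face, quarter_turns) moves into flip / rotate_top primitives."""
    orientation = {p: p for p in "UDFBLR"}  # start with each face in its own slot
    plan = []
    for face, quarter_turns in moves:
        # Find where the requested face currently sits.
        pos = next(p for p, f in orientation.items() if f == face)
        if pos == "U":
            flips = []            # already on top
        elif pos == "D":
            flips = ["F", "F"]    # bottom face needs two flips (assumed choice)
        else:
            flips = [pos]         # a side face needs one flip
        for flip in flips:
            orientation = apply_flip(orientation, flip)
            plan.append(("flip", flip))
        plan.append(("rotate_top", quarter_turns))
    return plan


if __name__ == "__main__":
    print(plan_primitives([("F", 1), ("U", 1), ("D", -1)]))
```

A plan built this way is usually longer than the shortest possible solve, which matches the trade-off in the article: extra flips are accepted so that every rotation happens on the top face.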

The physical setup that’s doing the real-world cube solving is a Shadow Dexterous E Series Hand with a PhaseSpace motion capture system, plus RGB cameras for visual pose estimation. The cube that’s being manipulated is also pretty fancy: It’s stuffed with sensors that report the orientation of each face with an accuracy of five degrees, which is necessary because it’s otherwise very difficult to know the state of a Rubik’s cube when some of its faces are occluded.

While the video makes it easy to focus on the physical robot, the magic is mostly happening in simulation, and transferring things learned in simulation to the real world. Again, the key to this is domain randomization—jittering parts of the simulation around so that your system has to adapt to different situations similar to those that might be encountered in the real-world. For example, maybe you slightly alter the weight of the cube, or change the friction of the fingertips a little bit, or turn down the lighting. If your system can handle these simulated variations, it’ll be more robust to real-world operation.
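As a rough illustration of what hand-designed domain randomization looks like, the sketch below draws a fresh set of simulation parameters for each training episode. The parameter names, nominal values, and ranges are hypothetical (the article does not give real ones), and `sample_environment` is an illustrative helper, not part of any OpenAI code.

```python
import random

# Hypothetical nominal simulation values, chosen only for illustration.
NOMINAL = {
    "cube_mass_kg": 0.1,
    "fingertip_friction": 1.0,
    "light_intensity": 1.0,
}

# Hypothetical hand-picked ranges, expressed as multipliers on the nominal value.
SCALE_RANGES = {
    "cube_mass_kg": (0.8, 1.2),        # slightly lighter or heavier cube
    "fingertip_friction": (0.7, 1.3),  # slipperier or grippier fingertips
    "light_intensity": (0.5, 1.0),     # turn the lighting down
}


def sample_environment(rng):
    """Draw one randomized set of simulation parameters."""
    return {
        name: NOMINAL[name] * rng.uniform(*SCALE_RANGES[name])
        for name in NOMINAL
    }


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so runs are repeatable
    for episode in range(3):
        print(episode, sample_environment(rng))
```

The policy never sees the same simulated world twice, so it can't rely on any one exact cube mass or friction value.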

When we spoke last year to Jonas Schneider (one of the authors of the cube manipulation work) and asked him where he thought that system was the weakest, he said that the biggest problem at that point was that the randomizations were both task-specific and hand designed. It’s probably not surprising, then, that one of the big contributions of the Rubik’s cube work is “a novel method for automatically generating a distribution over randomized environments for training reinforcement learning policies and vision state estimators,” which the researchers call automatic domain randomization (ADR). Here’s why ADR is important, according to the paper:

“Our main hypothesis that motivates ADR is that training on a maximally diverse distribution over environments leads to transfer via emergent meta-learning. More concretely, if the model has some form of memory, it can learn to adjust its behavior during deployment to improve performance on the current environment over time, i.e. by implementing a learning algorithm internally. We hypothesize that this happens if the training distribution is so large that the model cannot memorize a special-purpose solution per environment due to its finite capacity. ADR is a first step in this direction of unbounded environmental complexity: it automates and gradually expands the randomization ranges that parameterize a distribution over environments.”

Special-purpose solutions per environment are bad, because they work for that environment, but not for other environments. You can think of each little tweak to a simulation as creating a new environment, and the idea behind ADR is to automate these tweaks to create so many new environments that the system is forced to instead come up with general solutions that can work for many different environments all at once. This reflects the robustness required for real-world operation, where no two environments are ever exactly alike. It turns out that ADR is both better and more efficient than the previous manual tuning, say the researchers:

“ADR clearly leads to improved transfer with much less need for hand-engineered randomizations. We significantly outperformed our previous best results, which were the result of multiple months of iterative manual tuning.”
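The core idea of automatically and gradually expanding randomization ranges can be sketched in a few lines. This is a heavily simplified illustration, not the paper's algorithm: the `ParamRange` class, the `update_range` helper, the rule of widening a range when the policy is doing well and narrowing it when it struggles, and the specific thresholds and step size are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ParamRange:
    low: float   # lower bound of the randomization range
    high: float  # upper bound of the randomization range
    step: float  # how much to widen or narrow per update


def update_range(r, success_rate, expand_above=0.8, shrink_below=0.4):
    """Widen the range when the policy copes well, narrow it when it does not.

    The thresholds are illustrative assumptions, not values from the paper.
    """
    if success_rate >= expand_above:
        return ParamRange(r.low - r.step, r.high + r.step, r.step)
    if success_rate <= shrink_below and r.high - r.low >= 2 * r.step:
        return ParamRange(r.low + r.step, r.high - r.step, r.step)
    return r  # performance is in between: leave the range alone


if __name__ == "__main__":
    # Start with no randomization at all: a single nominal friction value.
    friction = ParamRange(low=1.0, high=1.0, step=0.25)
    for rate in [0.9, 0.85, 0.3, 0.6]:  # made-up success rates
        friction = update_range(friction, rate)
        print(rate, friction)
```

Starting from a single nominal environment and growing outward means nobody has to guess the right ranges up front, which is the part that previously took months of manual tuning.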

In terms of results, the researchers were mostly concerned with how many flips and rotations the system could do in a row without failing, rather than how many complete solves it was capable of. It sounds like a complete solve was a bit of an outlier—the starting configuration of the cube could be solved by the system in 43 successful moves, while the average successful run of the best trained policy (continuously trained over multiple months) was about 27 moves. Sixty percent of the time, the system could get halfway to a complete solve, and it made it the entire way 20 percent of the time.

The researchers point out that the method they’ve developed here is general purpose, and you can train a real-world robot to do pretty much any task that you can adequately simulate. You don’t need any real-world training at all, as long as your simulations are diverse enough, which is where the automatic domain randomization comes in. The long-term goal is to reduce the task specialization that’s inherent to most robots, which will help them be more useful and adaptable in real-world applications.

Lastly, just for reference, here’s what (I think) is the current 3x3 cube world record, set just a few days ago by Max Park:

Wow.

It’s interesting that it appears to be faster and/or more efficient for a human to use table contact to augment their own dexterity. We’ve seen other robots make use of environmental contact for manipulation; it would be cool if OpenAI threw a surface into the simulation to see if their system could make use of it.

[ OpenAI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.