Emotions can be detected remotely using a device that emits wireless signals to help it measure heartbeat and breathing, say researchers at MIT's Computer Science and Artificial Intelligence Laboratory.

The new device, named “EQ-Radio,” is 87 percent accurate at detecting whether a person is excited, happy, angry or sad—all without on-body sensors or facial-recognition software.

“We picture EQ-Radio being used in entertainment, consumer behavior, and healthcare,” says the study’s lead researcher, Mingmin Zhao, a graduate student. “For example, smart homes could use information about your emotions to adjust the music or even suggest that you get some fresh air if you’ve been sad for a few days.” Zhao adds that remote emotion monitoring could eventually be used to diagnose or track conditions like depression and anxiety.

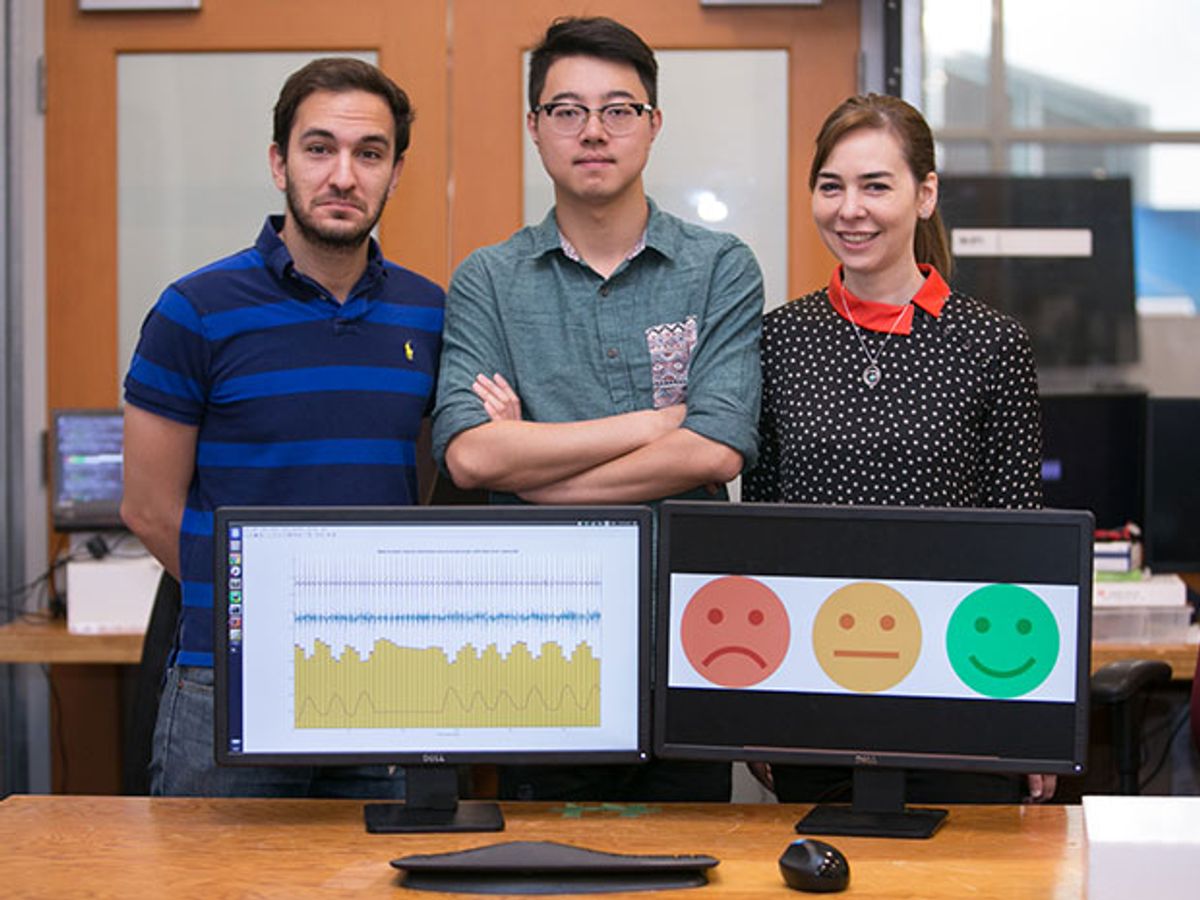

Zhao and study co-authors Dina Katabi and Fadel Adib will present their work in October at the Association for Computing Machinery's International Conference on Mobile Computing and Networking (MobiCom) in New York.

Existing technologies for detecting emotion require on-body sensors or audiovisual cues, but there are downsides to both these methods. For example, on-body sensors such as chest bands and ECG monitors are inconvenient to wear and become less accurate if they shift position over time. Systems that rely on audiovisual cues require people to face cameras, and can miss subtle facial expressions.

Instead, EQ-Radio emits radio signals that reflect off a person's body and back to the device. Its algorithms can detect individual heartbeats from these radio echoes with an accuracy comparable to on-body ECG monitors.

In experiments, the researchers had EQ-Radio scan 30 volunteers, each seated about one meter away from the gadget. The volunteers listened to music, looked at photos, or watched videos to help them recall memories that made them feel happy, excited, sad, angry, or neutral. In all, the system collected more than 130,000 heartbeats.

The device examined small differences in heartbeat length that indicated these emotions. It accurately pegged the person’s state of mind 72.3 percent of the time, even if it had not previously analyzed him or her.
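To make "small differences in heartbeat length" concrete, here is a minimal Python sketch of how beat-to-beat timing can be turned into numbers. It is an illustration only, not EQ-Radio's actual algorithm: the function names, the sample timestamps, and the choice of features (mean interval, SDNN, and RMSSD, which are standard heart-rate-variability measures) are all assumptions, since the article does not say which features the system uses.

```python
from math import sqrt
from statistics import mean, stdev


def interbeat_intervals(beat_times):
    """Return the gaps, in seconds, between consecutive heartbeat timestamps."""
    return [later - earlier for earlier, later in zip(beat_times, beat_times[1:])]


def variability_features(beat_times):
    """Summarize beat-to-beat timing with common heart-rate-variability measures.

    Hypothetical helper for illustration; the features are generic HRV
    statistics, not ones confirmed to be used by EQ-Radio.
    """
    intervals = interbeat_intervals(beat_times)
    if len(intervals) < 2:
        raise ValueError("need at least three heartbeats")
    # Differences between successive intervals capture short-term variation.
    successive_diffs = [b - a for a, b in zip(intervals, intervals[1:])]
    return {
        "mean_interval": mean(intervals),  # average heartbeat length
        "sdnn": stdev(intervals),          # overall spread of heartbeat lengths
        "rmssd": sqrt(mean([d * d for d in successive_diffs])),
    }


# Made-up timestamps (seconds) at which five heartbeats were detected.
sample_beats = [0.0, 0.8, 1.7, 2.5, 3.5]
features = variability_features(sample_beats)
```

A classifier would then be trained on features like these, labeled with the emotion each volunteer was recalling. That training step is omitted here because the article gives no detail on it.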

“This work demonstrates that wireless technologies can capture meaningful information about human behavior that is invisible to the naked eye,” Zhao says.

While EQ-Radio achieved a level of accuracy comparable to methods using on-body sensors, Microsoft's vision-based Emotion API, which focuses on facial expressions, was right only about 40 percent of the time. Emotion API did identify a neutral emotional state about as well as EQ-Radio did, likely because noting the absence of emotion on someone’s face is usually easier than deciding whether the face is happy, excited, sad, or angry. The MIT team, which tested both systems, noted that Emotion API was more accurate with positive emotions than negative ones, likely because positive emotions typically have more visible features such as smiling, whereas negative emotions are visually closer to a neutral state.

“We view this work as the next step in trying to develop computers that can understand us better at an emotional level and potentially interact with us similarly to how we interact with other human beings,” Zhao says.

The scientists noted that the implications of this work may extend beyond emotion recognition. For instance, wireless heartbeat analysis could be used for non-invasive health monitoring and diagnosis of conditions such as arrhythmia.

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.