Based on every horror/sci-fi movie I’ve ever seen, squishing an actual fleshy human brain into a robot would make it unstoppable. And probably evil. Sooner or later, I’m sure someone is going to try it for real. Until they do, what’s almost as good is letting a robot borrow an actual fleshy human brain to help it balance and complete tasks requiring sensing and dexterity. It’s like teleoperation, except the user’s brain and body are controlling the robot directly, from inside a haptic suit.

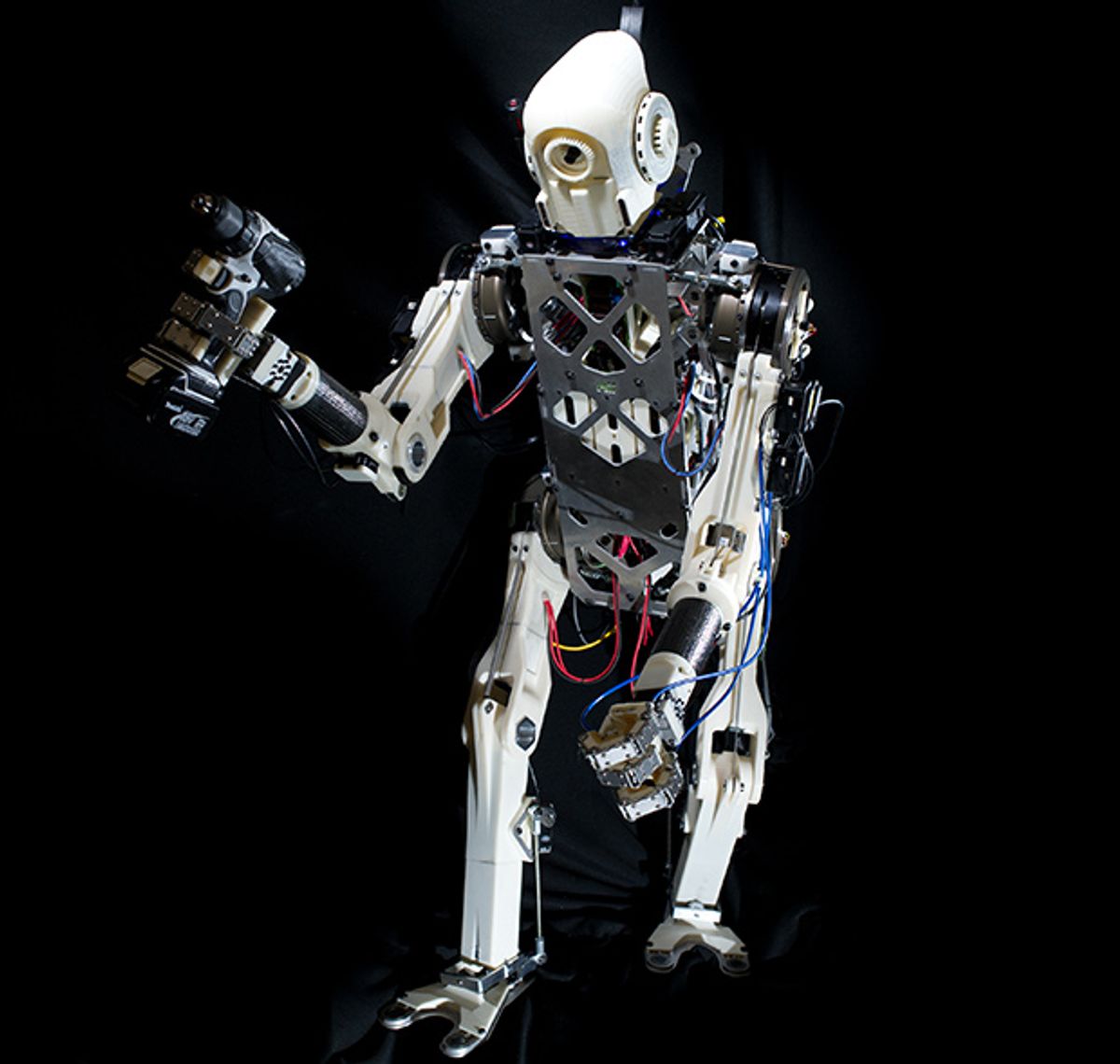

HERMES is a disaster response robot from MIT based on the Cheetah robot, developed by Professor Sangbae Kim and his group at the MIT Biomimetic Robotics Lab. Basically, imagine that Cheetah could stand on two legs and use its front legs like arms, and you’ve got HERMES: a 24-degree-of-freedom, 45-kilogram biped with the same ultra-powerful, custom high-torque-density electric actuators that allow Cheetah to sprint and jump. HERMES is about 90 percent of the size of an average human, which is big enough to let it naturally interact with human environments.

The other half of MIT’s system, and what makes it so interesting, is the control interface. It’s got cameras in the head to provide an immersive view for a human teleoperator, which is not uncommon, and the robot’s arms and grippers are controlled directly, using a master-slave Waldo-like system. We’ve seen this sort of thing before. What’s brand new, though, is the Balance Feedback Interface (BFI), which is a sort of force-feedback belt that provides physical force to the human based on ground reaction forces on the robot’s feet (the center of pressure, to be precise), and also uses the movement of the human to balance the robot. As the researchers describe in an upcoming paper to be presented at the IEEE International Conference on Intelligent Robots and Systems next month:

If [...] the force applied to the human is in the opposite direction as the motion necessary to balance the robot, we expect the human to act as a compensator. This strategy means that if the robot is about to lose balance, then the BFI attempts to pull the human out of balance as well. In this case, we hypothesize that the BFI is to trigger human’s natural response to disturbances.

In other words, if the robot starts to tip, the motors in the BFI will start to push you over in the same direction. You’ll naturally try to keep yourself upright, providing feedback that should help keep the robot upright as well. Simple. Preliminary results (derived from beating on the robot with a rubber hammer to try to topple it; good times!) show that this definitely works, although the researchers aren’t sure how well it works yet, since they just invented the hardware. What’s notable, too, is that this works without any visual input: it’s almost unconscious, like reacting to getting shoved from behind. Here’s the whole system in action (although it looks like the balancing may not be completely implemented):
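To make that feedback loop a bit more concrete, here’s a toy sketch in Python. It is emphatically not MIT’s controller: the robot is reduced to a linearized inverted pendulum, a hammer strike is modeled as an initial tipping velocity, and the human’s reflexive resistance to the belt is assumed to behave like a simple proportional-derivative response that gets mapped back onto the robot. The function names (`center_of_pressure`, `simulate`) and every gain and dimension are hypothetical. The center-of-pressure helper shows the kind of signal the article says the belt is driven by (a force-weighted average of contact positions), but for simplicity the loop uses the robot’s tilt angle as a stand-in for that signal.

```python
import math

G = 9.81    # gravity, m/s^2
L = 0.9     # hypothetical pendulum length in meters (not a HERMES spec)
DT = 0.001  # integration time step, s


def center_of_pressure(forces, positions):
    """Center of pressure along one axis: force-weighted mean of sensor positions."""
    total = sum(forces)
    if total <= 0:
        raise ValueError("foot is not in contact (no vertical load)")
    return sum(f * x for f, x in zip(forces, positions)) / total


def simulate(human_gain, human_damping, push_velocity=0.3, t_end=3.0):
    """Toy linearized inverted pendulum: theta'' = (G / L) * theta + u.

    The 'hammer strike' is an initial angular velocity. The belt pulls the
    human in the direction the robot is tipping (here, proportional to tilt,
    a stand-in for the CoP signal); the human's reflexive resistance is
    modeled as a hypothetical PD response that becomes the corrective input u.
    Returns the final tilt angle in radians.
    """
    theta, omega = 0.0, push_velocity
    for _ in range(int(t_end / DT)):
        belt_force = theta  # normalized: belt pulls toward the tipping side
        # The human leans back against the pull; that motion drives the robot.
        u = -human_gain * belt_force - human_damping * omega
        alpha = (G / L) * theta + u
        omega += alpha * DT  # semi-implicit Euler: velocity first,
        theta += omega * DT  # then position with the updated velocity
        if abs(theta) > math.pi / 2:
            break  # the robot has fallen over
    return theta


# Heel sensor at -0.5, toe sensor at +0.5 (arbitrary units), toe loaded 3x harder.
print(center_of_pressure([100.0, 300.0], [-0.5, 0.5]))  # 0.25, shifted toward the toes
print(simulate(human_gain=40.0, human_damping=8.0))      # tilt decays back toward zero
print(simulate(human_gain=0.0, human_damping=0.0))      # no human in the loop: it falls
```

With no human in the loop, the unstable pendulum falls within about a second of the simulated strike. With the (made-up) reflex gains, the effective stiffness exceeds the gravitational term G / L, so the tilt settles back to upright. That is the intuition behind letting the operator’s own balance reflexes act as the compensator.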

Relying on human reflexes like this is two to three times faster than asking a human to use vision to correct the balance of a robot, and the researchers suggest that their system may allow for “awareness of complete whole-body synchronization” that could eventually allow teleoperated robots to achieve motor performance close to that of a human. The researchers do caution that we’re still going to need IMUs and balancing controllers, though:

By no means the proposed controller intends to replace existing autonomous balancing controllers implemented on legged platforms. This study aims to push the limits of how much aid the human can provide as a reflex-based controller and teleoperator, provided the information about the robot’s state of balance. It is still unclear how far the user can synchronize his own motor actions with the robots motion. However it is known that humans are highly adaptive systems and we expect to reveal new possibilities on teleoperation by giving the human information about the robot’s interaction with the surroundings. The long term goal of this project is to merge human flexibility and adaptability capacity with an autonomous controller, taking advantage of the best of both methods.

The next step is to expand the BFI to 6 DOF, removing constraints on the hip motion of the user. Here’s what it’ll look like:

Also, the robot’s arm motions will be worked into the balance strategy, either providing inertia to help reject disturbances to the torso, or if all else fails, catching the robot as it falls, in effect turning it into a quadruped.

And that leads to the other obvious future development here, which is a hybridization of Cheetah and HERMES to create a quadrupedal running robot that can also stand up and balance and perform manipulation tasks. This, of course, would only be the most awesome thing ever:

“A Balance Feedback Human Machine Interface for Humanoid Teleoperation in Dynamic Tasks,” by Joao Ramos, Albert Wang, and Sangbae Kim from MIT, will be presented at IROS 2015 in Germany.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.