An optical sensor attached to the forehead could do the work of both an EEG monitor and an MRI, allowing portable monitoring of brain activity in patients and better control of hands-free devices for the physically disabled.

That’s the hope, anyway, of Ehsan Kamrani, a research fellow at Harvard Medical School who presented the idea at the recent 2015 IEEE Photonics Conference in Virginia.

“So far there is no single device for doing brain imaging in a portable device for continuous monitoring,” he says. Instead of a brief set of readings taken in a hospital, a stroke victim or epilepsy patient could get a set of readings over hours or days as she goes about her normal life. The readings could be transferred to her smartphone and then sent to her doctor, or could even alert her if another problem were imminent.

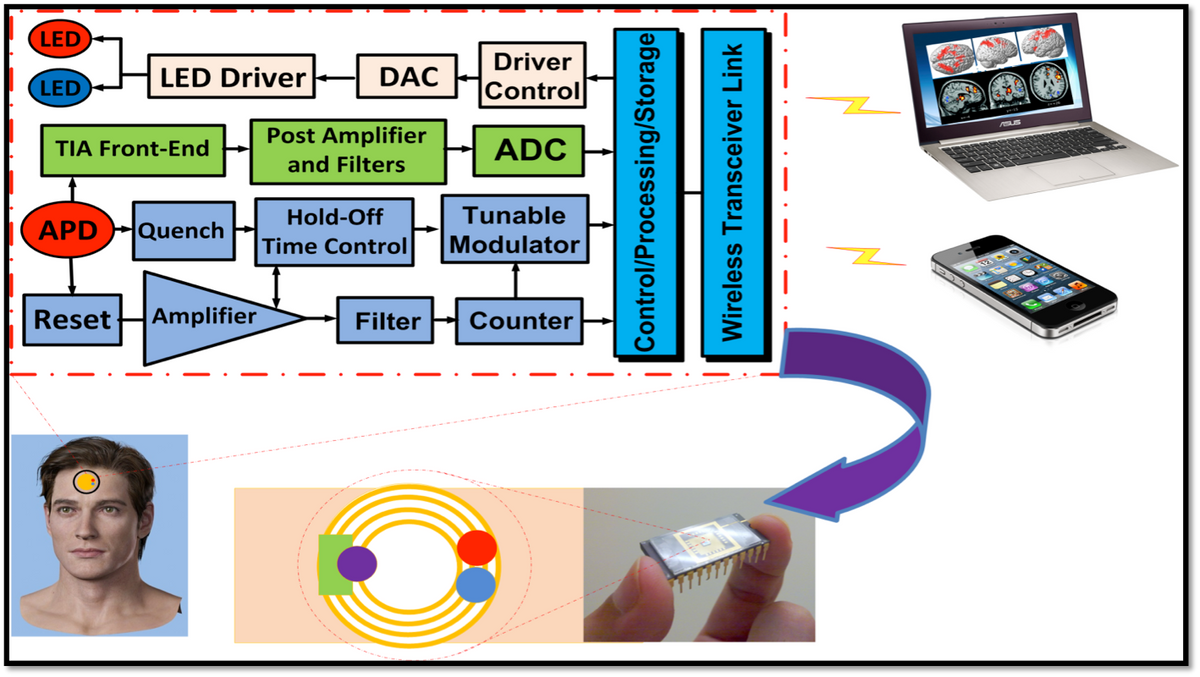

Such an optical electroencephalography (EEG) system would use an LED and a photodetector operating in the near-infrared portion of the spectrum, with a wavelength between 650 and 950 nm. Those wavelengths can penetrate several centimeters into brain tissue and easily distinguish oxygenated and deoxygenated blood, providing the same sort of information about blood flow and brain activity that functional magnetic resonance imaging (fMRI) picks up. The system, called functional near-infrared spectroscopy, could use either a single patch on the forehead or, for more complex readings, a couple of dozen patches placed around the head.
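
For a sense of how light at two near-infrared wavelengths can separate oxygenated from deoxygenated blood, here is a minimal sketch of the modified Beer-Lambert law, a calculation commonly used in near-infrared spectroscopy. Nothing in Kamrani's presentation, as described here, confirms that his system works this way. The function names, the absorption coefficients, the source-detector distance, and the path-length factor are all illustrative assumptions, not measured values.

```python
# Illustrative sketch of the modified Beer-Lambert law for two wavelengths.
# All numbers are placeholders chosen for the example, NOT measured values.

def forward_delta_od(delta_hbo, delta_hbr, eps, distance_cm, dpf):
    """Predict the optical-density change at each of two wavelengths.

    eps is ((eps_hbo_1, eps_hbr_1), (eps_hbo_2, eps_hbr_2)): hypothetical
    absorption coefficients of oxygenated (HbO) and deoxygenated (HbR)
    hemoglobin at wavelength 1 and wavelength 2.
    """
    scale = distance_cm * dpf  # effective optical path length
    (a, b), (c, d) = eps
    return ((a * delta_hbo + b * delta_hbr) * scale,
            (c * delta_hbo + d * delta_hbr) * scale)


def solve_hemoglobin_changes(delta_od_1, delta_od_2, eps, distance_cm, dpf):
    """Invert the 2x2 system to recover (delta_hbo, delta_hbr)."""
    (a, b), (c, d) = eps
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("these two wavelengths cannot separate HbO from HbR")
    scale = distance_cm * dpf
    y1 = delta_od_1 / scale
    y2 = delta_od_2 / scale
    # Cramer's rule for a*x + b*y = y1, c*x + d*y = y2
    delta_hbo = (y1 * d - b * y2) / det
    delta_hbr = (a * y2 - c * y1) / det
    return delta_hbo, delta_hbr


# Placeholder coefficients: wavelength 1 is absorbed more strongly by HbR,
# wavelength 2 more strongly by HbO, which is what makes the system solvable.
EXAMPLE_EPS = ((0.6, 1.5), (1.1, 0.8))
EXAMPLE_DISTANCE_CM = 3.0   # hypothetical LED-to-photodetector spacing
EXAMPLE_DPF = 6.0           # hypothetical differential path-length factor

if __name__ == "__main__":
    od1, od2 = forward_delta_od(0.002, -0.001, EXAMPLE_EPS,
                                EXAMPLE_DISTANCE_CM, EXAMPLE_DPF)
    hbo, hbr = solve_hemoglobin_changes(od1, od2, EXAMPLE_EPS,
                                        EXAMPLE_DISTANCE_CM, EXAMPLE_DPF)
    print(f"recovered HbO change: {hbo:.6f}, HbR change: {hbr:.6f}")
```

The sketch uses two wavelengths because there are two unknowns, the change in oxygenated hemoglobin and the change in deoxygenated hemoglobin. A real device would also have to deal with noise, motion, and light scattered by the scalp and skull, none of which is modeled here.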

In addition to requiring a huge machine, fMRI measures only the so-called hemodynamic response, a relatively slow measure of brain activity. EEG, on the other hand, picks up only the fast electrical activity of neurons. But the change in blood flow is a response to the neurons firing, and Kamrani says he can use a statistical trick to translate readings of the blood-flow patterns into neuronal activity, so that optical EEG effectively measures both signals. For instance, when the brain fires the neurons that order “raise the right hand,” there’s increased activity in a specific area of the brain, which is reflected in blood flow. Translating blood flow into neuronal activity in this way, though, is still somewhat controversial.

But Kamrani believes it should prove reliable enough that a person unable to control a mouse or keyboard could instead send commands to a computer using only her thoughts. Such a small, portable brain-machine interface would be a boon to the disabled. It might even be possible, he says, to send information from one human brain directly to another.

Though the optical EEG is only at the proof-of-concept stage, Kamrani says that with enough testing to validate what it’s measuring, such a device could be ready for commercial use within two or three years.

Neil Savage is a freelance science and technology writer based in Lowell, Mass., and a frequent contributor to IEEE Spectrum. His topics of interest include photonics, physics, computing, materials science, and semiconductors. His most recent article, “Tiny Satellites Could Distribute Quantum Keys,” describes an experiment in which cryptographic keys were distributed from satellites released from the International Space Station. He serves on the steering committee of New England Science Writers.