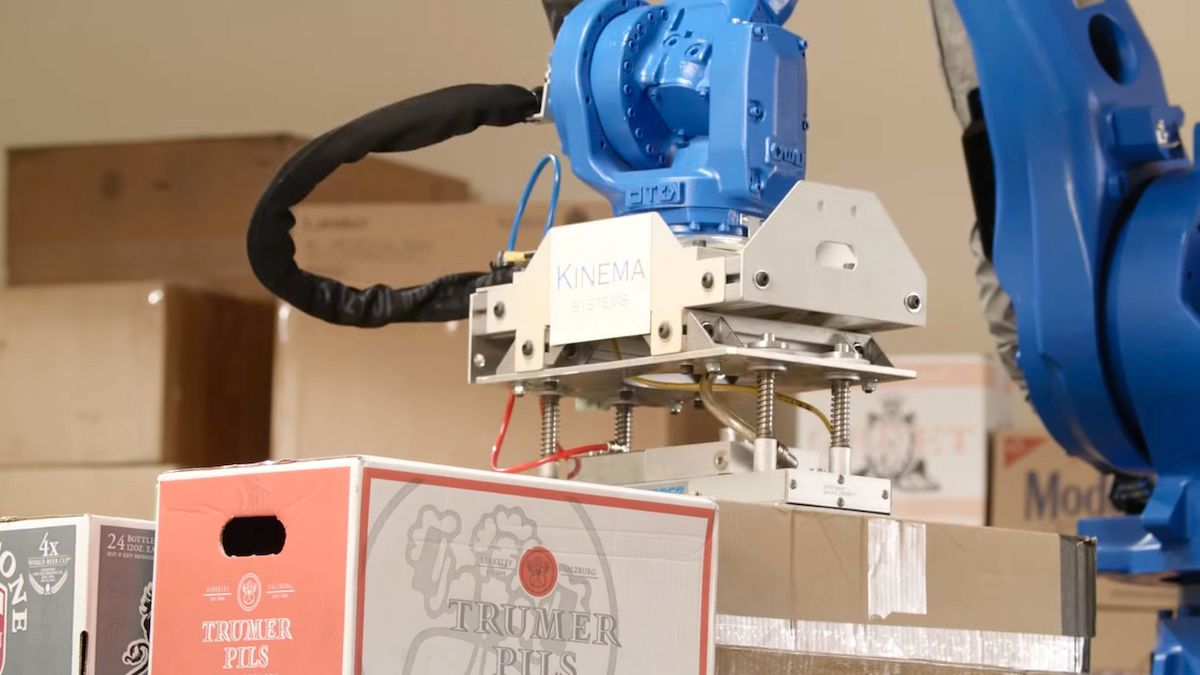

Today, Kinema Systems, a robotics startup based in Menlo Park, Calif., is coming out of stealth mode to announce Kinema Pick, which is “the world’s first self-training, self-calibrating software solution for robotic depalletizing.” I know, it sounds a little dry, but they have a convincingly cool demo, and we have lots of details on how the system works (and why it’s important) from Kinema co-founder and CEO Sachin Chitta.

Depalletizing is the task of picking up boxes of stuff off of shipping pallets and doing something with them, like putting them on a conveyor belt. If this sounds like a task that should be easy and useful to automate with an industrial robot arm, that’s because it is, with the caveat that it’s only easy if you get the same pallets with the same boxes on them over and over again. E-commerce companies are getting pallets with all kinds of random boxes tightly jammed on there however they’ll fit, which is too much variability for most robots to handle. So, humans have to do it, by hand, and that’s slow and costly.

This is where Kinema comes in:

It may not look like it, but a bunch of closely packed boxes of different sizes, shapes, and colors poses a very difficult vision problem for a picking robot. “You’re looking at a layer of boxes, and quite often, it looks like one giant box,” Chitta says. “It’s hard for the system to know, ‘what is a box, and where do I go to pick something?’ That’s the hard part, and that’s what we’re addressing.” Kinema is now starting depalletizing pilots with some of the largest material handling companies in the United States.

Part of the advantage that Kinema is offering is ease of setup, calibration, and use. The robot does pretty much everything by itself—you just need to set up the vision system, tell it where the pallet is going to be, and then tell it where you want the boxes to end up. That’s it. The system is also capable of self-training, which we asked Chitta to explain to us:

“We’re using object recognition approaches to differentiate boxes from each other. Typically, you train the object recognition system first: you give it a bunch of models and say, ‘this is one box, this is another box.’ With our system, it’s self-training, with no manual training step. It starts from scratch, having no idea what a box is, and as it picks, it’s training itself. Once it picks a box for the first time, it builds a model of what the box looks like, and uses that to speed up its pick the next time. There’s also no training in the motion, it does the planning all by itself.”

The tricky part, Chitta says, is that very first pick, since that’s the point where tightly packed boxes are hardest to differentiate from each other while they’re sitting on the pallet. Once you’ve got one box dealt with and the pallet stack has a chunk taken out of it, it becomes much easier to identify other boxes. So how does Kinema pick the first box off of a pallet? “That’s our secret sauce,” Chitta says, although he went on to tell us that anyone who sees the system live will be able to figure it out. All he would hint is that “it’s a combination of motion and perception,” so our best guess is that the robot gives the pile some sort of nudge and watches what happens. In any case, we’ve been promised a video (at some point) that’ll show the whole process.

So how well does this all work? The short and easy answer is that a team of humans can pick a box off of a randomized pallet and place it on a conveyor belt on average once every 6 seconds, and Kinema can beat that. Without stopping. Ever.

Those of you who closely follow robotic depalletization technology may remember this video, from January 2013:

This is a robot from Industrial Perception Inc., a Willow Garage spin-off that was swallowed whole by Google back in December of 2013. Noting the similarities, we asked Chitta about how what Kinema Systems is doing is unique, and he pointed out one major difference: if you look closely, none of the boxes that the IPI robot is picking up are stacked right next to each other. There are small gaps, aided by the fact that the boxes aren’t homogeneous (you can see it even more clearly in this video). This makes it (relatively) easy for a 3D sensor to detect where one box starts and another ends, simplifying the pick. However, real pallets don’t work this way. They’re stuffed full, no gaps, and often all the boxes look identical. Kinema is designed to work with pallets in any configuration, making it practical as an industrial product, Chitta says.

Kinema was founded by Chitta and Dave Hershberger, both of whom spent many years doing robotics research at Willow Garage and SRI International. Chitta led the development of many of the core ROS mobility components, including arm_navigation, MoveIt!, and ros_control. “Basically, any of the manipulation stuff we see in ROS now came from me or my group,” Chitta explains. Hershberger worked on rviz, along with the ROS navigation stack.

After developing a software platform at SRI that was licensed to Verb Surgical, Chitta and Hershberger saw many industrial customers using the open source tools that they had helped develop, and recognized a need for commercial-grade software targeted at end-user applications in the industrial space. Kinema Systems was founded to explore opportunities for using robotic manipulation systems to solve common industrial problems, and Kinema Pick is their first crack at it.

Random picking is a problem that shows up in more places than just depalletizing, and Chitta says Kinema is also looking at things like bin picking. Basically, that means anywhere you’ve got a bunch of random stuff, some of which needs to be efficiently and reliably picked up by a robot. For now, they’re focused on the depalletizing pilots, and once that stage is completed, they expect the commercial system to be available through integrators by the end of this year. If you want one, you can meet with Kinema this week at supply chain trade show MODEX.

[ Kinema Systems ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.