This is part two of a six-part series on the history of natural language processing.

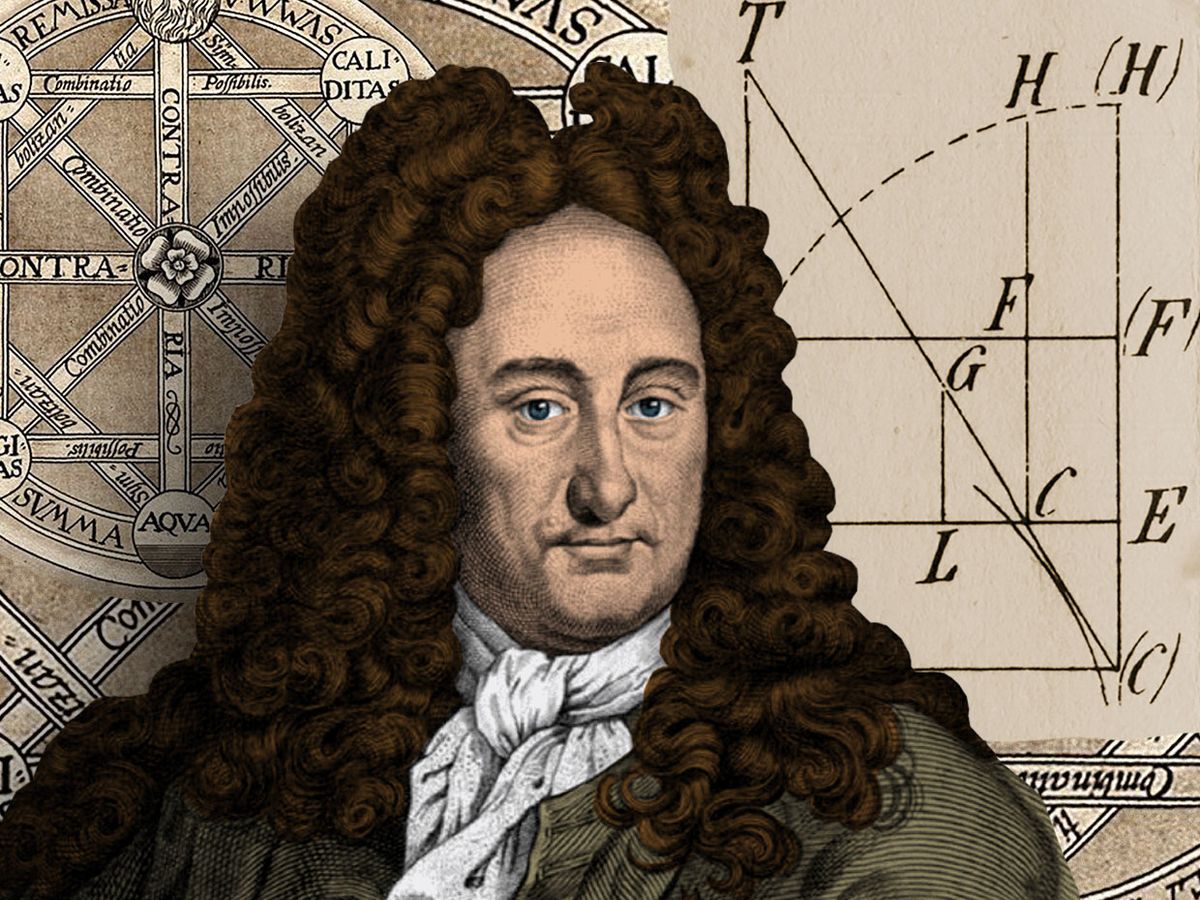

In 1666, the German polymath Gottfried Wilhelm Leibniz published an enigmatic dissertation entitled On the Combinatorial Art. Only 20 years old but already an ambitious thinker, Leibniz outlined a theory for automating knowledge production via the rule-based combination of symbols.

Leibniz’s central argument was that all human thoughts, no matter how complex, are combinations of fundamental concepts, in much the same way that sentences are combinations of words, and words combinations of letters. He believed that if he could find a way to represent these fundamental concepts symbolically and develop a method for combining them logically, then he would be able to generate new thoughts on demand.

The idea came to Leibniz through his study of Ramon Llull, a 13th-century Majorcan mystic who devoted himself to devising a system of theological reasoning that would prove the “universal truth” of Christianity to non-believers.

Llull himself was inspired by Jewish Kabbalists’ letter combinatorics (see part one of this series), which they used to produce generative texts that supposedly revealed prophetic wisdom. Taking the idea a step further, Llull invented what he called a volvelle, a circular paper mechanism with increasingly small concentric circles on which were written symbols representing the attributes of God. Llull believed that by spinning the volvelle in various ways, bringing the symbols into novel combinations with one another, he could reveal all the aspects of his deity.

Leibniz was much impressed by Llull’s paper machine, and he embarked on a project to create his own method of idea generation through symbolic combination. He wanted to use his machine not for theological debate, but for philosophical reasoning. He proposed that such a system would require three things: an “alphabet of human thoughts”; a list of logical rules for their valid combination and re-combination; and a mechanism that could carry out the logical operations on the symbols quickly and accurately—a fully mechanized update of Llull’s paper volvelle.
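As a loose modern illustration of the three ingredients Leibniz listed, the sketch below pairs a tiny alphabet of concepts with one combination rule and a mechanical enumerator. The concept names, the contradiction rule, and the function names `is_valid` and `generate` are all hypothetical choices made for this example; nothing here reconstructs Leibniz’s actual notation.

```python
from itertools import combinations

# Hypothetical "alphabet of human thoughts": a few primitive concepts.
ALPHABET = ["animal", "rational", "mortal", "immortal"]

# Hypothetical rule: a combination is invalid if it contains
# a pair of concepts that contradict each other.
CONTRADICTIONS = {frozenset({"mortal", "immortal"})}


def is_valid(combo):
    """Return True if no two concepts in the combination contradict."""
    return not any(
        frozenset(pair) in CONTRADICTIONS for pair in combinations(combo, 2)
    )


def generate(alphabet, size):
    """Mechanically enumerate every valid combination of `size` concepts."""
    return [combo for combo in combinations(alphabet, size) if is_valid(combo)]


if __name__ == "__main__":
    for combo in generate(ALPHABET, 2):
        print(" + ".join(combo))
```

The enumeration is exhaustive but blind: it lists every permitted combination without any way to judge what the result means.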

He imagined that this machine, which he called “the great instrument of reason,” would be able to answer all questions and resolve all intellectual debate. “When there are disputes among persons,” he wrote, “we can simply say, ‘Let us calculate,’ and without further ado, see who is right.”

The notion of a mechanism that produced rational thought encapsulated the spirit of Leibniz’s times. Other Enlightenment thinkers, such as René Descartes, believed that there was a “universal truth” that could be accessed through reason alone, and that all phenomena were fully explainable if the underlying principles were understood. The same, Leibniz thought, was true of language and cognition itself.

But many others saw this doctrine of pure reason as deeply flawed, and felt that it signified a new age sophistry professed from on high. One such critic was the author and satirist Jonathan Swift, who took aim at Leibniz’s thought-calculating machine in his 1726 book, Gulliver’s Travels. In one scene, Gulliver visits the Grand Academy of Lagado, where he encounters a strange mechanism called “the engine.” The machine has a large wooden frame with a grid of wires; on the wires are small wooden cubes with symbols written on each side.

The students of the Grand Academy of Lagado crank handles on the side of the machine, causing the wooden cubes to rotate and spin and bringing the symbols into new combinations. A scribe then writes down the output of the machine and hands it to the presiding professor. Through this process, the professor claims, he and his students can “write books in philosophy, poetry, politics, laws, mathematics, and theology, without the least assistance from genius or study.”

This scene, with its pre-digital language generation, was Swift’s parody of Leibniz’s thought generation through symbolic combinatorics—and more broadly, an argument against the primacy of science. As with the Lagado academy’s other attempts at contributing to its nation’s development through research—such as trying to change human excretion back into food—Gulliver sees the engine as a pointless experiment.

Swift’s point was that language is not a formal system that represents human thought, as Leibniz proposed, but a messy and ambiguous form of expression that makes sense only in relation to the context in which it is used. To have a machine generate language requires more than having the right set of rules and the right machine, Swift argued—it requires the ability to understand the meaning of words, something that neither the Lagado engine nor Leibniz’s “instrument of reason” could do.

In the end, Leibniz never constructed his idea-generating machine. In fact, he abandoned the study of Llull’s combinatorics altogether and, later in life, came to see the pursuit of mechanizing language as immature. But the idea of using mechanical devices to perform logical functions remained with him, inspiring the construction of his “step reckoner,” a mechanical calculator built in 1673.

But as today’s data scientists devise ever-better algorithms for natural language processing, they’re having debates that echo the ideas of Leibniz and Swift: Even if you can create a formal system to generate human-seeming language, can you give it the ability to understand what it’s saying?

This is the second installment of a six-part series on the history of natural language processing. Last week’s post started the story with a Kabbalist mystic in medieval Spain. Come back next Monday for part three, which describes the language models that were painstakingly built by Andrey Markov and Claude Shannon.

You can also check out our prior series on the untold history of AI.