This is a guest post. The views expressed here are solely those of the author and do not represent positions of IEEE Spectrum or the IEEE.

Most people think of artificial intelligence and robots as an inseparable pair. In fact, the term “artificial intelligence” is rarely used in research labs. Terminology specific to certain kinds of AI and other smart technologies is more relevant. Whenever I’m asked the question “Is this robot operated by AI?”, I hesitate to answer, wondering whether it would be appropriate to call the algorithms we develop “artificial intelligence.”

The term was first used by scientists such as John McCarthy and Marvin Minsky in the 1950s, and it has appeared frequently in sci-fi novels and films for decades. Today it is applied to everything from smartphone virtual assistants to autonomous vehicle algorithms. Both historically and today, AI can mean many different things, which can cause confusion.

However, people often express the preconception that AI is an artificially realized version of human intelligence. And that preconception might come from our cognitive bias as human beings.

We judge robots’ or AI’s tasks in comparison to humans

If you happened to follow the news in 2016, how did you feel when AlphaGo, the AI developed by DeepMind, defeated 9-dan Go player Lee Sedol? You may have been surprised or terrified, thinking that AI had surpassed the ability of geniuses. Still, winning a game with an exponential number of possible moves like Go only means that AI has exceeded a very limited part of human intelligence. The same goes for IBM’s AI, Watson, which competed on the television quiz show “Jeopardy!”

I believe many were impressed to see the Mini Cheetah, developed in my MIT Biomimetic Robotics Laboratory, perform a backflip. While jumping backwards and landing on the ground is very dynamic, eye-catching and, of course, difficult for humans, the algorithm for this particular motion is incredibly simple compared to one that enables stable walking, which requires much more complex feedback loops. Achieving robot tasks that are seemingly easy for us is often extremely difficult and complicated. This gap occurs because we tend to judge a task’s difficulty by human standards.

We tend to generalize AI functionality after watching a single robot demonstration. When we see someone on the street doing backflips, we tend to assume this person would be good at walking and running, and also be flexible and athletic enough to be good at other sports. Very likely, such judgement about this person would not be wrong.

However, can we also apply this judgement to robots? It’s easy for us to generalize and determine AI performance based on an observation of a specific robot motion or function, just as we do with humans. Watching a video of a robot hand solving a Rubik’s Cube at OpenAI, an AI research lab, we think that the AI can perform all other simpler tasks because it can perform such a complex one. We overlook the fact that this AI’s neural network was only trained for a limited type of task: solving the Rubik’s Cube in that configuration. If the situation changes, for example if the hand must hold the cube upside down while manipulating it, the algorithm does not work as well as might be expected.

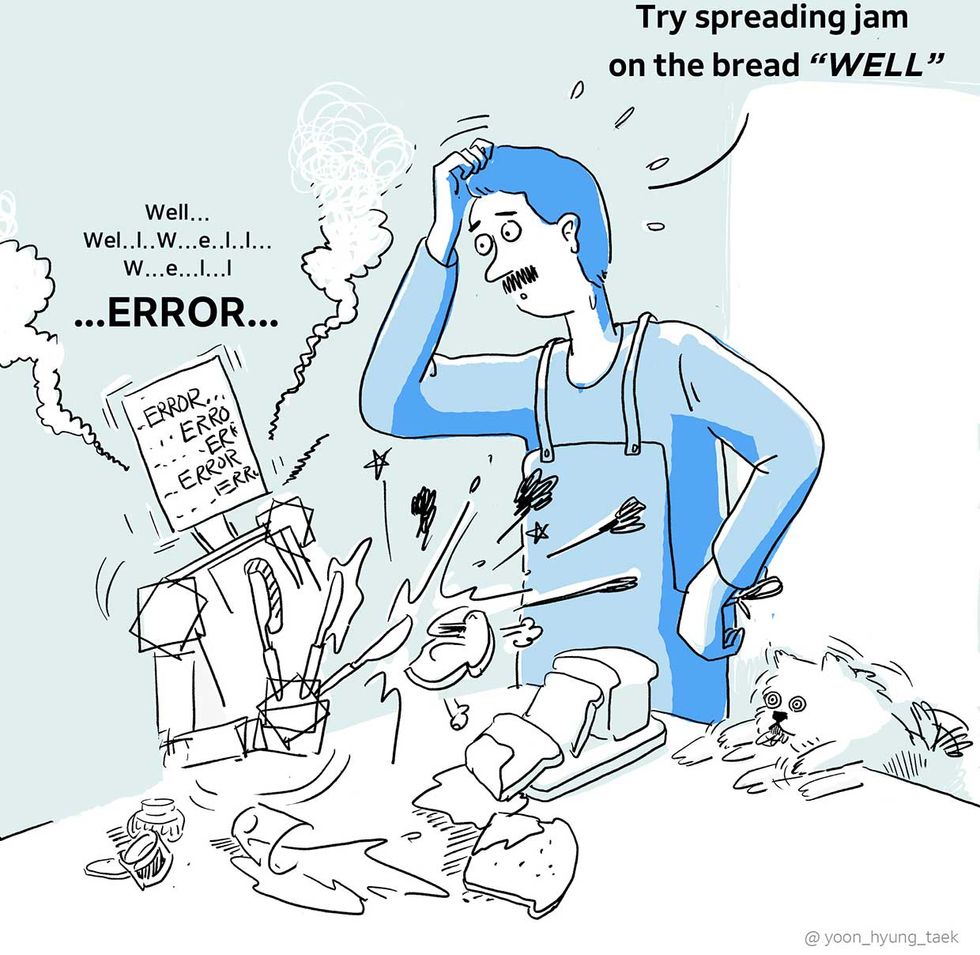

Unlike AI, humans can combine individual skills and apply them to multiple complicated tasks. Once we learn how to solve a Rubik’s Cube, we can quickly work on the cube even when we’re told to hold it upside down, though it may feel strange at first. Human intelligence can naturally combine the objectives of not dropping the cube and solving the cube. Most robot algorithms will require new data or reprogramming to do so. A person who can spread jam on bread with a spoon can do the same using a fork. It is obvious. We understand the concept of “spreading” jam, and can quickly get used to using a completely different tool. Also, while autonomous vehicles require actual data for each situation, human drivers can make rational decisions based on pre-learned concepts to respond to countless situations. These examples show one characteristic of human intelligence in stark contrast to robot algorithms, which cannot perform tasks with insufficient data.

Mammals have continuously been evolving for more than 65 million years. The entire time humans spent on learning math, using languages, and playing games would sum up to a mere 10,000 years. In other words, humanity spent a tremendous amount of time developing abilities directly related to survival, such as walking, running, and using our hands. Therefore, it may not be surprising that computers can compute much faster than humans, as they were developed for this purpose in the first place. Likewise, it is natural that computers cannot easily obtain the ability to freely use hands and feet for various purposes as humans do. These skills have been attained through evolution for over 10 million years.

This is why it is unreasonable to compare the robot or AI performance seen in demonstrations to the abilities of an animal or a human. It would be rash to conclude, after watching videos of the Cheetah robot running across fields at MIT and leaping over obstacles, that robot technologies for walking and running like animals are complete. Numerous robot demonstrations still rely on algorithms set for specialized tasks in bounded situations. There is a tendency, in fact, for researchers to select demonstrations that seem difficult, as they can produce a strong impression. However, this level of difficulty is judged from the human perspective, which may be irrelevant to the actual algorithm performance.

Humans are easily influenced by instantaneous and reflexive perception before any logical thought takes place. And this cognitive bias is strengthened when the subject is very complicated and difficult to analyze logically, as is the case with a robot that uses machine learning.

So where does our human cognitive bias come from? I believe it comes from our psychological tendency to subconsciously anthropomorphize the subjects we see. Humans have evolved as social animals, probably developing the ability to understand and empathize with each other in the process. Our tendency to anthropomorphize subjects would have come from the same evolutionary process. People tend to use the expression “teaching robots” when they refer to programming algorithms. Nevertheless, we are used to using anthropomorphized expressions. As the 18th-century philosopher David Hume said, “There is a universal tendency among mankind to conceive all beings like themselves. We find human faces in the moon, armies in the clouds.”

Of course, we anthropomorphize not only subjects’ appearance but also their state of mind. For example, when Boston Dynamics released a video of its engineers kicking a robot, many viewers reacted by saying “this is cruel,” and that they “pity the robot.” A comment saying, “one day, robots will take revenge on that engineer” received likes. In reality, the engineer was simply testing the robot’s balancing algorithm. However, before any thought process can comprehend the situation, the aggressive motion of kicking combined with the struggling of the animal-like robot is instantaneously transmitted to our brains, leaving a strong impression. In this way, instantaneous anthropomorphism has a deep effect on our cognitive process.

Humans process information qualitatively, and computers, quantitatively

Our daily lives are filled with algorithms, as we can see in the machines and services around us that run on them. All algorithms operate on numbers. We use terms such as “objective function,” which is a numerical function that represents a certain objective. Many algorithms have the sole purpose of reaching the maximum or minimum value of this function, and an algorithm’s characteristics differ based on how it achieves this.
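To make the idea of an objective function concrete, here is a minimal sketch in Python. The quadratic objective, the target value of 3.0, and the names `objective`, `gradient`, and `minimize` are illustrative assumptions, not taken from any particular robot. The search method shown, plain gradient descent, is just one of many ways an algorithm might reach a minimum.

```python
# Hypothetical objective: how far a single number x is from a target of 3.0.
# Real objectives depend on many variables; this one is kept tiny on purpose.
def objective(x):
    return (x - 3.0) ** 2


def gradient(x):
    # Derivative of (x - 3)^2 with respect to x.
    return 2.0 * (x - 3.0)


def minimize(start, step_size=0.1, iterations=200):
    """Plain gradient descent: repeatedly step against the slope."""
    x = start
    for _ in range(iterations):
        x -= step_size * gradient(x)
    return x


best_x = minimize(start=0.0)
print(f"x = {best_x:.4f}, objective = {objective(best_x):.6f}")
```

The algorithm has no notion of what the number means. It only drives the objective's value down, which is why everything depends on whether the goal can be written as such a function in the first place.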

The goals of tasks such as winning a game of Go or chess are relatively easy to quantify. The easier quantification is, the better the algorithms work. In contrast, humans often make decisions without quantitative thinking.

As an example, consider cleaning a room. The way we clean differs subtly from day to day, depending on the situation, depending on whose room it is, and depending on how one feels. Were we trying to maximize a certain function in this process? We did no such thing. The act of cleaning has been done with an abstract objective of “clean enough.” Besides, the standard for how much is “enough” changes easily. This standard may be different among people, causing conflicts particularly among family members or roommates.

There are many other examples. When you wash your face every day, which quantitative indicators do you intend to maximize with your hand movements? How hard do you rub? What about when you choose what to wear, what to have for dinner, or which dish to wash first? The list goes on. We are used to making decisions that are good enough by putting together information we already have. However, we often do not check whether every single decision is optimized. Most of the time, it is impossible to know because we would have to satisfy numerous contradicting indicators with limited data. When selecting groceries with a friend at the store, we cannot each quantify standards for groceries and make a decision based on these numerical values. Usually, when one picks something out, the other will either say “OK!” or suggest another option. This is very different from saying this vegetable “is the optimal choice!” It is more like saying “this is good enough.”

This operational difference between people and algorithms may cause trouble when designing work or services we expect robots to perform. This is because while algorithms perform tasks based on quantitative values, human satisfaction, the outcome of the task, is difficult to quantify completely. It is not easy to quantify the goal of a task that must adapt to individual preferences or changing circumstances, like the aforementioned room cleaning or dishwashing tasks. That is, to coexist with humans, robots may have to evolve not to optimize particular functions, but to achieve “good enough.” Of course, the latter is much more difficult to achieve robustly in real-life situations where you need to manage so many conflicting objectives and qualitative constraints.
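The contrast between optimizing and settling for “good enough” can be sketched in a few lines of Python. Everything here is a hypothetical illustration: the grocery list, the numeric scores, the threshold, and the function names `optimize` and `satisfice` are assumptions made for the example. The second strategy is sometimes called satisficing.

```python
def optimize(options, score):
    """Examine every option and return the single highest-scoring one."""
    return max(options, key=score)


def satisfice(options, score, good_enough):
    """Return the first option whose score clears the threshold, or None."""
    for option in options:
        if score(option) >= good_enough:
            return option
    return None


# Hypothetical groceries with made-up "appeal" scores between 0 and 1.
groceries = [("carrots", 0.6), ("spinach", 0.8), ("kale", 0.9)]


def appeal(item):
    return item[1]


print(optimize(groceries, appeal))        # scans the whole list
print(satisfice(groceries, appeal, 0.7))  # stops at the first acceptable item
```

Note that even this sketch quietly assumes the hard part is solved: it needs a numeric score for each option and a fixed threshold, whereas a person's sense of “enough” shifts with mood, context, and who else is in the room.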

Actually, we do not know what we are doing

Try to recall the most recent meal you had before reading this. Can you remember what you had? Then, can you also remember the process of chewing and swallowing the food? Do you know what exactly your tongue was doing at that very moment? Our tongue does so many things for us. It helps us put food in our mouths, distribute the food between our teeth, swallow the finely chewed pieces, or even send large pieces back toward our teeth, if needed. We can naturally do all of this even while talking to a friend, when the same tongue is also in charge of pronunciation. How much do our conscious decisions contribute to the movement of our tongues, which accomplish so many complex tasks simultaneously? It may seem like we are moving our tongues as we want, but in fact, there are more moments when the tongue is moving automatically, taking only high-level commands from our consciousness. This is why we cannot remember detailed movements of our tongues during a meal. We know little about their movement in the first place.

We may assume that our hands are the most consciously controllable organ, but many hand movements also happen automatically and unconsciously, or subconsciously at most. For those who disagree, try putting something like keys in your pocket and taking them back out. In that short moment, countless micromanipulations are instantly and seamlessly coordinated to complete the task. We often cannot perceive each action separately. We do not even know what units we should divide them into, so we collectively express them as abstract words such as organize, wash, apply, rub, wipe, etc. These verbs are qualitatively defined. They often refer to the aggregate of fine movements and manipulations, whose composition changes depending on the situation. Of course, it is easy even for children to understand and think of these concepts, but from the perspective of algorithm development, these words are endlessly vague and abstract.

Let’s try to teach someone how to make a sandwich by spreading peanut butter on bread. We can show how this is done and explain with a few simple words. Now let’s assume a slightly different situation. Say there is an alien who uses the same language as us, but knows nothing about human civilization or culture. (I know this assumption is already contradictory, but please bear with me.) Can we explain over the phone how to make a peanut butter sandwich? We will probably get stuck trying to explain how to scoop peanut butter out of the jar. Even grasping the slice of bread is not so simple. We have to grasp the bread strongly enough so we can spread the peanut butter, but not so strongly as to ruin the shape of the soft bread. At the same time, we should not drop the bread either. It is easy for us to think of how to grasp the bread, but it will not be easy to express this through speech or text, let alone in a function. Even when the learner is human, can one learn a carpenter’s work over the phone? Can we precisely correct tennis or golf postures over the phone? It is difficult to discern to what extent the details we see are done either consciously or unconsciously.

My point is that not everything we do with our hands and feet can directly be expressed with our language. Things that happen in between successive actions often automatically occur unconsciously, and thus we explain our actions in a much simpler way than how they actually take place. This is why our actions seem very simple, and why we forget how incredible they really are. The limitations of expression often lead to underestimation of actual complexity. We should recognize the fact that difficulty of language depiction can hinder research progress in fields where words are not well developed.

Until recently, AI has been practically applied in information services related to data processing. Some prominent examples today include voice recognition and facial recognition. Now, we are entering a new era of AI that can effectively perform physical services in our midst. That is, the time is coming in which automation of complex physical tasks becomes imperative.

Particularly, our increasingly aging society poses a huge challenge. Shortage of labor is no longer a vague social problem. It is urgent that we discuss how to develop technologies that augment humans’ capability, allowing us to focus on more valuable work and pursue lives uniquely human. This is why not only engineers but also members of society from various fields should improve their understanding of AI and unconscious cognitive biases. It is easy to misunderstand artificial intelligence, as noted above, because it is substantively unlike human intelligence.

Assumptions that are very natural among humans may become cognitive biases when applied to AI and robots. Without a clear understanding of our cognitive biases, we cannot set the appropriate directions for technology research, application, and policy. To develop productively as a scientific community, we need to pay keen attention to our own cognition and to debate deliberately as we promote appropriate development and applications of technology.

Sangbae Kim leads the Biomimetic Robotics Laboratory at MIT. The preceding is an adaptation of a blog Kim posted in June for Naver Labs.