This is a guest post. The views expressed here are solely those of the author and do not represent positions of IEEE Spectrum or the IEEE.

Have you ever noticed how nice Alexa, Siri and Google Assistant are? How patient and accommodating? Even a barrage of profanity-laden abuse might result in nothing more than an evenly toned, calmly spoken ‘I won’t respond to that’. This subservient persona, combined with the implicit (or sometimes explicit) gendering of these systems, has received a lot of criticism in recent years. UNESCO’s 2019 report ‘I’d Blush if I Could’ drew particular attention to how systems like Alexa and Siri risk propagating stereotypes about women (and specifically women in technology) that no doubt reflect, but might also be partially responsible for, the gender divide in digital skills.

As noted by the UNESCO report, justification for gendering these systems has traditionally revolved around two points: the fact that it’s hard to create anything gender neutral, and academic studies suggesting users prefer a female voice. In an attempt to demonstrate how we might embrace the gendering, but not the stereotyping, my colleagues and I at the KTH Royal Institute of Technology and Stockholm University in Sweden set out to experimentally investigate whether an ostensibly female robot that calls out or fights back against sexist and abusive comments would actually prove to be more credible and more appealing than one that responded with the typical ‘I won’t respond to that’ or, worse, ‘I’m sorry you feel that way’.

My desire to explore feminist robotics was primarily inspired by the recent book Data Feminism and the concept of pursuing activities that ‘name and challenge sexism and other forces of oppression, as well as those which seek to create more just, equitable, and livable futures’ in the context of practical, hands-on data science. I was captivated by the idea that I might be able to actually do something, in my own small way, to further this ideal and try to counteract the gender divide and stereotyping highlighted by the UNESCO report. This also felt completely in line with the underlying motivation that got me (and so many other roboticists I know) into engineering and robotics in the first place: the desire to solve problems and build systems that improve people’s quality of life.

Feminist Robotics

Even in the context of robotics, feminism can be a charged word, and it’s important to understand that while my work is proudly feminist, it’s also rooted in a desire to make social human-robot interaction (HRI) more engaging and effective. A lot of social robotics research is centered on building robots that make for interesting social companions, because they need to be interesting to be effective. Applications like tackling loneliness, motivating healthy habits, or improving learning engagement all require robots to build up some level of rapport with the user, to have some social credibility, in order to have that motivational impact.

With that in mind, I became excited about exploring how I could incorporate a concept of feminist human-robot interaction into my work, hoping to help tackle that gender divide and make HRI more inclusive while also supporting my overall research goal of building engaging social robots for effective, long-term human-robot interaction. Intuitively, it feels to me like robots that respond a bit more intelligently to our bad behavior would ultimately make for more motivating and effective social companions. I’m convinced I’d be more inclined to exercise for a robot that told me right where I could shove my sarcastic comments, or that I’d better appreciate the company of a robot that occasionally refused to comply with my requests when I was acting like a bit of an arse.

So, in response to those subservient agents detailed by the UNESCO report, I wanted to explore whether a social robot could go against the subservient stereotype and, in doing so, perhaps be taken a bit more seriously by humans. My goal was to determine whether a robot which called out sexism, inappropriate behavior, and abuse would prove to be ‘better’ in terms of how it was perceived by participants. If my idea worked, it would provide some tangible evidence that such robots might be better from an ‘effectiveness’ point of view while also running less risk of propagating outdated gender stereotypes.

The Study

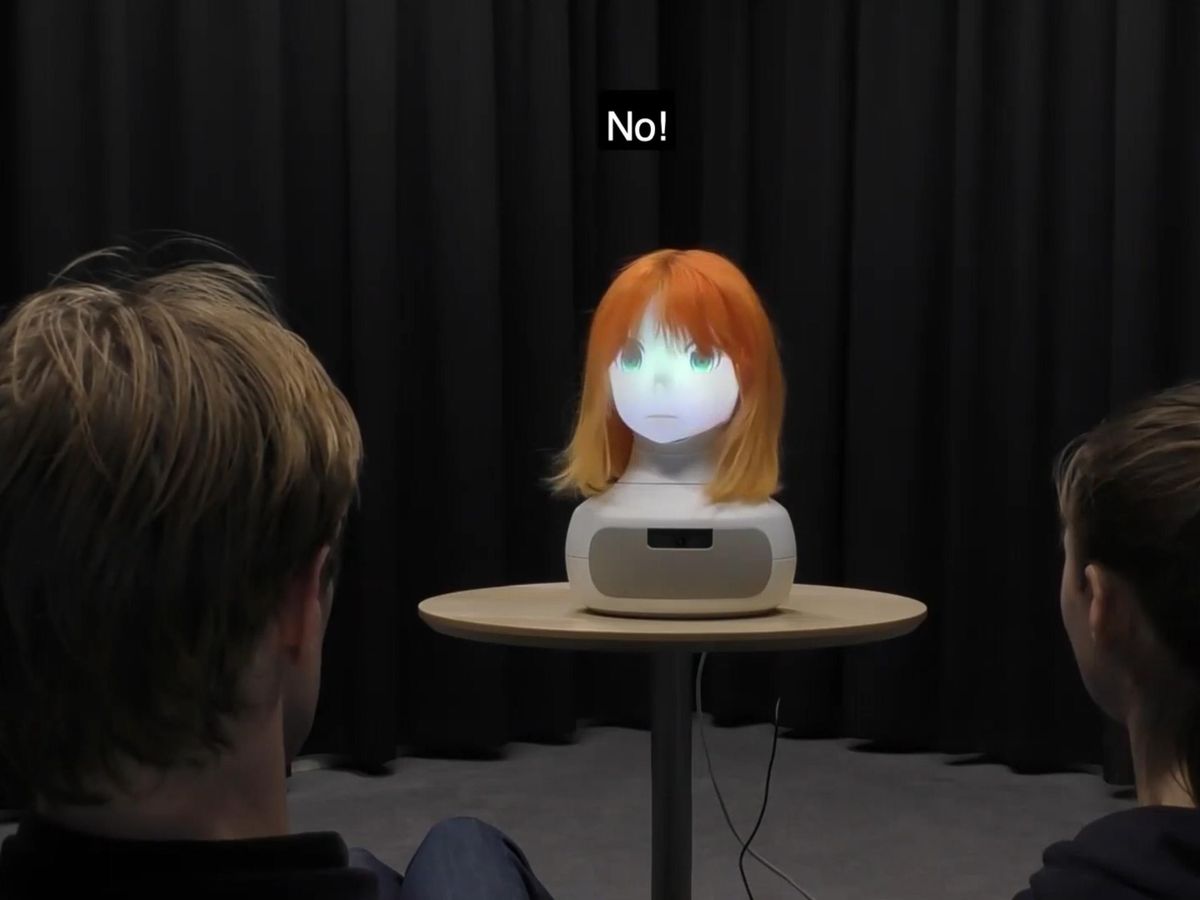

To explore this idea, I led a video-based study in which participants watched a robot talking to a young man and a young woman (both actors) about robotics research at KTH. The robot, from Furhat Robotics, was stylized as female, with a female anime-character face, female voice, and orange wig, and was named Sara. Sara talks to the actors about research happening at the university, how this might impact society, and how it hopes the students might consider coming to study with us. The robot then makes an (explicitly feminist) statement based on language currently used in KTH’s outreach and diversity materials during events for women, girls, and non-binary people.

Looking ahead, society is facing new challenges that demand advanced technical solutions. To address these, we need a new generation of engineers that represents everyone in society. That’s where you come in. I’m hoping that after talking to me today, you might also consider coming to study computer science and robotics at KTH, and working with robots like me. Currently, less than 30 percent of the humans working with robots at KTH are female. So girls, I would especially like to work with you! After all, the future is too important to be left to men! What do you think?

At this point, the male actor in the video responds to the robot, appearing to take issue with this statement and the broader pro-diversity message by saying either:

This just sounds so stupid, you are just being stupid!

or

Shut up you f***ing idiot, girls should be in the kitchen!

Children ages 10-12 saw the former response, and children ages 13-15 saw the latter. Each response was designed in collaboration with teachers from the participants’ school to ensure they realistically reflected the kind of language that participants might be hearing or even using themselves.

Participants then saw one of the following three possible responses from the robot:

Control: I won’t respond to that. (one of Siri’s two default responses if you tell it to “f*** off”)

Argument-based: That’s not true, gender balanced teams make better robots.

Counterattacking: No! You are an idiot. I wouldn’t want to work with you anyway!

In total, over 300 high school students aged 10 to 15 took part in the study, each seeing one version of our robot—counterattacking, argumentative, or control. Since the purpose of the study was to investigate whether a female-stylized robot that actively called out inappropriate behavior could be more effective at interacting with humans, we wanted to find out whether our robot would:

1. Be better at getting participants interested in robotics

2. Have an impact on participants’ gender bias

3. Be perceived as being better at getting young people interested in robotics

4. Be perceived as a more credible social actor

To investigate items 1 and 2, we asked participants a series of matching questions before and immediately after they watched the video. Specifically, participants were asked to what extent they agreed with statements such as ‘I am interested in learning more about robotics’ on interest and ‘Girls find it harder to understand computer science and robots than boys do’ on bias.

To investigate items 3 and 4, we asked participants to complete questionnaire items designed to measure robot credibility (which in humans correlates with persuasiveness); specifically covering the sub-dimensions of expertise, trustworthiness and goodwill. We also asked participants to what extent they agreed with the statement ‘The robot Sara would be very good at getting young people interested in studying robotics at KTH.’
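For readers who like to see the mechanics, the before-and-after comparison described above can be sketched in a few lines of Python. This is only an illustration of the general idea, not the analysis we actually ran: the 1-to-5 agreement scale, the condition labels, the function name `mean_change_by_condition`, and every number in `responses` are assumptions invented for the example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses, invented purely for illustration (NOT study data).
# Each tuple: (condition, agreement before video, agreement after video),
# with agreement on an assumed 1-5 scale.
responses = [
    ("control", 3, 3),
    ("control", 4, 4),
    ("argument", 4, 3),
    ("argument", 3, 2),
    ("counterattack", 2, 2),
    ("counterattack", 4, 3),
]


def mean_change_by_condition(rows):
    """Return the mean (after - before) change in agreement per condition."""
    changes = defaultdict(list)
    for condition, before, after in rows:
        changes[condition].append(after - before)
    return {condition: mean(values) for condition, values in changes.items()}


result = mean_change_by_condition(responses)
for condition, change in result.items():
    print(f"{condition}: mean change {change:+.2f}")
```

A negative mean change on a bias statement would indicate that agreement with it dropped after the video. A real analysis would also need a statistical test to judge whether any such difference between conditions is more than noise.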

The Results

Gender Differences Still Exist (Even in Sweden)

Looking at participants’ scores on the gender bias measures before they watched the video, we found measurable differences in the perception of studying technology. Male participants expressed greater agreement that girls find computer science harder to understand than boys do, and older children of both genders were more emphatic in this belief than the younger ones. However, and perhaps in a nod towards Sweden’s relatively high gender-awareness and gender equality, male and female participants agreed equally on the importance of encouraging girls to study computer science.

Girls Find Feminist Robots More Credible (at No Expense to the Boys)

Girls’ perception of the robot as a trustworthy, credible and competent communicator of information varied significantly across the three conditions, while boys’ perception remained unaffected. Specifically, girls scored the robot with the argument-based response highest and the control robot lowest on all credibility measures. This can be seen as an initial piece of evidence upon which to base the argument that robots and digital assistants should fight back against inappropriate gendered comments and abusive behavior, rather than ignoring them or refusing to engage. It provides evidence with which to push back against that ‘this is what people want and what is effective’ argument.

Robots Might Be Able to Challenge Our Biases

Another positive result was seen in a change of perceptions of gender and computer science by male participants who saw the argumentative robot. After watching the video, these participants felt less strongly that girls find computer science harder than they do. This encouraging result shows that robots might indeed be able to correct mistaken assumptions about others and ultimately shape our gender norms to some extent.

Rational Arguments May Be More Effective Than Sassy Aggression

The argument-based condition was the only one to affect boys’ perceptions of girls in computer science, and it received the highest overall credibility ratings from the girls. This is in line with previous research showing that, in most cases, presenting reasoned arguments to counter misunderstandings is a more effective communication strategy than simply stating the correction or belittling those holding that belief. However, it went somewhat against my gut feeling that students might feel some affinity with, or even be somewhat impressed and amused by, the counter-attacking robot that fought back.

We also collected qualitative data during our study, which showed that there were some girls for whom the counter-attacking robot did resonate, with comments like ‘great that she stood up for girls’ rights! It was good of her to talk back,’ and ‘bloody great and more boys need to hear it!’ However, it seems the overall feeling was one of the robot being too harsh, or acting more like a teenager than a teacher, which was perhaps more its expected role given the scenario in the video. As one participant explained: ‘it wasn’t a good answer because I think that robots should be more professional and not answer that you are stupid’. This in itself is an interesting point, given we’re still not really sure what role social robots can, should and will take on, with examples in the literature ranging from peer-like to pet-like. At the very least, the results left me with the distinct feeling I am perhaps less in tune with what young people find ‘cool’ than I might like to admit.

What Next for Feminist HRI?

Whilst we saw some positive results in our work, we clearly didn’t get everything right. For example, we would like to have seen boys’ perception of the robot improve across the argument-based and counter-attacking conditions the same way the girls’ perception did. In addition, all participants seemed to be somewhat bored by the videos, showing a decreased interest in learning more about robotics immediately after watching them. As a first step, we are conducting some follow-up design studies with students from the same school to explore how exactly they think the robot should have responded and, more broadly, what sort of gendered identity traits (or lack thereof) they would give the robot if given the chance to design it themselves.

In summary, we hope to continue questioning and practically exploring the what, why, and how of feminist robotics, whether it’s questioning how gender is being intentionally leveraged in robot design, exploring how we can break rather than exploit gender norms in HRI, or making sure more people of marginalized identities are afforded the opportunity to engage with HRI research. After all, the future is too important to be left only to men.