How a Parachute Accident Helped Jump-start Augmented Reality

In 1992, hardware for the first interactive AR system literally fell from the skies

Louis Rosenberg tests Virtual Fixtures, the first interactive augmented-reality system that he developed at Wright-Patterson Air Force Base, in 1992.

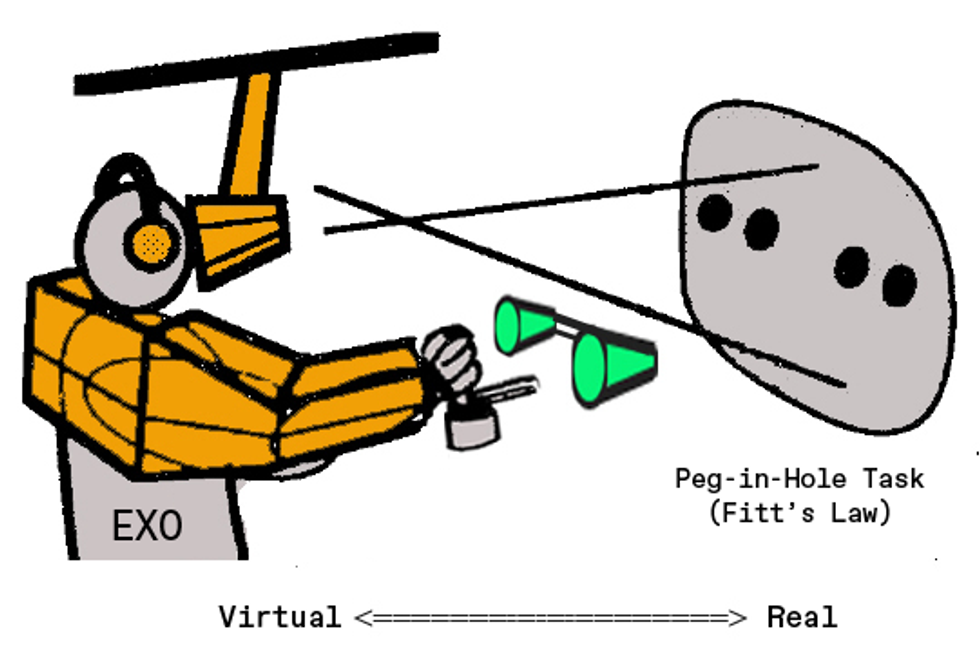

I climb into an upper-body exoskeleton that’s covered in sensors, motors, gears, and bearings, and then lean forward, tilting my head up to press my face against the eyepieces of a vision system hanging from the ceiling. In front of me, I see a large wooden board, painted black and punctuated by a grid of metal holes. The board is real. So is the peg in my hand that I’m trying to move from one hole to another, as fast as I can. When I begin to move the peg, a virtual cone appears over the target hole, along with a virtual surface easing toward it. I can feel the surface as I slide the peg along it toward the cone and into the hole.

This was the Virtual Fixtures platform, which was developed in the early 1990s to test the potential of “perceptual overlays” to improve human performance in manual tasks that require dexterity. And it worked.

These days, virtual-reality experts look back on the platform as the first interactive augmented-reality system that enabled users to engage simultaneously with real and virtual objects in a single immersive reality.

The project began in 1991, when I pitched the effort as part of my doctoral research at Stanford University. By the time I finished—three years and multiple prototypes later—the system I had assembled filled half a room and used nearly a million dollars’ worth of hardware. And I had collected enough data from human testing to definitively show that augmenting a real workspace with virtual objects could significantly enhance user performance in precision tasks.

Given the short time frame, it might sound like all went smoothly, but the project came close to getting derailed many times, thanks to a tight budget and substantial equipment needs. In fact, the effort might have crashed early on, had a parachute—a real one, not a virtual one—not failed to open in the clear blue skies over Dayton, Ohio, during the summer of 1992.

Before I explain how a parachute accident helped drive the development of augmented reality, I’ll lay out a little of the historical context.

Thirty years ago, the field of virtual reality was in its infancy, the phrase itself having only been popularized in 1987 by Jaron Lanier, who was commercializing some of the first headsets and gloves. His work built on earlier research by Ivan Sutherland, who pioneered head-mounted display technology and head-tracking, two critical elements that sparked the VR field. Augmented reality (AR), which combines the real world and the virtual world into a single immersive and interactive reality, did not yet exist in a meaningful way.

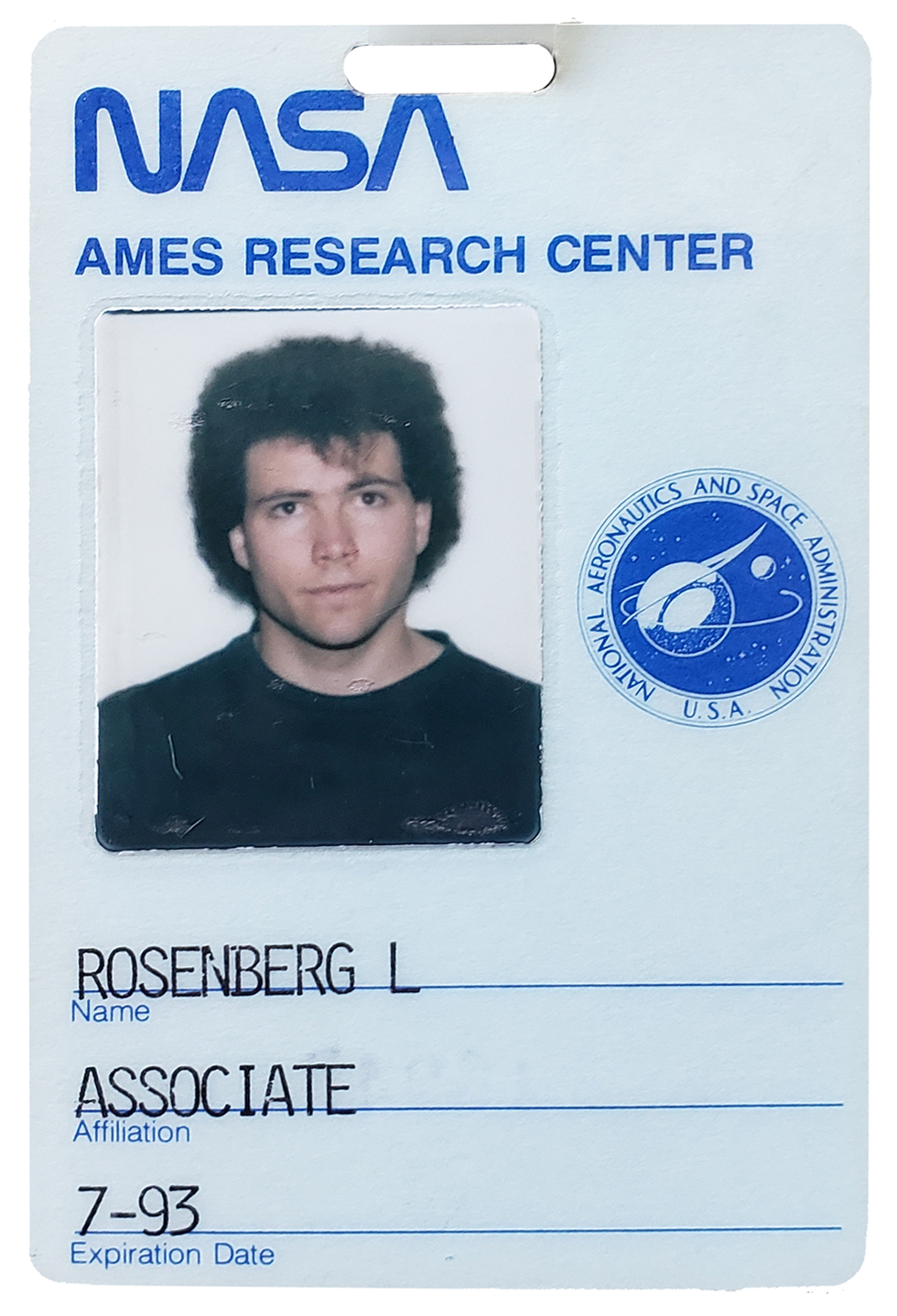

Back then, I was a graduate student at Stanford University and a part-time researcher at NASA’s Ames Research Center, interested in the creation of virtual worlds. At Stanford, I worked in the Center for Design Research, a group focused on the intersection of humans and technology that created some of the very early VR gloves, immersive vision systems, and 3D audio systems. At NASA, I worked in the Advanced Displays and Spatial Perception Laboratory of the Ames Research Center, where researchers were exploring the fundamental parameters required to enable realistic and immersive simulated worlds.

Of course, knowing how to create a quality VR experience and being able to produce it are not the same thing. The best PCs on the market back then used Intel 486 processors running at 33 megahertz. Adjusted for inflation, they cost about US $8,000 and weren’t even a thousandth as fast as a cheap gaming computer today. The other option was to invest $60,000 in a Silicon Graphics workstation, which was still less than a hundredth as fast as a mediocre PC today. So although researchers working in VR during the late 1980s and early 1990s were doing groundbreaking work, the resulting virtual experiences were plagued by crude graphics, bulky headsets, and lag so bad it made people dizzy or nauseated.

I was conducting a research project at NASA to optimize depth perception in early 3D-vision systems, and I was one of those people getting dizzy from the lag. And I found that the images created back then were definitely virtual but far from reality.

Still, I wasn’t discouraged by the dizziness or the low fidelity, because I was sure the hardware would steadily improve. Instead, I was concerned about how enclosed and isolated the VR experience made me feel. I wished I could expand the technology, taking the power of VR and unleashing it into the real world. I dreamed of creating a merged reality where virtual objects inhabited your physical surroundings in such an authentic manner that they seemed like genuine parts of the world around you, enabling you to reach out and interact as if they were actually there.

I was aware of one very basic sort of merged reality, the head-up display, which military pilots used to see flight data in their line of sight so they didn’t have to look down at cockpit gauges. I hadn’t experienced such a display myself, but I had become familiar with the concept thanks to a few 1980s blockbusters, including Top Gun and The Terminator. In Top Gun, a glowing crosshair appeared on a glass panel in front of the pilot during dogfights; in The Terminator, crosshairs joined text and numerical data as part of the fictional cyborg’s view of the world around it.

Neither of these merged realities was the slightest bit immersive; both presented images on a flat plane rather than connecting them to the real world in 3D space. But they hinted at interesting possibilities. I thought I could move far beyond simple crosshairs and text on a flat plane to create virtual objects that could be spatially registered to real objects in an ordinary environment. And I hoped to instill those virtual objects with realistic physical properties.

I needed substantial resources—beyond what I had access to at Stanford and NASA—to pursue this vision. So I pitched the concept to the Human Sensory Feedback Group of the U.S. Air Force’s Armstrong Laboratory, now part of the Air Force Research Laboratory.

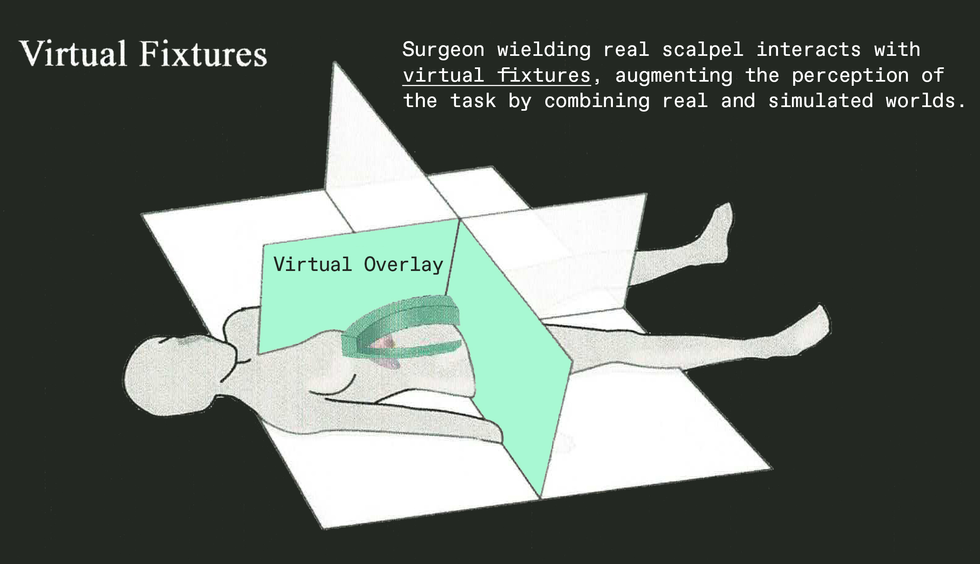

To explain the practical value of merging real and virtual worlds, I used the analogy of a simple metal ruler. If you want to draw a straight line in the real world, you can do it freehand, going slowly and using significant mental effort, and it still won’t be particularly straight. Or you can grab a ruler and do it much more quickly with far less mental effort. Now imagine that instead of a real ruler, you could grab a virtual ruler and make it instantly appear in the real world, perfectly registered to your real surroundings. And imagine that this virtual ruler feels physically authentic, so much so that you can use it to guide your real pencil. Because it’s virtual, it can be any shape and size, with interesting and useful properties that you could never achieve with a metal straightedge.

Of course, the ruler was just an analogy. The applications I pitched to the Air Force ranged from augmented manufacturing to surgery. For example, consider a surgeon who needs to make a dangerous incision. She could use a bulky metal fixture to steady her hand and avoid vital organs. Or we could invent something new to augment the surgery: a virtual fixture to guide her real scalpel, not just visually but physically. Because it’s virtual, such a fixture could pass right through the patient’s body, sinking into tissue before a single cut had been made. That was the concept that got the military excited, and their interest extended beyond in-person tasks like surgery to distant tasks performed using remotely controlled robots. For example, a technician on Earth could repair a satellite by controlling a robot remotely, assisted by virtual fixtures added to video images of the real worksite. The Air Force agreed to provide enough funding to cover my expenses at Stanford along with a small budget for equipment. Perhaps more significantly, I also got access to computers and other equipment at Wright-Patterson Air Force Base near Dayton, Ohio.

And so what became known as the Virtual Fixtures Project came to life, with the goal of building a prototype that could be rigorously tested with human subjects. I became a roving researcher, developing core ideas at Stanford, fleshing out some of the underlying technologies at NASA Ames, and assembling the full system at Wright-Patterson.

Now about those parachutes.

As a young researcher in my early twenties, I was eager to learn about the many projects going on around me at these various laboratories. One effort I followed closely at Wright-Patterson was a project designing new parachutes. As you might expect, when the research team came up with a new design, they didn’t just strap a person in and test it. Instead, they attached the parachutes to dummy rigs fitted with sensors and instrumentation. Two engineers would go up in an airplane with the hardware, dropping rigs and jumping alongside so they could observe how the chutes unfolded. Stick with my story and you’ll see how this became key to the development of that early AR system.

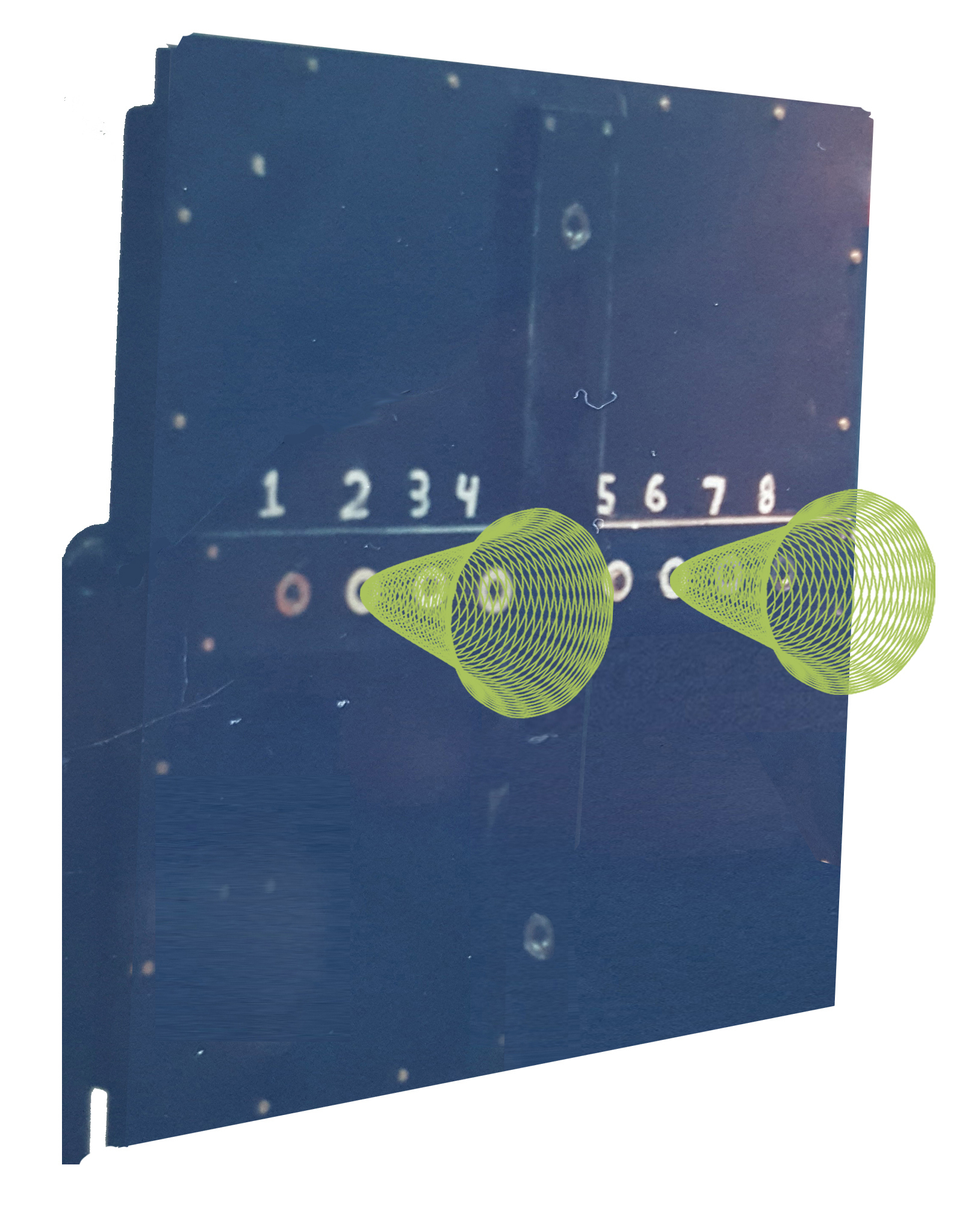

Back at the Virtual Fixtures effort, I aimed to prove the basic concept—that a real workspace could be augmented with virtual objects that feel so real, they could assist users as they performed dexterous manual tasks. To test the idea, I wasn't going to have users perform surgery or repair satellites. Instead, I needed a simple repeatable task to quantify manual performance. The Air Force already had a standardized task it had used for years to test human dexterity under a variety of mental and physical stresses. It’s called the Fitts’s Law peg-insertion task, and it involves having test subjects quickly move metal pegs between holes on a large pegboard.
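For reference, Fitts’s law models how long such an aimed movement takes. In the widely used Shannon formulation (offered here as background, not as the specific analysis used in these trials), movement time $MT$ grows linearly with an index of difficulty $ID$:

$$
MT = a + b \, ID, \qquad ID = \log_2\!\left(\frac{D}{W} + 1\right)
$$

Here $D$ is the distance to the target, $W$ is the target width, and $a$ and $b$ are constants fitted to each subject or condition. With illustrative numbers (not from the study), moving a peg $D = 30$ cm to a target of width $W = 2$ cm gives $ID = \log_2(16) = 4$ bits. Fitts’s original 1954 formulation uses $\log_2(2D/W)$ instead.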

So I began assembling a system that would enable virtual fixtures to be merged with a real pegboard, creating a mixed-reality experience perfectly registered in 3D space. I aimed to make these virtual objects feel so real that bumping the real peg into a virtual fixture would feel as authentic as bumping into the actual board.

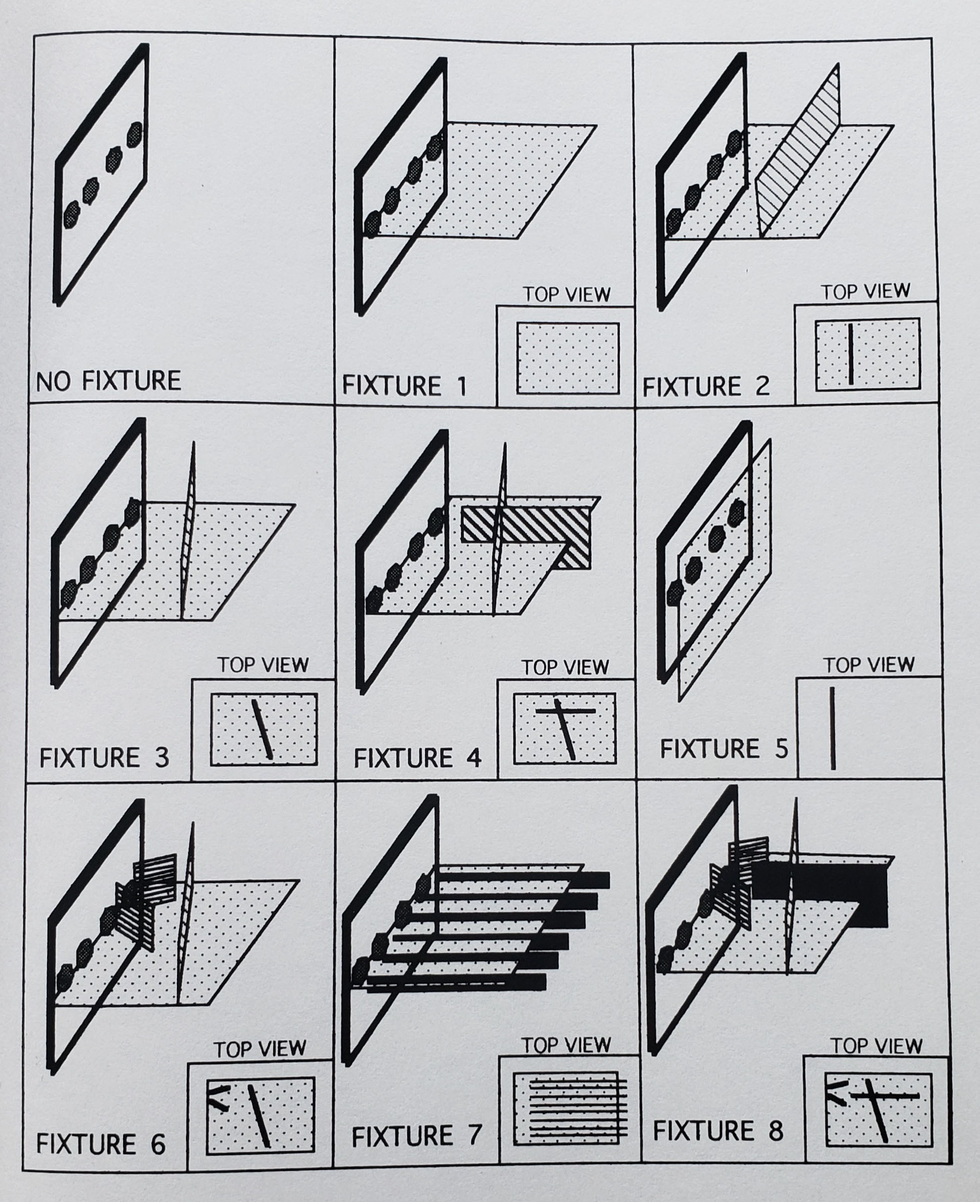

I wrote software to simulate a wide range of virtual fixtures, from simple surfaces that prevented your hand from overshooting a target hole to carefully shaped cones that could help a user guide the real peg into the real hole. I created virtual overlays that simulated textures and had corresponding sounds, and even overlays that simulated pushing through a thick liquid, as if it were virtual honey.

For more realism, I modeled the physics of each virtual element, registering its location accurately in three dimensions so it lined up with the user’s perception of the real wooden board. Then, when the user moved a hand into an area corresponding to a virtual surface, motors in the exoskeleton would physically push back, an interface technology now commonly called “haptics.” It indeed felt so authentic that you could slide along the edge of a virtual surface the way you might move a pencil against a real ruler.
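The article does not spell out the control law behind the exoskeleton’s push-back. A common textbook technique for rendering a rigid virtual surface is penalty-based haptics: the device applies a spring-damper force proportional to how far the hand has penetrated the surface. The Python sketch below assumes that technique for a single planar fixture; the function names, gains, and units are hypothetical illustrations, not the original Virtual Fixtures code.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    """Dot product of two 3-D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def plane_fixture_force(
    tip: Vec3,
    tip_velocity: Vec3,
    plane_point: Vec3,
    plane_normal: Vec3,
    stiffness: float = 2000.0,  # N/m, hypothetical spring gain
    damping: float = 5.0,       # N*s/m, hypothetical damper gain
) -> Vec3:
    """Force (N) a haptic device would apply for a planar virtual fixture.

    Assumes plane_normal is a unit vector pointing out of the surface into
    free space. Positions are in meters, velocity in m/s.
    """
    # Penetration depth: how far the tip is behind the plane (> 0 means inside).
    offset = (
        plane_point[0] - tip[0],
        plane_point[1] - tip[1],
        plane_point[2] - tip[2],
    )
    depth = dot(offset, plane_normal)
    if depth <= 0.0:
        return (0.0, 0.0, 0.0)  # tip is in free space: no contact force

    # Velocity along the normal; negative means moving deeper into the surface.
    normal_speed = dot(tip_velocity, plane_normal)

    # Spring term pushes the tip out; damper term opposes normal velocity.
    # Clamp at zero so the surface only ever pushes, never pulls.
    magnitude = max(0.0, stiffness * depth - damping * normal_speed)
    return (
        magnitude * plane_normal[0],
        magnitude * plane_normal[1],
        magnitude * plane_normal[2],
    )
```

Because only motion along the normal produces force, sliding the tip sideways meets no resistance, which is what lets a user run a peg along a virtual surface the way a pencil runs along a ruler. For example, a tip 1 mm below the plane and moving sideways receives a purely outward push of about 2 N with the default stiffness.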

To accurately align these virtual elements with the real pegboard, I needed high-quality video cameras. Video cameras at the time were far more expensive than they are today, and I had no money left in my budget to buy them. This was a frustrating barrier: The Air Force had given me access to a wide range of amazing hardware, but when it came to simple cameras, they couldn’t help. It seemed like every research project needed them, most of far higher priority than mine.

Which brings me back to the skydiving engineers testing experimental parachutes. These engineers came into the lab one day to chat; they mentioned that their chute had failed to open, their dummy rig plummeting to the ground and destroying all the sensors and cameras aboard.

This seemed like it would be a setback for my project as well, because I knew if there were any extra cameras in the building, the engineers would get them.

But then I asked if I could take a look at the wreckage from their failed test. It was a mangled mess of bent metal, dangling circuits, and smashed cameras. Still, though the cameras looked awful with cracked cases and damaged lenses, I wondered if I could get any of them to work well enough for my needs.

By some miracle, I was able to piece together two working units from the six that had plummeted to the ground. And so, the first human testing of an interactive augmented-reality system was made possible by cameras that had literally fallen out of the sky and smashed into the earth.

To appreciate how important these cameras were to the system, think of a simple AR application today, like Pokémon Go. If you didn’t have a camera on the back of your phone to capture and display the real world in real time, it wouldn’t be an augmented-reality experience; it would just be a standard video game.

The same was true for the Virtual Fixtures system. But thanks to the cameras from that failed parachute rig, I was able to create a mixed reality with accurate spatial registration, providing an immersive experience in which you could reach out and interact with the real and virtual environments simultaneously.

As for the experimental part of the project, I conducted a series of human studies in which users experienced a variety of virtual fixtures overlaid onto their perception of the real task board. Cones and surfaces that guided the user’s hand as they aimed the peg toward a hole proved especially useful. The most effective fixtures involved physical experiences that couldn’t easily be manufactured in the real world but were readily achievable virtually. For example, I coded virtual surfaces that were “magnetically attractive” to the peg. For the users, it felt as if the peg had snapped to the surface. Then they could glide along it until they chose to yank free with another snap. Such fixtures increased speed and dexterity in the trials by more than 100 percent.
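The “magnetically attractive” surfaces can also be sketched in code. The version below is a hypothetical reconstruction, not the original software: it reduces the fixture to one dimension (the peg’s height z above a plane), pulls the peg toward the plane with a spring once it comes within a capture distance, and releases it only after the peg is pulled past a larger breakaway distance. That gap (hysteresis) is an assumption chosen to reproduce the two snaps users felt; all names and numbers are illustrative.

```python
from dataclasses import dataclass


@dataclass
class MagneticPlaneFixture:
    """Hypothetical snap-to fixture for the plane z = 0 (1-D, along the normal).

    capture_dist < breakaway_dist gives hysteresis: the peg snaps on near the
    surface and only releases after being pulled well away from it.
    """

    stiffness: float = 1500.0      # N/m, pull toward the surface while attached
    capture_dist: float = 0.005    # m, attach when closer than this
    breakaway_dist: float = 0.015  # m, detach when farther than this
    attached: bool = False

    def force(self, z: float) -> float:
        """Normal force (N) on the peg at signed height z (m) from the surface."""
        distance = abs(z)
        if not self.attached and distance < self.capture_dist:
            self.attached = True   # first snap: peg grabbed by the surface
        elif self.attached and distance > self.breakaway_dist:
            self.attached = False  # second snap: user yanked free
        if not self.attached:
            return 0.0
        # Spring pulls the peg back onto the plane from either side. Motion
        # parallel to the plane is not modeled here, so gliding is unaffected.
        return -self.stiffness * z
```

Calling `force` once per control tick with the peg’s current height reproduces the sequence: zero force far away, a sudden pull once the peg comes within 5 mm, a growing pull as the user drags it back out, and a sudden drop to zero once it is yanked beyond 15 mm.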

Of the various applications for Virtual Fixtures that we considered at the time, the most commercially viable involved manually controlling robots in remote or dangerous environments, such as during hazardous-waste cleanup. If the communications distance introduced a time delay in the telerobotic control, virtual fixtures became even more valuable for enhancing human dexterity.

Today, researchers are still exploring the use of virtual fixtures for telerobotic applications with great success, including for use in satellite repair and robot-assisted surgery.

I went in a different direction, pushing for more mainstream applications for augmented reality. That’s because the part of the Virtual Fixtures project that had the greatest impact on me personally wasn’t the improved performance in the peg-insertion task. Instead, it was the big smiles that lit up the faces of the human subjects when they climbed out of the system and effused about what a remarkable experience they had had. Many told me, without prompting, that this type of technology would one day be everywhere.

And indeed, I agreed with them. I was convinced we’d see this type of immersive technology go mainstream by the end of the 1990s. In fact, I was so inspired by the enthusiastic reactions people had when they tried those early prototypes, I founded a company in 1993—Immersion—with the goal of pursuing mainstream consumer applications. Of course, it hasn’t happened nearly that fast.

At the risk of being wrong again, I sincerely believe that virtual and augmented reality, now commonly referred to as the metaverse, will become an important part of most people’s lives by the end of the 2020s. In fact, based on the recent surge of investment by major corporations into improving the technology, I predict that by the early 2030s augmented reality will replace the mobile phone as our primary interface to digital content.

And no, none of the test subjects who experienced that early glimpse of augmented reality 30 years ago knew they were using hardware that had fallen out of an airplane. But they did know that they were among the first to reach out and touch our augmented future.
