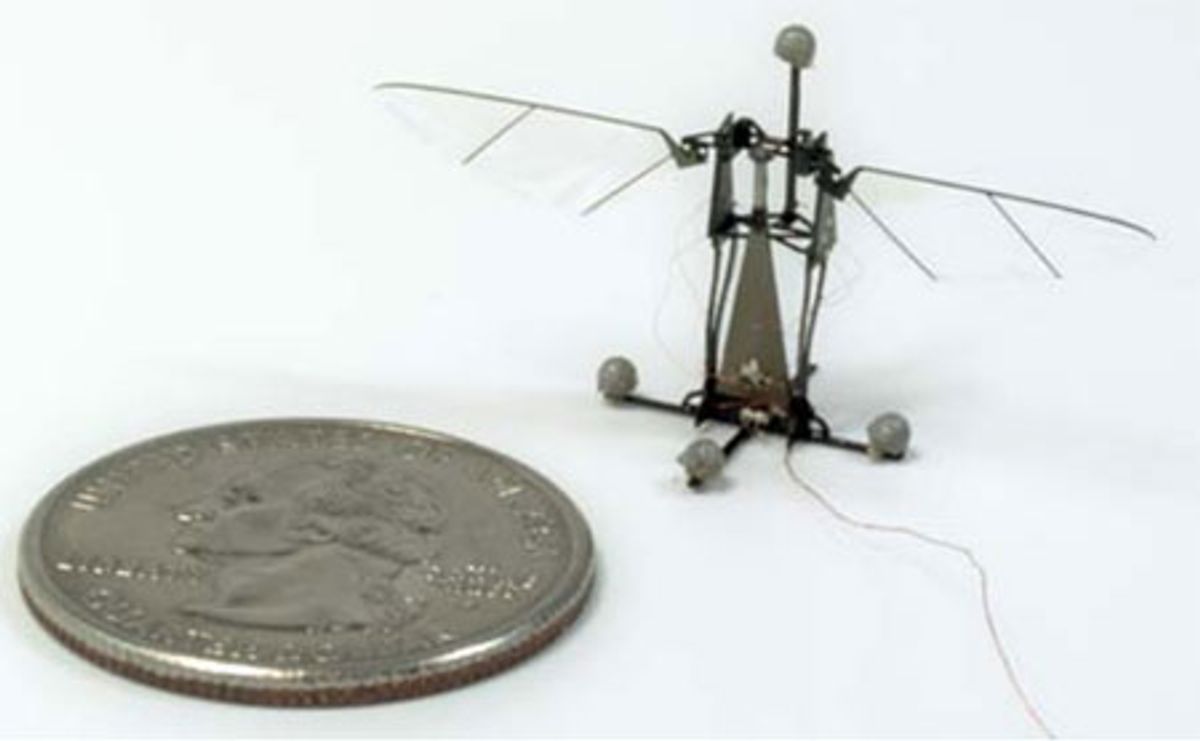

Harvard has been working on a robotic bee for five years now. Five years is a long time in the fast-paced world of robotics, but when you're trying to design a controllable flying robot that weighs less than one tenth of one gram from scratch, getting it to work properly is a process that often has to wait for technology to catch up to the concept.

The RoboBee has been able to take off under its own power for years, but roboticists have only just figured out how to get it to both take off and go where they want it to. Or at least, they're getting very, very close, and the latest testing was presented at one of the opening sessions of IROS this morning.

With the addition of two small control actuators underneath the wings, RoboBee has been endowed with the ability to pitch and roll, which is two thirds of what it needs to be able to do to be a fully controllable robotic insect. These maneuvers are currently open-loop, which means that the RoboBee isn't getting any sensor feedback: it's just been instructed to steer itself in one particular way, which it obediently does until it violently crashes into something.

As you can see, these are hardy little robocritters: the prototype RoboBees have gone through dozens of flights, "almost always with crash landings," according to the researchers.

The reason that RoboBee hasn't yet learned to yaw is that all three axes of motion (yaw, pitch, and roll) are coupled together such that it's difficult to get a pure output with a pure input: if you try to get the robot to pitch, it's going to yaw and roll a little bit too, and isolating yaw from pitch and roll proved to be particularly tricky. Ongoing research will develop a feedback controller that can compensate for this, which should (we hope) mean that a RoboBee capable of hovering and fully controllable flight will be buzzing our way sometime soon.
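To make the coupling problem concrete, here is a minimal Python sketch. It is a toy model, not the researchers' method: the `COUPLING` matrix, its numbers, and the function names are all invented for illustration, and the fixed pre-compensation shown is much simpler than the sensor-based feedback controller the team plans to develop. It only shows why a "pure" pitch command leaks into roll and yaw, and how knowing the coupling lets you pick a mixed command that cancels the leakage.

```python
# Toy model of coupled axes. Row i says how much torque about axis i
# (roll, pitch, yaw) results from a unit command on each input channel.
# All values are hypothetical, not measured RoboBee data.
COUPLING = [
    [1.00, 0.20, 0.10],
    [0.15, 1.00, 0.10],
    [0.30, 0.25, 1.00],
]


def torques(command):
    """Map a (roll, pitch, yaw) command to the resulting body torques."""
    return [sum(c * u for c, u in zip(row, command)) for row in COUPLING]


def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def decouple(desired):
    """Find the command whose torques equal `desired` (Cramer's rule)."""
    d = det3(COUPLING)
    command = []
    for col in range(3):
        m = [row[:] for row in COUPLING]
        for r in range(3):
            m[r][col] = desired[r]
        command.append(det3(m) / d)
    return command


# A naive "pitch only" command also produces roll and yaw torque.
naive = torques([0.0, 1.0, 0.0])

# A compensated command mixes all three inputs to get pitch alone.
compensated = torques(decouple([0.0, 1.0, 0.0]))

print("naive:      ", naive)
print("compensated:", compensated)
```

In this toy model the compensated torques come out as pure pitch, up to floating-point error. A real robot's coupling is not known exactly and shifts in flight, which is why the article's feedback controller, working from sensor data, is the more realistic route.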

"Open-loop roll, pitch and yaw torques for a robotic bee," by Benjamin M. Finio and Robert J. Wood from the School of Engineering and Applied Sciences and the Wyss Institute for Biologically Inspired Engineering at Harvard University, was presented today at the 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems in Vilamoura, Portugal.

[ Harvard RoboBees ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.