My teenage son has become interested in making music. In my generation, that would have meant picking up an electric guitar and forming a garage band. Instead, he’s installed a digital-audio workstation on his laptop, studied up on music theory, and started composing “EDM,” or electronic dance music. Frankly, I don’t understand what he’s doing.

I would much prefer that he spend some of his free hours honing his programming skills, and I keep suggesting that he explore one of the machine-learning frameworks now available. Although he’s expressed interest and has started to explore Torch, he’s not found anything that would make him really dive in.

So, while I’m not musical myself, my eyes lit up when I stumbled on Google’s new neural music-synthesis project NSynth (Neural Synthesizer). This, I thought, might be just the ticket to get my music-giddy son hooked on the amazing things possible with machine learning.

NSynth uses a deep neural network to distill musical notes from various instruments down to their essentials. Google’s developers first created a digital archive of some 300,000 notes, including up to 88 examples from about 1,000 different instruments, all sampled at 16 kilohertz. They then input those data into a deep-learning model that can represent all those wildly different sounds far more compactly using what they call “embeddings.” That exercise supposedly took about 10 days running on thirty-two K40 graphics processing units.

Why do that? Well, with those results, you can now answer a question like “What do you get when you cross a piano with a flute?” (Musicians: Insert joke here.)

It would, of course, be easy enough to add together the two very distinct sounds of each instrument playing, say, middle C. But that would just sound like the two instruments playing the same note at once. NSynth allows you to combine the two sets of embeddings and create a virtual piano-flute, the sound of which can be synthesized using NSynth’s neural decoder.
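To make the distinction concrete, here is a minimal sketch in plain Python. It is not NSynth's code: the function names, the tiny four-number "embeddings," and the sample values are all made up for illustration, and the neural decoder that would turn a blended embedding back into sound is not shown. The point is only that mixing happens on audio samples, while blending happens on the compact representation before any sound is generated.

```python
def mix_audio(wave_a, wave_b):
    """Average two waveforms sample by sample.

    This is the "two instruments playing at once" case.
    """
    return [0.5 * (a + b) for a, b in zip(wave_a, wave_b)]


def blend_embeddings(emb_a, emb_b, weight=0.5):
    """Linearly interpolate two embeddings of equal length.

    weight=0.0 returns emb_a, weight=1.0 returns emb_b.
    A real system would pass the result to a decoder to get audio.
    """
    if len(emb_a) != len(emb_b):
        raise ValueError("embeddings must have the same length")
    return [(1.0 - weight) * a + weight * b for a, b in zip(emb_a, emb_b)]


# Hypothetical placeholder values standing in for real embeddings.
piano_emb = [0.2, -1.0, 0.5, 0.0]
flute_emb = [1.0, 0.0, -0.5, 2.0]

hybrid_emb = blend_embeddings(piano_emb, flute_emb, weight=0.5)
```

Sliding `weight` from 0 to 1 would, under these assumptions, move the hybrid from all piano to all flute.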

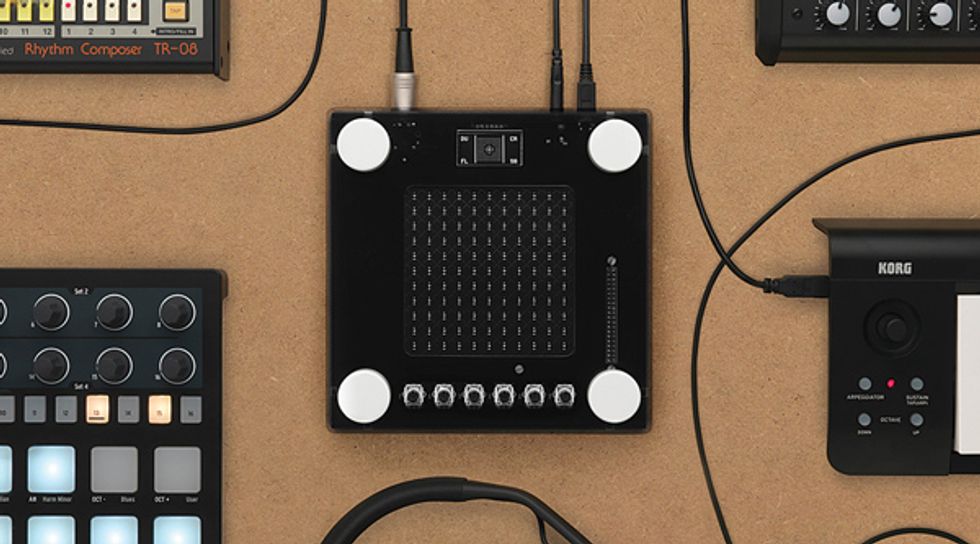

What’s more, the Google team designed a piece of open-source hardware called NSynth Super, which allows you to combine as many as four instruments at once. I figured that building the synthesizer and experimenting with it would be a perfect father-son project.
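One plausible way to blend four sources is to treat them as the corners of a square and weight each by how close a chosen point is to its corner. The sketch below shows that idea, known as bilinear weighting. It is an assumption for illustration, not a description of how NSynth Super actually computes its sounds, and the corner names and functions are hypothetical.

```python
def corner_weights(x, y):
    """Return bilinear weights for a point (x, y) in the unit square.

    The four weights are non-negative and always sum to 1.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("x and y must lie between 0 and 1")
    return {
        "bottom_left": (1.0 - x) * (1.0 - y),
        "bottom_right": x * (1.0 - y),
        "top_left": (1.0 - x) * y,
        "top_right": x * y,
    }


def blend_four(embeddings, x, y):
    """Weighted sum of four equal-length embeddings keyed by corner name."""
    weights = corner_weights(x, y)
    length = len(embeddings["bottom_left"])
    return [
        sum(weights[corner] * embeddings[corner][i] for corner in weights)
        for i in range(length)
    ]
```

At the center of the square each instrument contributes a quarter; at a corner, only that corner's instrument is heard.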

Alas, my son isn’t particularly adept with hardware, so construction fell mostly on me. Google posted a good set of instructions, so putting it together was fairly straightforward.

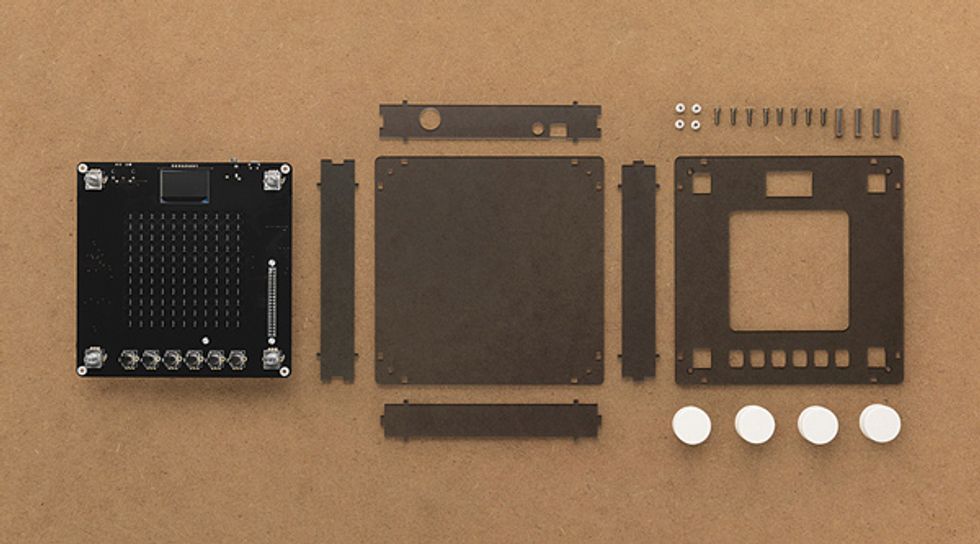

I ordered a premade printed circuit board (PCB) for the project, which cost US $20 on Tindie, making it considerably less expensive than it would have been had I tried to have this rather large board fabricated myself. The same vendor sells a $60 version fully populated with its many surface-mount components, but it was out of stock.

So I ordered the bare board and components separately. On Hackaday, I found a complete bill of materials with links to suggested suppliers, which was handy. Still, a few parts were hard to procure. In particular, the rotary encoders used to assign instruments were unavailable, but I couldn’t see any harm in replacing the 12-detent-per-revolution versions the design specified with 18-detent versions of the same part.

Soldering the surface-mount components required a magnifier and tweezers along with the usual flux, wick, and solder. Once I’d assembled all the components, the next step was to acquire the huge 62-gigabyte image file of the NSynth software to put on the SD card of a Raspberry Pi 3, which plugs into the board and provides the bulk of the synthesizer’s computational power. That took many hours to download, but the operation otherwise went smoothly. It was also straightforward to download the firmware for the processor embedded in the NSynth Super that handles user inputs.

Indeed, it all seemed too easy—until it came time to test the thing. The first hurdle was finding a source of MIDI signals to play, MIDI being the industry standard for controlling and playing digital instruments. My son has a MIDI-enabled keyboard, but I discovered that it has no dedicated MIDI output: It just has USB, over which MIDI data packets are transmitted. (Normally, MIDI uses a hardware interface that electrically isolates instruments from incoming signals to prevent interference from ground loops.) So I created a dedicated output-only MIDI source for testing, using an Arduino and a couple of resistors.
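For readers curious about what such a test source has to send, the sketch below builds the raw bytes of MIDI note-on and note-off messages. It is written in Python for illustration and is not the Arduino code I used; the function names are my own. The byte layout (a status byte of 0x90 or 0x80 plus the channel, followed by a note number and a velocity, each from 0 to 127) and the 31,250-baud serial rate come from the MIDI standard.

```python
MIDI_BAUD_RATE = 31250  # serial rate used by classic 5-pin MIDI links
MIDDLE_C = 60           # MIDI note number for middle C


def _check_range(name, value, upper):
    """Raise ValueError unless 0 <= value <= upper."""
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be between 0 and {upper}")


def note_on(note, velocity=64, channel=0):
    """Return the 3-byte note-on message for the given note."""
    _check_range("note", note, 127)
    _check_range("velocity", velocity, 127)
    _check_range("channel", channel, 15)
    return bytes([0x90 | channel, note, velocity])


def note_off(note, channel=0):
    """Return the 3-byte note-off message for the given note."""
    _check_range("note", note, 127)
    _check_range("channel", channel, 15)
    return bytes([0x80 | channel, note, 0])


# A test source would write these bytes to a serial port, wait, and repeat.
start = note_on(MIDDLE_C, velocity=100)
stop = note_off(MIDDLE_C)
```

A microcontroller doing the same job only needs to push those three bytes out of its serial pin at the MIDI baud rate, pause, and then send the matching note-off.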

I was excited to plug everything together for a test but was quickly disappointed. I heard nothing at all. Many hours and much head-scratching later, I found the problem—a bad connection on one of the pins of the NSynth Super’s digital-to-analog converter, which was sitting a little above the board.

After fixing that issue, it was time to put the NSynth Super PCB into its enclosure. For that, I had earlier ordered an acrylic sheet to be laser cut, which required rearranging the various parts in Google’s Adobe Illustrator file so that things fit on the sheet sizes used by the fabrication house I chose (Ponoko). While ordering the electronic components, I had also gotten the various screws, spacers, and knobs needed. So I had everything on hand, and it was easy enough to involve my son in final assembly.

My son and I spent some late-night hours bonding over the Arduino code needed to get his MIDI keyboard to output true MIDI signals. And we can now tell you how a piano-flute sounds: Awful. Indeed, most combinations of instruments sound pretty bad, at least to me. My son, on the other hand, is more taken with some of NSynth Super’s weird electronic sounds, especially the ones you can dance to.

This article appears in the July 2018 print issue as “Build a Neural Synthesizer.”