Humans use all sorts of bizarre, abstract terms to describe how objects feel, and it’s endlessly frustrating to robots. Or at least, we imagine it must be. Take a word like "squishy," for example: how would you explain that feeling to a robot that experiences touch through some long series of numbers? Researchers at the University of Pennsylvania's Haptics Group (part of the GRASP Lab) and UC Berkeley have developed a system to teach robots how these abstract terms apply to real-world objects, to help our mechanical friends communicate with us in a more relatable way.

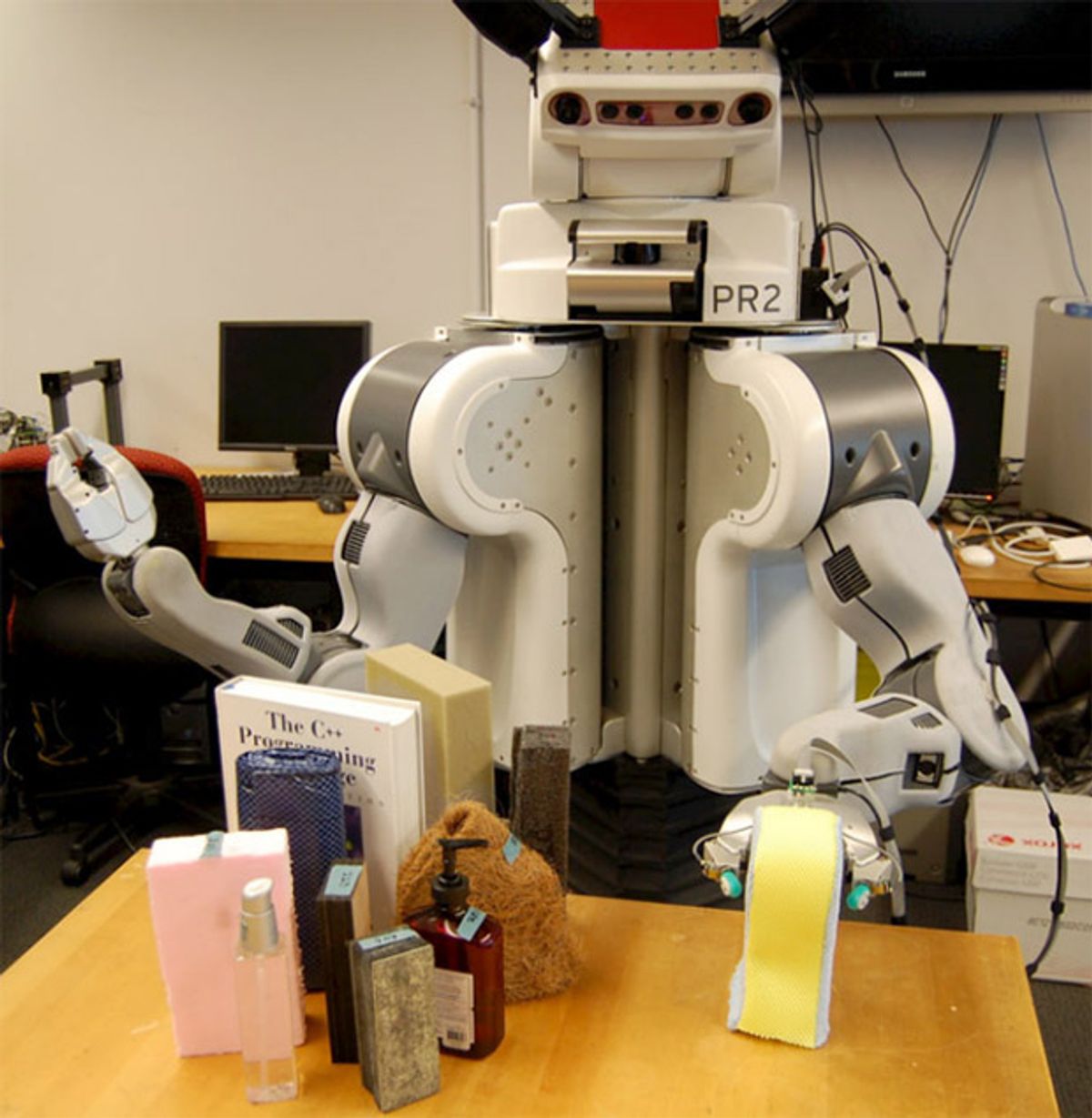

In robotics, the science of touch and touch interaction is called haptics, and at the IEEE International Conference on Robotics and Automation (ICRA) this year, haptics has been all over the place. It’s a tricky thing to experiment with, because it requires sophisticated sensors, and equally sophisticated software to understand what the sensors are saying. Translating such sensor data into something that a human can understand is especially difficult, but in a paper presented this week, a PR2 robot equipped with an innovative finger sensor from SynTouch has been taught to use touch exploration to associate objects with "tactile adjectives."

A tactile adjective is a word like "squishy." "Fuzzy" is another one, and so is "crinkly." Humans easily understand what those terms mean. But robots still have a lot to learn. The study had a bunch of humans feel up a set of common household items, resulting in 34 adjective labels.

The researchers then had a PR2 with the BioTac tactile finger sensor perform a series of exploratory procedures on the same set of objects, including tapping, squeezing, holding, and both slow and fast sliding. Here’s a video of PR2 exploring a folded satin pillowcase through touch:

After training the PR2 by correlating haptic sensor data with adjectives from humans who touched the same objects, the robot was tested on a series of objects that it had never experienced before to see whether it would be able to derive the same haptic adjectives as humans do. And it worked. As shown in the video above (although it flashes past pretty quickly at the end), humans described the folded satin pillowcase as "compact, compressible, deformable, smooth, and squishy," while the robot thought it was "compact, compressible, crinkly, smooth, and squishy." I’m not sure where "crinkly" came from, but the rest of it is pretty close, and it’s very impressive for words that tend towards subjectivity. The researchers summarize:

> The presented results prove that a robot equipped with rich multi-channel tactile sensors can discover the haptic properties of objects through physical interaction and then generalize this understanding across previously unfelt objects. Furthermore, we have shown that these object properties can be related to subjective human labels in the form of haptic adjectives, a task that has rarely been explored in the literature, though it stands to benefit a wide range of future applications in robotics.
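
For a rough sense of how close "pretty close" is, the two adjective lists for the folded satin pillowcase can be compared as sets. The sketch below is only an illustration: the `compare_labels` helper is a hypothetical name, and precision, recall, and F1 are a common way to score multi-label predictions, not necessarily the metric the researchers used. The only data in it are the two lists quoted above.

```python
def compare_labels(human, robot):
    """Score the robot's adjectives against the human ones (hypothetical helper)."""
    human, robot = set(human), set(robot)
    shared = human & robot

    # Precision: share of the robot's adjectives that humans also chose.
    precision = len(shared) / len(robot) if robot else 0.0
    # Recall: share of the human adjectives that the robot recovered.
    recall = len(shared) / len(human) if human else 0.0
    # F1: harmonic mean of the two, defined as 0 when nothing overlaps.
    f1 = 2 * precision * recall / (precision + recall) if shared else 0.0

    return {
        "shared": sorted(shared),
        "missed": sorted(human - robot),  # human said it, robot did not
        "extra": sorted(robot - human),   # robot said it, humans did not
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Adjective lists for the folded satin pillowcase, as quoted in the article.
human = ["compact", "compressible", "deformable", "smooth", "squishy"]
robot = ["compact", "compressible", "crinkly", "smooth", "squishy"]

result = compare_labels(human, robot)
print("shared:", result["shared"])
print("missed:", result["missed"])
print("extra: ", result["extra"])
print("precision=%.2f recall=%.2f f1=%.2f"
      % (result["precision"], result["recall"], result["f1"]))
```

On this one object, four of the five adjectives match in each direction, with "deformable" missed and "crinkly" added. A single object says nothing about overall accuracy, so this is just a way to read the pillowcase example, not a summary of the paper's results.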

"Using Robotic Exploratory Procedures to Learn the Meaning of Haptic Adjectives," by Vivian Chu, Ian McMahon, Lorenzo Riano, Craig G. McDonald, Qin He, Jorge Martinez Perez-Tejada, Michael Arrigo, Naomi Fitter, John C. Nappo, Trevor Darrell, and Katherine J. Kuchenbecker from the University of Pennsylvania and the University of California, Berkeley, was presented last week at ICRA 2013 in Germany.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.