Social media today depends on building echo chambers (a.k.a. “filter bubbles”) that wall off users into like-minded digital communities. These bubbles create higher levels of engagement, but they come with pitfalls, including limiting people’s exposure to diverse views and driving polarization among friends, families and colleagues. This effect isn’t a coincidence either. Rather, it’s directly related to the profit-maximizing algorithms used by social media and tech giants on platforms like Twitter, Facebook, TikTok and YouTube.

In a refreshing twist, one team of researchers in Finland and Denmark has a different vision for how social media platforms could work. They developed a new algorithm that increases the diversity of exposure on social networks, while still ensuring that content is widely shared.

Antonis Matakos, a PhD student in the Computer Science Department at Aalto University in Espoo, Finland, helped develop the new algorithm. He expresses concern about the social media algorithms being used now.

While current algorithms mean that people more often encounter news stories and information that they enjoy, the effect can decrease a person’s exposure to diverse opinions and perspectives. “Eventually, people tend to forget that points of view, systems of values, ways of life, other than their own exist… Such a situation corrodes the functioning of society, and leads to polarization and conflict,” Matakos says.

“Additionally,” he says, “people might develop a distorted view of reality, which may also pave the way for the rapid spread of fake news and rumors.”

Matakos’ research is focused on reversing these harmful trends. He and his colleagues describe their new algorithm in a study published in November in IEEE Transactions on Knowledge and Data Engineering.

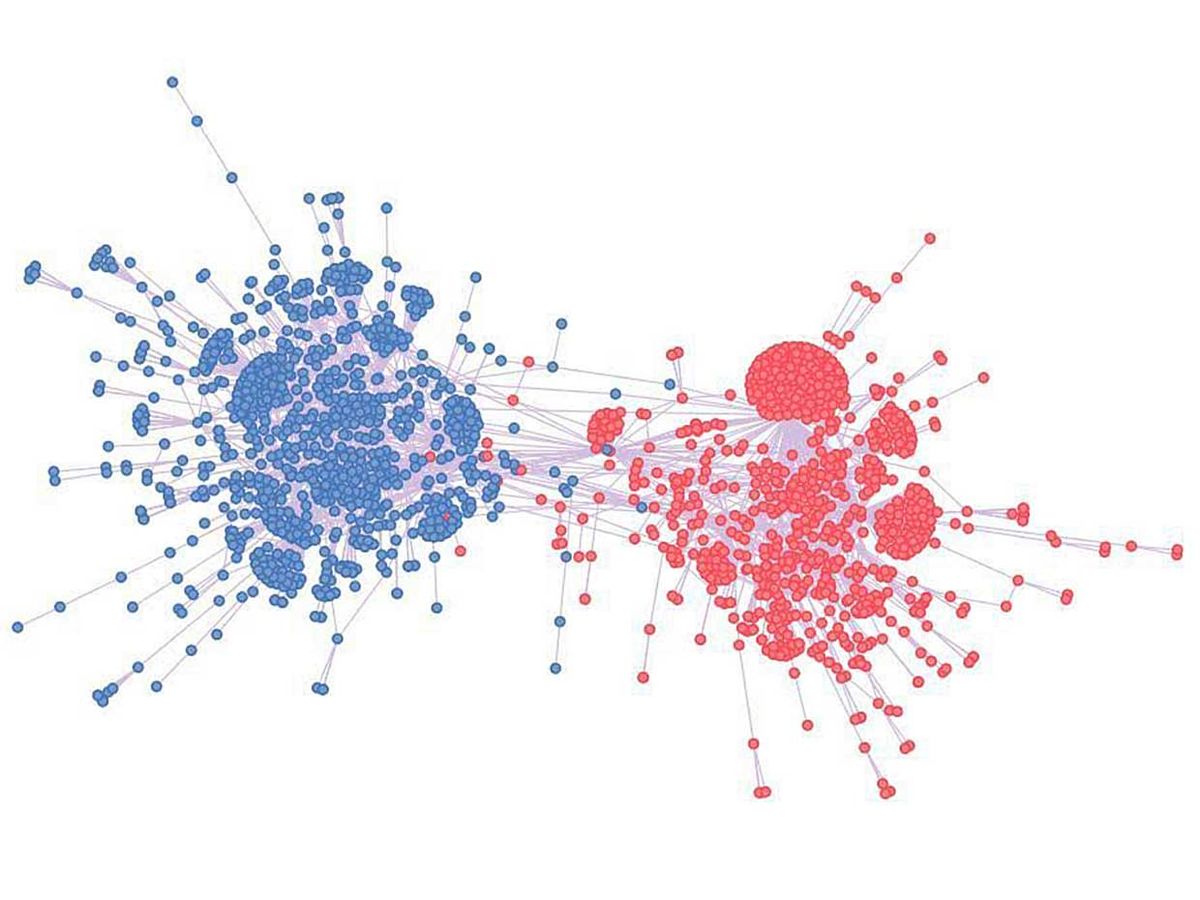

The approach involves assigning numerical values to both social media content and users. The values represent a position on an ideological spectrum, for example far left or far right. These numbers are used to calculate a diversity exposure score for each user. Essentially, the algorithm identifies the social media users whose sharing would spread the broadest variety of news and information perspectives across the network.

Then, diverse content is presented to a select group of people with a given diversity score who are most likely to help the content propagate across the social media network—thus maximizing the diversity scores of all users.
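To make the idea concrete, here is a minimal Python sketch of that two-step logic. It is an illustration only, not the researchers' algorithm. It assumes that leanings are numbers between -1 (far left) and 1 (far right), that a user's diversity score is the gap between the most extreme leanings they have encountered (their own included), and that a shared article reaches only the sharer's direct followers. The function names, user names and article names are all hypothetical.

```python
from itertools import product


def diversity(own_leaning, seen_leanings):
    """Gap between the most extreme leanings a user has encountered,
    counting the user's own leaning (an assumed, simplified metric)."""
    values = [own_leaning, *seen_leanings]
    return max(values) - min(values)


def total_diversity(user_leaning, followers, assignment, article_leaning):
    """Sum of all users' diversity scores after one hop of sharing."""
    seen = {user: [] for user in user_leaning}
    for user, article in assignment:
        # The seeded user and each direct follower see the article.
        for reader in (user, *followers.get(user, ())):
            seen[reader].append(article_leaning[article])
    return sum(diversity(user_leaning[u], seen[u]) for u in user_leaning)


def greedy_seed(user_leaning, followers, article_leaning, budget):
    """Pick up to `budget` (user, article) pairs, each time taking the
    pair that adds the most to the network-wide diversity total."""
    chosen = []
    candidates = sorted(product(user_leaning, article_leaning))
    for _ in range(budget):
        base = total_diversity(user_leaning, followers, chosen, article_leaning)
        best, best_gain = None, 0.0
        for pair in candidates:
            if pair in chosen:
                continue
            gain = total_diversity(
                user_leaning, followers, chosen + [pair], article_leaning
            ) - base
            if gain > best_gain:
                best, best_gain = pair, gain
        if best is None:  # no remaining pair improves the total
            break
        chosen.append(best)
    return chosen


# Hypothetical toy network: two left-leaning and two right-leaning users.
user_leaning = {"ann": -0.8, "bob": -0.6, "cat": 0.7, "dan": 0.9}
followers = {"ann": ["bob"], "cat": ["dan"]}
article_leaning = {"left_op": -1.0, "right_op": 1.0}

print(greedy_seed(user_leaning, followers, article_leaning, budget=2))
```

On this toy network the sketch hands the left-leaning article to a right-leaning user with a follower, and vice versa, because those pairings raise the combined score the most. A real system would also need a model of how far content travels beyond direct followers, which this sketch leaves out.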

In their study, the researchers compare their new social media algorithm to several other models in a series of simulations. One of these other models was a simpler method that selects the most well-connected users and recommends content that maximizes a person’s individual diversity exposure score.
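A comparison method of that kind might look roughly like the following Python sketch. It is a guess at the general shape, not the study's implementation. It assumes leanings run from -1 to 1, that "well-connected" means having the most followers, and that the article farthest from a user's own leaning is the one that maximizes that user's individual score. The function name and sample data are hypothetical.

```python
def degree_baseline(user_leaning, followers, article_leaning, budget):
    """Give each of the `budget` most-followed users the article whose
    leaning is farthest from their own (an assumed, simplified baseline)."""
    # Rank by follower count, breaking ties alphabetically for determinism.
    ranked = sorted(
        user_leaning, key=lambda u: (-len(followers.get(u, ())), u)
    )
    picks = []
    for user in ranked[:budget]:
        article = max(
            sorted(article_leaning),
            key=lambda a: abs(article_leaning[a] - user_leaning[user]),
        )
        picks.append((user, article))
    return picks


# Hypothetical toy data: "hub" has the most followers.
sample_leaning = {"hub": 0.2, "ann": -0.8, "bob": -0.7, "cat": 0.9}
sample_followers = {"hub": ["ann", "bob", "cat"], "ann": ["bob"]}
sample_articles = {"left_op": -1.0, "right_op": 1.0}

print(degree_baseline(sample_leaning, sample_followers, sample_articles, budget=2))
```

Note that this approach looks only at each chosen user's own score and never asks what the followers who receive the content stand to gain.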

Matakos says his group’s algorithm provides a feed for social media users that is at least three times more diverse (according to the researchers’ metric) than this simpler method, and that the gap is even wider against the other baseline methods used for comparison in the study.

These results suggest that targeting a strategic group of social media users and feeding them the right content is more effective for propagating diverse views through a social media network than focusing on the most well-connected users. Importantly, the simulations completed in the study also suggest that the new model is scalable.

A major hurdle, of course, is whether social media networks would be open to incorporating the algorithm into their systems. Matakos says that, ideally, his new algorithm could be an opt-in feature that social media networks offer to their users.

“I think [the social networks] would be potentially open to the idea,” says Matakos. “However, in practice we know that the social network algorithms that generate users' news feeds are orientated towards maximizing profit, so it would need to be a big step away from that direction.”

Michelle Hampson is a freelance writer based in Halifax. She frequently contributes to Spectrum's Journal Watch coverage, which highlights newsworthy studies published in IEEE journals.