25 May 2011—German scientists have set a world record for optical communications, transmitting 26 trillion bits of data per second—enough to download 700 DVDs in an instant—using just a single laser.

The work, which was published online Monday in Nature Photonics, should reduce the power consumption of communications systems and could lead the way toward the next standard for optical data transmission, says Juerg Leuthold, a professor at Karlsruhe Institute of Technology, in Germany, who led the work. "Twenty-six terabits is a lot of information," he says. However, other optics experts point out that multiple-laser systems still have the edge.

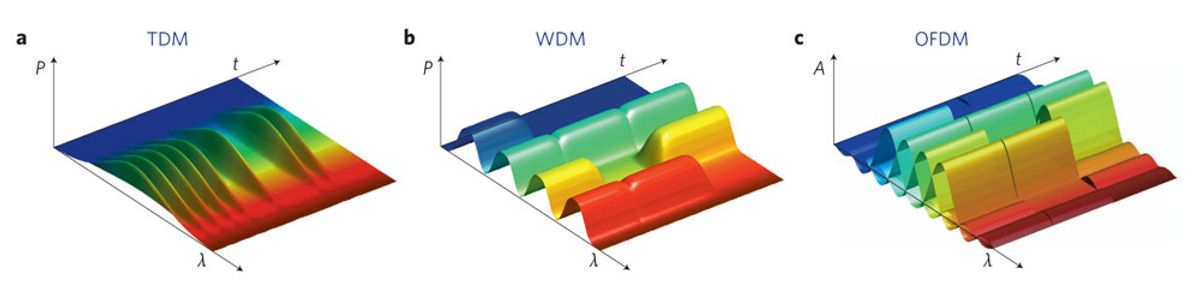

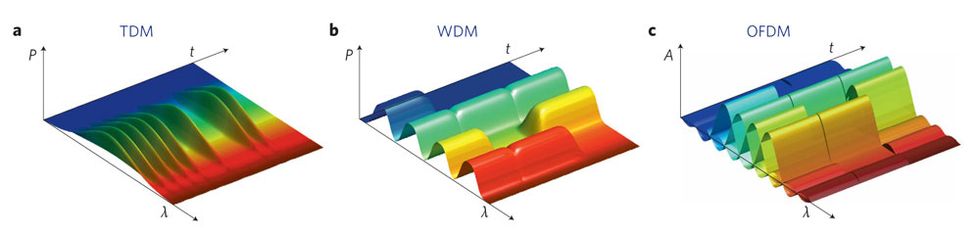

The Karlsruhe researchers built upon a common approach used to increase data rates in wireless communications, called orthogonal frequency-division multiplexing (OFDM). Compared with other schemes used in optical communication, OFDM has the potential to make efficient use of the available spectrum and to tolerate the dispersion of light in the optical fiber. The technique starts by encoding data onto carrier waves whose frequencies overlap quite a bit, then combining those waves to create a new waveform. For example, the process can combine four bits of data onto a single wave. Previous optical OFDM schemes used multiple lasers to produce the four overlapping wavelengths. "Now, instead of having four transmitters, you have one transmitter," Leuthold explains. The waves are combined and later separated through a mathematical process called the Fast Fourier Transform (FFT) and its inverse.
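To make the four-bit example concrete, here is a minimal sketch in Python of the general OFDM idea, not of the Karlsruhe team's actual system. The function names, the choice of one bit per carrier, and the +1/-1 amplitude mapping are all assumptions made for illustration. It uses a plain discrete Fourier transform, which gives the same result an FFT would compute more quickly.

```python
import cmath

def dft(values, inverse=False):
    """Plain O(N^2) discrete Fourier transform; an FFT computes the same result faster."""
    n = len(values)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(v * cmath.exp(sign * 2j * cmath.pi * k * m / n)
                    for m, v in enumerate(values))
        out.append(total / n if inverse else total)
    return out

def ofdm_modulate(bits):
    # Assumed mapping for illustration: bit 1 -> amplitude +1, bit 0 -> amplitude -1,
    # one bit per carrier.
    symbols = [1.0 if b else -1.0 for b in bits]
    # The inverse transform sums the overlapping carriers into a single waveform.
    return dft(symbols, inverse=True)

def ofdm_demodulate(waveform):
    # The forward transform separates the carriers again.
    symbols = dft(waveform)
    return [1 if s.real > 0 else 0 for s in symbols]

bits = [1, 0, 1, 1]
waveform = ofdm_modulate(bits)      # four complex samples carrying all four bits
recovered = ofdm_demodulate(waveform)
print(recovered)
```

Every sample of the combined waveform depends on all four bits, yet the forward transform recovers each bit cleanly because the carriers are orthogonal over the symbol period.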

At the receiver, the new waveform can be broken down into its components, and the data can be decoded. But at such high data rates, there’s a problem. "This is so awfully fast that there is no electronic receiver that could detect it," Leuthold says. To solve this issue, the team created an optical FFT device instead of an electronic one. A simple interferometer breaks the light signal into separate beams, then recombines them out of phase with one another. The difference in the original carrier waves causes the beams to combine to form a stronger signal in one spot or cancel each other out in another spot, creating a string of bits that mirrors the bits that were originally encoded. "The light interferes and performs the mathematical operation," says Leuthold.
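The interference step can be sketched numerically. The Python below models a single idealized delay interferometer with a delay of half a symbol period, which is an assumption chosen for illustration; the article does not give the details of the team's device, and the names and unit symbol period are hypothetical. In this simplified model, even-numbered carriers emerge entirely from one output port and odd-numbered carriers from the other, which is the basic sorting operation an FFT performs.

```python
import cmath

SYMBOL_PERIOD = 1.0  # T, in arbitrary units (assumed for illustration)

def carrier(k, t):
    """Complex amplitude of carrier k, with frequency k/T, at time t."""
    return cmath.exp(2j * cmath.pi * k * t / SYMBOL_PERIOD)

def delay_interferometer(signal, t, delay):
    """Split the signal, delay one copy, and recombine at two output ports."""
    direct, delayed = signal(t), signal(t - delay)
    constructive_port = (direct + delayed) / 2  # beams add in phase
    destructive_port = (direct - delayed) / 2   # beams add out of phase
    return constructive_port, destructive_port

def port_powers(k, t=0.3):
    """Optical power of carrier k at each port for a half-period delay."""
    plus, minus = delay_interferometer(lambda u: carrier(k, u), t, SYMBOL_PERIOD / 2)
    return round(abs(plus) ** 2, 6), round(abs(minus) ** 2, 6)

for k in range(4):
    print(k, port_powers(k))
```

Carriers 0 and 2 put all their power into the first port, and carriers 1 and 3 into the second. Cascading further stages with different delays would separate the carriers within each group, under the same idealized assumptions.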

The team transmitted their data over 50 kilometers of fiber. Leuthold says they didn’t have the equipment to test longer distances, but he’s hoping to do so in the future. (More advanced equipment should also improve the technique’s efficiency, he adds.) The farther a signal can travel in a network, the less need there is for expensive equipment to boost it.

Using multiple lasers, other groups have sent even more data down a single optical fiber and have done so for greater distances. NEC Laboratories, of Princeton, N.J., for instance, presented a paper at the annual Optical Fiber Communication Conference in March showing a rate of 101.7 terabits per second, also using OFDM. But that scheme required 370 lasers, each transmitting at 294 gigabits per second. Ting Wang, a researcher at NEC, says Leuthold’s work is well done and quite significant. But he argues that his own multilaser approach has better spectral efficiency, meaning the total number of useful bits is higher, and that it may in the long run be more practical. "We have a much higher capacity and efficiency," Wang says.
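A quick back-of-the-envelope check in Python relates the per-laser figure to the reported total. The variable names are illustrative. The article does not say what accounts for the gap between the two numbers; transmission overhead such as error-correction coding is a common explanation, but that is an assumption here.

```python
lasers = 370
rate_per_laser_gbps = 294     # per-laser rate quoted above
reported_total_tbps = 101.7   # total rate quoted above

# Raw aggregate if every laser's full rate counted toward the total.
raw_total_tbps = lasers * rate_per_laser_gbps / 1000

# Fraction of the raw aggregate not reflected in the reported figure.
gap_fraction = 1 - reported_total_tbps / raw_total_tbps

print(f"raw {raw_total_tbps:.2f} Tb/s, reported {reported_total_tbps} Tb/s, "
      f"gap {gap_fraction:.1%}")
```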

NEC researchers performed a trial with telecom carrier Verizon earlier this year in which they were able to reach rates of 1 Tb/s over 3560 km of fiber. Communications equipment makers are pushing toward so-called terabit Ethernet and hope to be transmitting at such speeds by the middle of the decade. Much of today’s equipment works at 10 or 40 Gb/s, and the IEEE ratified a 100 Gb/s standard last year.

Colja Schubert, head of the high-speed transmission group at the Fraunhofer Institute for Telecommunications, Heinrich Hertz Institute, in Berlin, says the Karlsruhe system is "remarkable." But while this is certainly the highest data capacity with a single laser to date, other technologies have shown higher overall capacities over long distances and higher spectral efficiency. Very dense wavelength-division multiplexing (WDM), which uses many closely spaced (but not overlapping) wavelengths of light, is also a candidate for terabit Ethernet, Schubert says. Comparing Leuthold’s single-laser approach "to very dense WDM [so-called Nyquist-WDM], there is no significant advantage or disadvantage in terms of the spectral efficiency, and therefore no increase in total capacity can be expected," Schubert says. "Optical OFDM might have advantages in terms of practical implementation, but this is currently under research."

About the Author

Neil Savage writes about nanotech, optoelectronics, and other technology from Lowell, Mass. In April 2011 he reported on a technique for building diodes inside optical fibers.