For quantum computing to ever fulfill its promise, it will have to deal with errors. That's been a real problem until now, because although scientists have come up with error correction codes, the quantum machines available couldn't make use of them. But researchers report today that they've created a small quantum computing array that for the first time performs with enough accuracy to allow for error correction—paving the way toward practical machines that could outperform ordinary computers.

Today's classical computers perform calculations using bits that can be either 1 or 0. Quantum computers get their potentially amazing ability to make many simultaneous calculations by using quantum bits, or qubits, which can exist in a superposition of 1 and 0 at the same time. But it hasn't been easy to build such systems, because qubits are fragile things and the calculations they perform are susceptible to errors. Error correction algorithms exist, but for a practical quantum computer to use them it must operate with an accuracy of 99 percent or more. The latest experimental system, detailed in this week's issue of the journal Nature, is the first of its kind to cross the crucial 99 percent accuracy threshold, opening the door to unprecedented error correction experiments on larger arrays of qubits.

"We made a significant advance in the fidelity that brought it to this important limit, and we did it in such a way that we know how we’re going to scale up to more and more qubits," says one of the prototype's creators, John Martinis, a professor of physics at the University of California, Santa Barbara.

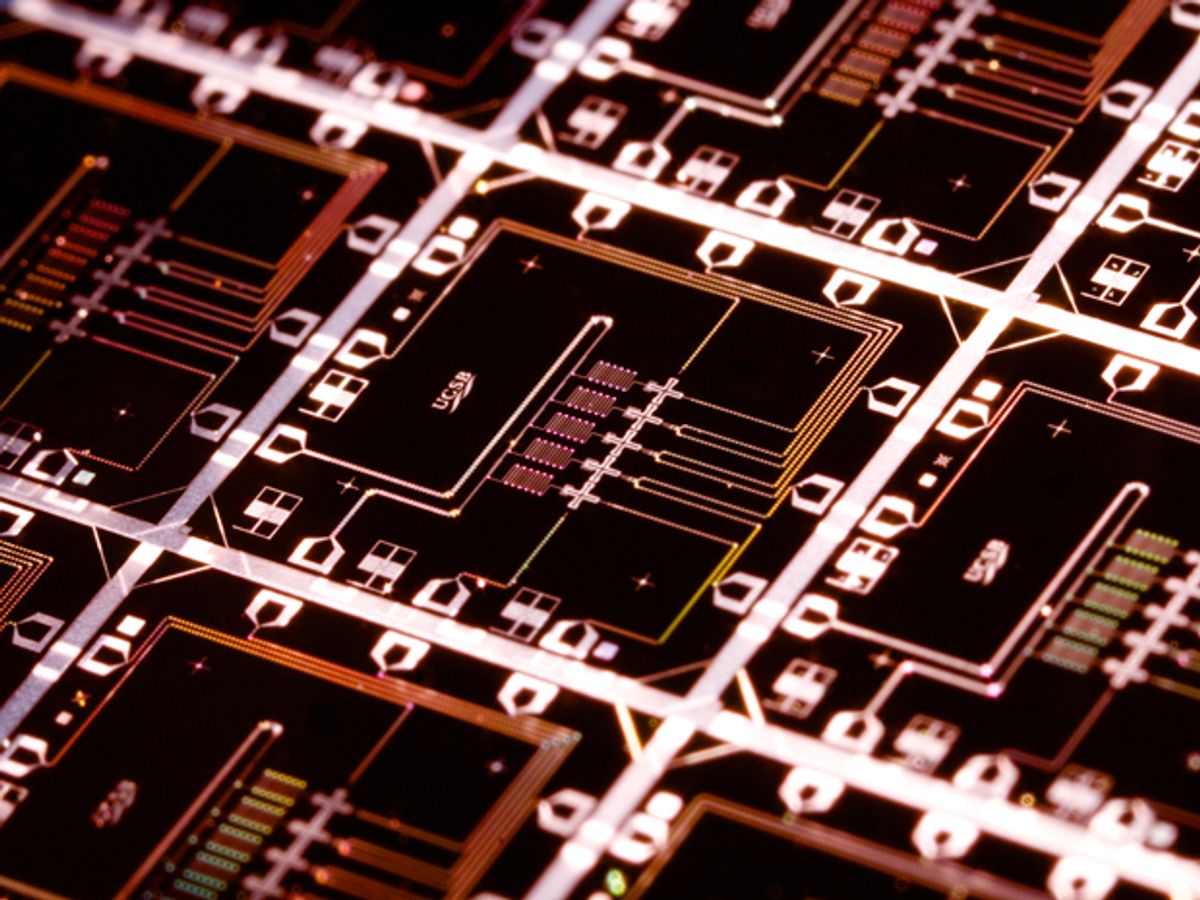

Martinis and his colleagues used superconducting quantum circuits that are just one of several possible designs for quantum computing systems. The qubits themselves are Josephson junctions—two layers of superconductor separated by a thin insulating layer.

By creating an arrangement of five qubits in a line, the researchers showed they could perform the logic operations at the heart of modern computing with an accuracy of 99.92 percent for a quantum logic gate involving one qubit and 99.4 percent for a quantum logic gate involving two qubits. The quantum computing arrangement, based on an approach known as "surface code" architecture, has an accuracy requirement of just 99 percent as opposed to a much more stringent 99.99 percent needed by many other quantum computing architectures.
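To put those percentages side by side, here is a minimal Python sketch that turns each reported accuracy into a per-gate error rate and checks it against the two thresholds quoted above. The numbers come from the article; the function names and the simple "error rate = 1 − accuracy" reading are assumptions made for illustration, not the researchers' own benchmarking method.

```python
# Gate accuracies (fidelities) reported in the article.
REPORTED_FIDELITIES = {
    "single-qubit gate": 0.9992,
    "two-qubit gate": 0.994,
}

# Accuracy requirements quoted in the article.
SURFACE_CODE_THRESHOLD = 0.99    # surface code architecture
STRICTER_THRESHOLD = 0.9999      # "many other" architectures


def error_rate(fidelity: float) -> float:
    """Per-gate error probability, read here simply as 1 - fidelity (an assumption)."""
    return 1.0 - fidelity


def meets_threshold(fidelity: float, threshold: float) -> bool:
    """True if the gate is at least as accurate as the threshold requires."""
    return fidelity >= threshold


def summarize() -> list:
    """Return (gate, error rate, passes surface code, passes stricter) rows."""
    return [
        (
            gate,
            error_rate(fidelity),
            meets_threshold(fidelity, SURFACE_CODE_THRESHOLD),
            meets_threshold(fidelity, STRICTER_THRESHOLD),
        )
        for gate, fidelity in REPORTED_FIDELITIES.items()
    ]


if __name__ == "__main__":
    for gate, err, ok_surface, ok_strict in summarize():
        print(f"{gate}: error rate {err:.2%}, "
              f"surface code ok={ok_surface}, stricter ok={ok_strict}")
```

Read this way, the single-qubit gate errs about 8 times in 10,000 operations and the two-qubit gate about 6 times in 1,000. Both clear the 99 percent bar, and neither would clear a 99.99 percent one.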

Such a linear array of qubits could pave the way for building a 2-D grid of qubits arranged in a checkerboard pattern. The pattern would include "white squares" representing data qubits for performing operations, as well as "black squares" representing measurement qubits that detect errors in the neighboring data qubits. Tough engineering challenges lie ahead. In particular, designers will have to figure out a way to keep neighboring qubits from interfering with one another. But the general idea has been proven in this latest experiment.
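The checkerboard idea can be sketched in a few lines of Python. This is a purely classical toy, not a simulation of the surface code: data "qubits" are ordinary bits, each measurement square simply reports the parity of its neighboring data squares, and the choice of which color sits in the top-left corner is arbitrary. All function names are hypothetical.

```python
from typing import Dict, List, Tuple

Coord = Tuple[int, int]


def is_data(row: int, col: int) -> bool:
    """'White squares' (data) sit where row + col is even; this choice is arbitrary."""
    return (row + col) % 2 == 0


def layout(rows: int, cols: int) -> List[str]:
    """Draw the grid with D for data squares and M for measurement squares."""
    return [
        "".join("D" if is_data(r, c) else "M" for c in range(cols))
        for r in range(rows)
    ]


def neighbors(row: int, col: int, rows: int, cols: int) -> List[Coord]:
    """Squares directly above, below, left and right that lie inside the grid."""
    candidates = [(row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1)]
    return [(r, c) for r, c in candidates if 0 <= r < rows and 0 <= c < cols]


def syndrome(data_bits: Dict[Coord, int], rows: int, cols: int) -> Dict[Coord, int]:
    """For each measurement square, the parity (0 or 1) of its neighboring data bits."""
    return {
        (r, c): sum(data_bits[n] for n in neighbors(r, c, rows, cols)) % 2
        for r in range(rows)
        for c in range(cols)
        if not is_data(r, c)
    }


def flagged_after_flip(flipped: Coord, rows: int, cols: int) -> List[Coord]:
    """Start with all data bits at 0, flip one, and list the measurement squares that notice."""
    data_bits = {
        (r, c): 0 for r in range(rows) for c in range(cols) if is_data(r, c)
    }
    data_bits[flipped] = 1
    return sorted(pos for pos, bit in syndrome(data_bits, rows, cols).items() if bit)


if __name__ == "__main__":
    print("\n".join(layout(5, 5)))
    print(flagged_after_flip((2, 2), 5, 5))
```

In this toy version, flipping the data bit at the center of a 5-by-5 grid trips exactly the four measurement squares around it, which is the sense in which the "black squares" detect errors in their neighbors.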

"The physics of coupling and control is not going to change," says Rami Barends, a postdoctoral fellow in physics at UCSB and lead author of the Nature paper. "But what you'll have to come up with is the wiring and control done in a 2-D system without hampering the fidelity. There will be an engineering challenge."

Success in boosting the accuracy of superconducting qubits has also made the technology a serious rival to other quantum computer architectures. Other labs have been developing quantum computing based on an "ion trap" architecture that uses electromagnetic fields to control charged atoms, or ions, suspended in free space. But superconducting qubits represent a solid-state architecture, based on materials such as aluminum and sapphire, that seems more easily compatible with existing methods of manufacturing computer chips. That could make the process of scaling up to larger quantum computing arrays more straightforward.

(Both superconducting qubits and ion traps are quantum computing architectures for so-called universal machines capable of the full range of logic functions, as opposed to the quantum annealing machines made by the Canadian company D-Wave, which focus only on solving optimization problems.)

The next step for the UCSB team involves running rigorous error correction experiments, a huge first for the quantum computing field. Previous error correction experiments have shown how researchers could correct big errors deliberately injected into quantum computing arrays. The UCSB researchers want to show how to correct for natural errors that arise in the course of quantum computing operations. A combination of improved accuracy and rigorous error correction could eventually realize the dream of practical quantum computers.

"We have all the requirements for starting to tackle error correction for the first time," says Julian Kelly, a graduate student in physics at UCSB. "People have gone through the motions before, but there has never been a practical way of reducing errors in the system."

Editor's Note: The original story said the new experiment had achieved an accuracy of 99.2 percent for a quantum logic gate involving one qubit. This has been changed to reflect the actual single-qubit accuracy of 99.92 percent.

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.