Building a useful form of augmented reality into comfortable, reasonably stylish eyeglasses may seem like a simple task, but it is going to need significant technology advances on many fronts, including displays, graphics, gesture tracking, and low-power processor design.

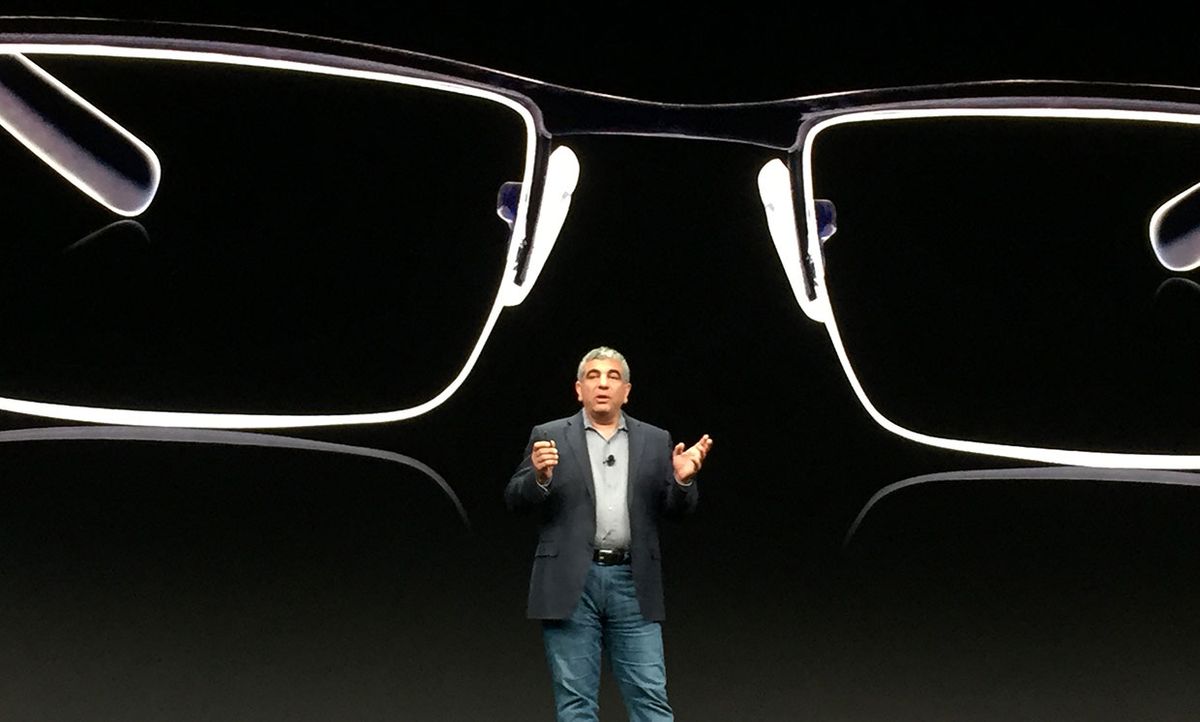

That was the message of Sha Rabii, Facebook’s head of silicon and technology engineering. Rabii, speaking at Arm TechCon 2019 in San Jose, Calif., on Tuesday, described a future with AR glasses that enable wearers to see at night, improve overall eyesight, translate signs on the fly, prompt wearers with the names of people they meet, create shared whiteboards, encourage healthy food choices, and allow selective hearing in crowded rooms. This type of AR will be, he said, “an assistant, connected to the Internet, sitting on your shoulders, and feeding you useful information to your ears and eyes when you need it.”

This vision, he indicated, isn’t arriving anytime soon, but it is achievable. The biggest roadblock, he said, is lowering the energy consumption of the hardware, along with reducing the heat that today’s processors emit.

“The low-power design community is uniquely positioned to take the mantle and create the tools that let us realize this vision,” he said.

Rabii had a couple of suggestions for approaches that chip developers could take. For one, he said, chips have to be better tailored to their intended uses.

“Our use case,” he said, speaking about AR glasses, “is moderate performance but high power efficiency, form factors that support stylish and lightweight designs, [and chip designs that are] mindful of temperature for user comfort.”

Designers also need to think more realistically about how chips use energy. Energy consumption, he said, is mostly “determined by memory access and data movement. Data transfer is far more expensive than compute.” For example, he indicated, “fetching 1 byte from DRAM takes 12,000 times more energy than performing an 8-bit addition; sending 1 byte wirelessly takes 300,000 times more energy.”
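To get a feel for what those ratios imply, here is a rough back-of-the-envelope sketch. Only the two ratios (12,000 and 300,000) come from Rabii's talk; the function name `relative_energy` and the workload sizes are hypothetical, chosen purely for illustration, and energy is counted in units of one 8-bit addition rather than in joules.

```python
# Relative energy costs, measured in units of one 8-bit addition.
# The two ratios below are the figures quoted in the talk; the
# workload sizes further down are made-up illustrative numbers.
ADD_8BIT = 1
DRAM_FETCH_PER_BYTE = 12_000
WIRELESS_SEND_PER_BYTE = 300_000


def relative_energy(adds=0, dram_bytes=0, wireless_bytes=0):
    """Total energy of a workload, in 8-bit-addition equivalents."""
    return (
        adds * ADD_8BIT
        + dram_bytes * DRAM_FETCH_PER_BYTE
        + wireless_bytes * WIRELESS_SEND_PER_BYTE
    )


# Hypothetical option A: fetch 1,000 bytes from DRAM and do a million
# additions on the device.
local = relative_energy(adds=1_000_000, dram_bytes=1_000)

# Hypothetical option B: skip the computation and send the same
# 1,000 bytes over a wireless link instead.
offloaded = relative_energy(wireless_bytes=1_000)

print(f"local:     {local:,} add-equivalents")
print(f"offloaded: {offloaded:,} add-equivalents")
print(f"offloading costs about {offloaded / local:.0f}x more energy")
```

Under these assumed numbers, even a million additions cost far less than the DRAM traffic that feeds them, and transmitting the raw bytes costs more than fetching and processing them locally.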

Hardware designers need to keep these differences in mind in the way they implement AI, Rabii said. “The prevalent model is to have a monolithic accelerator as a discrete compute element, with all AI workloads transferred to this element,” he said. “But this is a data-transfer-intensive architecture, which has implications for power consumption.”

Better, he suggested, would be to “treat AI as a deeply embedded function and distribute it across all the compute” in a system. This type of architecture, he said, brings compute to data, so data doesn’t have to move around as much, dramatically saving power.

There are other ways AI can be designed to use less energy, Rabii said. “Not every AI function needs the same precision,” he said. “A large percentage of the computational effort is required for the last percents of accuracy,” so breaking up workloads and reducing precision when possible can make AI systems far more efficient.
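One common way to trade precision for efficiency is to store values as 8-bit integers instead of 32-bit floats. The sketch below shows a simple symmetric quantization scheme; it is a generic illustration, not a description of anything Facebook has said it uses, and the helper names `quantize_int8` and `dequantize` and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero input, where any scale would do.
    scale = max_abs / 127 if max_abs else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.5, -1.27, 0.03, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("largest rounding error:", max_error)
```

Each code fits in one byte rather than the four bytes of a 32-bit float, so a quarter as much data has to move, at the cost of a rounding error of at most half the scale factor per value.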

That’s what designers can do now. In the future, he said, Facebook is looking forward to improvements in semiconductor process technologies that will lead to better performance per watt, as well as specialized accelerators that focus on specific types of AI for higher performance and better energy efficiency. Some of those advances, he hopes, will come from Arm Holdings and the Arm ecosystem.

Tekla S. Perry is a senior editor at IEEE Spectrum. Based in Palo Alto, Calif., she's been covering the people, companies, and technology that make Silicon Valley a special place for more than 40 years. An IEEE member, she holds a bachelor's degree in journalism from Michigan State University.