The world has a data storage problem. We’re generating more bits and bytes than ever, from thousands of phone photos to security camera footage to immersive virtual reality experiences. But there’s only so much physical space for server farms, and meanwhile experts are concerned Moore’s law will fall off a cliff as technical innovations outpace processor and storage capacity.

Big Data will only keep getting bigger. So researchers have been working for years to advance a radical approach called DNA data storage: Digital data that would typically be stored as 0s and 1s on a hard drive is instead encoded as the four bases that make up DNA sequences—A, C, G, and T—on synthetic DNA strands expressly created for the purpose of deep archival storage. (Read Spectrum’s Eliza Strickland for a deeper dive.) Massive amounts of data that require loads of disk drives could be stored instead in a smear of DNA, chucked into a fridge, and decoded whenever. Yet DNA data storage has to date been relegated to boutique, proof-of-concept applications like time capsules, and the cost is tremendous.
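To make the encoding idea concrete, here is a minimal sketch of how bytes could be mapped onto the four bases. The two-bits-per-base table and the function names `bytes_to_dna` and `dna_to_bytes` are assumptions chosen for illustration, not the scheme used by GTRI or any other group mentioned here; practical encodings typically add error correction and avoid long runs of the same base.

```python
# Hypothetical 2-bits-per-base mapping: 00 -> A, 01 -> C, 10 -> G, 11 -> T.
# This table is an illustrative assumption, not taken from the article.
BASES = "ACGT"


def bytes_to_dna(data: bytes) -> str:
    """Encode each byte as four bases, most significant bit pair first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def dna_to_bytes(strand: str) -> bytes:
    """Decode a strand produced by bytes_to_dna back into bytes."""
    if len(strand) % 4 != 0:
        raise ValueError("strand length must be a multiple of 4 bases")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            # BASES.index raises ValueError on anything other than A, C, G, T
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


encoded = bytes_to_dna(b"Hi")
decoded = dna_to_bytes(encoded)
```

Under this toy mapping every byte costs four bases, so a couple hundred megabytes would already require on the order of a billion bases before any redundancy is added.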

But for DNA to make a difference in the storage problem, it needs to scale up, while the cost must come down. New research led by the Georgia Tech Research Institute (GTRI) aims to achieve both. Scientists announced they’ve made significant advances toward creating a chip that can grow DNA strands in a tightly packed, ultra-dense format for large storage capacity at very low cost.


“The great promise of DNA is that it’s a perfect application for deep archival storage; we know this from biology, because people have been able to sequence woolly mammoths,” Nicholas Guise, GTRI senior research scientist, tells Spectrum. “The challenge is the cost and time involved to synthesize DNA for storage applications is very high. It’s cost-prohibitive to do anything more than a couple hundred megabytes, which would take a full day to print [the DNA] and cost a few thousand dollars.”

“So you can see that’s pretty different than the cost of a thumb drive,” he says.

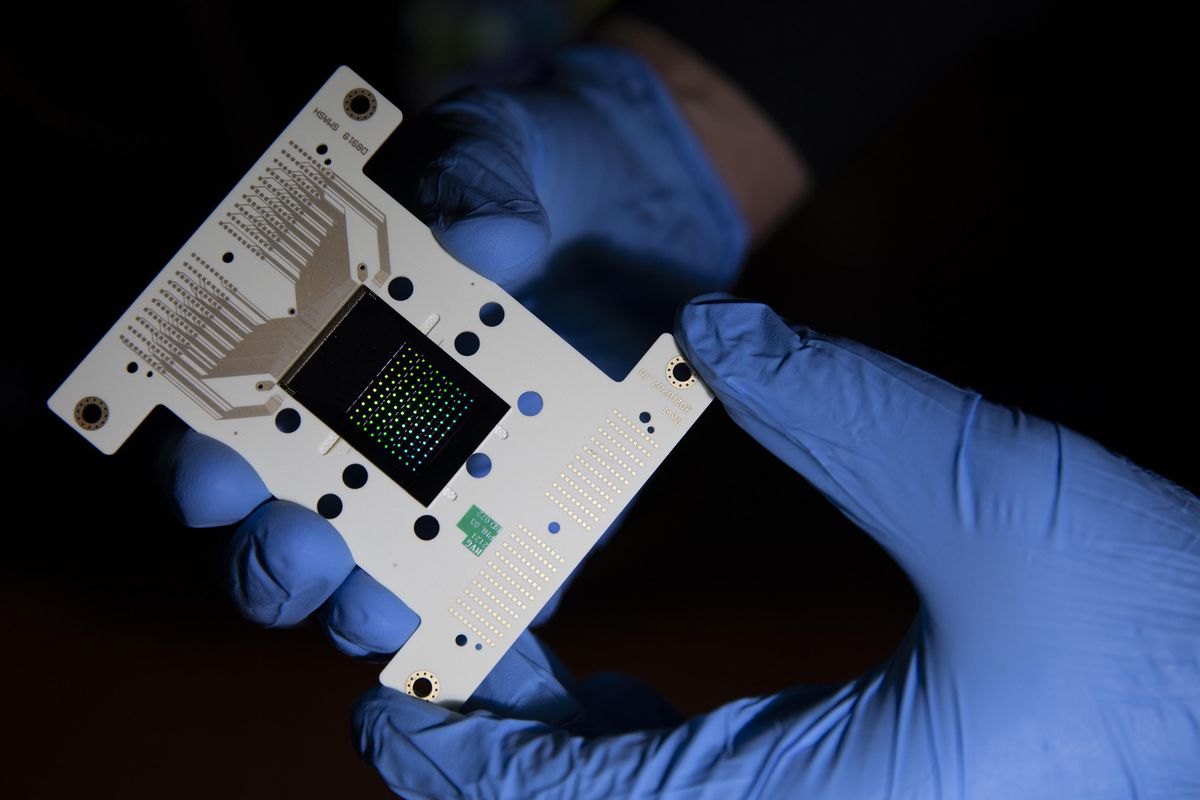

But it may not be that way forever, thanks to work like GTRI’s new project. The team developed a prototype one-inch square chip that’s outfitted with 10 banks of “microwells” that are each a few hundred nanometers deep, where DNA strands are grown in massive parallel. Each microwell acts as a little electrochemical reactor. The team has managed to optimize the geometry of the microwells to fit more of them, with the ultimate goal of squeezing millions of DNA sequences across the chip.

Guise explains: “The way synthetic DNA is made now is there is a chemical process where you introduce the right agents, one acid at a time, to bring in the base you want: A, C, G, or T to add to that string of DNA and you do many cycles of these reactions. It’s like a modified inkjet printer, where the printer head travels around the chip, putting the G down here and the C down there, doot doot doot.”

The new chips, by contrast, are designed to activate the synthesis locally through electrochemistry. This will be the next step in the project: The chips will at some point include a second layer of electronic controls, made in your standard complementary metal-oxide-semiconductor (CMOS), that will initiate the chemical process of building each strand of DNA one base at a time.

“So instead of having this inkjet print it up, we can just send electrical signals to put on the chip,” Guise says. “Just by applying a little voltage to some electrodes, it can generate a little bit of acid and opens up the DNA strand in that location so we can add the base there.”

That would be much cheaper than the current process. Electrochemistry has been used in this way before, Guise says, but what’s new is trying to push the density of the DNA as high as it can go, and ultimately adding electrical control. (Guise estimates this next step could happen possibly within a year.)

GTRI works with biotech companies Twist Bioscience and Roswell Biotechnologies on these projects, with the aim of creating a proof-of-concept for commercial, scalable DNA data storage that could eventually reach exabyte capacity. The work is part of the Scalable Molecular Archival Software and Hardware (SMASH) project, a GTRI-led collaboration headed by Guise and supported by the Intelligence Advanced Research Projects Activity (IARPA)’s Molecular Information Storage program.

In another layer of the work, GTRI also collaborates with the University of Washington to smooth the comparatively high error rate that DNA storage can introduce—sort of fat-finger mistakes like two As instead of one, or a C instead of a G. Microsoft recently published its own work with the University of Washington, which like GTRI is trying to increase the density of DNA on a chip.

“They’ve shown synthesis features spaced 2 microns apart, while ours are designed to be 1 micron, but to their credit they’ve done more to show you can sequence that closely without errors,” Guise says.
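The difference between 2-micron and 1-micron spacing is larger than it sounds, because density scales with the square of the pitch. The rough calculation below assumes, purely for illustration, that synthesis sites tile an entire one-inch square in a simple square grid; the article does not say the chip is laid out this way, so the totals are upper bounds rather than reported figures. The helper name `max_sites` is hypothetical.

```python
INCH_IN_MICRONS = 25_400  # 1 inch = 25.4 mm = 25,400 microns


def max_sites(chip_side_um: int, pitch_um: int) -> int:
    """Upper bound on sites in a square grid with the given center-to-center pitch.

    Assumes the whole chip surface is usable, which is an idealization.
    """
    per_side = chip_side_um // pitch_um
    return per_side * per_side


sites_2um = max_sites(INCH_IN_MICRONS, 2)  # 12,700 x 12,700 grid
sites_1um = max_sites(INCH_IN_MICRONS, 1)  # 25,400 x 25,400 grid
ratio = sites_1um / sites_2um              # halving the pitch quadruples the count
```

Halving the spacing fits four times as many features into the same area, which is why both groups are pushing on pitch.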

Both projects are still proof-of-concept level experiments, showing what’s theoretically possible on a smaller scale.

“What they haven’t done yet, and we haven’t either, is showing you can generate a large amount of data on a chip like this,” Guise says. “The data on these chips is still small, but it has the potential to scale up much, much higher.”

Julianne Pepitone is a freelance journalist who reports via text, video, and television. She spent years on staff at CNN Business and then at NBC News, covering consumer tech, cybersecurity, and business. Now a freelancer, she works with an eclectic roster of clients. Beyond Spectrum, CNN, and NBC, her bylines can also be found at HGTV Magazine, Memorial Sloan Kettering, NYMag.com, Glassdoor, Popular Mechanics, Cosmopolitan, Town & Country, Thrillist, MagnifyMoney, The Village Voice, and more.