David Forney: The Man Who Launched a Million Modems

The 2016 IEEE Medal of Honor recipient turned information theory into practice

It can sometimes seem as though there's a gaping chasm between theory and reality. But G. David Forney Jr., the recipient of this year's IEEE Medal of Honor, masterfully straddles both realms, his colleagues say. A key figure in the development of the high-speed modem, a device that opened up the Internet and all its associated world-changing technologies, Forney has balanced the practical and the theoretical throughout his career. Over the years, he has not only made critical contributions to communications and information theory but also put some of this recondite mathematical theory into practice. And as a result, he can claim a key role in the greatest communications revolution in modern history.

“He's one of the people who gets to the heart of abstract subjects very quickly," says Thomas Kailath, an emeritus professor of engineering at Stanford and the 2007 IEEE Medal of Honor recipient. “But uniquely, when needed, he also designed and built circuits, wrote code, and got things working. Everything Dave does, he does well."

And yet, as an underclassman at Princeton in the late 1950s, Forney wasn't necessarily set on a career in engineering. He did ultimately decide to pursue a bachelor of science in engineering, but not out of any burning desire to invent or design.

“I thought, 'I'll keep my options open,' but without any great intention to become an engineer," Forney recalls, relaxing in the sunny sitting area off the kitchen of his Cambridge, Mass., home one December afternoon last year. But an elective course in thermodynamics taught by John Archibald Wheeler stirred a sense of discovery in him.

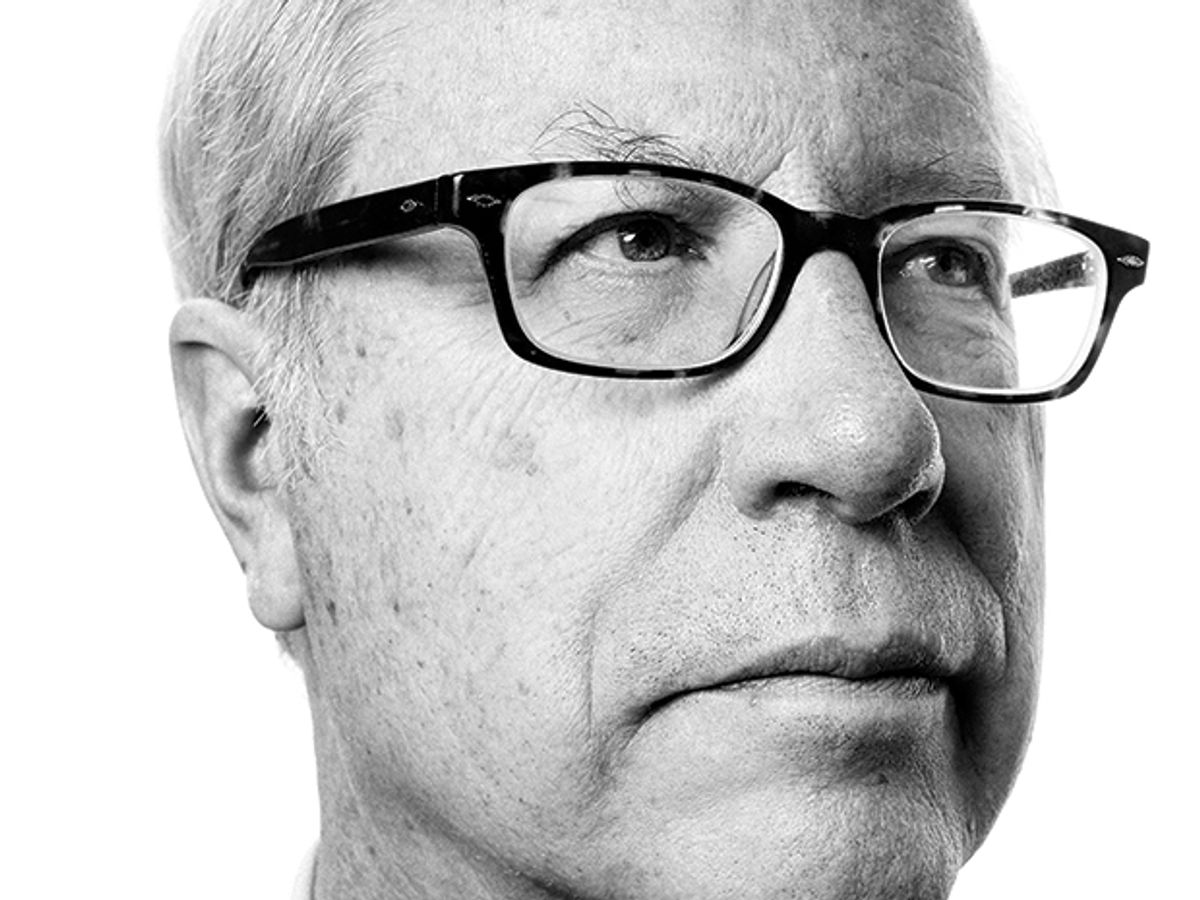

G. David Forney Jr.

Date of birth: 6 March 1940

Birthplace: New York City

First job: Junior counselor, Camp Agawam, Maine, 1955

First job in science or technology: Summer intern, Bell Laboratories, Murray Hill, N.J., 1960

Most recent book read: Between the World and Me, Ta-Nehisi Coates

Favorite music: The Beatles

Computer most frequently used: MacBook Pro

Favorite restaurant: T.W. Food, Cambridge, Mass.

Honors (partial list): Fellow, IEEE, 1973; Member, National Academy of Engineering (U.S.), 1983; IEEE Edison Medal, 1992; IEEE Information Theory Society Claude E. Shannon Award, 1995; Marconi Prize, 1997; Fellow, American Academy of Arts and Sciences, 1998; Member, National Academy of Sciences (U.S.), 2003

“I really liked his approach," Forney says of the legendary physicist's down-to-earth teaching style. “It was much more of an engineering course than a physics course. And he [assigned] a term paper instead of a final exam."

For the paper, Forney decided to read Léon Brillouin's 1956 book, Science and Information Theory. The book tackled thermodynamics in the context of information theory, then a fledgling field, founded about a decade earlier with a groundbreaking paper by Claude Shannon. In that 1948 paper, “A Mathematical Theory of Communication," Shannon laid out the mathematical foundation for the transmission of information (the centenary of his birth is being celebrated this year).

Forney says he was struck by Brillouin's resolution of the problem of Maxwell's demon, a thought experiment in which energy appears to be created for free by sorting molecules by their speed. Brillouin noted that every physical system contains information, and extracting that information—in the case of Maxwell's demon, the speed of particles—always costs energy, enough to precisely satisfy the laws of thermodynamics. “Information isn't free; it comes at a cost," Forney says, in summary. “Probably I could poke holes in that now. But certainly as an undergraduate this was all very interesting."

In 1961 he carried this interest to graduate school at MIT, where he found a whirlwind of research activity. Shannon himself had recently arrived from Bell Labs, and a research group was trying to extend his work and find practical uses for it.

In a master's thesis on information theory and quantum mechanics, and a doctoral dissertation on error-correcting codes, Forney displayed a bloodhound's nose for finding the right problems and the right questions to ask about those problems. The 1990 IEEE Medal of Honor recipient, Robert Gallager, a young faculty member in the mid-1960s and now an emeritus professor at MIT's Research Laboratory of Electronics, singles out Forney's doctoral thesis as a leap forward in the field.

At that time, digital technology was taking off, and researchers were hunting for coding schemes—ways of transforming those 1s and 0s into a form that could be carried from place to place with little power and few errors. In his celebrated 1948 paper, Shannon had already worked out the ultimate limit to such efficiency, a maximum achievable error-free data rate for any communications channel. But reaching that limit was easier said than done.
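For a sense of scale, the limit has a well-known closed form for one standard case, a band-limited channel with Gaussian noise: capacity equals bandwidth times log2(1 + signal-to-noise ratio). The sketch below evaluates it; the 3,000-hertz bandwidth and 30-decibel signal-to-noise ratio are assumed, illustrative figures for a voice-grade phone line, not numbers from Forney or from this article.

```python
import math

def shannon_capacity_bps(bandwidth_hz, snr_db):
    """Shannon capacity of a band-limited Gaussian-noise channel, in bits per second."""
    snr_linear = 10 ** (snr_db / 10)  # convert decibels to a plain power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)

# Assumed, illustrative values for a voice-grade line (not taken from the article).
capacity = shannon_capacity_bps(bandwidth_hz=3000, snr_db=30)
print(f"{capacity:,.0f} bits per second")
```

Under those assumptions the ceiling comes out just under 30,000 bits per second, which gives some feel for how much headroom early modems left unused.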

Disturbances during transmission will flip bits at random. To tackle errors, Shannon proposed adding redundant bits to a sequence of data before transmission to create an encoded packet. The longer the packet, the less likely it would be corrupted to look like another potential sequence. This approach could push transmission to its limits, but it posed a practical challenge at the decoding stage. The straightforward, brute-force approach would compare the incoming sequence with every possible transmitted sequence to find the most likely one. This process could work for relatively short packets, Forney says. But longer packets, which would be needed to obtain very low error rates, would quickly exhaust the computational power of a decoder, even with today's technology.
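The brute-force approach can be sketched in a few lines. This is a toy under stated assumptions, not any decoder Forney built: the code simply repeats each data bit three times, and "most likely" is taken to mean "differs from the received sequence in the fewest positions," which holds when each bit is flipped independently with probability below one-half. The function names are hypothetical.

```python
from itertools import product

def encode(data):
    """Toy code (an assumption for illustration): send each data bit three times."""
    return tuple(bit for d in data for bit in (d, d, d))

def hamming_distance(a, b):
    """Count the positions where two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

def brute_force_decode(received, k):
    """Try all 2**k possible k-bit messages and keep the closest match."""
    candidates = product((0, 1), repeat=k)
    return min(candidates, key=lambda data: hamming_distance(encode(data), received))

sent = encode((1, 0, 1))
received = list(sent)
received[1] ^= 1  # the channel flips one bit
decoded = brute_force_decode(received, k=3)
print(decoded)
```

The search visits 2**k candidates, so every added data bit doubles the work. A 3-bit message means 8 comparisons; a 100-bit message would mean about 10**30.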

Researchers proposed various coding schemes to try to achieve data rates close to the Shannon limit with low error rates and reasonable decoding complexity. But there was no single code that could do it all.

Forney had another idea. Why not break the problem down and use multiple, complementary codes to encode and decode data, one operating on the outcome of the other? A simple inner code operating directly on the input and output of a communications channel could achieve a moderate error rate at data rates near Shannon's limit. An outer code, used before data enters the inner code and after exiting it, could drive error rates down further using a powerful but less computationally complex algorithm.

Forney showed that this approach, dubbed concatenation, could achieve a much better trade-off between data rates and computational complexity, and that it would in principle let existing modems transmit and receive all the way up to Shannon's limit. “Today, almost every coding scheme for transmission is in some form a concatenated scheme," says colleague Gottfried Ungerboeck. “You can transmit information faster and more reliably with the same power and bandwidth."
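A minimal sketch of the two-stage idea follows. The particular codes are assumptions chosen for brevity, not the ones in Forney's thesis: the inner code repeats each bit three times and is decoded by majority vote, and the outer code is the classic Hamming (7,4) code, which corrects any single wrong bit in a block of seven.

```python
def hamming74_encode(d):
    """Outer code: 4 data bits -> 7 bits, with parity bits at positions 1, 2, 4."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4
    p2 = d1 ^ d3 ^ d4
    p4 = d2 ^ d3 ^ d4
    return [p1, p2, d1, p4, d2, d3, d4]

def hamming74_decode(c):
    """Correct up to one flipped bit using the syndrome, then extract the data."""
    c = list(c)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s4  # 0 means no error detected
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

def repeat3_encode(bits):
    """Inner code: send every bit three times."""
    return [b for bit in bits for b in (bit, bit, bit)]

def repeat3_decode(bits):
    """Inner decoder: majority vote over each group of three."""
    return [int(sum(bits[i:i + 3]) >= 2) for i in range(0, len(bits), 3)]

def concat_encode(data):
    return repeat3_encode(hamming74_encode(data))  # outer first, then inner

def concat_decode(received):
    return hamming74_decode(repeat3_decode(received))  # inner first, then outer

data = [1, 0, 1, 1]
channel_bits = concat_encode(data)
channel_bits[0] ^= 1   # two flips in the same group of three:
channel_bits[1] ^= 1   # the inner majority vote gets this group wrong
channel_bits[10] ^= 1  # one flip in another group: the inner code fixes it
print(concat_decode(channel_bits))
```

Here the inner decoder cleans up the isolated error but is fooled by the double error, and the outer decoder catches the one mistake that slips through. Neither decoder has to search over whole messages, which is the point of splitting the job.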

When Forney came out of graduate school in 1965, he recalls, information theory was a field brimming with ideas and yet-to-be-realized applications. Any such IT field today might have launched a dozen startups, with angel investors hovering to pounce on possible secondary spin-off opportunities. But at the time, startups weren't really part of the culture, Forney says. Smart and capable grads who didn't intend to stay in academia typically sought out big companies.

Forney submitted applications to some of the usual suspects—IBM and Bell Labs. But on the advice of Gallager, Forney also applied to the recently formed Codex Corp. The Massachusetts-based company gave Forney the lowest offer. But he was sold. “They were in business to try to make information theory practical, to make coding practical. Nobody else was doing that," he says.

Forney became the 13th employee in the company, which would be bought by Motorola in 1977 and ultimately employ thousands. One of his first projects was a communications contract for NASA, creating coding and decoding algorithms for some of the agency's Pioneer spacecraft.

But the project that put Codex—and Forney—on the map was a 9,600-bit-per-second modem that became the basis of the company's commercial success. The company had devised the first such modem in 1968, Forney says, and it sold for more than US $20,000 to big firms with international data networks, such as banks and airlines. But, like its competitors, the modem turned out to be unreliable owing to a previously unrecognized problem with the telephone networks that added “phase jitter"—random variation in the phase of the signal-carrying waves traveling across them.

Following up on a suggestion made by Gallager, Forney designed a family of modems that used a modulation scheme called quadrature amplitude modulation. This approach, which could better handle phase shifts, produced a more reliable and successful modem. The design eventually became the basis of the international V.29 9,600-bit-per-second modem standard.

But this early practical success didn't lure Forney away from the fundamentals. After his modem work in 1970 and 1971, he spent a sabbatical year at Stanford. And when he returned, he set out to write up some thoughts he'd had on how an algorithm proposed in 1967 by Andrew Viterbi might be applied to signal decoding.

The result was a landmark 1973 paper in the Proceedings of the IEEE that popularized the Viterbi algorithm by introducing a visualization technique called the “trellis diagram." The algorithm can be used to recover data from a patchy or noisy signal. Today, it is used in an extraordinary range of disparate technologies, including modems, wireless communications, and voice and handwriting recognition, as well as DNA sequencing.

To explain how the Viterbi algorithm works, Forney gives a handwriting recognition example. Reading in the letters for the word “hand," the computer might initially determine that the second letter looks more like a q than an a. But a recognizer running the Viterbi algorithm will also factor in the fact that “hand" is a much more likely English word than “hqnd." “It finds the most likely sequences, taking into account both raw likelihood and sequence constraints," Forney says.
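Forney's handwriting example can be turned into a small sketch. All of the numbers below are made up for illustration: `observations` holds hypothetical per-letter scores from a recognizer (with q narrowly beating a in the second position), and `transition` holds hypothetical probabilities that one letter follows another in English. Unlisted letter pairs get a small default value.

```python
import math

def viterbi(observations, transition, default=1e-4):
    """Return the most likely letter sequence.

    observations: list of dicts mapping candidate letter -> raw likelihood
    transition:   dict mapping (previous, current) letter pairs -> probability
    """
    # best[letter] = (log-score of the best path ending in letter, that path)
    best = {c: (math.log(p), c) for c, p in observations[0].items()}
    for obs in observations[1:]:
        new_best = {}
        for cur, p in obs.items():
            # Keep only the best way of arriving at `cur`; discard the rest.
            score, path = max(
                (prev_score + math.log(transition.get((prev, cur), default)), prev_path)
                for prev, (prev_score, prev_path) in best.items()
            )
            new_best[cur] = (score + math.log(p), path + cur)
        best = new_best
    return max(best.values())[1]

# Hypothetical recognizer output: 'q' looks slightly better than 'a' in slot two.
observations = [
    {"h": 0.9, "b": 0.1},
    {"q": 0.6, "a": 0.4},
    {"n": 0.9, "u": 0.1},
    {"d": 0.9, "o": 0.1},
]
# Hypothetical letter-pair probabilities; anything unlisted is treated as rare.
transition = {("h", "a"): 0.2, ("a", "n"): 0.3, ("n", "d"): 0.3}

letter_by_letter = "".join(max(obs, key=obs.get) for obs in observations)
best_sequence = viterbi(observations, transition)
print(letter_by_letter, best_sequence)
```

Picking the best-looking letter in each slot yields "hqnd," while the Viterbi search returns "hand." Because only one surviving path is kept per candidate letter at each step, the work grows in proportion to the length of the word rather than with the number of possible words.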

Viterbi-algorithm decision trees can be plotted in a latticelike trellis diagram, an approach Forney and his colleagues say is much simpler than writing out formulas and logical if-then statements. Trellis diagrams “laid things out graphically, which appeals to people more than just a collection of equations [does]," says Stanford's Kailath. “They provided a tool for engineers to appreciate what the Viterbi algorithm could do and make extensions of it."

From 1975 to 1985, Forney served as an R&D executive for Codex and then, after the company's acquisition, for Motorola. In the 1980s, Forney was inspired by trellis-coded modulation, a new signaling scheme developed by Ungerboeck that was rapidly adopted in modems, and he was drawn back into research. In the decades since, he has worked extensively on codes, with an eye toward continuing to raise the efficiency of data transmission. Like his work on trellis diagrams, a number of his papers reintroduced key concepts that other researchers could then apply.

Forney retired from Motorola in 1999. He has been an adjunct professor in the department of electrical engineering and computer science and the Laboratory for Information and Decision Systems at MIT since 1996.

Regarding his contributions, Forney says modestly, “I'm just the guy who comes along at the end of the circus parade." After a discovery has been made, he'll add to it, he says. In a sentence he sums up these insights: “You know, the right mathematical language to talk about this invention is this."

Filling out the framework around a new theory or algorithm might not be the kind of work that garners headlines, but it's still critical to progress, Gallager says. “Researchers 'keep score' by counting inventions and weighting them by their popularity," he says, but “what makes a research field grow and evolve is the context, relations, and connection to reality." Forney has created this “context in the information theory and communication technology fields to a greater extent than almost anyone else," Gallager says.

And in the ever-evolving world of engineering, the ability to draw from theory to push the limits of what's practical is one skill that will never go out of style.

This article appears in the May 2016 print issue as "Modem Maestro."
