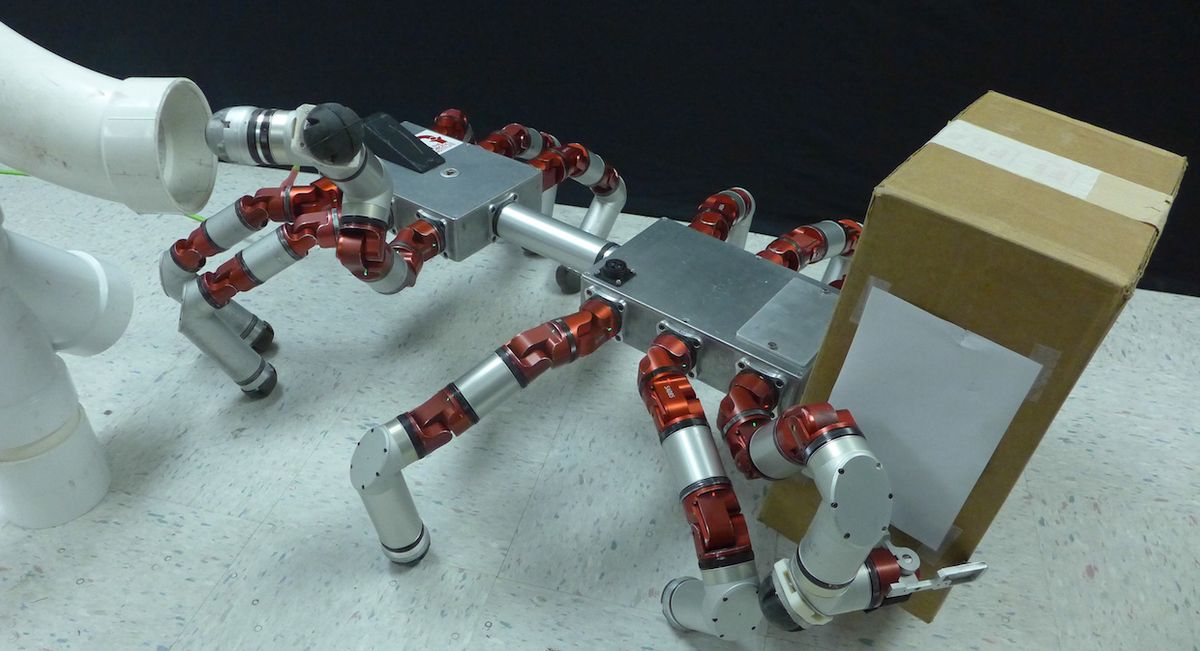

Robots that can be physically reconfigured to do lots of different things are, in theory, a great way to maximize versatility while saving time and effort. In theory. The idea is that you could have a robot with a bunch of different limbs that would be arms or legs depending on what worked best at the time, but in practice, this means coming up with entirely new gaits along with a way to transition from the old gait to the new one. You could, if you had a lot of time to kill and nothing better to do, pre-compute every possible combination of gaits and transitions, but who would want to do that when you could instead “create new gaits online to enable rapid deployment minutes after reconfiguration”? Okay, yeah, that may not sound super exciting, but it means you can teach a dodecapod robot to transition into a nonapod robot that can carry stuff with two arms while using a third to point a camera.

There are plenty of robots out there that have more legs than they need, often because there’s some expectation that at some point one (or more) of those legs will cease to function and the other legs will have to compensate. If this happens, the robot is programmed to change its gait to walk on whichever legs it has left. Programmed in advance, that is, which is fine, except that as robots get more modular and easier to physically reconfigure, it becomes more and more useful to have a generalized system that can dynamically generate gaits (and transitions between gaits) on the fly no matter what the leg configuration of your robot happens to be.
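
For a sense of what “generate a gait for whatever legs are left” involves at its very simplest, here is a minimal Python sketch. It is not the researchers' method; it only builds a one-leg-at-a-time wave gait from the legs that remain after some are marked unavailable (dead, or reassigned as arms). The function name `wave_gait`, the leg labels, and the assumption that legs are listed in the desired lift-off order are all hypothetical.

```python
from typing import Iterable, List, Sequence


def wave_gait(all_legs: Sequence[str], unavailable: Iterable[str]) -> List[List[str]]:
    """Build one cycle of a simple wave gait from whichever legs are still walking.

    Assumes (hypothetically) that all_legs is already listed in the desired
    lift-off order. Each phase of the returned cycle lists the stance legs
    (feet on the ground) while exactly one walking leg swings forward.
    """
    skip = set(unavailable)  # dead legs, or legs reassigned as arms
    walking = [leg for leg in all_legs if leg not in skip]
    if len(walking) < 4:
        # With one leg in the air, at least three feet must stay down to form
        # a support triangle, so this one-at-a-time scheme needs four legs.
        raise ValueError(f"only {len(walking)} walking legs; need at least 4")
    return [[leg for leg in walking if leg != swing] for swing in walking]


if __name__ == "__main__":
    hexapod = ["L1", "L2", "L3", "R1", "R2", "R3"]
    # Hypothetical reconfiguration: front-right leg R1 becomes an arm.
    for stance in wave_gait(hexapod, unavailable={"R1"}):
        print(stance)
```

Because the schedule is rebuilt from the current leg list rather than looked up in a precomputed table, dropping R1 simply yields a five-phase cycle with four feet down in every phase. A real planner would also have to check that those feet actually surround the robot's center of mass, and would need to plan the transition between old and new gaits, both of which this sketch ignores.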

While dealing with dead legs is certainly useful, a recent project by CMU researchers Julian Whitman, Shuang Su, Stelian Coros, Alex Ansari, and Howie Choset focuses on something different: allowing robots with multiple limbs to switch some of those limbs from locomotive to non-locomotive tasks. That is, turning them from legs into arms that can manipulate things.

Here’s how they explain it:

> We demonstrated our approach to gait and transition generation with a hexapod and a dodecapod robot. We emphasize the utility of our method for redundant locomotors, like our robots, where limbs can be reassigned simultaneously to peripheral tasks while still leaving enough limbs for quasistatic locomotion. In each demonstration, the robot begins with all limbs in locomotive roles. We choose a subset of limbs to be reassigned, and create a new gait. We then create a transition that maintains robot heading, orientation, and speed. These examples show different sets of limbs used to pick up and carry objects or to position a camera while the robots locomote.
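
The constraint in that quote, leaving enough limbs for quasistatic locomotion, is commonly checked with a static-stability test: the ground projection of the robot's center of mass must lie inside the support polygon formed by the feet currently on the ground. Below is a minimal Python sketch of that test, assuming point feet on flat ground and a known center of mass. The functions, leg names, and foot coordinates are hypothetical illustrations, not the researchers' code.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (x, y) position on flat ground


def cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive when o -> a -> b turns left."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Andrew's monotone chain: hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def is_statically_stable(stance_feet: List[Point], com: Point) -> bool:
    """True if the center of mass projects strictly inside the support polygon."""
    hull = convex_hull(stance_feet)
    if len(hull) < 3:
        return False  # a single foot or a line of feet encloses no area
    n = len(hull)
    # For a counter-clockwise hull, "inside" means left of every edge.
    return all(cross(hull[i], hull[(i + 1) % n], com) > 0 for i in range(n))


def unstable_phases(feet: Dict[str, Point], cycle: List[List[str]], com: Point) -> List[int]:
    """Indices of gait phases whose stance legs cannot support the center of mass."""
    return [i for i, stance in enumerate(cycle)
            if not is_statically_stable([feet[leg] for leg in stance], com)]


if __name__ == "__main__":
    # Hypothetical hexapod footprint, body centered at the origin.
    feet = {"L1": (-1.0, 1.0), "L2": (-1.0, 0.0), "L3": (-1.0, -1.0),
            "R1": (1.0, 1.0), "R2": (1.0, 0.0), "R3": (1.0, -1.0)}
    walking = ["L1", "L2", "L3", "R2", "R3"]  # R1 reassigned as an arm
    # One phase per walking leg: that leg swings, the others stand.
    cycle = [[leg for leg in walking if leg != swing] for swing in walking]
    print(unstable_phases(feet, cycle, com=(0.0, 0.0)))  # prints [0, 3]
```

For this made-up footprint, the center of mass lands exactly on an edge of the support polygon when L1 or R2 is in the air, so the strict test flags those two phases. Fixing that would mean shifting the body, moving the feet, or reordering the lift-offs, which is the kind of search a real gait generator has to handle and this sketch does not attempt.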

As useful as this seems, it’s still very preliminary, and there’s a lot more to be done. The researchers plan to extend their method to dynamic gaits, which means things like (we hope) running and jumping, and to generalize it to other morphologies like bipeds and tripeds. Ultimately, the idea is to develop a system that you can feed high-level tasks to (something like “go over there and pick up that thing”), and the robot will choose how best to make that happen, no matter how many legs it starts (or finishes) with.

“Generating Gaits for Simultaneous Locomotion and Manipulation,” by Julian Whitman, Shuang Su, Stelian Coros, Alex Ansari, and Howie Choset from Carnegie Mellon University, was presented this week at IROS 2017 in Vancouver, Canada.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.