Among the great engineers of the 20th century, who contributed the most to our 21st-century technologies? I say: Claude Shannon.

Shannon is best known for establishing the field of information theory. In a 1948 paper, one of the greatest in the history of engineering, he devised a way to measure the information content of a signal and to calculate the maximum rate at which information can be reliably transmitted over any sort of communication channel. The article, titled “A Mathematical Theory of Communication,” lays the foundation for all modern communications, from the wireless Internet on your smartphone to an analog voice signal on a twisted-pair telephone landline. In 1966, the IEEE gave him its highest award, the Medal of Honor, for that work.
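
To make those two quantities concrete, here is a minimal Python sketch of two standard results from information theory: the entropy of a source, H = −Σ p·log₂ p bits per symbol, and the capacity of a binary symmetric channel that flips each bit with probability p, C = 1 − H(p) bits per use. The function names are hypothetical, and the binary symmetric channel is only one simple example; Shannon's paper treats far more general sources and channels.

```python
import math

def entropy_bits(probs):
    """Shannon entropy H = -sum(p * log2 p) of a discrete source, in bits per symbol.
    Zero-probability symbols contribute nothing and are skipped."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def bsc_capacity(flip_prob):
    """Capacity of a binary symmetric channel, C = 1 - H(p), in bits per channel use."""
    return 1.0 - entropy_bits([flip_prob, 1.0 - flip_prob])

print(entropy_bits([0.5, 0.5]))   # fair coin: 1.0 bit per toss
print(entropy_bits([0.25] * 4))   # four equally likely symbols: 2.0 bits
print(bsc_capacity(0.11))         # noisy channel: roughly 0.5 bits per use
```

The last line illustrates the surprising part of the theory: a channel that corrupts about 11 percent of its bits can still, with suitable coding, carry roughly half a bit of information per transmitted bit with an arbitrarily low error rate.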

If information theory had been Shannon’s only accomplishment, it would have been enough to secure his place in the pantheon. But he did a lot more.

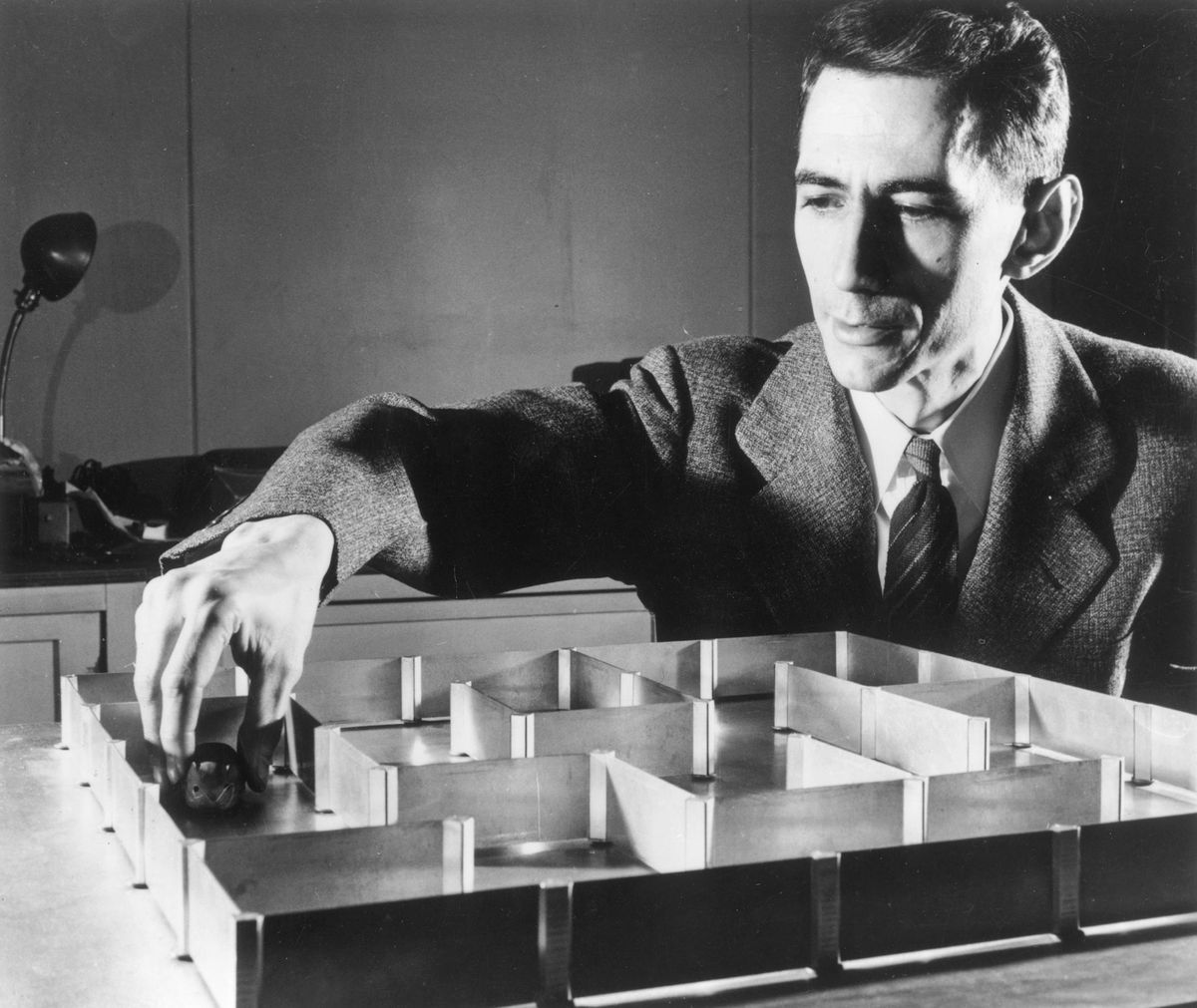

A decade before, while working on his master’s thesis at MIT, he invented the logic gate. At the time, electromagnetic relays—small devices that use magnetism to open and close electrical switches—were used to build circuits that routed telephone calls or controlled complex machines. However, there was no consistent theory on how to design or analyze such circuits. Engineers thought about them in terms of whether each relay coil was energized or not. Shannon showed that Boolean algebra could be used to abstract away from the relays themselves, toward a more general understanding of what a circuit does. He used this algebra of logic to analyze, and then synthesize, switching circuits and to prove that the overall circuit worked as desired. In his thesis he invented the AND, OR, and NOT logic gates. Logic gates are the building blocks of all digital circuits, upon which the entire edifice of computer science is based.
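
The correspondence Shannon exploited can be sketched in a few lines of Python. This is a minimal illustration under the modern convention in which a closed contact is True (Shannon's thesis used the dual "hindrance" convention, in which a series connection corresponds to addition): contacts in series act as AND, contacts in parallel act as OR, and a normally closed contact acts as NOT. The helper names and the exclusive-or circuit are hypothetical examples chosen for illustration, not circuits taken from the thesis.

```python
from itertools import product

# Hypothetical model: each Boolean variable says whether a relay's coil is
# energized. A contact's state is True when it is closed (current can flow).

def normally_open(coil: bool) -> bool:
    """Contact that closes when its coil is energized (identity)."""
    return coil

def normally_closed(coil: bool) -> bool:
    """Contact that opens when its coil is energized: NOT."""
    return not coil

def series(a: bool, b: bool) -> bool:
    """Current passes through two contacts in series only if both are closed: AND."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Current passes through two contacts in parallel if either is closed: OR."""
    return a or b

def xor_circuit(x: bool, y: bool) -> bool:
    """Exclusive-or synthesized purely from relay contacts."""
    return parallel(series(normally_open(x), normally_closed(y)),
                    series(normally_closed(x), normally_open(y)))

# Boolean algebra turns "does the circuit work?" into a mechanical check:
# compare the circuit with its specification on every input combination.
for x, y in product([False, True], repeat=2):
    assert xor_circuit(x, y) == (x != y)
    print(int(x), int(y), "->", int(xor_circuit(x, y)))
```

The exhaustive check at the end is the small-scale version of what the algebra made possible: proving that a synthesized circuit matches its specification without wiring up a single relay.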

In 1950 Shannon published an article in Scientific American and also a research paper describing how to program a computer to play chess. He went into detail on how to design a program for an actual computer. He discussed how data structures would be represented in memory, estimated how many bits of memory would be needed for the program, and broke the program down into things he called subprograms. Today we would call these functions, or procedures. Some of his subprograms were to generate possible moves; some were to give heuristic appraisals of how good a position was.
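
The division into subprograms can be illustrated with a short Python sketch. To keep it self-contained, a toy take-away game (players alternately remove one to three counters, and whoever takes the last counter wins) stands in for chess; the game, the function names, and the appraisal rule are all hypothetical choices for illustration. One subprogram generates moves, another appraises positions, and a fixed-depth minimax search, the kind of look-ahead Shannon described, ties them together.

```python
from typing import List

# Toy stand-in for chess (hypothetical): a position is just the pile size.

def generate_moves(pile: int) -> List[int]:
    """Move-generation subprogram: every position reachable in one legal move."""
    return [pile - k for k in (1, 2, 3) if k <= pile]

def evaluate(pile: int) -> float:
    """Appraisal subprogram, scored for the player about to move.
    Rule of thumb: a pile that is a multiple of 4 is bad for the mover."""
    return -1.0 if pile % 4 == 0 else 1.0

def negamax(pile: int, depth: int) -> float:
    """Fixed-depth minimax (negamax form): look ahead `depth` moves,
    then fall back on the appraisal subprogram."""
    moves = generate_moves(pile)
    if not moves:        # the opponent just took the last counter: a loss
        return -1.0
    if depth == 0:
        return evaluate(pile)
    return max(-negamax(child, depth - 1) for child in moves)

def best_move(pile: int, depth: int) -> int:
    """Pick the resulting position whose value is best for the mover (depth >= 1)."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    return max(generate_moves(pile), key=lambda child: -negamax(child, depth - 1))

print(best_move(5, 2))   # leave the opponent facing a pile of 4
```

In this toy game the appraisal rule happens to be exact; in chess no such rule is known, which is why Shannon's appraisals had to be heuristic and the search depth mattered so much.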

Shannon did all this at a time when there were fewer than 10 computers in the world, and they were all being used for numerical calculations. He began his research paper by speculating on all sorts of things that computers might be programmed to do beyond numerical calculations, including designing relay and switching circuits, designing electronic filters for communications, translating between human languages, and making logical deductions. Computers do all these things today. He gave four reasons why he had chosen to work on chess first, and an important one was that people believed playing chess required “thinking.” Therefore, he reasoned, it would be a great test case for whether computers could be made to think.

Shannon suggested it might be possible to improve his program by analyzing the games it had already played and adjusting the terms and coefficients in its heuristic evaluations of the strengths of the board positions it had encountered. No computers were readily available to Shannon at the time, so he couldn’t test his idea. But just five years later, in 1955, Arthur Samuel, an IBM engineer who had access to computers as they were being tested before delivery to customers, was running a checkers-playing program that used Shannon’s exact method to improve its play. And in 1959 Samuel published a paper about it with “machine learning” in the title—the very first time that phrase appeared in print.
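
One simple way to realize this idea is sketched below in Python, under stated assumptions: the appraisal is a weighted sum of numeric board features, and after each game every coefficient is nudged so that the appraisals of the positions seen move toward the game's final result. This is a generic least-squares update chosen for illustration; it is not a reconstruction of Shannon's proposal or of Samuel's actual procedure, and the feature encoding, outcome scale, and learning rate are hypothetical.

```python
from typing import List, Sequence

def evaluate(weights: Sequence[float], features: Sequence[float]) -> float:
    """Linear heuristic appraisal: a weighted sum of board features."""
    return sum(w * f for w, f in zip(weights, features))

def adjust_weights(weights: List[float],
                   game_positions: List[Sequence[float]],
                   outcome: float,
                   rate: float = 0.01) -> List[float]:
    """After a finished game, nudge each coefficient so the appraisal of every
    position seen moves toward the final outcome (+1 win, -1 loss, 0 draw).
    Hypothetical stand-in: one least-squares gradient step per position.
    Returns new weights; the input list is left unchanged."""
    new = list(weights)
    for features in game_positions:
        error = outcome - evaluate(new, features)
        for i, f in enumerate(features):
            new[i] += rate * error * f
    return new

# Hypothetical features per position: [material balance, mobility balance].
weights = [0.0, 0.0]
won_game = [[1.0, 2.0], [2.0, 1.0]]
weights = adjust_weights(weights, won_game, outcome=1.0)
print(weights)
```

Calling adjust_weights after every game gradually shifts the coefficients toward values whose appraisals better predict how games actually end, which is the essence of learning from experience.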

So, let’s recap: information theory, logic gates, non-numerical computer programming, data structures, and, arguably, machine learning. Claude Shannon didn’t bother predicting the future—he just went ahead and invented it, and even lived long enough to see the adoption of his ideas. Since his passing 20 years ago, we have not seen anyone like him. We probably never will again.

This article appears in the February 2022 print issue as “Claude Shannon’s Greatest Hits.”

Rodney Brooks is the Panasonic Professor of Robotics (emeritus) at MIT, where he was director of the AI Lab and then CSAIL. He has been cofounder of iRobot, Rethink Robotics, and Robust AI, where he is currently CTO.