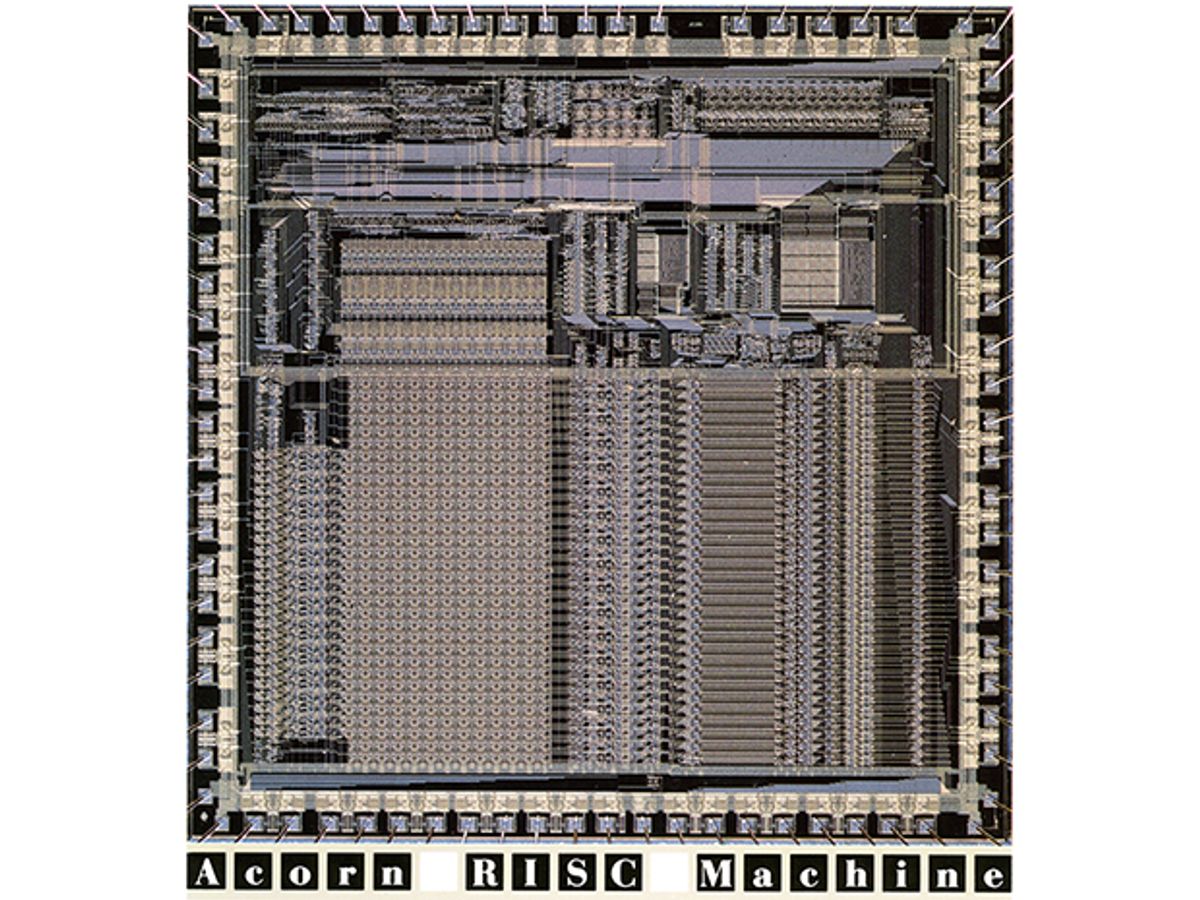

In the early 1980s, Acorn Computers was a small company with a big product. The firm, based in Cambridge, England, had sold over 1.5 million 8-bit BBC Micro desktop computers as part of the BBC’s national Computer Literacy Project. It was now time to design a new computer. Unsatisfied with the processors then available on the market, the Acorn engineers decided to make the leap to creating their own 32-bit microprocessor.

They called it the Acorn RISC Machine, or ARM. RISC, which stands for reduced-instruction-set computer, is an approach to processor design that favors a small set of simple instructions, accepting longer programs in exchange for simpler, more efficient hardware. The engineers knew it wouldn’t be easy; in fact, they half expected they’d encounter an insurmountable design hurdle and have to scrap the whole project. “The team was so small that every design decision had to favor simplicity—or we’d never finish it!” says codesigner Steve Furber, now a computer engineering professor at the University of Manchester. In the end, the simplicity made all the difference. The ARM was small, low power, and easy to program. Sophie Wilson, who designed the instruction set, still remembers when they first tested the chip in a computer. “We did ‘PRINT PI’ at the prompt, and it gave the right answer,” she says. “We cracked open the bottles of champagne.” In 1990, Acorn spun off its ARM division, and the ARM architecture went on to become the dominant 32-bit processor for embedded applications. More than 10 billion ARM cores have been used in all sorts of gadgetry, including one of Apple’s most humiliating flops, the Newton handheld, and one of its most glittering successes, the iPhone. Indeed, ARM chips are now found in more than 95 percent of the world’s smartphones.