New artificial versions of the neurons and synapses in the human brain may be as small as one-thousandth the size of neurons and at least 10,000 times as fast as biological synapses, a study now finds.

These new devices may help speed up learning in deep neural networks, the increasingly common and powerful artificial-intelligence systems, researchers say.

In artificial neural networks, electrical components dubbed “neurons” are fed data and cooperate to solve a problem, such as recognizing images. The neural net repeatedly adjusts the links between its ersatz neurons and sees if the resulting patterns of behavior are better at finding a solution. Over time, the network discovers which patterns are best at computing results. It then adopts these as defaults, mimicking the process of learning in the human brain.
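The adjust-and-check loop described above can be illustrated with a toy sketch in Python. It is only a minimal illustration under stated assumptions: the single "neuron," the AND task, the step count, and all function names are hypothetical choices, and the random-nudge search is swapped in for simplicity. Real deep neural networks are typically trained with gradient-based methods instead.

```python
import random

def predict(weights, inputs):
    # Weighted sum of the inputs plus a bias (last weight), thresholded at zero.
    total = weights[-1] + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def count_errors(weights, examples):
    # Number of examples the current link strengths get wrong.
    return sum(predict(weights, x) != y for x, y in examples)

def train(examples, steps=500, seed=0):
    # Assumption: random nudges stand in for a real training algorithm.
    rng = random.Random(seed)
    weights = [0.0, 0.0, 0.0]  # two input links plus one bias
    best = count_errors(weights, examples)
    for _ in range(steps):
        # Adjust every link slightly...
        candidate = [w + rng.uniform(-0.5, 0.5) for w in weights]
        errors = count_errors(candidate, examples)
        # ...and keep the adjustment only if it is no worse than before.
        if errors <= best:
            weights, best = candidate, errors
    return weights, best

# Hypothetical task: learn the logical AND of two inputs.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, errors = train(AND_EXAMPLES)
print("remaining errors:", errors)
```

Because a change is kept only when it does not increase the error count, the network can never end up worse than where it started, which is the essence of the trial-and-adjust process.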

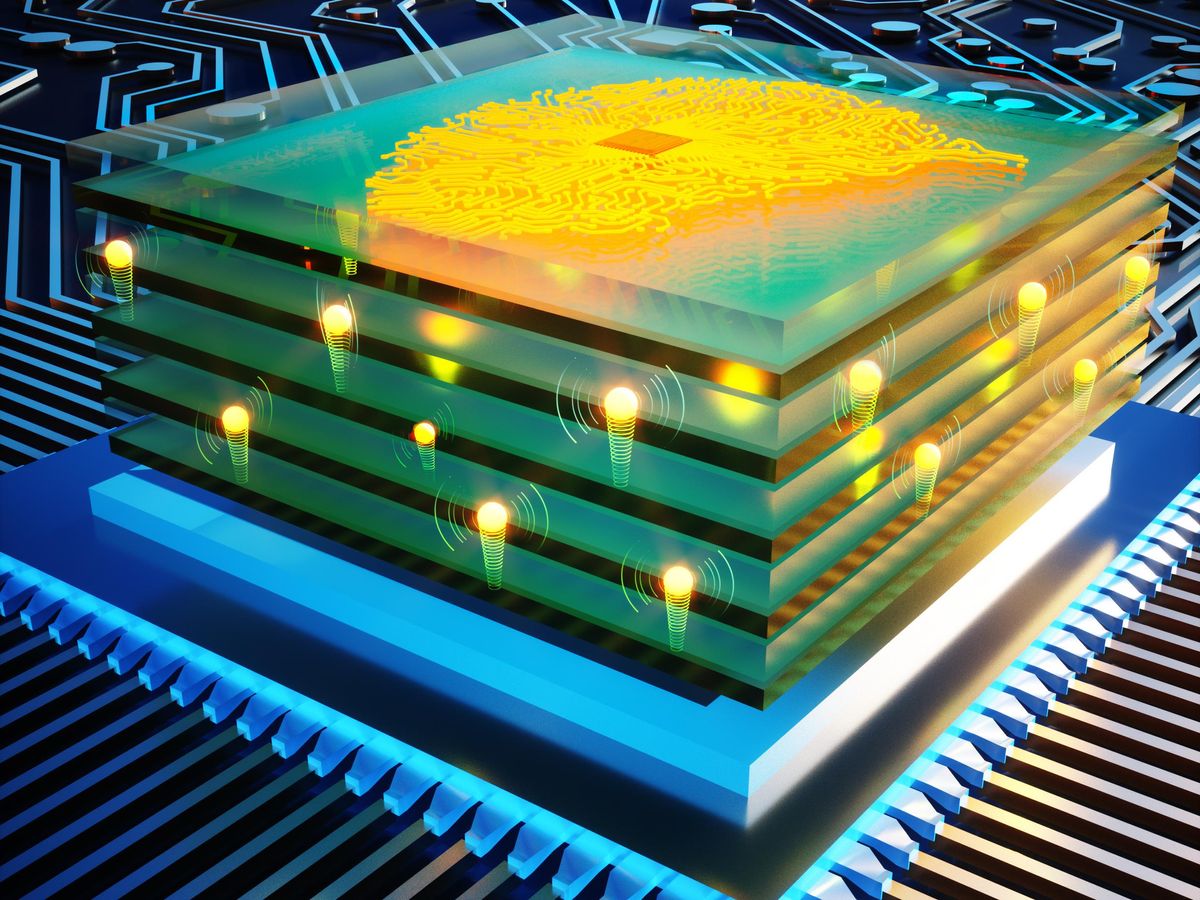

A neural network is dubbed “deep” if it possesses multiple layers of neurons. Deep neural networks are increasingly finding use in applications such as analyzing medical scans, designing microchips, predicting how proteins fold, and empowering autonomous vehicles.

The amount of time, energy, and money needed to train deep neural networks is skyrocketing. One approach that researchers are pursuing to help overcome this challenge involves training brain-imitating deep neural networks on brain-mimicking hardware instead of conventional computers, a strategy called analog deep learning.

Just as transistors are the core elements of digital computers, so too are neuron- and synapselike components the key building blocks in analog deep learning. In the new study, researchers experimented with artificial synapses called programmable resistors.

The new programmable resistors are similar to memristors, or memory resistors. Both kinds of devices are essentially electric switches that can remember which state they were toggled to after their power is turned off. As such, they resemble synapses, whose electrical conductivity strengthens or weakens depending on how much electrical charge has passed through them in the past. Memristors are two-terminal devices, whereas the new programmable resistors are three-terminal devices, says study lead author Murat Onen, an electrical engineer at MIT.

The research team’s programmable resistors increased or decreased their electrical conductance by moving protons around. To increase conductance, electric fields helped insert protons into the devices. To decrease conductance, protons were taken out.
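As a rough illustration of this behavior, the sketch below models a programmable resistor whose conductance rises when a pulse inserts protons and falls when a pulse removes them. Everything here is an assumption made for illustration: the class name, the number of levels, the arbitrary conductance units, and the linear response are hypothetical and are not taken from the study.

```python
class ToyProtonicResistor:
    """Toy model: conductance tracks how many proton 'doses' are stored."""

    def __init__(self, levels=10, g_min=1.0, g_max=2.0):
        # All values are hypothetical, in arbitrary units.
        self.levels = levels
        self.g_min = g_min
        self.g_max = g_max
        self.protons = 0  # abstract count of inserted proton doses

    def pulse(self, polarity):
        # A positive pulse inserts protons; a negative pulse takes them out.
        if polarity > 0:
            self.protons = min(self.levels, self.protons + 1)
        elif polarity < 0:
            self.protons = max(0, self.protons - 1)

    @property
    def conductance(self):
        # Assumption: conductance scales linearly with stored protons.
        fraction = self.protons / self.levels
        return self.g_min + fraction * (self.g_max - self.g_min)


resistor = ToyProtonicResistor()
for _ in range(3):
    resistor.pulse(+1)   # strengthen the artificial synapse
resistor.pulse(-1)       # weaken it by one step
print(resistor.protons, resistor.conductance)
```

The stored proton count persists between pulses, which mirrors how such a device keeps its state, and the conductance plays the role of a synapse's adjustable strength.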

These protonic programmable resistors used an electrolyte similar to those found in batteries to let protons pass while blocking electrons. Their electrolyte was phosphosilicate glass, which the researchers suspected would possess high proton conductivity at room temperature. This glass accommodated many nanometer-size pores for proton transport and could also withstand very strong pulsed electric fields to help protons move quickly.

Unlike the organic Nafion electrolyte used in an earlier version of the team’s device, phosphosilicate glass is compatible with silicon fabrication techniques. This helped scale the devices “all the way down to 10-nanometer scale,” Onen says. In contrast, biological neurons are roughly 1,000 times as long.

The speed at which biological neurons and synapses can process and transfer data is limited by the weak voltages and watery medium in which these signals are shuffled. Anything more than 1.23 volts causes liquid water to split into hydrogen and oxygen gas. As such, the speed of thought in animals is typically limited to millisecond timescales. In contrast, artificial solid-state neurons and synapses are not hemmed in by these constraints. However, it was unclear how fast they were compared with their biological counterparts.

In experiments, the scientists found their protonic programmable resistors could perform at least 10,000 times as fast as biological synapses at room temperature. “The most surprising part was to see how fast we could move protons within solid media,” Onen says. “Previously the operation timescales were around milliseconds, whereas in this work we achieved nanoseconds.”

The devices could run for millions of cycles without breaking down. Furthermore, the amount of heat they generated during computation compared well with that of human synapses. The insulating properties of the phosphosilicate glass meant that almost no electric current passed through the material as protons moved, making the gadgets highly energy efficient.

“The primary technological implication is that we can now have protonic programmable devices for analog deep learning applications,” Onen says. “Predecessors of such devices already had many promising qualities compared to competing technologies but were very slow, which meant they were not appropriate to be used in processors.”

In addition, Onen says, “the discovery of ultrafast ion transport in solids could have broader implications beyond analog deep learning, whenever fast ion motion is required, such as in microbatteries, fuel cells, artificial photosynthesis, and electrochromism.”

The scientists detailed their findings in the 29 July issue of the journal Science.

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.