This article is part of our exclusive IEEE Journal Watch series in partnership with IEEE Xplore.

Since the advent of the smartphone, the use of touch to interact with digital content has become ubiquitous. So far, though, touchscreens have mainly been limited to pocket-sized devices. Now researchers have come up with a low-cost way to turn any surface into a touchscreen, opening a host of new possibilities for interacting with the digital world.

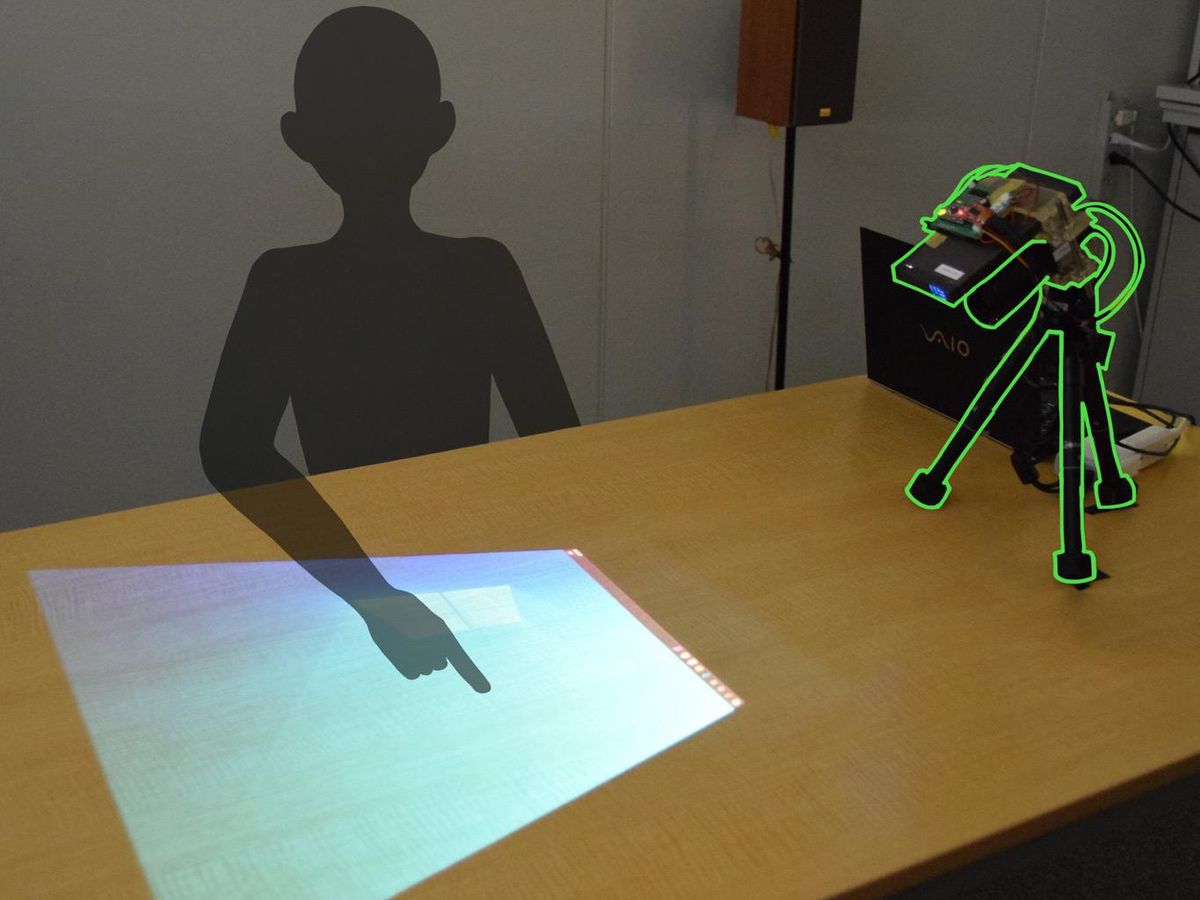

Previous attempts to allow people to manipulate projected images with touch have relied on special input devices, multiple sensors, or image-processing algorithms that struggle with cluttered or confusing visual content. The new system requires only a single camera attached just below the projector, and its developers say it works well no matter what you want to project.

The key to the approach is a clever optical trick that ensures only movements just above the surface of the projection are detected. This makes it possible to register users' fingers as they press buttons, while ignoring everything else in the camera's field of view. Its designers hope the technology could be used to create large, interactive displays almost anywhere.

"You can project whatever you want and make the system interactive for games or for different types of user experiences," says Suren Jayasuriya—an assistant professor at Arizona State University's schools of Arts Media and Engineering and Electrical, Computer and Energy Engineering—who helped design the system alongside colleagues from Nara Institute of Science and Technology (NAIST) in Japan.

"The infrastructure required is very minimal, its just this one projector camera," Jayasuriya says. "Other systems, to try to get to this level of interactivity, would need either multiple cameras or additional depth sensors."

The approach relies on a laser scanning projector, which rapidly scans up and down, drawing the projected image line by line. This is paired with a rolling shutter camera, which exposes its sensor's pixels one row at a time so that it scans the visual scene line by line, much like the projector.

By synchronizing the two devices, the horizontal plane of light emitted by the projector can be made to intersect with the horizontal plane that the camera is receiving from. And because the two devices are slightly offset, the principle of triangulation lets you calculate the depth of this point where they overlap.
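The triangulation step can be illustrated with a minimal sketch. It assumes an idealized, rectified pinhole model in which the projector and camera are offset vertically by a known baseline, so that depth follows the textbook relation depth = focal length × baseline / row offset. The function names and numbers are hypothetical and chosen only for illustration; they are not taken from the researchers' calibration.

```python
def depth_from_row_offset(focal_px: float, baseline_m: float, row_offset_px: float) -> float:
    """Depth (in meters) at which a projector scanline and a camera row intersect.

    Assumes a rectified pinhole model: focal length in pixels, baseline in
    meters, and the offset between the projector line and the camera row
    in pixels. All three are illustrative assumptions.
    """
    if row_offset_px <= 0:
        raise ValueError("row offset must be positive for a finite depth")
    return focal_px * baseline_m / row_offset_px


def row_offset_for_depth(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Inverse relation: the row offset that selects a sensing plane at depth_m."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


if __name__ == "__main__":
    # Hypothetical setup: 1000 px focal length, 5 cm projector-camera baseline.
    offset = row_offset_for_depth(focal_px=1000.0, baseline_m=0.05, depth_m=1.0)
    print(f"Row offset for a 1 m sensing plane: {offset:.1f} px")
```

Under these assumptions, choosing the row offset between the two synchronized devices amounts to choosing the depth at which the camera is sensitive.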

That makes it possible to calibrate the setup so that the camera only picks up light at a specific distance from the projector, which can be set to hover just above the projected image. As a result, the camera can pick up users' fingers as they press on areas of the projected image while ignoring the rest of the visual scene.

In a paper in IEEE Access, the researchers describe how they paired this set-up with a simple image-processing algorithm to track the position of the users' fingers relative to the projected image. This tracking information can then be used as input for any touch-based application.
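The article does not spell out the tracking algorithm, so the following is only a stand-in sketch of what a simple approach could look like. It assumes the camera frame is already dark everywhere except where a finger crosses the sensing plane, and it reports the centroid of the bright pixels as the touch position. The function name `locate_touch`, the threshold, and the minimum pixel count are all hypothetical.

```python
from typing import Optional, Sequence, Tuple


def locate_touch(
    frame: Sequence[Sequence[int]],
    threshold: int = 128,
    min_pixels: int = 3,
) -> Optional[Tuple[float, float]]:
    """Return the (row, col) centroid of bright pixels, or None if no touch.

    frame is a 2D grid of grayscale intensities (0-255). Pixels at or above
    `threshold` are treated as light reflected by a finger in the sensing
    plane. Fewer than `min_pixels` bright pixels is treated as noise.
    """
    row_sum = 0
    col_sum = 0
    count = 0
    for r, row in enumerate(frame):
        for c, value in enumerate(row):
            if value >= threshold:
                row_sum += r
                col_sum += c
                count += 1
    if count < min_pixels:
        return None
    return (row_sum / count, col_sum / count)


if __name__ == "__main__":
    # A 5x5 dark frame with a 2x2 bright blob standing in for a fingertip.
    frame = [[0] * 5 for _ in range(5)]
    for r in (1, 2):
        for c in (2, 3):
            frame[r][c] = 200
    print(locate_touch(frame))
```

A single centroid like this can report only one touch point at a time; separating several fingers would need something more, such as grouping bright pixels into connected regions first.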

The system is already fairly simple to set up, says Jayasuriya, but the team is now working on an auto-calibration scheme to make things even easier. It's also relatively low-cost, he adds. The initial prototype cost about $500 to build, but that figure could drop significantly if the system were commercialized.

"The idea of combining a scanning laser with a scanning camera to create a virtual sensing plane is very clever," says Chris Harrison, an associate professor at Carnegie Mellon University's Human-Computer Interaction Institute.

The accuracy of the approach is unlikely to match that of physical touchscreens, he says. But being able to project a touch interface onto any surface could enable a variety of novel functions, like watching an interactive sports match on your coffee table or projecting TV controls onto a nearby surface so you don't need to use a remote. "It is similar to the vision of augmented reality in many ways, but no one has to wear headsets," says Harrison.

The researchers think the technology could be particularly useful in situations where sticky fingers might be a problem, for instance when dealing with young children or when using a touchscreen while cooking. "With this device we can search recipes or look up directions on how to make the dishes without having to wash our hands first," says Takuya Funatomi, an associate professor at NAIST.

At present the device can only register one finger at a time, but enabling multi-touch would simply require the researchers to swap out the image processing algorithm for a smarter one. The team also hopes to enable more complex gesture recognition in future iterations of the device.

This article appears in the November 2021 print issue as "Any Surface Can Be a Touch Screen."

Edd Gent is a freelance science and technology writer based in Bengaluru, India. His writing focuses on emerging technologies across computing, engineering, energy and bioscience. He's on Twitter at @EddytheGent and email at edd dot gent at outlook dot com. His PGP fingerprint is ABB8 6BB3 3E69 C4A7 EC91 611B 5C12 193D 5DFC C01B. DM for Signal info.