This is a guest post. The views expressed in this article are solely those of the writer and do not represent positions of IEEE Spectrum, or the IEEE.

Quantum computers promise an exponential increase in power compared with today's classical CMOS-based systems. This increase is of a magnitude that is difficult for the human mind to comprehend. So there is real excitement that quantum computers will deliver benefits that are not possible with today's systems. With such promise, we are seeing the rise of quantum computing prophets who say that, in just a few years, these machines will have the ability to change the world. Conversely, we're seeing more quantum computing skeptics who say it will never happen.

At Intel, we are taking a pragmatic view of quantum computing. Our enterprise and high-performance computing customers are already asking for this capability from us. And we expect that demand to grow. However, quantum computing is still most certainly in the research stage. It may never work.

But we all see the enormous opportunities and they are worth pursuing.

Recently, a perspective published in IEEE Spectrum suggested that quantum computing will never materialize. Its main argument was that quantum computing will require control over an exponentially large number of quantum states, and that this amount of control is too difficult to achieve.
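
To give a sense of what "exponentially large" means here, the following is a minimal sketch, not taken from the Spectrum article, that counts the complex amplitudes describing an n-qubit state (2^n) and the memory a classical machine would need to store them. The function name `state_vector_size` and the 16 bytes per amplitude (a double-precision complex number) are assumptions made for illustration.

```python
def state_vector_size(n_qubits, bytes_per_amplitude=16):
    """Return (amplitude count, bytes to store them classically).

    An n-qubit state is described by 2**n complex amplitudes.
    bytes_per_amplitude=16 assumes double-precision complex numbers.
    """
    amplitudes = 2 ** n_qubits
    return amplitudes, amplitudes * bytes_per_amplitude


if __name__ == "__main__":
    # Qubit counts chosen only to show how quickly the numbers grow.
    for n in (10, 30, 50):
        amps, size = state_vector_size(n)
        print(f"{n} qubits: {amps:,} amplitudes, {size / 2**30:,.1f} GiB")
```

Each added qubit doubles both numbers, which is the source of the promise and of the control problem the skeptics point to.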

As both a quantum computing optimist and a realist who has seen how long it takes for new semiconductor technologies to come to market, I recognize where the concern is coming from, but I believe it is still far too soon to say we'll “never” realize the promise of quantum computing.

I believe there are four key challenges that could keep quantum computing from becoming a reality. But if they are solved, we could create a commercially relevant quantum computer in about 10 to 12 years, a computer that might change your life or mine.

- Qubit Quality: We need qubits on which we can run useful instructions, or gate operations, at large scale. As a community, we are not there yet. Even the few qubits in today's cloud-based quantum computers are not good enough for large-scale systems. When running operations between two qubits, they still generate errors at a rate far higher than what we would need to compute effectively. In other words, after a certain number of instructions or operations, today's qubits produce the wrong answer when we run calculations. The result we get can be indistinguishable from noise.

- Error Correction: Now, because qubits aren't quite good enough for the scale we need them to operate at, we need to implement error correction algorithms that check and then correct for random qubit errors as they occur. These are complex instruction sets that use many physical qubits to effectively extend the lifetime of the information in the system. Error correction has not yet been proven at scale for quantum computing, but it is a priority area of our research and one that I consider a prerequisite to a full-scale commercial quantum system.

- Qubit Control: In order to implement complex algorithms, including error correction schemes, we need to prove that we can control multiple qubits. That control must have low latency, on the order of tens of nanoseconds. And it must come from CMOS-based adaptive feedback control circuits. This is a similar argument to that made in the aforementioned IEEE Spectrum article. However, though it is daunting, I have every reason to believe it is not impossible.

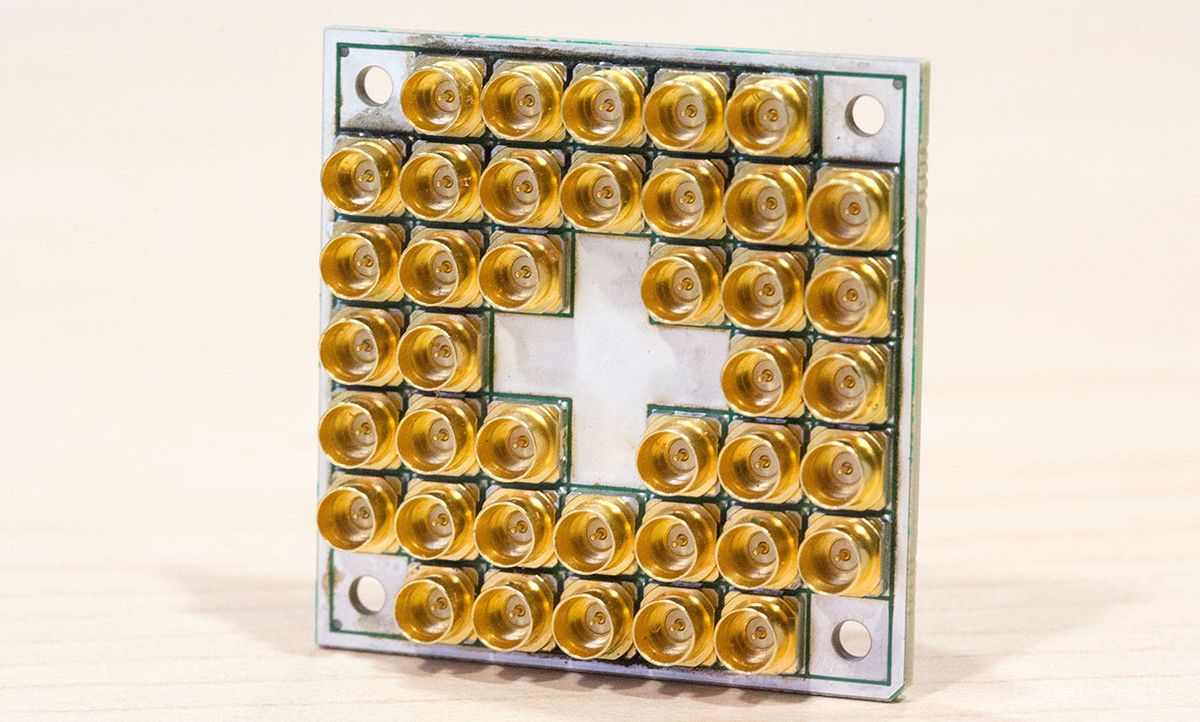

- Too Many Wires: Finally, we need to address “fan-out,” or how to scale up the number of qubits within a quantum chip. Today, we require multiple control wires, or multiple lasers, to create each qubit. It is difficult to believe that we could build a million-qubit chip with many millions of wires connecting to the circuit board or coming out of the cryogenic measurement chamber. In fact, the semiconductor industry recognized this problem in the mid-1960s and designated it Rent's Rule. Put another way, we will never drive on the quantum highway without well-designed roads.
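
The qubit quality point above, that results degrade after a certain number of operations, can be illustrated with some rough arithmetic. This is a minimal sketch under simplifying assumptions that are mine rather than a model used at Intel: every gate fails independently with the same probability, and there is no error correction. The function names and the example error rates are hypothetical.

```python
import math


def success_probability(gate_error, n_gates):
    """Chance that n_gates operations all succeed.

    Assumes each gate fails independently with probability gate_error.
    """
    return (1.0 - gate_error) ** n_gates


def max_gates(gate_error, target=0.5):
    """Largest gate count whose overall success probability stays >= target."""
    return math.floor(math.log(target) / math.log(1.0 - gate_error))


if __name__ == "__main__":
    # Hypothetical per-gate error rates, chosen only to show the trend.
    for p in (1e-2, 1e-3, 1e-4):
        print(f"gate error {p:g}: about {max_gates(p)} gates "
              f"before success drops below 50%")
```

Under these assumptions, the usable length of a computation scales roughly as the inverse of the per-gate error rate, which is why both better qubits and error correction appear on the list.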

At Intel, we are working to tackle each of these challenges. As an example, we are working on qubits that operate at slightly higher temperatures and would, therefore, allow co-integration with CMOS-based electronics to facilitate qubit control. Higher temperature allows us to put CMOS electronics into the fridge without impacting the quantum states. Local CMOS electronics help us with the latency of our control systems and allow for more freedom for qubit wiring or interconnects.

It will take work. Do not be fooled by shiny tools or by pronouncements that this technology will arrive tomorrow. Every major change in the semiconductor community has happened on the decade timescale: from the transistor in 1947 to the integrated circuit in 1958 to the first microprocessor, the Intel 4004, in 1971. At the same time, do not be defeated by pronouncements of “never.”

The potential is too great, and the stakes are too high to quit at mile one of a marathon.