With Moore’s Law slowing, engineers have been taking a cold hard look at what will keep computing going when it’s gone. Certainly artificial intelligence will play a role. So might quantum computing. But there are stranger things in the computing universe, and some of them got an airing at the IEEE International Conference on Rebooting Computing in November.

There were some cool variations on classics such as reversible computing and neuromorphic chips. But some less-familiar approaches got their time in the sun too, such as photonic chips that accelerate AI, nanomechanical comb-shaped logic, and a “hyperdimensional” speech recognition system. What follows includes a taste of both the strange and the potentially impactful.

Cold Quantum Neurons

Engineers are often envious of the brain’s marvelous energy efficiency. A single neuron expends only about 10 femtojoules (1 femtojoule is 10⁻¹⁵ joules) with each spiking event. Michael L. Schneider and colleagues at the U.S. National Institute of Standards and Technology think they can get close to that figure using artificial neurons made up of two different types of Josephson junctions. These are superconducting devices that depend on the tunneling of pairs of electrons across a barrier, and they’re the basis of the most advanced quantum computers coming out of industrial labs today. A variant of these, the magnetic Josephson junction, has properties that can be tuned on the fly by varying currents and magnetic fields. Both types can be operated in such a way that they produce spikes of voltage using only zeptojoules of energy, on the order of one 100,000th of a femtojoule.

The NIST scientists saw a way to link these devices together to form a neural network. In a simulation, they trained the network to recognize three letters (z, v, and n—a basic neural network test). Ideally, the network could recognize each letter using a mere 2 attojoules, or 2 femtojoules if you include the energy cost of refrigerating such a system to the needed 4 kelvins. There are a few spots where things are quite a bit less than ideal, of course. But assuming those can be engineered away, you could have a neural network with power consumption needs comparable to those of human neurons.
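To put the energy figures quoted above side by side, here is a small back-of-the-envelope sketch. It uses only the numbers in the text; the variable names are illustrative, and everything is expressed in whole zeptojoules so the arithmetic stays exact.

```python
# SI prefixes expressed in zeptojoules (1 zJ = 1e-21 J), kept as integers
# so that the ratios below are exact rather than floating-point estimates.
ZEPTO = 1
ATTO = 10**3    # 1 attojoule  = 1,000 zeptojoules
FEMTO = 10**6   # 1 femtojoule = 1,000,000 zeptojoules

# Figures quoted in the article
neuron_spike = 10 * FEMTO    # energy per spike of a biological neuron
letter_ideal = 2 * ATTO      # ideal energy to recognize one letter
letter_cooled = 2 * FEMTO    # same, including refrigeration to 4 kelvins

# How much the refrigeration multiplies the energy bill
cooling_overhead = letter_cooled // letter_ideal

# How many cooled letter recognitions fit in the energy of one neuron spike
recognitions_per_spike = neuron_spike // letter_cooled

# "One 100,000th of a femtojoule", expressed in zeptojoules
junction_spike = FEMTO // 100_000

print(cooling_overhead)        # 1000
print(recognitions_per_spike)  # 5
print(junction_spike)          # 10
```

In other words, cooling accounts for essentially all of the energy budget in the ideal case, yet the cooled figure still lands within an order of magnitude of a single biological spike.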

Computing with Wires

With transistors packed so tightly in advanced processors, the interconnects that link them up to form circuits are closer together than ever before. That causes crosstalk, where the signal on one line impinges on a neighbor via a parasitic capacitive connection. Rather than trying to engineer the crosstalk away, Naveen Kumar Macha and colleagues at the University of Missouri-Kansas City decided to embrace it. In today’s logic, the interfering “signal propagates as a glitch,” Macha told the engineers. “Now we want to use it for logic.”

They found that certain arrangements of interconnects could go a long way toward mimicking the actions of fundamental logic gates and circuits. Imagine three interconnect lines running parallel. Applying a voltage to either or both of the side lines causes a crosstalk voltage to appear on the center line. Thus you have the makings of an OR gate with two inputs. By judiciously adding in a transistor here and there, the Kansas City crew constructed AND, OR, and XOR gates as well as a circuit that performs the carry function. The real advantage comes when you compare the transistor count and area to CMOS logic. For example, crosstalk logic needs just three transistors to carry out XOR while CMOS uses 14 and takes up one-third more space.
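The three-wire picture can be sketched as a simple capacitive divider. This is a toy model, not the team’s circuit: the capacitance values, the normalized supply voltage, and the idea of reading the center line against a threshold are all assumptions chosen for illustration.

```python
VDD = 1.0  # supply voltage, normalized (assumed)

def victim_voltage(a, b, c_couple=1.0, c_ground=0.5):
    """Voltage induced on the floating center line when its neighbors switch.

    a and b are 1 if that side line swings from 0 to VDD, else 0.
    Each switching neighbor contributes VDD * Cc / (2*Cc + Cg), the usual
    capacitive-divider result; the capacitance values are made up.
    """
    return VDD * c_couple * (a + b) / (2 * c_couple + c_ground)

def crosstalk_gate(a, b, threshold):
    """Read the center line as a logic 1 if the induced voltage beats the threshold."""
    return int(victim_voltage(a, b) > threshold)

# Hypothetical sensing thresholds: one switching neighbor induces 0.4 * VDD,
# two induce 0.8 * VDD, so a low threshold behaves like OR and a high one like AND.
OR_THRESHOLD = 0.2
AND_THRESHOLD = 0.6

inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
or_table = [crosstalk_gate(a, b, OR_THRESHOLD) for a, b in inputs]
and_table = [crosstalk_gate(a, b, AND_THRESHOLD) for a, b in inputs]

print(or_table)   # [0, 1, 1, 1]
print(and_table)  # [0, 0, 0, 1]
```

Raising the threshold to get AND is only one way to illustrate how the same coupled wires could yield different gates; the article says the researchers added transistors to build theirs, and this sketch does not attempt to reproduce that design.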

Attack of the Nanoblob!

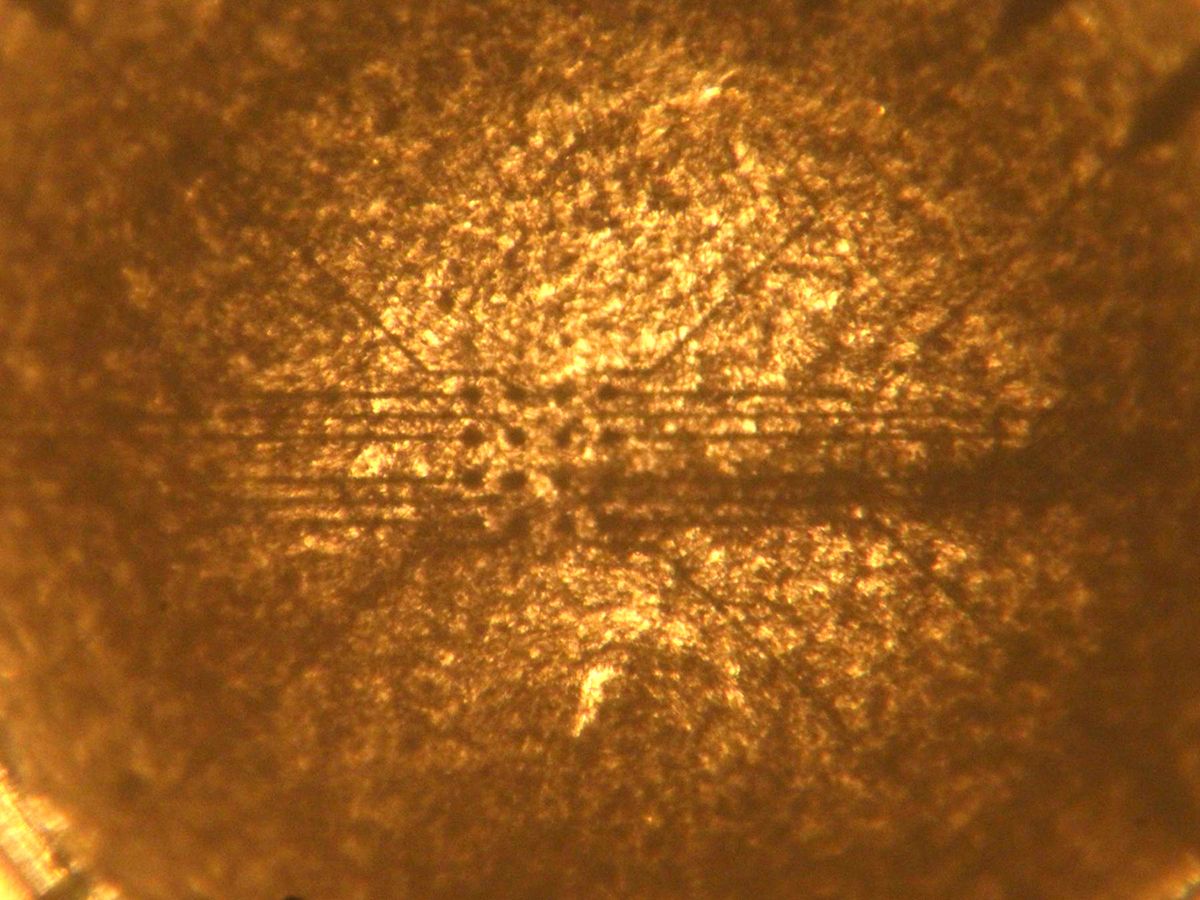

Scientists and engineers at Durham University in England have taught a thin film of nanomaterials to solve classification problems, such as spotting a cancerous lesion in a mammogram. Using evolutionary algorithms and a custom circuit board, they sent voltage pulses through an array of electrodes into a dilute mix of carbon nanotubes dispersed in a liquid crystal. Over time, the carbon nanotubes—a mix of conducting and semiconducting varieties—arranged themselves into a complex network that spanned the electrodes.

This network was capable of carrying out the key part of an optimization problem. What’s more, the blob could then learn to solve a second problem, so long as that problem was less complex than the first.

Did it solve these problems well? In one case, the results were comparable to a human’s; in the other, they were a bit worse. Still, it’s amazing that it works at all. “What you have to remember is that we’re training a blob of carbon nanotubes in liquid crystals,” said Eléonore Vissol-Gaudin, who helped develop the system at Durham.
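For readers unfamiliar with evolutionary training, the loop below sketches the general idea: propose a slightly mutated set of configuration voltages, measure how well the system classifies, and keep the change if it did not hurt. Everything here is a stand-in. The `material_response` function is a hypothetical substitute for the physical film (a plain weighted sum), the data set is a toy, and the simple mutate-and-keep strategy is an assumption, not necessarily the algorithm the Durham group used.

```python
import random

def material_response(voltages, sample):
    """Hypothetical stand-in for the nanotube film: a plain weighted sum."""
    return sum(v * x for v, x in zip(voltages, sample))

def accuracy(voltages, data):
    """Fraction of samples whose thresholded response matches the label."""
    correct = 0
    for sample, label in data:
        predicted = 1 if material_response(voltages, sample) > 0 else 0
        correct += predicted == label
    return correct / len(data)

def make_toy_data(n, seed=1):
    """Toy two-input problem: label is 1 when the first input exceeds the second."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        data.append((x, 1 if x[0] > x[1] else 0))
    return data

def evolve(data, n_inputs, generations=200, sigma=0.3, seed=0):
    """Mutate the configuration voltages; keep a child only if it is no worse."""
    rng = random.Random(seed)
    parent = [rng.uniform(-1, 1) for _ in range(n_inputs)]
    best = accuracy(parent, data)
    history = [best]
    for _ in range(generations):
        child = [v + rng.gauss(0, sigma) for v in parent]
        score = accuracy(child, data)
        if score >= best:
            parent, best = child, score
        history.append(best)
    return parent, best, history

data = make_toy_data(50)
voltages, best, history = evolve(data, n_inputs=2)
print(f"accuracy went from {history[0]:.2f} to {best:.2f}")
```

In the real experiment the fitness measurement comes from the hardware itself, which is why the researchers needed a custom circuit board in the loop.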

Silicon Circuit Boards

Computer designers have long bemoaned the mismatch between how quickly and efficiently data moves within a processor and how much more slowly and wastefully it moves between processors. The problem, according to engineers at the University of California, Los Angeles, lies in the nature of chip packages and the printed circuit boards they connect with. Both chip packages and circuit boards are poor conductors of heat, so they limit how much power you can expend; they increase the energy needed to move a bit from one chip to another; and they slow computers down by adding latency. To be sure, industry has recognized a lot of these disadvantages and increasingly focuses on putting multiple chips together in the same package.

Puneet Gupta and his UCLA collaborators think computers would be much better if we got rid of both packages and circuit boards. They propose replacing the printed circuit board with a portion of silicon wafer. On such a “silicon integrated fabric,” unpackaged bare silicon chips could snuggle up within 100 micrometers of each other, connected by the same type of fine, dense interconnects found on ICs. That would limit latency and energy consumption and make for more compact systems.

If industry really did go in this direction, it would likely lead to a change in what kinds of ICs are made, Gupta contends. Silicon integrated fabric would favor breaking up systems-on-a-chip into small “chiplets” that perform the functions of the various cores of the SoC. That’s because the SoC’s close integration would no longer give much of an advantage in terms of latency and efficiency, and it’s cheaper to make smaller chips. What’s more, because silicon is better than printed circuit boards at conducting heat, you could run those processor cores at higher clock speeds without having to worry as much about the heat.

An abridged version of this post appears in the January 2018 print issue as “4 Strange New Ways to Compute.”

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.