Net Neutrality's Technical Troubles

The debate has centered on policy, law, and finance, as if the network itself were a given. It is not.

After years of fence-sitting, the U.S. Federal Communications Commission has come down strongly in favor of Net neutrality, which in some sense must mean the equal treatment of all Internet data packets. The FCC plans to vote on a proposal on 26 February.

What, however, does equal treatment mean? The Net needs to manage its diverse traffic, just as a city must manage the flow of pedestrians, bicycles, buses, delivery trucks, cars, and the occasional emergency vehicle on its streets. Any general rule must affect some kinds of traffic differently from the others, which is why you have car-friendly roads, bike lanes, and malls given over entirely to foot traffic.

Everyone who suffers from Internet traffic jams has a favorite villain. Streaming-video watchers blame carriers for throttling data flow to their phones or computers. Real-time gamers howl that delays and losses in data transmission hobble their competitive performance. This problem is so bad that Riot Games, maker of the popular League of Legends, plans to build a dedicated high-performance gaming network.

The stakes are rising with the promise of new Net applications, such as communication among autonomous vehicles. Also at stake is the future of wire-line telephone service, which FCC chairman Tom Wheeler wants to shift from aging landline networks to the Internet.

Yet so far the debate has centered on policy, law, and finance, as if the network itself were a given. It is not.

“There’s a lot of complexity here at a technical level that is absolutely lost in the policy conversations,” says Fred Baker, a distinguished engineering fellow at Cisco Systems and former chair of the Internet Engineering Task Force. Getting the technology right is crucial for the future of the Net.

The fundamental technical challenge is getting the Net to carry traffic that it was never meant to handle. Internet packet switching was designed for digital file transfers between computers, and it was later adapted for e-mail and Web pages. For these purposes the digital data does not have to be delivered at a specific rate or even in a specific order, so it can be chopped into packets that are routed over separate paths to be reassembled, in leisurely fashion, at their destinations.

By contrast, voice and video signals must come fast and in a specific sequence. Conversations become difficult if words or syllables go missing or are delayed by more than a couple of tenths of a second. Our eyes can tolerate a bit more variation in video than our ears can tolerate in voice; on the other hand, video needs much more bandwidth.

Voice and video can be converted into series of packets coded to identify their contents as requiring transmission at a regular rate. For telephony, the packet priority codes are designed to keep the conversation flowing without annoying jitter—variations in when the packets are received. Similar codes help keep video packets flowing at the proper rate.
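To make "jitter" concrete, here is a minimal Python sketch that scores a stream of packet arrival times by how far the gaps between arrivals stray from the sending interval. The 20-millisecond interval and the function name are illustrative assumptions, not figures from the sources quoted here, and the averaging is deliberately simpler than the running estimators that real voice software uses.

```python
def mean_jitter_ms(arrival_times_ms, send_interval_ms=20):
    """Average deviation of inter-arrival gaps from the nominal interval.

    arrival_times_ms: arrival timestamps in milliseconds, in order.
    send_interval_ms: assumed spacing at which the sender emits packets.
    """
    gaps = [later - earlier
            for earlier, later in zip(arrival_times_ms, arrival_times_ms[1:])]
    if not gaps:
        return 0.0  # fewer than two packets: nothing to compare
    return sum(abs(gap - send_interval_ms) for gap in gaps) / len(gaps)


steady = mean_jitter_ms([0, 20, 40, 60])   # evenly spaced arrivals
bumpy = mean_jitter_ms([0, 20, 50, 60])    # one packet held up by 10 ms
```

In the second stream a single late packet distorts two gaps, one stretched to 30 ms and the next squeezed to 10 ms, which is why a brief delay in the network can be heard as a stutter.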

In practice, these flow controls are not crucial in today’s fixed broadband networks, which generally have enough capacity to transmit voice and video. But mobile apps are a different story.

“Wireless has a lot of stuff moving around, so it needs much tighter management than wire line,” says a senior engineer at Nokia Networks, where he is responsible for innovation. (Like a number of sources cited here, he spoke anonymously, as carriers and equipment makers are reluctant to discuss proprietary network management techniques.)

To understand more, we will dig into the details of voice telephony, which despite its low bandwidth is particularly time sensitive.

Conventional telephony uses circuit switching to directly connect two phones to each other with an audio bandwidth of 300 to 3,400 hertz, good enough for reasonably intelligible speech. The modern landline phone system digitizes that audio signal into a stream of 64,000 bits per second, which can be combined with many other calls on the same carrier, a technique called time-division multiplexing. Cellphones are also circuit switched, but digital speech compression is used to fit more calls into the limited radio spectrum, which reduces voice quality. Both landline and cellular phones feed into the same backbone telephone network.
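The 64,000-bit-per-second figure can be checked with a little arithmetic. The sketch below assumes the standard telephone digitizing scheme of 8,000 samples per second at 8 bits per sample, and it illustrates time-division multiplexing with the 24-channel North American T1 frame. Neither detail is spelled out above, so treat both as assumptions introduced for illustration.

```python
# Assumed values from standard digital telephony, not stated in the article.
SAMPLE_RATE_HZ = 8_000   # a bit more than twice the 3,400 Hz upper band edge
BITS_PER_SAMPLE = 8


def voice_channel_bps(sample_rate_hz=SAMPLE_RATE_HZ,
                      bits_per_sample=BITS_PER_SAMPLE):
    """Bit rate of one digitized voice channel."""
    return sample_rate_hz * bits_per_sample


def tdm_line_bps(channels, framing_bits_per_frame,
                 sample_rate_hz=SAMPLE_RATE_HZ,
                 bits_per_sample=BITS_PER_SAMPLE):
    """Bit rate of a line that interleaves one sample per channel per frame."""
    bits_per_frame = channels * bits_per_sample + framing_bits_per_frame
    return bits_per_frame * sample_rate_hz


one_call = voice_channel_bps()   # 64,000 b/s
t1_line = tdm_line_bps(24, 1)    # 1,544,000 b/s for an assumed T1 frame
```

Each call gets a fixed slot in every frame whether or not anyone is speaking. That is what makes circuit switching predictable, and also what makes it less efficient than packets.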

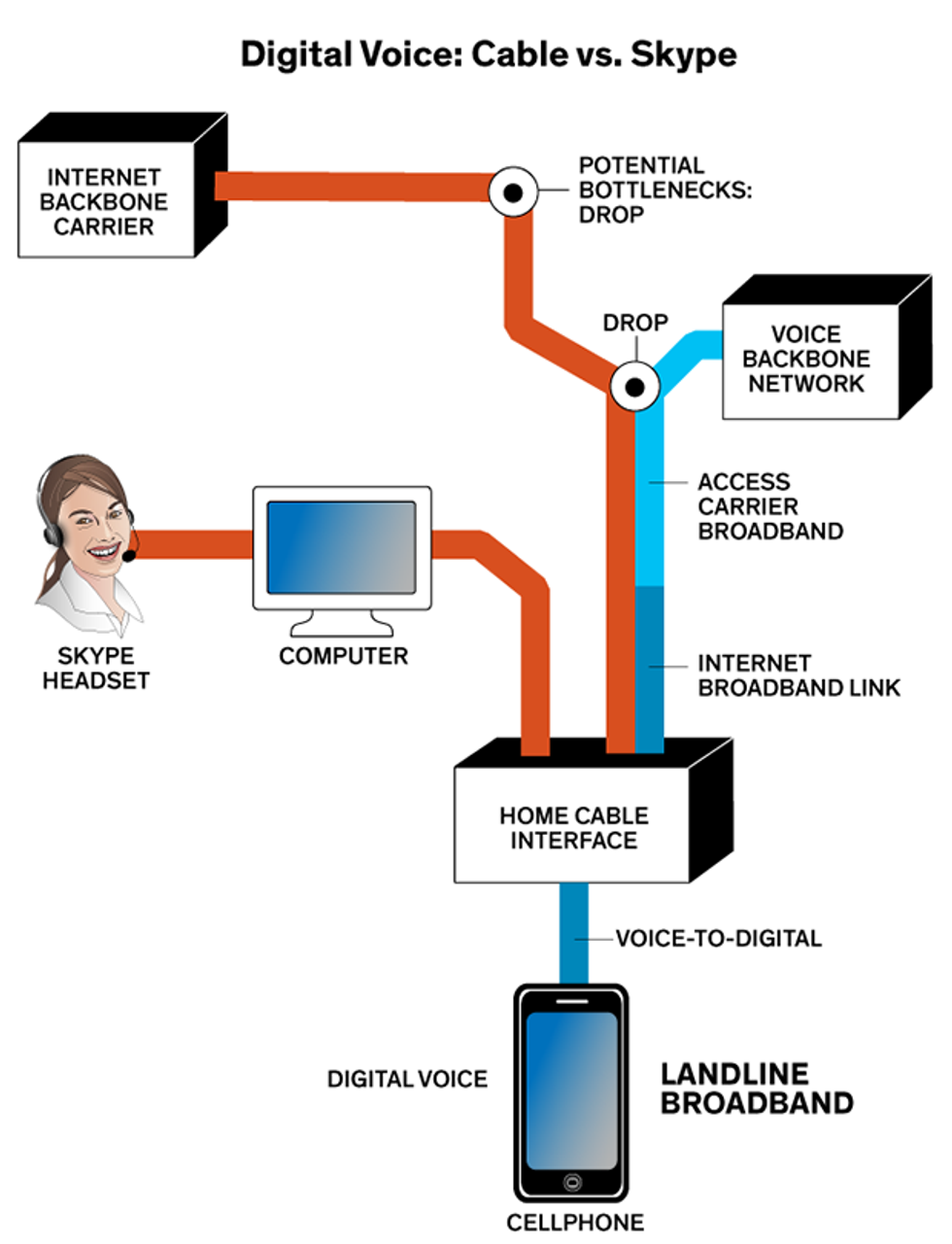

Carriers, with the FCC’s backing, propose to abandon the old twisted-pair copper wire lines, which have become a maintenance headache. To replace landlines, the carriers want to convert 64-kilobit-per-second voice channels into packets, which can be sent from home or office Internet connections over the Internet’s fiber-optic backbone, along with wireless conversations, more efficiently than over the existing backbone phone network.

Though this method does not provide a dedicated voice channel, it can still usually make the digital voice services act the same and sound as good as a landline. In fact, cable systems and Verizon’s FiOS fiber system already offer “digital voice” services, which send packets over the carrier’s broadband lines to the backbone phone network, where they are converted into 64-kb/s voice channels. That works well because fixed broadband access networks, like those operated by the cable systems and Verizon, usually have plenty of capacity for connecting to the backbone phone system.

However, calls on Skype and other Voice over IP (VoIP) services aren’t nearly so reliable, because they go over the carriers’ broadband lines to the Internet rather than to the backbone phone network. Internet traffic is more vulnerable to bottlenecks, where packets may suffer jitter, be delayed, or be lost entirely.

The human hearing system does not tolerate these flaws well because of its acute sense of timing. Twenty milliseconds of sudden silence can disturb a conversation, says speech researcher Harry Levitt, the CEO and chief scientist at Sense Synergy, in Bodega Bay, Calif. The longer the round-trip transit time, or latency, the more likely people are to talk over each other. Calls bounced off geosynchronous satellites never took off, in part because people couldn’t stand the quarter-to-half-second round-trip time. But such satellites work fine for data traffic.

The Internet discards packets that arrive after a maximum delay, and it can request retransmission of missing packets. That’s okay for Web pages and downloads, but real-time conversations can’t wait. Software may skip a missing packet or fill the gap by repeating the previous packet. That’s tolerable for vowels, which are long, even sounds, so a packet lost from the middle of “zoom” would go unnoticed. But consonants are short and sharp, so losing a packet at the end of “can’t” turns it into “can.” Severe congestion can cause whole sentences to vanish and make conversation impossible.
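The "repeat the previous packet" trick can be sketched in a few lines of Python. The function name, the use of short strings to stand in for audio frames, and the choice of silence when the very first frame is lost are all assumptions made for illustration. Real codecs use more elaborate concealment.

```python
def conceal_losses(frames, silence=""):
    """Fill each lost frame (None) by repeating the last frame received.

    frames: received audio frames in playout order, with None marking a loss.
    silence: what to play if a frame is lost before anything has arrived.
    """
    played = []
    last_received = None
    for frame in frames:
        if frame is None:
            played.append(last_received if last_received is not None else silence)
        else:
            played.append(frame)
            last_received = frame
    return played


# A frame lost from the middle of the long vowel in "zoom" is patched cleanly.
zoom = conceal_losses(["z", "oo", None, "m"])
# A frame lost at the end of "can't" cannot be recovered by repetition.
cant = conceal_losses(["ca", "n", None])
```

Repetition restores the vowel because the missing frame sounds like its neighbor. It cannot restore the final "t" because nothing that arrived earlier contains it.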

Such congestion is most serious on wireless networks, but it already affects fixed broadband and backbone networks as well. Consumers frustrated by long video-buffering delays sometimes blame cable companies for intentionally throttling streaming video from companies like Netflix. But in 2014 the Measurement Lab consortium reported that the real bottlenecks are at interconnections between Internet access providers and backbone networks. “I suspect there is very little throttling within the United States,” says Measurement Lab researcher Collin Anderson.

The study measured data rates of broadband traffic in major urban centers including Dallas, New York City, and Los Angeles over much of 2013 and 2014. It reported “sustained performance degradation” when traffic from AT&T, Comcast, CenturyLink, Time Warner Cable, and Verizon went through interconnections with three major backbone transit carriers: Cogent Communications, Level 3, and XO Communications. The degradation repeated when traffic passed between the same pairs of carriers.

Here’s how bad it got: For nearly a year, median download rates failed to reach 4 megabits per second for customers of Comcast, Time Warner, and Verizon who were connected to the test system through Cogent in New York City. The download rate varied daily, peaking in the low-traffic wee hours of the morning and crawling at peak usage times in the late afternoon and evening. In January 2014, peak-hour download rates for Comcast and Verizon customers dropped below the 0.5 Mb/s that the FCC considers the minimum rate usable for Web browsing. Then in late February 2014, the median download rate jumped above 12 Mb/s.

Those particular bottlenecks were low-capacity connections between Cogent and the carriers. But the root cause, says Anderson, “is not one culprit, not one transit provider, not one access ISP. This is a systemic issue.” It arises from business agreements that specify traffic volume and payment for service, the details of which are confidential. Those bottlenecks affect third-party VoIP services like Skype—which route their traffic through the Internet—but not carrier digital voice services, which connect to the backbone telephone network.

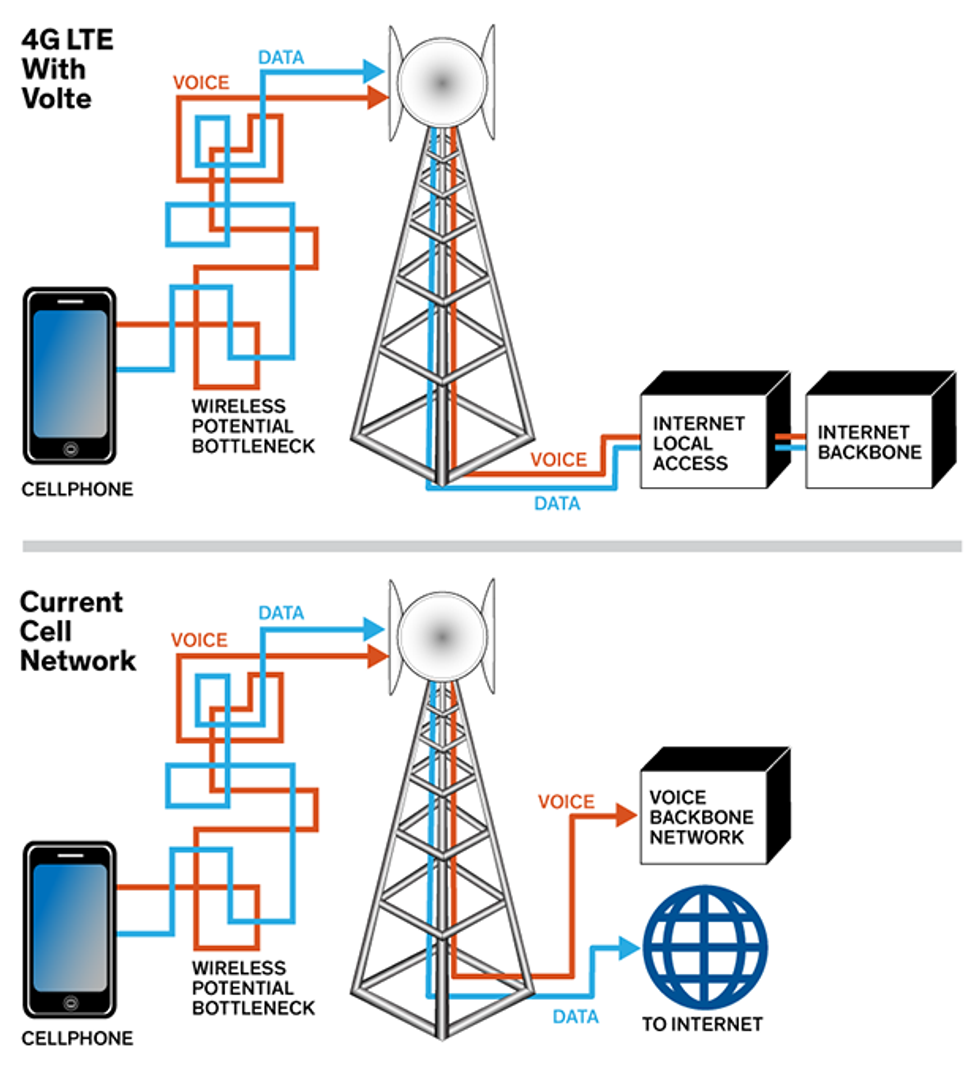

Wireless networks have their own internal congestion, which results from sharing a limited radio spectrum among many users. In 2G and 3G wireless systems, data and voice traffic are kept apart; these systems shunt the data over the Internet and the voice over a circuit-switched network linked to the backbone. The first 4G LTE phones sent data over the new LTE network but used the old 3G network for voice. Now carriers are phasing in a new generation of 4G LTE phones that use a protocol called Voice over LTE (VoLTE) that converts voice directly to packets for transmission on 4G networks along with data. VoLTE phones have an audio bandwidth of 50 to 7,000 Hz, twice that of conventional phones, which is supplied by a service called HD voice. VoLTE phones also use network management tools to manage the flow of time-sensitive packets.

Engineers decided that the best way to manage traffic flow was to label each packet with codes based on the time sensitivity of the data, so routers could use them to schedule transmission. Everyone called them priority codes, but the name wasn’t meant to imply that some packets were more important than others, only that they were more perishable, Baker says. It’s like the difference between a shipment of fresh fruit and one of preserves.

Here’s a set of such codes that the IEEE P802.1p task force defined in 1998 for local area networks. The highest priority values are for the most time-sensitive services, with the top two slots going to network management, followed by slots for voice packets, then video packets and other traffic.

Talk Is Not Cheap For Internet Traffic Managers

| PCP | Priority | Acronym | Traffic types |
| --- | --- | --- | --- |
| 1 | 0 (lowest) | BK | Background |
| 0 | 1 | BE | Best Effort |
| 2 | 2 | EE | Excellent Effort |
| 3 | 3 | CA | Critical Applications |
| 4 | 4 | VI | Video, < 100 ms latency and jitter |
| 5 | 5 | VO | Voice, < 10 ms latency and jitter |
| 6 | 6 | IC | Internetwork Control |
| 7 | 7 (highest) | NC | Network Control |

Source: https://standards.ieee.org/getieee802/download/802.1Q-2005.pdf, page 282, and https://en.wikipedia.org/wiki/IEEE_P802.1p
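How might a router act on such codes? One simple discipline is strict priority: always send the most perishable waiting packet first, and within a class send the oldest first. The Python sketch below takes the code-to-priority mapping from the table above. The class and method names are hypothetical, and real equipment may well use weighted or rate-limited schemes instead, so read it as an illustration rather than a description of any vendor's scheduler.

```python
import heapq
from itertools import count

# PCP value -> priority rank, copied from the table above.
# Note that PCP 0 (Best Effort) ranks above PCP 1 (Background).
PCP_TO_PRIORITY = {1: 0, 0: 1, 2: 2, 3: 3, 4: 4, 5: 5, 6: 6, 7: 7}


class StrictPriorityQueue:
    """Hypothetical output queue that always sends the highest rank first."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker: first in, first out per class

    def enqueue(self, pcp, payload):
        rank = PCP_TO_PRIORITY[pcp]
        # heapq is a min-heap, so the rank is negated to pop the highest first.
        heapq.heappush(self._heap, (-rank, next(self._arrival), payload))

    def dequeue(self):
        _, _, payload = heapq.heappop(self._heap)
        return payload

    def __len__(self):
        return len(self._heap)


queue = StrictPriorityQueue()
for pcp, payload in [(0, "web page"), (1, "backup"), (5, "voice 1"),
                     (4, "video"), (5, "voice 2")]:
    queue.enqueue(pcp, payload)
sent = [queue.dequeue() for _ in range(len(queue))]
```

Here the two voice packets leave first, in the order they arrived, followed by video, the Web page, and finally the backup. Under heavy load a strict scheme like this can starve the lowest classes, which is the concern that analysts raise about scheduled traffic crowding out everything else.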

Although these codes have been accepted as potentially useful, industry sources tell IEEE Spectrum that they haven’t been widely used for wire line, fiber broadband, or the backbone Internet. Those systems generally have adequate internal capacity.

The packet coding built into LTE and VoLTE is a different matter because that traffic goes over wireless networks, which do have limited internal capacity. The LTE packet coding standard reflects the mobile environment and the introduction of new services. It assigns a special priority code to real-time gaming traffic, which requires very fast transit times to keep competition even. It also divides video into two classes with distinct requirements. Real-time “conversational” services such as conferencing and videophone are similar to voice telephony in that delays degrade their usability. Buffered streaming video can better tolerate packet delays because it is not interactive.

Here are the LTE codes:

| QCI | Resource Type | Priority | Packet Delay Budget | Packet Error Loss Rate | Example Services |
| --- | --- | --- | --- | --- | --- |
| 1 | GBR | 2 | 100 ms | 10⁻² | Conversational Voice |
| 2 | GBR | 4 | 150 ms | 10⁻³ | Conversational Video (live streaming) |
| 3 | GBR | 3 | 50 ms | 10⁻³ | Real-Time Gaming |
| 4 | GBR | 5 | 300 ms | 10⁻⁶ | Non-Conversational Video (buffered streaming) |
| 5 | Non-GBR | 1 | 100 ms | 10⁻⁶ | IMS Signaling |
| 6 | Non-GBR | 6 | 300 ms | 10⁻⁶ | |
| 7 | Non-GBR | 7 | 100 ms | 10⁻³ | |
| 8 | Non-GBR | 8 | 300 ms | 10⁻⁶ | |
| 9 | Non-GBR | 9 | | | |
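The LTE table reads naturally as a lookup that a scheduler could consult. The Python sketch below encodes the rows whose delay and loss figures appear above and leaves out QCI 9, whose figures are not given. The names are hypothetical, and the ordering function assumes that a lower priority number is served first, which is how the table ranks IMS signaling at 1.

```python
from typing import NamedTuple


class QciClass(NamedTuple):
    resource_type: str     # "GBR" (guaranteed bit rate) or "Non-GBR"
    priority: int          # assumed: lower number is served first
    delay_budget_ms: int   # packet delay budget
    loss_rate: float       # packet error loss rate


# Rows 1 to 8 of the table above; QCI 9 is omitted because its delay
# budget and loss rate are not listed there.
QCI_TABLE = {
    1: QciClass("GBR", 2, 100, 1e-2),      # conversational voice
    2: QciClass("GBR", 4, 150, 1e-3),      # conversational video
    3: QciClass("GBR", 3, 50, 1e-3),       # real-time gaming
    4: QciClass("GBR", 5, 300, 1e-6),      # buffered streaming video
    5: QciClass("Non-GBR", 1, 100, 1e-6),  # IMS signaling
    6: QciClass("Non-GBR", 6, 300, 1e-6),
    7: QciClass("Non-GBR", 7, 100, 1e-3),
    8: QciClass("Non-GBR", 8, 300, 1e-6),
}


def within_delay_budget(qci, measured_delay_ms):
    """True if a measured packet delay fits the class's delay budget."""
    return measured_delay_ms <= QCI_TABLE[qci].delay_budget_ms


def service_order(qcis):
    """Order classes as a priority-based scheduler would serve them."""
    return sorted(qcis, key=lambda qci: QCI_TABLE[qci].priority)


gaming_ok = within_delay_budget(3, 60)     # 60 ms exceeds the 50 ms budget
streaming_ok = within_delay_budget(4, 60)  # 60 ms is well inside 300 ms
order = service_order([4, 1, 3, 5])
```

The same 60-millisecond delay that would spoil a game passes unnoticed in buffered video, which is the distinction the standard draws between its classes.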

These new Net management tools allowed carriers to improve their existing services and offer new ones. Carriers now boast of the good voice quality of VoLTE phones, after years of ignoring the poor sound of 2G and 3G phones. Premium-price services could follow, such as special channels for remote real-time control of Internet of Things devices.

Yet the differential treatment of packets worries advocates of Net neutrality, who fear that carriers could misuse those technologies to limit customer access to sites and services.

The central tenet of Net neutrality is that carriers should not discriminate among the services they carry. That way the cable companies, for instance, won’t be able to throttle Netflix just because it competes with their own video programming.

But Net neutrality means different things to different people. Some want equal treatment for all bits; others merely want equal treatment for all information providers, which would then be free to assign priorities to their own services. Still others say that carriers should be able to charge extra for premium services, but not to block or throttle access. Each approach has different implications for network management.

Treating all bits equally has become a popular mantra. It says just what it means, giving it a charming simplicity that leaves little wiggle room for companies trying to game the system. Championed by the nonprofit Electronic Frontier Foundation (EFF), the purists’ position seems to be gaining advocates.

Yet its philosophical clarity could come at the cost of telephone clarity. “LTE uses expedited forwarding services and [packet] priority to reduce jitter, which reduces voice quality,” says Cisco’s Baker. But that involves giving some bits priority over others. And all telephone traffic could be affected if the FCC pursued its plan to shift wire-line phone service to the Internet.

Some observers doubt that Net neutrality purists mean what they say. Yet Jeremy Gillula, a technologist for EFF, says “network operators shouldn’t be doing any sort of discrimination when it comes to managing their networks.” One reason is that EFF advocates the encryption of Internet traffic, and as Gillula points out, encrypted data can’t be examined to see whether it should get priority. Moreover, he adds, “by allowing some packets to be treated better than others, we’re closing off a universe of new ways of using the Internet that we haven’t even discovered yet, and resigning ourselves to accepting only what already exists.”

Other advocacy groups take a less restrictive approach. “We realize that the network needs management to provide the desired services,” says Danielle Kehl, a policy analyst for the New America Foundation’s Open Technology Institute. “The key is to make sure network management is not an excuse to violate Net neutrality.” Thus they would allow carriers to schedule conversational video packets differently than those carrying streaming video, which is less time sensitive. But they would not allow carriers to differentiate between streaming video packets from two different companies.

A key argument for this approach is the 2003 observation by Tim Wu, now a Columbia University law professor, that packet switching inherently discriminates against time-sensitive applications. That is, packet switching without Net management can’t prevent degradation of time-sensitive services on a busy network.

President Obama largely followed this lead in his November 2014 speech advocating Net neutrality. He did not say that all bits should be treated equally but specified four rules: no blocking, no throttling, no special treatment at interconnections, and no paid prioritization to speed content transmission.

The industry’s view of Net neutrality has another key difference—it should allow companies to offer premium-priced services. A Nokia policy paper says that users should be able to “communicate with any other individual or business and access the lawful content of their choice free from any blocking or throttling, except in the case of reasonable network management needs, which are applied to all traffic in a consistent manner.” But the paper adds that “fee-based differentiation” should be allowed for specialized services, as long as it is transparent.

Carriers like this approach because adding premium services would give them a financial incentive to improve their networks. Critics counter that offering an express lane to premium customers could relegate other users to the slow lane, particularly in busy wireless networks. A crucial issue to be resolved is who pays for premium service.

The big technology question in the debate over Net neutrality is which approach to packet management would give the best performance now and in the future.

“Networks just plain don’t work worth s--t if you literally treat every packet exactly the same as every other packet,” said one engineer, who asked not to be named. Cisco’s Baker put it more delicately, saying that equal treatment for all packets “would be setting the industry back 20 years.”

That’s particularly true of wireless networks, where high demand and limited bandwidth make network management crucial. Take away priority coding and you break VoLTE, the first technology to offer major improvements in cellular voice quality. And without VoLTE or a similar packet-management scheme, there’s no obvious way to realize the FCC’s tentative plan to move wire-line telephony onto the Internet without degrading voice quality to cellphone level.

Other proposed services also depend on priority coding. “If the Internet of Things develops, a lot of applications will require accurate real-time data to work well,” says Jeff Campbell, vice president of global policy and government affairs at Cisco. Telemedicine, teleoperation of remote devices, and real-time interaction among autonomous vehicles could be problematic if data packets could get stalled at peak congestion times.

Some analysts argue that packet scheduling could throttle other traffic by limiting the unscheduled bandwidth. But others counter that this should not be a problem in a well-designed network, one with adequate capacity and interconnections.

As undemocratic as packet scheduling may be, it seems the best technology available for delivering a mixture of time-sensitive and -insensitive services. “Some Net neutrality advocates are convinced that any kind of management will create bad results, but they’re not willing to accept that having no management will also have bad results,” says a senior Nokia engineer.

So, Internet purists take heed: Traffic management is as vital on the Internet as it is on streets and highways.

About the Author

Jeff Hecht writes for Laser Focus World and New Scientist and is the author of 11 books. His last feature for IEEE Spectrum was “Why Mobile Voice Quality Still Stinks—and How to Fix It,” in September 2014.