Lasers: Coming to a Theater Near You

Laser-based projection technology will make cinema screens bigger and brighter


The movie industry is among the world’s most important businesses. The Motion Picture Association of America says that films produced in the United States alone pulled in US $34.7 billion in worldwide box-office revenues in 2012. And yet the industry is beset by a dismaying trend: More and more, people are watching movies on their laptops, tablets, and smartphones. In wealthy countries, middle-class homes are now typically outfitted with huge flat-panel TVs and powerful surround-sound audio systems. The upshot is that for many people, particularly middle-aged ones, a trip to a movie theater is becoming a rare event, if not an increasingly distant memory.

The motion-picture industry is fighting back, though, with technology. There’s a huge push today to develop sophisticated systems that will ensure that the theater experience remains far superior to anything you can get at home or on a small screen. These systems will foster the continued growth of 3-D motion pictures, as well as a gradual migration to movies with a higher frame rate, higher spatial resolution, deeper contrast, and even a vastly greater color palette than today’s films.

As a result, the next three to five years will see the most significant and the fastest technological transition in the history of motion pictures. For the first time, industry leaders have agreed on the need to go beyond the familiar but optically limited characteristics of film stock and embrace the dazzling capabilities of all-digital motion pictures. At the end of this transition, the optical parameters of motion pictures will at last approach the capabilities of the human visual system.

This technological revolution will come from some radical transformations in motion-picture projectors. Since 2000, movie theaters have been switching over to digital projectors. But these projectors continue to rely on a 60-year-old technology: xenon electric-arc lamps, whose brightness fades over time. Even brand new, these lamps are not up to the demands of 3-D movies, especially on larger screens. The movie projectors of the future will replace these lightbulbs with lasers.

Actually, the revolution has already started. In September of 2012, Martin Scorsese’s film Hugo became the first commercial feature-length movie to be shown publicly with a laser projector. The film was an appropriate choice, because Hugo is partly an homage to the early history of cinema. Christie Digital Systems, the world’s largest supplier of digital cinema projectors, achieved this milestone at the International Broadcasting Convention in Amsterdam.

In the United States, Italy, and Australia, theater owners will start installing commercial laser-based projectors in the next several months. Some sales have already been announced. Christie, based in Cypress, Calif., has sold its first laser projector to the historic Seattle Cinerama Theater, which is owned by Paul Allen, Microsoft’s cofounder. IMAX, the leading large-screen theater organization, has announced several contracts to convert its 70-millimeter film projectors to laser digital. These systems are scheduled to be shipped in the second half of this year. NEC Display Solutions, which also makes cinema projectors, introduced a laser-based unit for smaller screens at the ShowEast conference this past October. Barco, based in Kortrijk, Belgium, and Sony, the other big players in the cinema-projector market, also intend to introduce laser-equipped models later this year or in 2015. A U.S. company, Laser Light Engines, is working on retrofit kits that will allow technicians to upgrade an existing digital projector by replacing the arc lamp with a laser illumination system. There are more than 100 000 digital theater projectors in the world.

In the larger scheme, these new movie projectors will operate within a globally standardized regime for the capture, encryption, distribution, and exhibition of digital movie content. For more than a decade, the world’s movie industries have sought an international standard to guide their transition from 35-mm film to digital. In 2002, the (then) seven major Hollywood studios formed a consortium, the Digital Cinema Initiative, for just this purpose. But such an agreement largely eluded them until 2007, when the first version of the standard, DC28, was finally announced. The most recent version of that standard specifies how current and future projectors will handle and show movies that are breathtakingly more vivid than today’s.

Want to know how these projectors work? Then sit back, relax, and let’s get on with the show….

A typical digital cinema projector costs between $40 000 and $80 000 and is a combination of video, audio, and security components. A typical 2-hour movie fits into a compressed data file of about 150 to 200 gigabytes, which contains the encrypted picture, sound, and other data. A motion-picture projector must do more than turn that data file into a series of color images beamed at the screen at 24 frames per second. It must also be capable of downloading the movie data, which is delivered in encrypted form from the studios to theaters. The most common method of delivery today is on a hard-disk drive, shipped by a courier such as FedEx. At the theater, the digital file is loaded onto a server. The server must store that movie file securely, and the projector must decrypt it and process it for display. The projector must also provide a synchronized output for a variety of multichannel digital sound systems, as well as for such features as subtitles and tracks for the hearing or visually impaired.
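As a rough check on what those file sizes imply, the sketch below computes the average data rate a server would have to sustain for a 2-hour feature. It assumes decimal gigabytes (1 GB = 10^9 bytes) and a constant rate over the whole running time; the function name is illustrative only and does not come from any cinema standard.

```python
# Average data rate implied by a 150 to 200 GB file for a 2-hour movie.
# Assumptions (not from the article): decimal gigabytes and a constant rate.

def average_bitrate_mbps(file_size_gb: float, runtime_hours: float) -> float:
    """Return the average data rate in megabits per second."""
    total_bits = file_size_gb * 1e9 * 8
    total_seconds = runtime_hours * 3600
    return total_bits / total_seconds / 1e6

low = average_bitrate_mbps(150, 2)
high = average_bitrate_mbps(200, 2)
print(f"average rate: {low:.0f} to {high:.0f} Mbit/s")
```

Under these assumptions the compressed stream averages somewhere between roughly 170 and 220 megabits per second.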

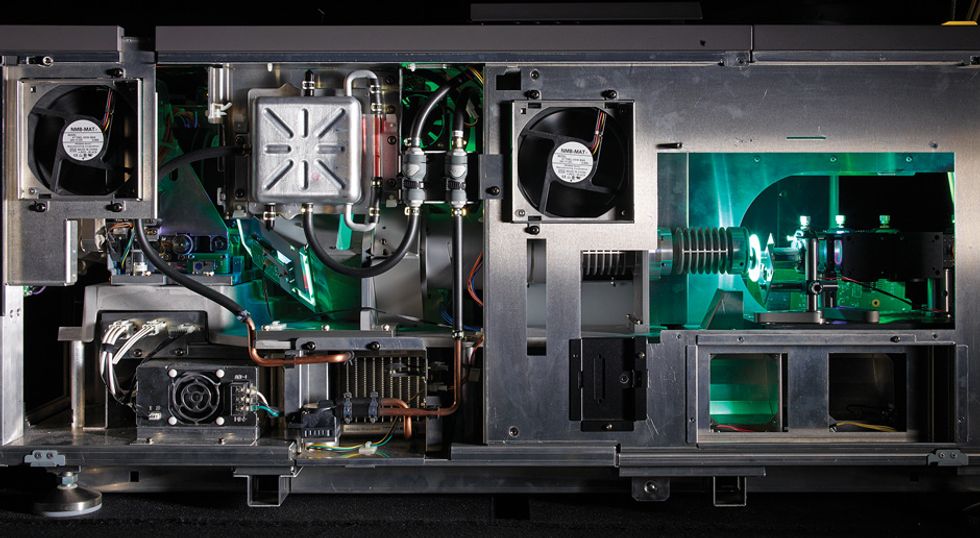

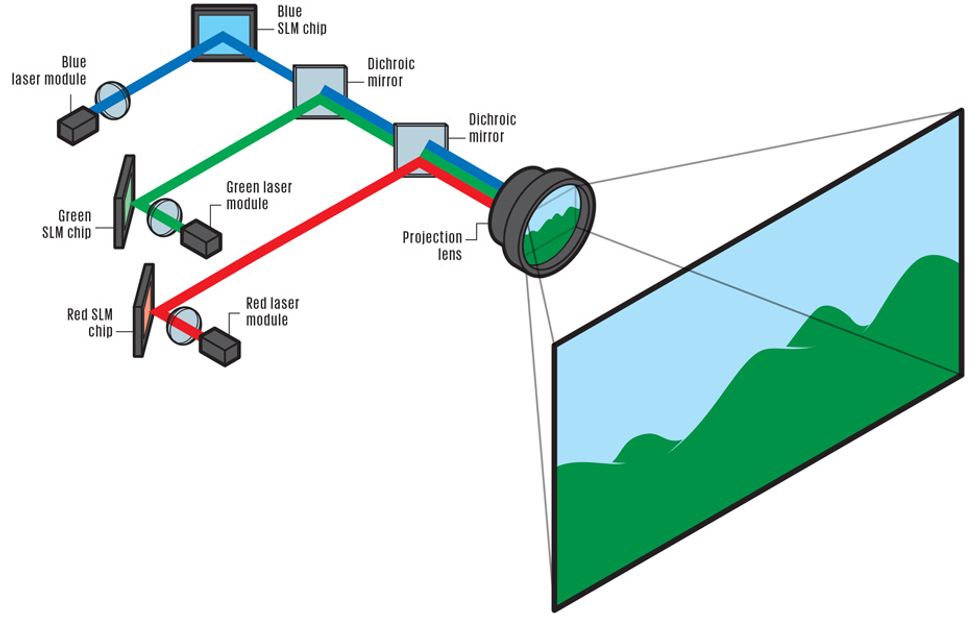

At the heart of a digital movie projector is something called the image block. It consists of a complex optical assembly of prisms and filters, as well as three spatial light-modulator chips, one each for the red, green, and blue image components. In operation, the optical assembly takes a bright beam of white light and separates it into red, green, and blue beams. Each colored beam has a spectral bandwidth of 40 to 60 nanometers and illuminates its respective chip, which determines, for each pixel of each frame, the amount of light that is sent on to the screen. There the three primary colors combine to make a full-color moving image.

These light-modulator chips are based on one of two competing technologies: Texas Instruments’ digital micromirror device (DMD) and Sony’s liquid crystal on silicon (LCoS). TI’s DMD chip has millions of tiny mirrors that tilt to deflect light, creating millions of tiny beams, one for each pixel in the image. The timing of their tilting controls the amount of light projected for each pixel during that frame. Sony’s LCoS, on the other hand, uses a liquid-crystal valve to adjust the amount of light that gets reflected off the chip for each pixel during the frame. That both light-modulator chips rely on reflection is important because it means that the backs of these chips can be cooled, with either a flowing liquid or air directed by a fan.
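To make the idea of controlling brightness through mirror timing concrete, here is a minimal sketch of binary-weighted pulse-width modulation. It is an illustration under stated assumptions, not a description of TI’s actual drive scheme: the frame period is split into slots whose lengths double with each bit, and the mirror is “on” during the slots whose bits are set in the pixel’s brightness code. The 12-bit depth and 1/24-second period are illustrative defaults, and the function names are hypothetical.

```python
# Sketch of binary-weighted pulse-width modulation for one mirror.
# Assumption: a real DMD controller is more elaborate; this only shows
# how on-time within a frame can encode a brightness level.

def bit_plane_schedule(level: int, bits: int = 12, frame_period: float = 1 / 24):
    """Return (bit index, on-duration in seconds) for each set bit of level."""
    if not 0 <= level < 2 ** bits:
        raise ValueError("level out of range for the given bit depth")
    slot = frame_period / (2 ** bits - 1)  # duration of the least significant bit
    return [(k, slot * 2 ** k) for k in range(bits) if (level >> k) & 1]

def on_fraction(level: int, bits: int = 12) -> float:
    """Fraction of the frame period during which the mirror sends light to the screen."""
    return level / (2 ** bits - 1)

for level in (0, 1, 2048, 4095):
    total_on = sum(duration for _, duration in bit_plane_schedule(level))
    print(f"level {level}: on for {total_on * 1000:.3f} ms, fraction {on_fraction(level):.4f}")
```

In this model a pixel at full brightness keeps its mirror on for the entire frame period, and a pixel at half the code range keeps it on for about half of it.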

Both the TI and Sony chips are capable of projecting colors at 4096 different brightness levels per pixel. This is called 12-bit precision, or 36 bits total for the three primary colors. Thus the total number of possible combinations of the three primary colors is 4096³, or 68.7 billion colors.
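The arithmetic behind that figure is easy to verify. The short sketch below derives the number of levels, the total bits per pixel, and the number of color combinations from the 12-bit precision stated above; the variable names are illustrative.

```python
# Color combinations available at 12 bits per primary color.
BITS_PER_PRIMARY = 12
PRIMARIES = 3  # red, green, blue

levels_per_primary = 2 ** BITS_PER_PRIMARY       # brightness levels per primary
bits_per_pixel = PRIMARIES * BITS_PER_PRIMARY    # total bits across the three primaries
color_combinations = levels_per_primary ** PRIMARIES

print(f"{levels_per_primary} levels, {bits_per_pixel} bits per pixel")
print(f"{color_combinations:,} combinations, about {color_combinations / 1e9:.1f} billion")
```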

TI’s chips are used in movie projectors from Barco, Christie Digital, and NEC, while Sony’s chips are used exclusively in Sony’s projectors. TI offers chip sets at two different resolution levels: 2048 by 1080 pixels (called 2K) and 4096 by 2160 pixels (4K). Sony offers only 4K.

So why the need for new technology? Basically, because of 3-D. Until the late 2000s, the various groups involved in the Digital Cinema Initiative—the studios, the theater owners, and the projector manufacturers—were moving methodically toward a system that would give them acceptable image quality and lower distribution costs. In 2005, the studios and manufacturers rushed two prototype 3-D systems to a small number of theaters, to test customer reactions and promote the new capability. These early systems, which were developed outside of the Digital Cinema Initiative, produced images that were dim in comparison with those of 2-D movies and weren’t very popular at first.

Movie Projectors, Today and Tomorrow

| Performance Metric | Present: Arc Lamps | Future: Lasers |
| --- | --- | --- |
| Projector brightness, lumens | Peak: 34 000; constant: 22 000 | 50 000 to 100 000 |
| Lifetime of light source, hours | Large lamp: 300 to 800; medium lamp: 1000 | 20 000 to 50 000 (5 to 12 years) |
| Dynamic range, brightest to darkest for each primary color | 2000:1 | 10 000:1 |
| Resolution, pixels per frame | 2 211 840 (“2K”) or 8 847 360 (“4K”) | Up to 33 177 600 (“8K”) |
| Frame rate, frames per second | 24 and 48 for 2K; > 24 for 4K | 60 and 120 possible for 2K; 48 for 4K |
| Color gamut, percentage of gamut visible to people | 40 percent | 60 percent |
| Efficiency, lumens per wall-plug watt | 4.8 | >10 |

But then, in December 2009, along came Avatar. James Cameron’s record-breaking film convincingly demonstrated 3-D cinema’s ability to dazzle audiences and command higher ticket prices. Finally, theater owners had a financial incentive to convert to digital. But the introduction of 3-D was ad hoc and uncontrolled. It was a kind of technological Wild West, with studios and 3-D-system suppliers engaging in shoot-outs that pitted the studios’ rollout schedules against the suppliers’ efforts to maintain standards of image quality and brightness. Money won over quality. After Avatar, these improvised 3-D systems became entrenched worldwide as “good enough” solutions.

The problem is that on anything except a very small screen, 3-D movies are simply too dim. One reason is that the high-pressure xenon arc lamps used in movie projectors today lose a lot of brightness over time. The brightest digital projectors can emit about 30 000 lumens with a brand new lamp, but this brightness drops to about 22 000 lm after about 200 hours and eventually levels off at something less than 15 000 lm within 800 hours.

What’s more, the 3-D technology itself cuts way down on the light. A 3-D projector shows the left and the right eye slightly different views of the same scene. And using a single projector, there are only two ways you can display different left-eye and right-eye frames: You can separate them in time or in space. Projectors incorporating TI chips do the former, and Sony projectors, the latter.

The typical 3-D system developed for TI chips projects alternate left-eye and right-eye images. To portray motion smoothly, the system actually flashes each left-eye and each right-eye image three times within the frame period, which is 1/24th of a second. The left-eye and right-eye images are projected with different polarizations. Basically, in a linearly polarized beam of light, the electromagnetic waves vibrate in a single plane. Different polarizations mean that the planes are at an angle with respect to each other, typically 90 degrees.

In a 3-D projector setup, the polarization is actually circular, but the phenomenon is no less useful for discriminating between two beams of light. In the projector, the alternating polarizations are accomplished with an electro-optical polarizing filter that switches from one polarization to the other with each image flash. The viewer, meanwhile, wears eyeglasses that have corresponding polarization filters, so only the appropriate image reaches each eye. The projector displays images at 24 times 6 (3 for the right eye and 3 for the left)—or 144—flashes per second.

In Sony’s approach, both views are projected at the same time and on the same screen. The Sony spatial light modulators can’t flash at a rate of 144 per second. So Sony subdivides the 4K chip into two different 2K subpanels, one for the left-eye images and one for the right. Thus in this 3-D system, the left- and right-eye images are 2K wide but only 0.8K tall (some pixels are lost to a guard band). The Sony 3-D projector has two lenses and a polarizing filter in front of each. The images projected to the left eye go through one lens and the right-eye images go through the other. Then the two images move onto the screen, where they are superimposed on each other. As with the TI system, the viewer wears glasses with corresponding polarized lenses.

Both approaches waste a lot of light—not just at the polarizing filters but also at the spatial light modulators. Consider a viewer watching a 3-D movie projected with TI chips: Each eye sees light slightly less than half the time. With the Sony system, the lamp inside the projector illuminates the entire spatial light-modulator chip, but the projector uses less than half the chip’s area to illuminate the screen—again, a roughly 50 percent light reduction.

All told, the losses at the projector, including the polarizing filters, are about 75 percent. Then, 20 percent of what’s left is lost at the viewer’s 3-D glasses. So the overall loss is approximately 80 percent. Even highly reflective screens can’t make up for that much loss. And remember, the brightness of the bulb declines with time.
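The loss figures compound rather than add, which is why 75 percent followed by 20 percent comes to 80 percent rather than 95. The sketch below works through that arithmetic and applies the result to the lamp outputs quoted earlier (30 000, 22 000, and under 15 000 lumens). Treating the losses as simple multiplicative fractions is an assumption made for illustration; the function name is hypothetical.

```python
# Light budget for a single-projector 3-D system, using the article's round numbers.
PROJECTOR_LOSS = 0.75  # fraction lost in the projector, polarizing filters included
GLASSES_LOSS = 0.20    # fraction of the remainder lost at the viewer's 3-D glasses

def fraction_reaching_eye(projector_loss: float = PROJECTOR_LOSS,
                          glasses_loss: float = GLASSES_LOSS) -> float:
    """Fraction of the lamp's light that survives both stages."""
    return (1 - projector_loss) * (1 - glasses_loss)

remaining = fraction_reaching_eye()
print(f"overall loss: {1 - remaining:.0%}")

# Lamp output when new, after about 200 hours, and near 800 hours.
for lamp_lumens in (30_000, 22_000, 15_000):
    print(f"{lamp_lumens} lm from the lamp leaves about {lamp_lumens * remaining:.0f} lm")
```

On these numbers, an aged lamp leaves the viewer with only a few thousand lumens’ worth of light, spread over the whole screen.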

So perhaps it’s no surprise that the popularity of 3-D has been declining, with the exception of the recent motion picture Gravity (which often kept the screen filled with the blackness of space, making dimness less of an issue).

Why not just make brighter xenon arc lamps? The short answer is: Because it wouldn’t help. Like any arc lamp, projector bulbs throw out light in all directions. And then this white light must be split into red, green, and blue bands, which are focused onto the light-modulator chips inside the projector. These chips are just 17.5 or 35 millimeters on the diagonal. To make an arc lamp brighter, the bulb itself must become physically larger, including the arc that produces the light. But increasing the size of the arc makes it harder to focus the light onto the chip. In practical terms, we’ve already hit the wall when it comes to getting more light from arc lamps through digital cinema projectors.

Lasers do not share this limitation. All of their power can be easily focused onto a small area, and essentially all of that power is used. That’s not the case with white light: After the three primary colored beams are separated from the arc light, the rest of the visible light spectrum, as well as a lot of infrared and ultraviolet radiation, is wasted. It is dumped within the projector, which must therefore dissipate a lot of heat.

Lasers have other advantages, too. They can be very efficient electrically, last 20 000 to 50 000 hours, have near-constant output, and are highly controllable. Also, because lasers are compact and do not get very hot, they can be packaged into a system small enough to replace the xenon lamp assembly in an existing projector.

These considerations have long intrigued projector makers. The laser technology itself goes back more than a decade, when U.S. and German companies developed laser light sources to go into flight simulators for pilot training. IEEE Life Fellow Peter Moulton and I conceived the company Laser Light Engines to commercialize the laser system Moulton developed for the U.S. Air Force Research Laboratory. This system used infrared laser diodes to pump a laser crystal, which produced another infrared laser beam. That beam went into a series of nonlinear optical crystals that converted the infrared into the red, green, and blue beams. Nowadays, the company uses an aggregation of semiconductor laser diodes to produce the red and blue laser beams. The red beam is generated with gallium arsenide–based diodes, with quantum wells of aluminum gallium indium phosphide. The blue beam comes from gallium nitride diodes, with indium gallium nitride quantum wells. The green comes from a high-powered, frequency-doubled, diode-pumped laser.

Early on, though, it was far from clear that lasers were the way forward. Their biggest problem was a shimmery image artifact called speckle. Instead of a patch of solid color with completely uniform brightness, early laser projectors produced images with sparkling surfaces that seemed to dance and move, especially if you moved your head. Speckle occurs because the surface roughness of most movie screens is on the order of a wavelength of visible light. So rays of laser light reflecting from the screen constructively and destructively interfere with one another.

Thus laser projectors seemed dead on arrival until Laser Light Engines, which is based in Salem, N.H., solved the speckle problem in 2010. It developed several solutions before settling on one: broadening the spectral bandwidth of the red, green, and blue beams enough to avoid speckle. For that it uses a proprietary nonlinear optical process, which effectively reduces the coherence of the laser beam, widening the bandwidth of the colored beams from about 0.1 nanometers to 10 to 30 nm.

After speckle was tackled, the challenges became more conventional: delivering as many as 600 watts of total laser power while achieving the desired figures for projector lifetime, energy efficiency, and cost. These goals are 50 000 hours or about 10 years, 10 white lumens per wall-plug watt, and an acquisition cost lower than that of a lamp-based projector, plus the many bulbs needed over its lifetime (these lamps cost about $1000 apiece). Laser Light Engines is close to achieving all of these figures; the last goal will be the most difficult, but I am confident that it will be achieved in three to five years.
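To see why replacement bulbs weigh so heavily in that cost comparison, the sketch below counts how many $1000 lamps a projector would consume over a 50 000-hour service life. The lamp lifetimes of 300, 800, and 1000 hours are taken from the comparison table earlier in the article; running each lamp to its full rated life and ignoring labor and downtime are simplifying assumptions, and the function names are illustrative.

```python
# Lamp replacements needed to match a 50 000-hour laser light source.
LAMP_PRICE_USD = 1000   # approximate price per xenon lamp, from the text
TARGET_HOURS = 50_000   # lifetime goal for the laser system

def lamps_needed(lamp_life_hours: int, service_hours: int = TARGET_HOURS) -> int:
    """Number of lamps consumed, rounding up to whole lamps."""
    return -(-service_hours // lamp_life_hours)  # integer ceiling division

def lamp_spend_usd(lamp_life_hours: int) -> int:
    """Total spent on lamps over the service life, in U.S. dollars."""
    return lamps_needed(lamp_life_hours) * LAMP_PRICE_USD

for life in (300, 800, 1000):
    print(f"{life}-hour lamps: {lamps_needed(life)} lamps, ${lamp_spend_usd(life):,}")
```

Even at the longest lamp life in the table, the bulbs alone would cost about as much as a typical digital projector over the same period.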

All the major projector makers have now joined Laser Light Engines in building laser-illumination systems. The brightest of these projectors can put out 70 000 lumens—several times as many as an arc-lamp projector. That brightness is more than enough to offset the losses caused by 3-D. This past November, Laser Light Engines and NEC demonstrated projectors with this new light source at the Technicolor facility in Burbank, Calif.

Higher brightness benefits not just 3-D but 2-D movies, too. The reason is that more brightness means a greater range of luminance, or brightness, from sunlight bright to deep black. But to take advantage of that wider range, software specialists will have to increase the number of levels of digital encoding between bright and dark pixels, to create a smooth ramp in brightness over that extended range. This increase in “bit depth” per pixel in turn will require huge increases in digital bandwidth, but it’ll be worth it. Today, movies don’t even come close to displaying the natural contrast that the human eye can see.

In the longer term, digital cinema and laser projectors will far transcend the boundaries of traditional film. Today’s arc-lamp-based projectors can produce only about 40 percent of the colors that most people are capable of perceiving, whereas laser-based systems can reproduce up to around 60 percent. Laser illumination can also project much more saturated colors because its red, green, and blue beams can have much narrower spectral bandwidth than filtered lamp light.

This vastly greater, brighter, and more saturated palette will translate into movies that are more vivid than anything possible today. But such an advance won’t come easily: More colors will require coordinated changes to global standards, and that won’t happen without a lot of arguing over how “wide” to go. More colors will require more bits, which will in turn require more bandwidth to and within the projector.

Higher contrast and color rendering aren’t the only factors that will increase the size of movie files. Movie directors are starting to use frame rates higher than 24 per second, the standard since around 1927. Peter Jackson’s The Hobbit: An Unexpected Journey (2012) was filmed at 48 frames per second, as was its recent sequel, The Hobbit: The Desolation of Smaug. A movie’s frame rate has a huge influence on how the viewer perceives motion, and it also increases perceived contrast and resolution. Higher frame rates allow the appearance of fast-moving objects to remain supersharp and can eliminate the jerky effects that can arise when the camera or the subject is moving. But as with higher dynamic range, there is a price to pay. Showing more frames per second increases not only the quantity of data in the movie file but also the data-transmission rates necessary to project that movie.

Yet another possibility for future movies is greater spatial resolution, or pixels per scene. This resolution is limited by spatial-light-modulator technology, either DMD or LCoS. Most theaters today show movies in 2K (2 211 840 pixels per frame). Some motion-picture executives would like to see a migration to 4K (8 847 360 pixels per frame).

However, as resolution doubles in each dimension, as it does in going from 2K to 4K, the uncompressed data required increases by a factor of 4. So, again, the refrain is “more bandwidth, please.”

What does all this mean? If you were to make a 2-hour motion picture with an extended color range in 4K and at 48 frames per second, the raw (uncompressed) movie file would occupy more than 15 terabytes. For comparison, the total amount of data in all of the e-mails sent in the United States in one year has been estimated to be 10.6 TB.
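A back-of-the-envelope calculation shows where a number of that size comes from. The sketch below multiplies pixels per frame, bits per pixel, frame rate, and running time for a 4K, 48-frame-per-second, 2-hour movie. The article does not say what bit depth “extended color range” implies, so the 14- and 16-bit cases are assumptions chosen for illustration alongside today’s 12 bits per primary; decimal terabytes (10^12 bytes) are also assumed, and the function name is hypothetical.

```python
# Raw (uncompressed) size of a 2-hour movie at 4K and 48 frames per second.
WIDTH_4K, HEIGHT_4K = 4096, 2160
WIDTH_2K, HEIGHT_2K = 2048, 1080
FRAME_RATE = 48            # frames per second
RUNTIME_SECONDS = 2 * 3600
PRIMARIES = 3              # red, green, blue

def raw_size_bytes(width: int, height: int, bits_per_primary: int,
                   frame_rate: int = FRAME_RATE,
                   seconds: int = RUNTIME_SECONDS) -> int:
    """Uncompressed picture data in bytes, ignoring audio and metadata."""
    bits_per_frame = width * height * PRIMARIES * bits_per_primary
    return bits_per_frame * frame_rate * seconds // 8

# Doubling the resolution in each dimension quadruples the pixel count.
pixel_ratio = (WIDTH_4K * HEIGHT_4K) // (WIDTH_2K * HEIGHT_2K)
print(f"4K has {pixel_ratio} times the pixels of 2K")

# 12 bits is today's precision; 14 and 16 bits are assumed extended depths.
for bits in (12, 14, 16):
    size_tb = raw_size_bytes(WIDTH_4K, HEIGHT_4K, bits) / 1e12
    print(f"{bits} bits per primary: {size_tb:.2f} TB")
```

At today’s 12 bits per primary the total comes to a little under 14 terabytes, so any increase in bit depth pushes the file past the 15-terabyte mark.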

All of these parameters—luminance (brightness), chrominance (color), frame rate, and even laser-pulse rate—will be independently adjustable on future movie projectors. This controllability will make the projector more versatile, enabling it to present many different levels of image quality. This processing, done on the fly in the projector, will ensure the highest image quality for a given data rate.

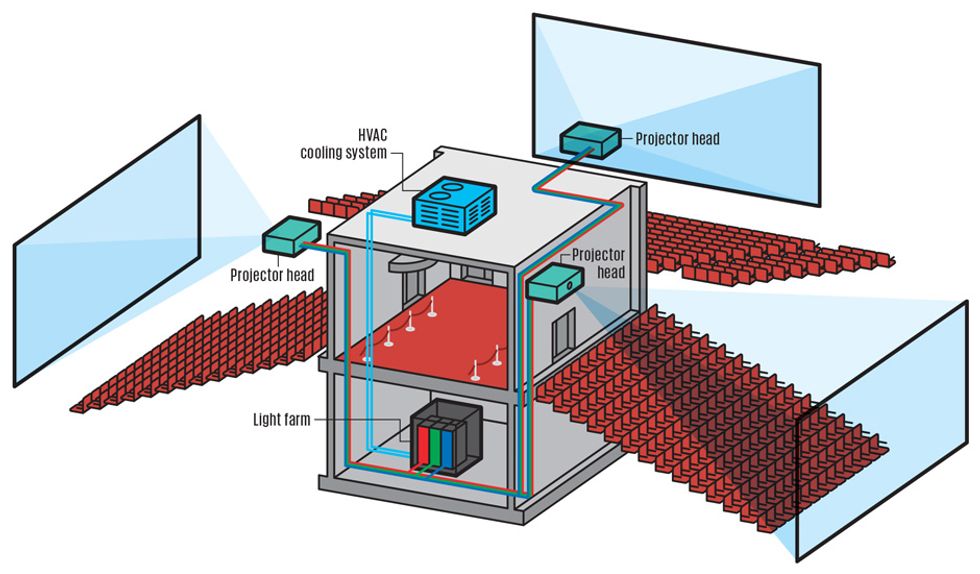

These developments will inevitably change movie theaters. Already, new “projector head” designs are available that contain no light source, only the spatial light modulators and the optics that create the moving image and focus it on the screen. Connected by a fiber-optic cable, these projector heads can be fed with laser light from sources that are tens of meters away. So it is likely that a future multiplex theater will have a centrally located “light farm” in which racks of high-power red, green, and blue lasers will be supported by efficient power supplies and liquid cooling from the cinema’s rooftop HVAC system.

Compact projector heads would hang from the ceiling of each auditorium in the theater. Red, green, and blue laser light from the light farm would be channeled into separate armored, fiber-optic cables that snake through the walls of the theater to the projection heads in the auditoriums. With such a scheme, there would be no need for a projection booth in the theater. The projectors and light farm could be controlled from remote network operating centers, greatly reducing the overhead costs of running a chain of theaters.

All of these improvements will require more engineering and also big, complex, and coordinated system-level developments. There will have to be more compromises and consensus. And the payoff is never certain: Some observers contend that a generation has already been trained to be content with the small screen.

I say that there will always be a market for premium, large-format, truly social entertainment. But we’d better get on with it. Cinema must evolve if it’s going to get us off the sofa and into the theater.

This article originally appeared in print as “Lasers Light up the Silver Screen.”

About the Author

Bill Beck is president of BTM Consulting and cofounder and former chairman of LIPA, the Laser Illuminated Projector Association, which promotes the adoption of laser-illuminated digital cinema projectors. In a long career as a contrarian entrepreneur, he started four businesses involving unlikely applications of fiber optics and lasers. He holds a BA degree from Dartmouth College, where he dabbled in film criticism, and an MBA from Rensselaer Polytechnic Institute.