23 June 2010—Earlier this month Intel and Imec, a European nanotech research center in Leuven, Belgium, announced that they are partnering to push supercomputers to the fastest, highest performance levels yet. According to Imec engineers, that could help solve incredibly complex research problems, such as simulating the human brain, predicting space weather, and modeling Earth’s climate and the global economy. The two partners see software innovation as key to making it all happen.

Today’s models for complex systems like space weather won’t run on even the most advanced supercomputers at the level of detail needed to fully understand those systems, says Imec engineer Wilfried Verachtert, who heads up the newly minted Flanders ExaScience Lab. The programs would chew through so much power while calculating that they’d literally burn up today’s supercomputer hardware, he says.

Two dozen computer programmers, hardware engineers, and scientists at the ExaScience Lab—a collaboration of Intel, Imec, five Flemish universities, and the Flemish Agency for Innovation by Science and Technology (IWT)—will design software to overcome the heat and reliability problems that tomorrow’s supercomputing hardware will face. They hope their code will power the next generation of supercomputers, which will run a billion concurrent threads on a million cores to carry out their calculations.

The best supercomputers today can do about 2 petaflops (2 quadrillion floating point operations per second). That’s about 100 000 times as many operations per second as an average PC, according to Verachtert. Exascale supercomputers will be 1000 times as fast as the petascale—the equivalent of 50 million of today’s PCs.
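The comparisons above can be checked with a few lines of arithmetic. The sketch below is only a back-of-envelope illustration: the variable names are made up for this example, and the speed of an "average PC" is not stated in the article but inferred from the 100 000-to-1 ratio attributed to Verachtert.

```python
# Back-of-envelope check of the speed comparisons quoted above.
PETAFLOP = 1e15  # floating point operations per second
EXAFLOP = 1e18

top_supercomputer_flops = 2 * PETAFLOP  # "about 2 petaflops"
pc_ratio = 100_000                      # supercomputer speed relative to an average PC

# Implied speed of an average PC (an inference, not a figure from the article).
pc_flops = top_supercomputer_flops / pc_ratio

# How many such PCs would match a 1-exaflop machine?
pcs_per_exaflop = EXAFLOP / pc_flops

# Ratio between the exascale and petascale units.
exa_vs_peta = EXAFLOP / PETAFLOP

print(f"Implied average PC: {pc_flops / 1e9:.0f} gigaflops")
print(f"PCs matching one exaflop: {pcs_per_exaflop / 1e6:.0f} million")
print(f"Exaflop vs. petaflop: {exa_vs_peta:.0f}x")
```

Under these assumptions an average PC works out to about 20 gigaflops, which is what makes one exaflop equal to roughly 50 million PCs. Note that the 1000-fold figure compares one exaflop with one petaflop; measured against a 2-petaflop machine the gain would be closer to 500-fold.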

They will be "the future of high-performance computing," according to Martin Curley, a senior principal engineer and director of Intel Labs Europe, who spoke at Imec’s 2010 Technology Forum, where the new lab initiative was announced. Using today’s most powerful supercomputer to model 100 years of global climate, Curley told local dignitaries and Imec partners, would take more than a month. With a supercomputer speeding along at an exascale pace, it would take mere seconds.

However, experts have expressed doubts that exascale computing is achievable in the near term. A report for the U.S. Defense Advanced Research Projects Agency (DARPA) by a group of supercomputer luminaries concluded that power consumption and other factors—including the manycore model itself—would make exascale computing impractical.

Indeed, the Flanders consortium leaders don’t see the path to exascale as easy—you can’t just stack up 50 million laptops. To make exascale computing viable, you need advances in hardware systems like memory technology, communication infrastructure, and hardware redundancy. Yet these alone are not enough, says Imec’s president and CEO, Luc Van den hove. It will also take advances in programming. Exascale computing will require new ways to program massively parallel machines and to compensate for the inevitable hardware failures that will result from the sheer amount of equipment needed. It will also require advances to properly manage the machine’s power consumption, which could run as high as hundreds or thousands of megawatts. If the heat is not handled correctly, the software could easily melt the very hardware it was designed to control.

The fastest supercomputer to date, the Oak Ridge National Laboratory’s Cray XT5 Jaguar, which ran 1.76 petaflops in the June 2010 Top500 supercomputer tests, consumes almost 7 megawatts running at top speed. But even the rosiest estimates of power consumption by future exascale computing systems come in at a few hundred megawatts, which would take a small nuclear power plant to run.

"That’s not acceptable or sustainable," says Intel’s Curley. The consortium wants future systems to run at 50 MW or less and believes that software will be the key to doing so.
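To see why the 50 MW goal is so demanding, it helps to work through the numbers. The following is a rough sketch, not a power model: it assumes power scales linearly with performance, treats Jaguar's "almost 7 megawatts" as exactly 7 MW, and takes an exascale machine to mean exactly 1 exaflop. All variable names are illustrative.

```python
# Rough power arithmetic for the figures quoted above.
# Assumption: power grows linearly with performance (a simplification).
jaguar_flops = 1.76e15   # 1.76 petaflops, June 2010 Top500
jaguar_power_w = 7e6     # "almost 7 megawatts", rounded up to 7 MW
exaflop = 1e18           # 1 exaflop
target_power_w = 50e6    # the consortium's 50 MW goal

# Energy efficiency of Jaguar, in floating point operations per second per watt.
jaguar_eff = jaguar_flops / jaguar_power_w

# Power an exaflop machine would draw at Jaguar's efficiency.
naive_power_w = exaflop / jaguar_eff

# Efficiency needed to hit the 50 MW target, and the implied improvement.
target_eff = exaflop / target_power_w
improvement = target_eff / jaguar_eff

print(f"Jaguar efficiency: {jaguar_eff / 1e6:.0f} megaflops per watt")
print(f"Exaflop at Jaguar efficiency: {naive_power_w / 1e6:.0f} MW")
print(f"Efficiency needed for 50 MW: {target_eff / 1e9:.0f} gigaflops per watt")
print(f"Required improvement: about {improvement:.0f}x")
```

Scaled up naively, Jaguar-class hardware would need roughly 4000 MW to reach an exaflop, in line with the "thousands of megawatts" worst case. Meeting the 50 MW target would take an efficiency gain of about 80-fold under these assumptions.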

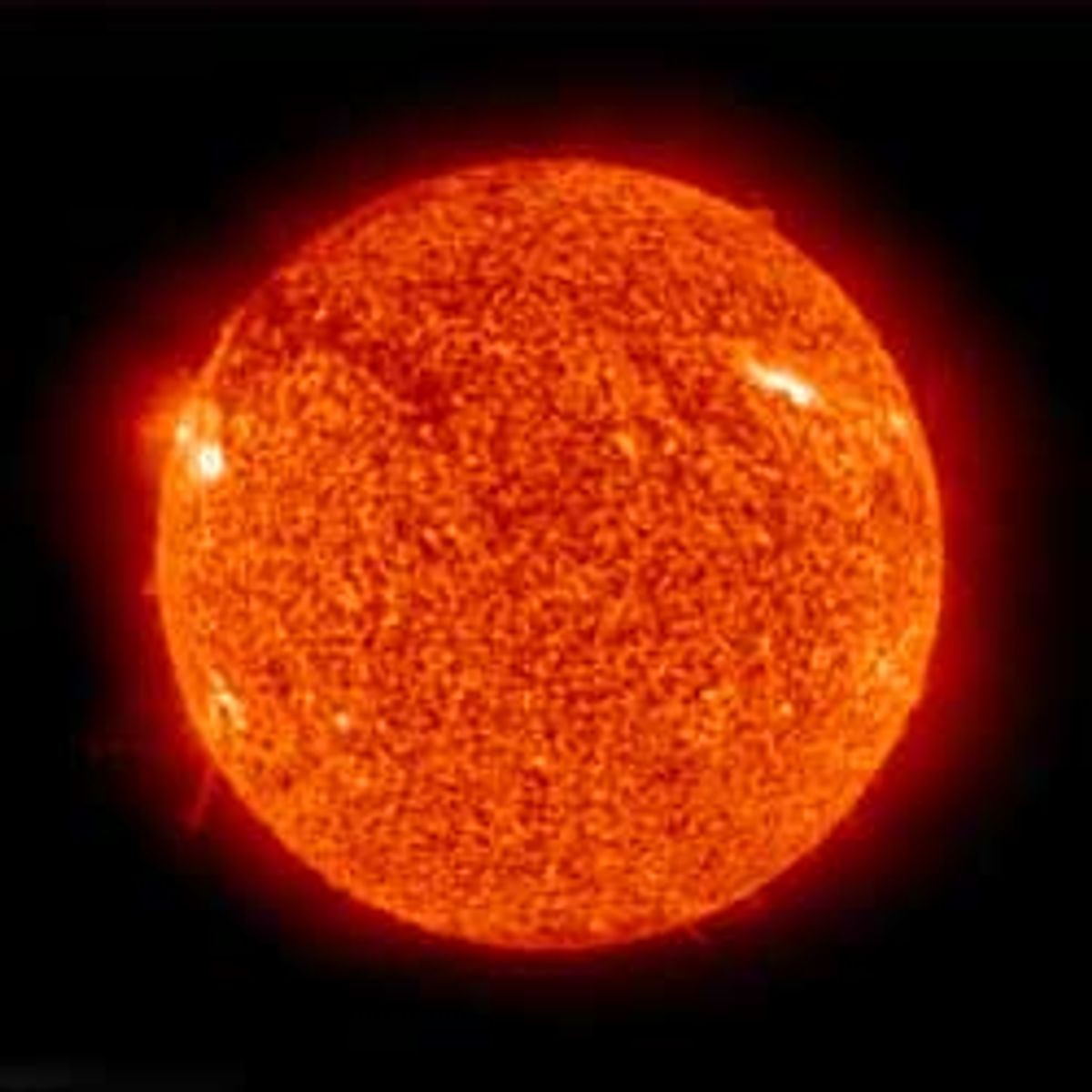

To design the new software, Verachtert’s group is first tackling simulations of space weather—the electromagnetic activity surrounding Earth’s atmosphere that comes from solar flares. Space weather is "a very good representation for the kind of applications that we will run on exascale systems," Verachtert says. "Compute-bound" problems like this, he says, are limited only by how fast the system can churn through its intensive calculations.

Space weather is also a good problem to model because it can cause damage here on Earth, Verachtert says. Magnetic waves from solar flares, which create the auroras near the poles, can also potentially knock out satellites, injure astronauts, and disrupt GPS capabilities and electric power networks on Earth. Predicting space weather could help prepare for such events or at least alert astronauts in orbit to go to a "safety bunker" if radiation is headed their way.

It would take a year to accurately predict space weather using today’s machines. But a year is far, far too slow to be useful. Scientists have "roughly two days after a solar flare to predict how it will hit Earth, if it will hit Earth," Verachtert says. We could be using those two days to "make simulations using big supercomputers," he says.

The ExaScience Lab engineers won’t be building a supercomputer themselves, Verachtert says. Instead they will focus on the programming side, designing the software to mitigate hardware failures in exascale computers. Though Imec’s Van den hove does not expect there to be an exascale supercomputer until 2018, the first demonstration of the lab’s software is planned for April 2011.