How do you say "robot" in sign language?

With the DARPA Robotics Challenge looming large on the horizon, it's easy to overlook robots that aren't taking part. One of them is Nino, a humanoid unveiled earlier this year by the Robotics Laboratory at National Taiwan University (NTU). Unlike the DARPA robots, Nino is unlikely to be performing tasks in dangerous situations any time soon. But this robot has a special skill: it is likely the first full-sized humanoid to demonstrate sign language.

"Sign language has a high degree of difficulty, requiring the use of both arms, hands, and fingers as well as facial expressions," said Professor Han-Pang Huang, who leads NTU's Robotics Lab. That, he added, makes sign language an ideal task for developing capable robot hands, which could also find applications in factories and other work that requires dexterous manipulation.

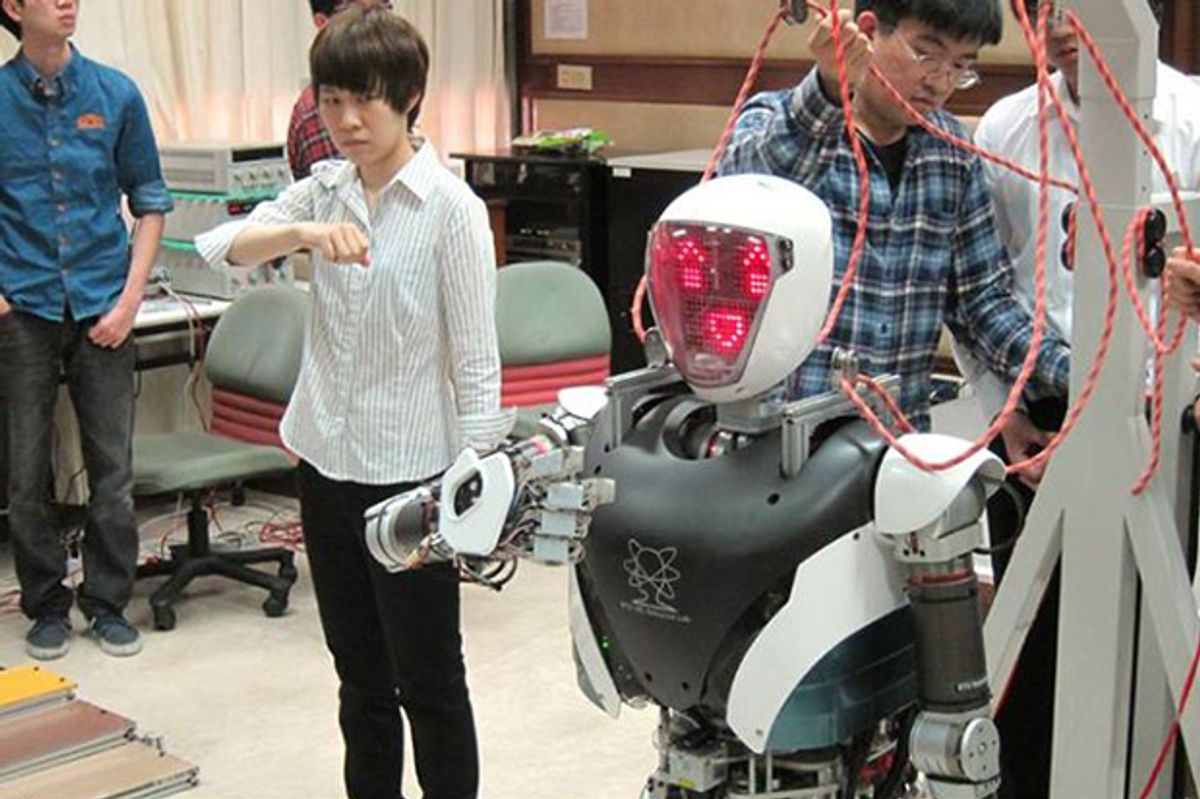

Nino stands 1.45 meters (4' 9") tall and weighs 68 kilograms (150 lbs). It has 52 degrees of freedom, including individual finger joints, and is equipped with 112 sensors that monitor the robot's motors, power usage, and temperature. An LED array in its head produces simple emoticon-style expressions such as blinking and lip movements. Nino can also walk, turn, and slowly climb up and down stairs and ramps.

One challenge for robot sign language is that five-fingered mechanical hands are rare, because they are usually considered somewhat redundant for the kinds of work robots are expected to do. Advanced robots such as Boston Dynamics' Atlas or Willow Garage's PR2 have only two or three fingers. Until its latest revision, even Honda's ASIMO (considered one of the world's most advanced humanoids) lacked hands with individually moving fingers. KAIST's Hubo robots are among the exceptions.

It took 20 researchers and students about three years to develop Nino, and they still have their work cut out for them. Although the robot can sign preprogrammed phrases, such as the self-introduction it gave at the press conference, it lacks the image-processing software needed to interpret human signing, which involves subtle movements that can be very quick. In the future, the team would like Nino to be able to hold a conversation with a person using only sign language.

And if you're wondering, this is how to say "robot" in American Sign Language.

Source: NTU Robotics Lab via RTI News