“I had to double check I wasn’t playing the wrong audio file.”

The first time Abe Davis coaxed intelligible speech from a silent video of a bag of crab chips (an impassioned recitation of “Mary Had a Little Lamb”) he could hardly believe it was possible. Davis is a Ph.D. candidate at MIT, and his group’s image processing algorithm can turn everyday objects into visual microphones—deciphering the tiny vibrations they undergo as captured on video.

The research, which will be presented at the computer graphics conference SIGGRAPH 2014 next week, builds on work from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) on capturing, on video, movements much smaller than a single pixel. By tracking how the pixels along an object’s edges fluctuate in color, the group’s algorithm can measure the object’s minuscule movements (and even magnify a wine glass’s oscillations when a tone is played or visually reveal a heartbeat under the skin).
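To see why a color fluctuation at an edge can reveal motion smaller than a pixel, consider a toy model. This is only an illustrative sketch, not the group's algorithm: it assumes a single pixel that straddles a sharp edge between a dark background and a bright object, and the function names and the half-covered starting position are made up for the example.

```python
def edge_pixel_brightness(shift_px, dark=0.0, bright=1.0):
    """Brightness of one pixel straddling an edge (toy model, an assumption).

    The pixel spans one unit of width. At rest the edge sits at its center,
    so the bright object covers half of it. Moving the edge by `shift_px`
    (a fraction of a pixel) changes how much of the pixel is bright.
    """
    coverage = min(max(0.5 + shift_px, 0.0), 1.0)
    return dark + (bright - dark) * coverage


def estimate_shift_px(brightness, dark=0.0, bright=1.0):
    """Invert the toy model: recover the sub-pixel shift from brightness."""
    coverage = (brightness - dark) / (bright - dark)
    return coverage - 0.5


# An edge that moves by 1/100 of a pixel changes the pixel's brightness by
# 1 percent of the dark-to-bright range, which is enough to recover the motion.
observed = edge_pixel_brightness(0.01)
recovered = estimate_shift_px(observed)
```

In this simplified picture, the edge never has to cross into a neighboring pixel for its movement to register; the slight change in the mixed color is the measurement.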

“It was clear for us quickly that there’s a strong relation between sound and visual motion,” says Michael Rubinstein, a postdoc at Microsoft Research who worked on this and the earlier CSAIL research. “We had this crazy idea: can we actually use videos to recover sound?”

The first speech recovered from the chip bag can be played below. (Future recordings were much clearer, but probably less funny.)

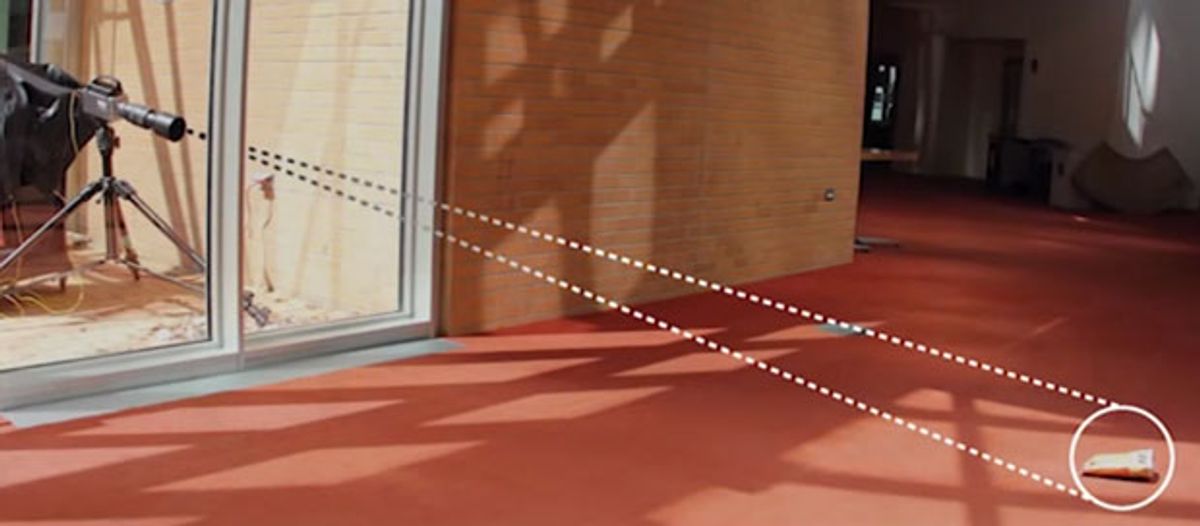

According to Davis, previous ways to recover sound remotely require more than just a video camera. By shining a laser on a vibrating object and measuring how the light scatters or how its phase changes, other researchers have been able to pull out detailed data about the sound.

The team’s processing algorithm lets them take a new tack: a completely passive recovery of the sound. By recording objects’ movements on high-frame-rate video, in ambient lighting—no laser needed—they are able to translate the vibrations caused by speech and music back to sound waves, with only a little bit of noise.

The group found that a number of factors affected how well the sound could be captured. Low-frequency sounds were easier to recover than high-frequency ones, which needed a faster frame rate, and smaller movements required a stronger zoom to catch. Low-frame-rate footage from an ordinary digital camera posed a particular challenge because it samples the vibrations too slowly to capture most of the signal. But because a “rolling-shutter” camera records each frame one row at a time rather than all at once, it could be made to sample faster than its frame rate and gather enough detail to recover comprehensible sounds.
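The link between frame rate and recoverable pitch follows from standard sampling theory: a signal sampled at a given rate can only represent frequencies up to half that rate, and anything higher shows up as a false, lower tone. The sketch below works through that arithmetic. The rolling-shutter function is a rough assumption for illustration (it treats every sensor row as one evenly spaced time sample and ignores any gap between frames), not a description of how the researchers processed their footage, and the 60 fps and 1,080-row figures are hypothetical.

```python
def nyquist_limit_hz(sample_rate_hz):
    """Highest frequency that can be represented at a given sampling rate."""
    return sample_rate_hz / 2.0


def apparent_frequency_hz(tone_hz, sample_rate_hz):
    """Frequency a pure tone appears to have after sampling (aliasing)."""
    nearest_multiple = round(tone_hz / sample_rate_hz) * sample_rate_hz
    return abs(tone_hz - nearest_multiple)


def rolling_shutter_row_rate_hz(frame_rate_hz, rows_per_frame):
    """Row samples per second, ASSUMING rows are read out evenly across the
    whole frame period (a simplification; real sensors pause between frames)."""
    return frame_rate_hz * rows_per_frame


# Hypothetical consumer camera: 60 frames per second, 1080 rows per frame.
frame_limit = nyquist_limit_hz(60)             # frames alone reach 30 Hz
aliased_a440 = apparent_frequency_hz(440, 60)  # a 440 Hz note shows up at 20 Hz
row_rate = rolling_shutter_row_rate_hz(60, 1080)
row_limit = nyquist_limit_hz(row_rate)         # rows reach far into speech range
```

Under these assumptions, whole frames at 60 fps top out at 30 Hz, well below the pitch of a human voice, while treating each row as its own sample pushes the limit into the tens of kilohertz.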

And then there were the test items themselves: “We asked ourselves what objects are going to be good visual microphones,” says Rubinstein. “It turns out those are objects like paper bags, chip bags, and aluminum foil that are very light and kind of rigid.” On those types of objects, the vibrations move the entire object, so there is less noise to filter out.

The group tested an eclectic selection of materials, including a bag of chips (excellent), a soda can (surprisingly mediocre), and a potted plant (average). They were even able to recreate music from footage of the vibrating earbuds that were playing it. The best material of all was the thin foil wrapper on a Lindt chocolate bar Davis had been snacking on.

The worst was a brick, which they intended to use for measuring experimental error. Even that “did better than we expected it to do,” says Davis.

The researchers are learning how to predict how well any given combination of object and camera will work as a visual microphone, and are even looking into analyzing “found” footage, although the compression algorithms most video goes through eliminate the slight variations they need to analyze. The technique could be helpful anywhere sound can’t carry, or even to identify the composition of the objects themselves, and they plan to release the code behind it on the project’s website.

“Most people, their mind immediately goes to espionage and spying,” says Davis. “But I think that probably the most important applications of this are yet to be found. We just discovered the signal is there, and now we can start asking what to do with it.”

“What we’re doing pushes the boundary of what you can do with just cameras,” adds Rubinstein.
