Will Today’s Digital Movies Exist in 100 Years?

We need new storage technologies, standards, and practices to preserve modern cinema

For now, you don’t need to worry about the movies in Hollywood’s vaults disappearing, because the major movie studios go to great lengths to protect their treasures. They can do this efficiently and inexpensively for one reason: Photochemical film is cheap and easy to preserve. All you need is a cold room that’s not too humid and not too dry, and the chemically processed film will last for 100 years or longer. Film archivists know this because many works from the earliest days of motion pictures, produced in the first decade of the 20th century or even before, are still around. Centuries from now, it’ll be easy enough to retrieve what’s stored on such films, a process that requires little more than a light source and a lens, even if information about how exactly those movies were made is lost.

But times are changing: The advent of digital cinematography means that most movies these days are not shot on film but recorded as bits and bytes, using specialized electronic motion-picture cameras. The resolution and dynamic range of the digital images can be as good as or better than those captured on 35-millimeter film, so there’s generally no loss of quality. Indeed, the changeover from film to digital offers many economic, environmental, and practical benefits. That’s why more than 80 percent of movie theaters in the United States no longer handle film: They use digital projectors and digital playback systems exclusively. [For more on digital projection technology, see "Lasers: Coming to a Theater Near You," in this issue.]

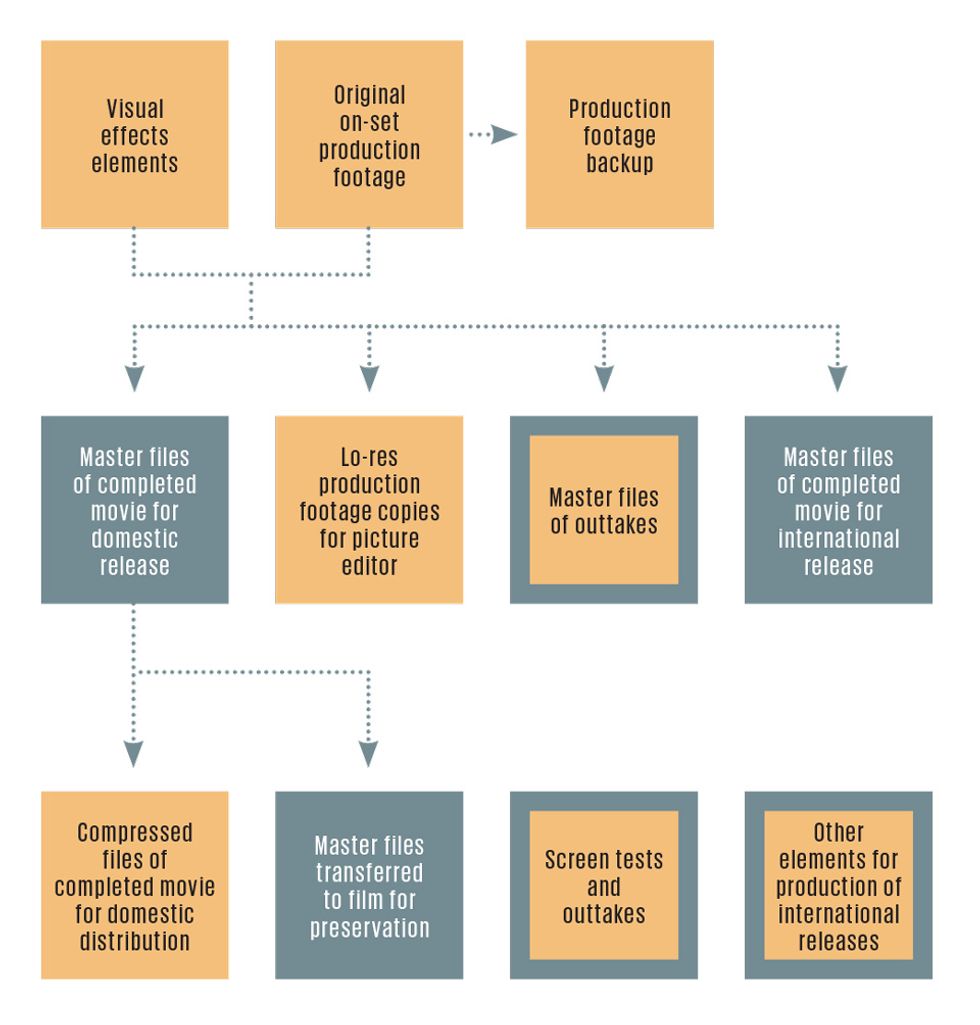

MAKING A MOVIE, STEP BY STEP

For the most part, this transition has been a boon to moviemakers and viewers alike. But as “born digital” productions proliferate, a huge new headache emerges: preservation. Digital movies are not nearly as easy to archive for the long term as good old film. In 2007, the Science and Technology Council of the Academy of Motion Picture Arts and Sciences estimated that the annual cost of preserving an 8.3-terabyte digital master is about US $12 000, more than 10 times what it costs to preserve a traditional film master. (This figure is based on an annual cost of $500 per terabyte for each of three fully managed copies. While this cost has declined since the report was published, it remains significant.) And that doesn’t include the expense of preserving alternate versions or source material for a movie, or the ongoing costs of keeping the digital work accessible as file formats, hardware, and software change over time.
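The Academy’s estimate is simple arithmetic: master size times the per-terabyte rate times the number of copies. The short Python sketch below reproduces it, assuming (as the $12 000 total implies) that the $500 rate applies per terabyte, per copy, per year; the constant and function names are illustrative only.

```python
# Reproduce the 2007 Academy estimate for preserving one digital master.
# Assumption: the $500 rate is per terabyte, per copy, per year.
MASTER_SIZE_TB = 8.3
RATE_USD_PER_TB_PER_COPY_YEAR = 500
COPIES = 3


def annual_cost(size_tb: float, rate: float, copies: int) -> float:
    """Yearly cost of keeping `copies` fully managed copies of a master."""
    return size_tb * rate * copies


cost = annual_cost(MASTER_SIZE_TB, RATE_USD_PER_TB_PER_COPY_YEAR, COPIES)
print(f"Estimated annual cost: ${cost:,.0f}")  # Estimated annual cost: $12,450
```

Changing the copy count or the rate shows how directly the bill scales with redundancy, and this covers only the finished master, not the alternate versions and source material mentioned above.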

If you take pictures with a digital camera or record video on a smartphone or store files on a laptop, you face the same problem, albeit on a smaller scale. Every few years, you have to transfer all that digital content to the latest recording medium or risk losing it altogether. You might attempt to free yourself of that hassle by archiving your digital collections in the cloud, but keep in mind that no cloud service can be relied on to keep your data alive forever. When the photo-storage site Digital Railroad shut down abruptly in 2008, for instance, it gave users just 24 hours to download their images before pulling the plug. Some professional photographers and photo agencies lost years’ worth of work.

Even though it generates petabytes of new digital footage each year, the motion-picture industry still has no better solution than you have to the looming problem of data extinction.

The phenomenon of digital movies is recent, but not exactly new. The Academy of Motion Picture Arts and Sciences (the group responsible for the Academy Awards) began paying serious attention to digital cinema technologies back in 2003, when it formed the Science and Technology Council (which I manage) and charged it with, among other things, monitoring technology developments in the film industry.

Even then, the writing was on the wall: Digital technologies would soon eclipse film as the dominant means of movie production, distribution, and projection. But what about preservation? In 2005, the academy sponsored a Digital Motion Picture Archiving Summit, which brought together specialists from the major Hollywood studios and U.S. film archives, including the U.S. Library of Congress, to discuss the long-term preservation of these digital works.

The summit in turn led to the academy’s 2007 report, The Digital Dilemma: Strategic Issues in Archiving and Accessing Digital Motion Picture Materials, which looked at preservation issues not just in the movie world but also in government, medicine, science, and the oil-and-gas industry. Not surprisingly, the report concluded that each of these enterprises faces similar issues with digital data, and nobody has yet come up with a good long-term solution. A follow-on report in 2012 examined digital archiving for independent filmmakers, documentarians, and nonprofit archivists, who are in even worse shape because they lack the financial wherewithal and organized infrastructure of the major studios.

This is not the first movie-preservation crisis. Before the industry switched to fire-resistant “safety film” in the 1950s, motion pictures were shot on highly flammable nitrate-based film, which generates its own oxygen while burning and is therefore nearly impossible to extinguish once ignited. Early movie producers didn’t really care about archiving either. Stories abound of nitrate-film bonfires on studio lots because movies had no perceived long-term value to justify the cost of storing them. According to researchers at the Library of Congress, fewer than half of the feature films made in the United States before 1950, and fewer than 20 percent of those from the 1920s, still survive. The early films that did survive did so largely through the efforts of private and institutional collectors.

Attitudes changed with the introduction of home video—VHS and Betamax, followed by DVD, Blu-ray, and now Internet streaming—which gave movie studios a strong economic incentive to make their content last. Older material could be sold to new audiences every few years, and titles with a narrower appeal could remain profitable. And so the major Hollywood studios began investing heavily in archiving facilities, on their studio lots and in deep, underground temperature- and humidity-stable caverns throughout the United States. These days the studios tend to save everything, including various versions of finished movies, as well as the original camera negative film, original camera data (for digitally captured movies), original audio recordings, still photographs taken on set, notated scripts, and more.

As the days of analog film draw to a close, moviemakers and archivists now must confront a host of new issues. Most of them spring from the fact that the digital information stored in a master copy of a movie represents the movie only indirectly. Many layers—the storage medium, the hardware and software interfaces between that medium and the computer used, that computer’s operating system and its file system, the digital image and sound file formats, the imaging and sound application software—all need to work in concert to turn the 0s and 1s into what we think of as a movie.

This complexity isn’t a big deal over short time horizons, but for archival purposes—considered to be 100 years or longer for motion pictures and “the life of the Republic” for records deposited in the U.S. National Archives—it becomes a real concern. Most computer hardware is designed for a useful life of no more than three to five years, and most software—operating systems and applications alike—is superseded by upgraded versions every few years. Remember 8-inch and 5.25-inch floppy disks? There is still some legacy hardware and software around that can read them, but for practical purposes, those dinosaurs are extinct.

Also, digital storage requires active management. That is, the data must be checked regularly for errors, multiple copies must be maintained in different locations, technical staff must continually refresh their skills to keep abreast of new technologies and techniques, and the electricity bill always needs to be paid. Digital data, unlike film, cannot survive long periods of benign neglect.
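One common way to check data “regularly for errors” is a fixity check: record a cryptographic hash of every file when it enters the archive, then periodically recompute the hashes and compare. The following is a minimal sketch using only Python’s standard library. The function names are hypothetical, SHA-256 is one reasonable choice of hash rather than a prescribed one, and a real archive would also need to store the manifest itself redundantly.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large media files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> Dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 hash."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(root: Path, manifest: Dict[str, str]) -> List[str]:
    """Recompute hashes and report files that are missing or have changed."""
    problems = []
    for rel_path, expected in sorted(manifest.items()):
        path = root / rel_path
        if not path.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_of(path) != expected:
            problems.append(f"corrupted: {rel_path}")
    return problems


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "reel1.dpx").write_bytes(b"frame data")  # stand-in for real footage
        manifest = build_manifest(root)
        print(verify_manifest(root, manifest))  # []
        (root / "reel1.dpx").write_bytes(b"frame dat4")  # simulate silent corruption
        print(verify_manifest(root, manifest))  # ['corrupted: reel1.dpx']
```

Detecting a bad file is only half the job: repairing it requires a known-good copy somewhere else, which is why fixity checks go hand in hand with keeping multiple copies.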

The rapid obsolescence of digital technology has forced all of us who use it into a continual process of data migration—regularly copying data collections from old media and file formats to new. You can do that fairly easily when you replace your old laptop with a new one, but the process does not scale up well to collections measured in tera- or petabytes. Copying and reformatting masses of data that size quickly becomes impractical.

To give a crude example, just moving a petabyte of data (that’s 1 billion megabytes) over a gigabit Ethernet connection takes upwards of 90 days. Now, a single feature-length digital motion picture generates upwards of 2 petabytes, the equivalent of almost half a million DVDs. And worldwide, about 7000 new feature films are released each year. So it’s not hard to see why data migration alone isn’t a long-term solution.
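These figures can be checked with back-of-the-envelope arithmetic. The sketch below assumes decimal units (1 PB = 10^15 bytes), a link running at its full nominal 1 Gb/s with no protocol overhead, and 4.7 GB single-layer DVDs. The migration count is a hypothetical illustration that assumes one full migration per 3-to-5-year hardware generation over a 100-year archival horizon.

```python
# Back-of-the-envelope numbers for moving archival-scale data.
# Assumptions: decimal units, full nominal line rate, no protocol overhead.
BITS_PER_BYTE = 8
SECONDS_PER_DAY = 86_400
PETABYTE = 10**15            # bytes
GIGABIT_ETHERNET = 10**9     # bits per second
DVD_BYTES = 4_700_000_000    # single-layer DVD


def transfer_days(n_bytes: int, link_bps: int) -> float:
    """Days needed to push n_bytes through a link running at link_bps."""
    return n_bytes * BITS_PER_BYTE / link_bps / SECONDS_PER_DAY


def migrations(horizon_years: int, refresh_years: int) -> int:
    """Full migrations needed if storage is replaced every refresh_years."""
    return horizon_years // refresh_years


print(f"1 PB: {transfer_days(PETABYTE, GIGABIT_ETHERNET):.1f} days")      # 1 PB: 92.6 days
print(f"2 PB: {transfer_days(2 * PETABYTE, GIGABIT_ETHERNET):.1f} days")  # 2 PB: 185.2 days
print(f"DVDs for 2 PB: {2 * PETABYTE / DVD_BYTES:,.0f}")                  # DVDs for 2 PB: 425,532
print(f"Migrations in 100 years: {migrations(100, 5)} to {migrations(100, 3)}")  # 20 to 33
```

Real-world throughput is usually below the nominal line rate, so these numbers are optimistic: a single 2-PB movie would tie up a gigabit link for about half a year on every one of those 20 to 33 migrations.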

If this situation sounds ominous, it is. True, the cost per bit of digital storage has dropped dramatically over the last three decades: A gigabyte of storage 30 years ago might have run you a couple hundred thousand dollars; these days, it’s mere pennies. And there’s reason to believe that advances in automation will lower labor costs, while advances in energy efficiency will reduce power bills.

But once you’re on the technology treadmill, it is impossible to get off. For large collections of important or valuable digital data (not just motion pictures but news footage, scientific measurements, governmental records, and our own personal collections), today’s digital storage technologies simply cannot ensure survival for future generations. The uncertain state of digital preservation is such that most major studios continue to archive their movies by transferring them to separate, black-and-white polyester-based film negatives, one each for red, green, and blue. This is the case even for those works that are born digital.

Three years ago, Dominic Case of Australia’s National Film and Sound Archive made the following observation, which still holds true: “Even if we could switch to a low-cost, low-maintenance, secure digital solution tomorrow, we’d still need our film collection to last for 100 years, because it would take that long to transfer everything onto it.”

The good news is that there is a lot of work being done to raise awareness of the risk of digital decay generally and to try to reduce its occurrence. The Library of Congress, active in this area for many years, is leading these efforts through its National Digital Stewardship Alliance. The Data Conservancy, headquartered at Johns Hopkins University’s Sheridan Libraries, is similarly working on solutions. And the National Academies’ Board on Research Data and Information is studying digital curation as a future career opportunity. With these and other groups focused on digital preservation, the movie industry may well come up with some workable strategies—or at least be able to find people who can.

One promising thrust led by the motion-picture academy is the development of format standards for digital-image files, through its Academy Color Encoding System, or ACES. Work on ACES began 10 years ago, at a time when there was no clear standard for interchanging digitally mastered motion-picture elements. The Digital Picture Exchange or DPX file format (also known as the SMPTE 268M-2003 standard) was and still is widely used, but it leaves many technical details up to the end user, which leads to many different interpretations and implementations as well as a proliferation of proprietary and nonstandard formats. These days, the digital images moviemakers create can be recorded using any one of a dozen or more file formats and color-encoding schemes. The result of all that variety is reduced efficiency, increased costs, and degraded image quality, because everyone in the production chain has to play “guess the format.”
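Part of the “guess the format” problem is that identifying a file’s container is the easy part. The sketch below is an illustration, not a production tool: it reads the four-byte magic number at the start of a file to tell DPX (big- or little-endian) from OpenEXR, a container commonly used for ACES images. What it cannot tell you is how the pixel values are color-encoded, which is the kind of detail that DPX leaves up to the end user.

```python
import struct

# Four-byte "magic numbers" found at the start of each file type.
DPX_BIG_ENDIAN = b"SDPX"
DPX_LITTLE_ENDIAN = b"XPDS"
OPENEXR = struct.pack("<I", 20000630)  # b"\x76\x2f\x31\x01"


def sniff_container(header: bytes) -> str:
    """Name the container format from a file's first four bytes.

    This identifies the container only; it says nothing about the
    color encoding or transfer function of the pixel data inside.
    """
    magic = header[:4]
    if magic == DPX_BIG_ENDIAN:
        return "DPX (big-endian)"
    if magic == DPX_LITTLE_ENDIAN:
        return "DPX (little-endian)"
    if magic == OPENEXR:
        return "OpenEXR"
    return "unknown"


def sniff_file(path: str) -> str:
    """Read just enough of a file on disk to identify its container."""
    with open(path, "rb") as f:
        return sniff_container(f.read(4))


print(sniff_container(b"SDPX" + bytes(100)))            # DPX (big-endian)
print(sniff_container(b"\x76\x2f\x31\x01" + bytes(8)))  # OpenEXR
```

Two DPX files that both report “DPX (big-endian)” here may still need entirely different color handling, which is the ambiguity a single, fully specified encoding such as ACES is meant to remove.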

ACES aims to eliminate the ambiguity of today’s file formats. In simple terms, selected raw footage gets converted into the ACES format, which renders it clearly interpretable at any later step in the moviemaking process, including the addition of visual effects, postproduction, and mastering. And it yields a usable archival master in a digital form.

ACES is currently being used in many motion pictures, blockbusters and small films alike. It’s also being adopted in television production, because it produces higher quality images than do HDTV standards. Equipment manufacturers and postproduction and visual-effects facilities are also adopting it and participating in the ongoing refinement of the system. And the Society of Motion Picture and Television Engineers has already published five ACES standards documents, with more on the way.

The academy has also mounted what we call the Digital Motion Picture Archive Framework Project. As part of that effort, we created, in partnership with the Library of Congress, an experimental system for managing a 20-terabyte digital movie, plus 76 TB of related material. Because no commercial products existed for such a task, the project team adapted various pieces of open-source software. We called the resulting package ACeSS, for Academy Case Study System, which we continue to use and learn from. Among the things we discovered from that exercise is the crucial role of metadata in maintaining long-term access to digital materials. That is, the information that gets stored needs to include a description of its contents, its format, what hardware and software were used to create it, how it was encoded, and other detailed “data about the data.” And digital data needs to be managed forever, so the organization and its preservation processes need to be built to last.
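As a rough illustration of “data about the data,” the sketch below bundles the kinds of metadata listed above (contents, format, encoding, creating hardware and software) with a fixity hash into a record that serializes to plain JSON, a text format that should remain readable long after any particular application is gone. The class, field names, and example values are hypothetical; they are not drawn from ACeSS, and a production archive would more likely adopt an established preservation-metadata schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class PreservationRecord:
    """Hypothetical "data about the data" stored alongside one archived file."""
    filename: str
    description: str            # what the file contains
    file_format: str            # container / file format
    encoding: str               # how the image or sound data were encoded
    created_with_hardware: str
    created_with_software: str  # include version numbers
    sha256: str                 # fixity value for later error checking
    related_files: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "PreservationRecord":
        return cls(**json.loads(text))


# All values below are placeholders for illustration.
record = PreservationRecord(
    filename="reel1_000001.exr",
    description="Reel 1, frame 1 of the final graded master (example)",
    file_format="OpenEXR",
    encoding="ACES2065-1 (example value)",
    created_with_hardware="example-camera-model",
    created_with_software="example-grading-tool 1.0",
    sha256="0" * 64,
)
print(record.to_json())
```

Keeping the record in a self-describing text format means a future archivist can recover the metadata even if the software that wrote it no longer runs.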

So what can you do to ensure your own important digital collections don’t disappear? Follow the best practices for data curation: Store multiple copies in multiple locations, regularly check the health of your data, and keep your hardware, software, and file formats up to date. And if you’re an engineer or computer scientist up for a grand challenge, investigate ways to make the problem of digital-information decay itself become obsolete.
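Keeping multiple copies in multiple locations only helps if you compare them. A minimal sketch, assuming you already have a checksum for the same file at each storage location (for example, from a periodic fixity check): take the value that a strict majority of copies agree on and flag the locations that differ so they can be repaired from a good copy. The function and location names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple


def audit_replicas(checksums: Dict[str, str]) -> Tuple[Optional[str], List[str]]:
    """Compare one file's checksum across storage locations.

    Returns the checksum held by a strict majority of copies (or None if
    there is no majority) and the sorted list of locations that disagree.
    """
    if not checksums:
        raise ValueError("no replicas to audit")
    value, votes = Counter(checksums.values()).most_common(1)[0]
    if votes * 2 <= len(checksums):
        # No strict majority: treat every copy as suspect until checked by hand.
        return None, sorted(checksums)
    bad = sorted(loc for loc, c in checksums.items() if c != value)
    return value, bad


print(audit_replicas({"vault-a": "9f2c", "vault-b": "9f2c", "offsite-c": "11e0"}))
# ('9f2c', ['offsite-c'])
```

With only two copies a disagreement cannot be resolved by vote, which is one practical reason to keep at least three.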

This article originally appeared in print as “How Do You Store a Digital Movie for 100 Years?”

About the Author

Andy Maltz is managing director of the Science and Technology Council of the Academy of Motion Picture Arts and Sciences, in Beverly Hills, Calif. “It seems only fitting that as a technologist who has contributed to the transition from film to digital moviemaking, I now work on some of the problems that I helped create,” he says.