Will the NSA Finally Build Its Superconducting Spy Computer?

The U.S. government eyes cryogenically cooled circuitry for tomorrow’s exascale computers

Today, silicon microchips underlie every aspect of digital computing. But their dominance was never a foregone conclusion. Throughout the 1950s, electrical engineers and other researchers explored many alternative approaches to building digital computers.

One of them seized the imagination of the U.S. National Security Agency (NSA): a superconducting supercomputer. Such a machine would take advantage of superconducting materials that, when chilled to nearly the temperature of deep space—just a few degrees above absolute zero—exhibit no electrical resistance whatsoever. This extraordinary property held the promise of computers that could crunch numbers and crack codes faster than transistor-based systems while consuming far less power.

For six decades, from the mid-1950s to the present, the NSA has repeatedly pursued this dream, in partnership with industrial and academic researchers. Time and again, the agency sponsored significant projects to build a superconducting computer. Each time, the effort was abandoned in the face of the unrelenting pace of Moore’s Law and the astonishing increase in performance and decrease in cost of silicon microchips.

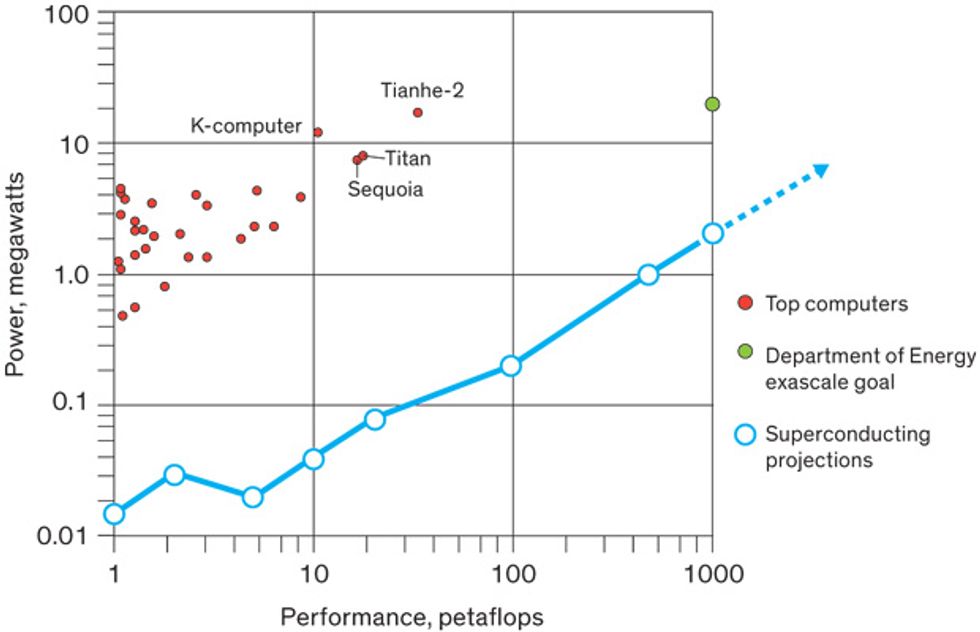

Now Moore’s Law is stuttering, and the world’s supercomputer builders are confronting an energy crisis. Nuclear weapon simulators, cryptographers, and others want exascale supercomputers, capable of 1,000 petaflops—1 million trillion floating-point operations per second—or greater. The world’s fastest known supercomputer today, China’s 34-petaflop Tianhe-2, consumes some 18 megawatts of power. That’s roughly the amount of electricity drawn instantaneously by 14,000 average U.S. households. Projections vary depending on the type of computer architecture used, but an exascale machine built with today’s best silicon microchips could require hundreds of megawatts.
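The scale of that energy problem follows from simple proportional arithmetic. The Python sketch below uses only the figures quoted above (34 petaflops, 18 megawatts, 14,000 households) and assumes, purely for illustration, that power grows linearly with performance. Real projections depend on the architecture, and the function and constant names here are hypothetical.

```python
# Back-of-the-envelope scaling using the figures quoted in the text.
# Assumption (not from the article): power scales linearly with performance.

TIANHE2_PETAFLOPS = 34.0      # quoted performance
TIANHE2_MEGAWATTS = 18.0      # quoted power draw
HOUSEHOLDS = 14_000           # quoted household equivalent
EXASCALE_PETAFLOPS = 1_000.0  # 1 exaflop = 1,000 petaflops


def linear_power_estimate(target_petaflops: float) -> float:
    """Return megawatts needed at Tianhe-2's performance per watt."""
    megawatts_per_petaflop = TIANHE2_MEGAWATTS / TIANHE2_PETAFLOPS
    return target_petaflops * megawatts_per_petaflop


def kilowatts_per_household() -> float:
    """Average household draw implied by the article's comparison."""
    return TIANHE2_MEGAWATTS * 1_000.0 / HOUSEHOLDS


if __name__ == "__main__":
    print(f"Implied household draw: {kilowatts_per_household():.2f} kW")
    print(f"Naive exascale power: {linear_power_estimate(EXASCALE_PETAFLOPS):.0f} MW")
```

Under the linear assumption the estimate lands at roughly 530 megawatts, which is consistent with the "hundreds of megawatts" range cited for today's silicon.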

The exascale push may be superconducting computing’s opening. And the Intelligence Advanced Research Projects Activity, the U.S. intelligence community’s arm for high-risk R&D, is making the most of it. With new forms of superconducting logic and memory in development, IARPA has launched an ambitious program to create the fundamental building blocks of a superconducting supercomputer. In the next few years, the effort could finally show whether the technology really can beat silicon when given the chance.

The NSA’s dream of superconducting supercomputers was first inspired by the electrical engineer Dudley Buck. Buck worked for the agency’s immediate predecessor on an early digital computer. When he moved to MIT in 1950, he remained a military consultant, keeping the Armed Forces Security Agency, which quickly became the NSA, abreast of new computing developments in Cambridge.

Buck soon reported on his own work—a novel superconducting switch he named the cryotron. The device works by switching a material between its superconducting state—where electrons couple up and flow as a “supercurrent,” with no resistance at all—and its normal state, where electrons flow with some resistance. A number of superconducting metallic elements and alloys reach that state when they are cooled below a critical temperature near absolute zero. Once the material becomes superconducting, a sufficiently strong magnetic field can drive the material back to its normal state.

In this, Buck saw a digital switch. He coiled a tiny “control” wire around a “gate” wire, and plunged the pair into liquid helium. When current ran through the control, the magnetic field it created pushed the superconducting gate into its normal resistive state. When the control current was turned off, the gate became superconducting again.

Buck thought miniature cryotrons could be used to fashion powerful, fast, and energy-efficient digital computers. The NSA funded work by him and engineer Albert Slade on cryotron memory circuits at the firm A.D. Little, as well as a broader project on digital cryotron circuitry at IBM. GE, RCA, and others quickly launched their own cryotron efforts.

Engineers continued developing cryotron circuits into the early 1960s, despite Buck’s sudden and premature death in 1959. But liquid-helium temperatures made cryotrons challenging to work with, and the time required for materials to transition from a superconducting to a resistive state limited switching speeds. The NSA eventually pulled back on funding, and many researchers abandoned superconducting electronics for silicon.

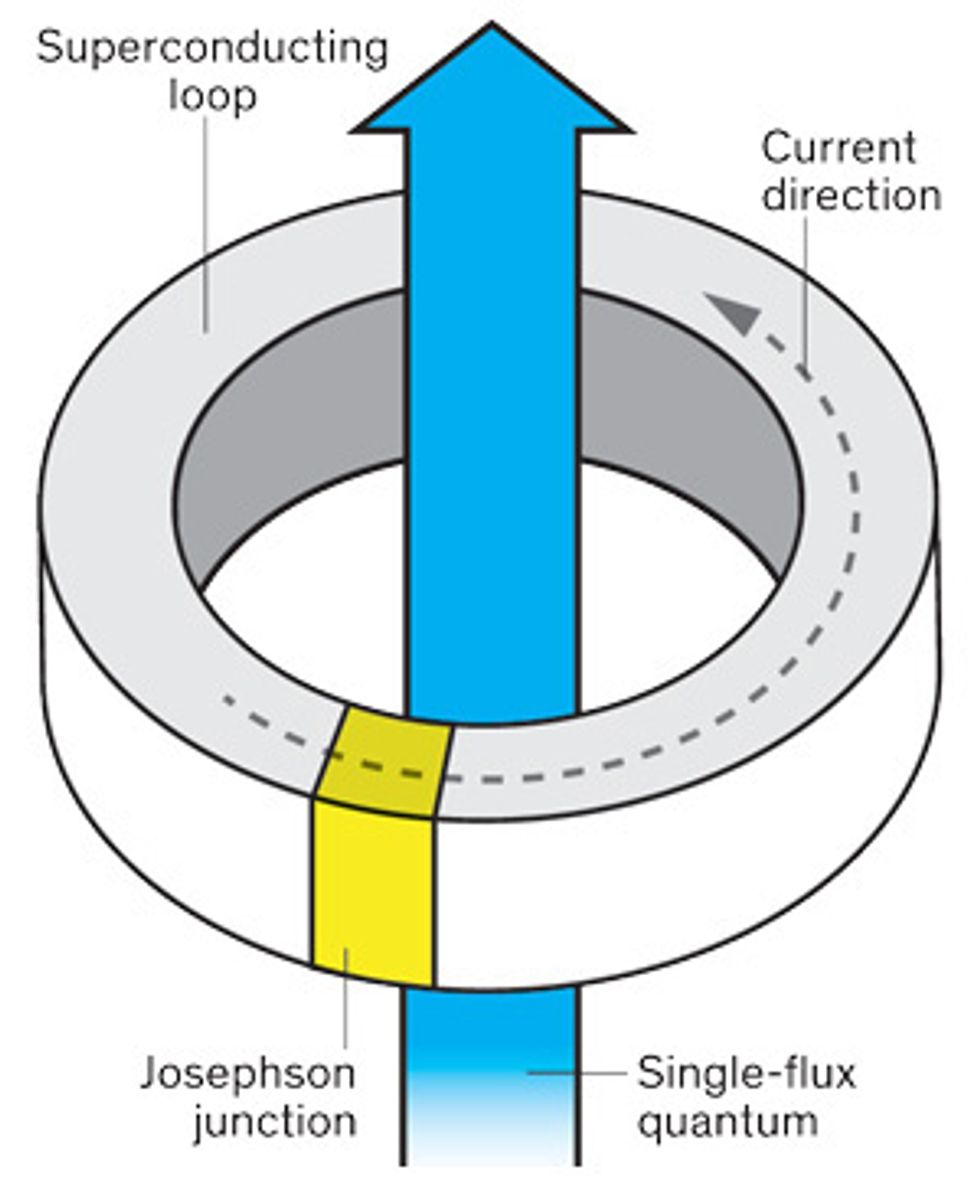

Even as these efforts faded, a big change was under way. In 1962 British physicist Brian Josephson made a provocative prediction about quantum tunneling in superconductors. In typical quantum-mechanical tunneling, electrons sneak across an insulating barrier, assisted by a voltage push; the electrons’ progress occurs with some resistance. But Josephson predicted that if the insulating barrier between two superconductors is thin enough, a supercurrent of paired electrons could flow across with zero resistance, as if the barrier were not there at all. This became known as the Josephson effect, and a switch based on the effect, the Josephson junction, soon followed.

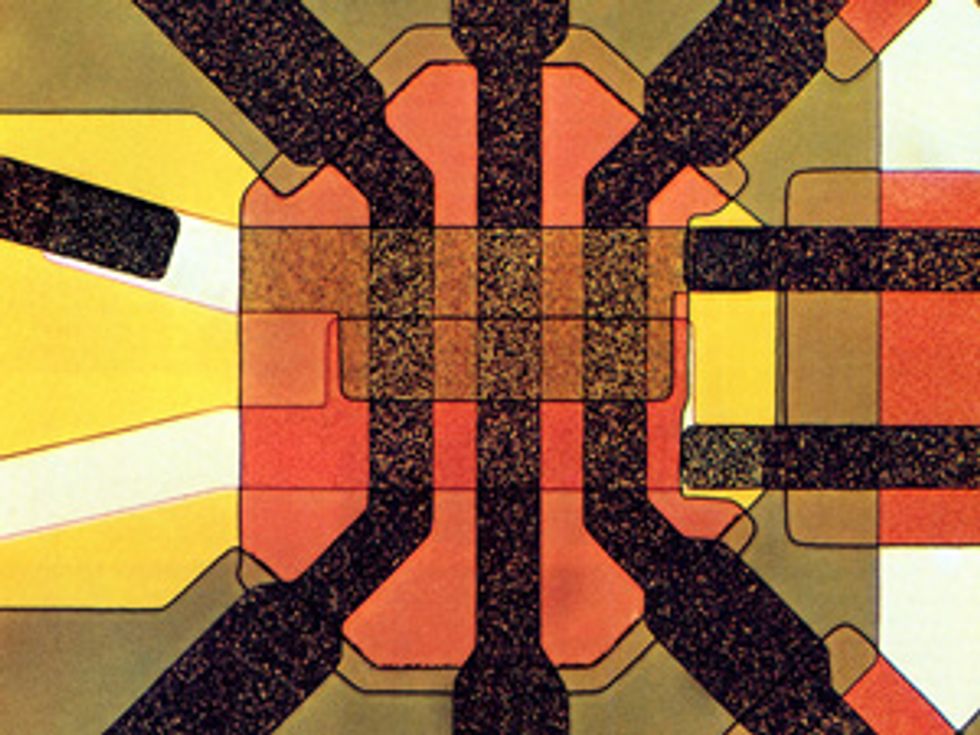

IBM researchers developed a version of this switch in the mid-1960s. The active part of the device was a line of superconducting metal, interrupted by a thin oxide barrier cutting across it. A supercurrent would freely tunnel across the barrier, but only up to a point; if the current rose above a certain threshold, the device would saturate and unpaired electrons would trickle across the junction with some resistance. The threshold could be tuned by a magnetic field, created by running current through a nearby superconducting control line. If the device operated close to the threshold current, a small current in the control could shift the threshold and switch the gate out of its supercurrent-tunneling state. Unlike in Buck’s cryotron, the materials in this device always remained superconducting, making it a much faster electronic switch.
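The gate behavior described above can be sketched as a toy model in Python. It assumes the textbook idealization of a small, uniform junction, whose threshold current falls off with applied magnetic flux as |sin(πx)/(πx)|, where x is the flux in units of the flux quantum. It also assumes a hypothetical linear coupling between control current and flux. Neither the function names nor the numerical values come from the article or from IBM's actual devices.

```python
import math

# Toy model of a magnetically controlled Josephson gate.
# Assumptions (illustrative, not from the article):
#   * ideal uniform junction: threshold follows |sin(pi*x)/(pi*x)|
#   * control current couples linearly into flux (FLUX_PER_MILLIAMP)

ZERO_FIELD_THRESHOLD_MA = 1.0   # hypothetical threshold current, mA
FLUX_PER_MILLIAMP = 0.5         # hypothetical coupling, flux quanta per mA


def threshold_current(control_ma: float) -> float:
    """Threshold supercurrent (mA) for a given control-line current."""
    x = FLUX_PER_MILLIAMP * control_ma  # flux in units of the flux quantum
    if x == 0.0:
        return ZERO_FIELD_THRESHOLD_MA
    return ZERO_FIELD_THRESHOLD_MA * abs(math.sin(math.pi * x) / (math.pi * x))


def gate_state(gate_ma: float, control_ma: float) -> str:
    """Return 'supercurrent' below threshold, 'resistive' above it."""
    if abs(gate_ma) <= threshold_current(control_ma):
        return "supercurrent"
    return "resistive"


if __name__ == "__main__":
    # Bias the gate just below its zero-field threshold, then pulse the control.
    print(gate_state(gate_ma=0.9, control_ma=0.0))
    print(gate_state(gate_ma=0.9, control_ma=1.0))
```

With the gate biased at 0.9 mA, the assumed 1 mA control pulse lowers the threshold to about 0.64 mA, so the gate leaves its supercurrent state even though no material stops being superconducting.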

As explored by historian Cyrus Mody, by 1973 IBM was working on building a superconducting supercomputer based on Josephson junctions. The basic building block of its circuits was a superconducting loop with Josephson junctions in it, known as a superconducting quantum interference device, or SQUID. The NSA covered a substantial fraction of the costs, and IBM expected the agency to be its first superconducting-supercomputer customer, with other government and industry buyers to follow.

IBM’s superconducting supercomputer program ran for more than 10 years, at a cost of about US $250 million in today’s dollars. It mainly pursued Josephson junctions made from lead alloy and lead oxide. Late in the project, engineers switched to a niobium oxide barrier, sandwiched between a lead alloy and a niobium film, an arrangement that produced more-reliable devices. But while the project made great strides, company executives were not convinced that an eventual supercomputer based on the technology could compete with the ones expected to emerge with advanced silicon microchips. In 1983, IBM shut down the program without ever finishing a Josephson-junction-based computer, super or otherwise.

Japan persisted where IBM had not. Inspired by IBM’s project, Japan’s industrial ministry, MITI, launched a superconducting computer effort in 1981. The research partnership, which included Fujitsu, Hitachi, and NEC, lasted for eight years and produced an actual working Josephson-junction computer—the ETL-JC1. It was a tiny, 4-bit machine, with just 1,000 bits of RAM, but it could actually run a program. In the end, however, MITI came to share IBM’s opinion about the prospect of scaling up the technology, and the project was abandoned.

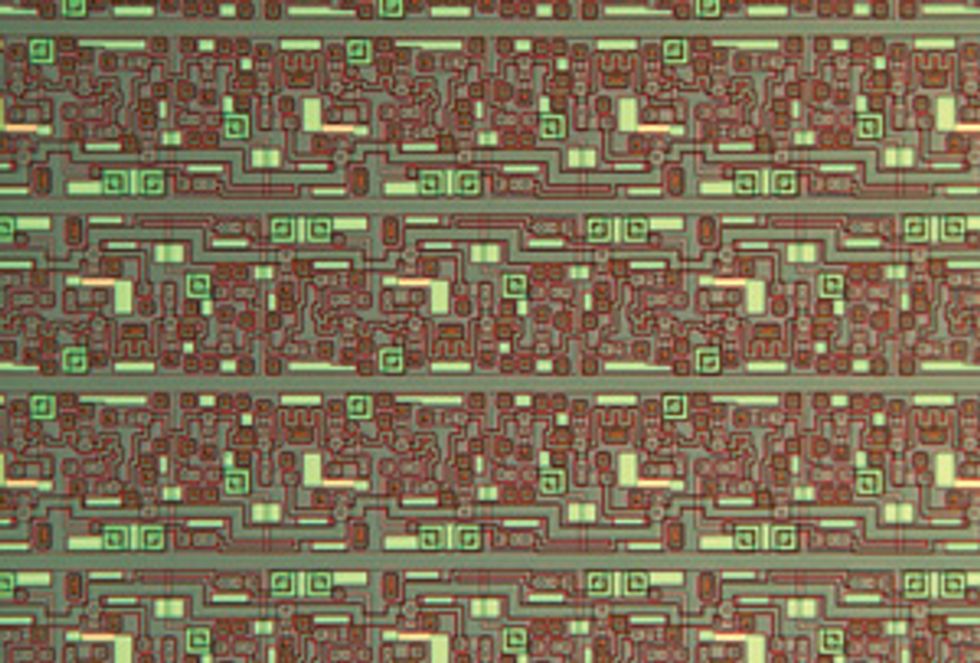

Critical new developments emerged outside these larger superconducting-computer programs. In 1983, Bell Telephone Laboratories researchers formed Josephson junctions out of niobium separated by thin aluminum oxide layers. The new superconducting switches were extraordinarily reliable and could be fabricated using a simplified patterning process, in much the same way that silicon microchips were.

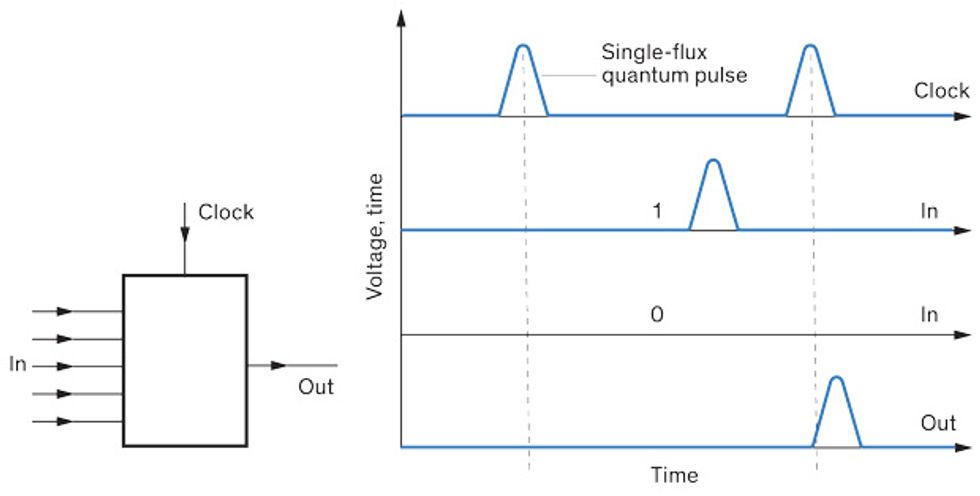

Then in 1985, researchers at Moscow State University proposed a new kind of digital superconducting logic. Originally dubbed resistive, then renamed “rapid” single-flux-quantum logic, or RSFQ, it took advantage of the fact that a Josephson junction in a loop of superconducting material can emit minuscule voltage pulses. Integrated over time, each pulse takes on only a quantized, integer multiple of a tiny value called the flux quantum, which is about 2 millivolt-picoseconds.

By using such ephemeral voltage pulses, each lasting a picosecond or so, RSFQ promised to boost clock speeds to greater than 100 gigahertz. What’s more, a Josephson junction in such a configuration would expend energy in the range of just a millionth of a picojoule, considerably less than consumed by today’s silicon transistors.
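For a sense of scale, the flux quantum is the Planck constant divided by twice the electron charge, Φ0 = h/2e. The Python sketch below computes it from the exact SI values of those constants and then estimates a pulse height and a switching energy. The 1-picosecond rectangular pulse and the 100-microampere junction current are illustrative assumptions, not figures from the article, and estimating the energy as current times flux quantum is a common rule of thumb rather than a circuit simulation.

```python
# Flux quantum and rough single-flux-quantum pulse estimates.
PLANCK_H = 6.62607015e-34            # J*s, exact SI value
ELEMENTARY_CHARGE = 1.602176634e-19  # C, exact SI value

FLUX_QUANTUM = PLANCK_H / (2 * ELEMENTARY_CHARGE)  # V*s (webers)


def pulse_height_volts(duration_s: float) -> float:
    """Height of a rectangular pulse whose area is one flux quantum."""
    return FLUX_QUANTUM / duration_s


def switching_energy_joules(junction_current_a: float) -> float:
    """Rule-of-thumb energy per switching event: current times flux quantum."""
    return junction_current_a * FLUX_QUANTUM


if __name__ == "__main__":
    # 1 V*s = 1e15 mV*ps, so convert for readability.
    print(f"Flux quantum: {FLUX_QUANTUM * 1e15:.3f} mV*ps")
    # Assumed values: 1 ps pulse, 100 microampere junction current.
    print(f"Pulse height: {pulse_height_volts(1e-12) * 1e3:.2f} mV")
    print(f"Energy: {switching_energy_joules(100e-6) / 1e-12:.1e} pJ")
```

With these assumed values, a picosecond pulse is about 2 millivolts high and the switching energy is a few ten-millionths of a picojoule, within an order of magnitude of the figure quoted above.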

Together, Bell Labs’ Josephson junctions and Moscow State University’s RSFQ rekindled interest in superconducting electronics. By 1997, the U.S. had launched the Hybrid Technology Multi-Threaded (HTMT) project, which was supported by the National Science Foundation, the NSA, and other agencies. HTMT’s goal was to beat conventional silicon to petaflop-level supercomputing, using RSFQ integrated circuits among other technologies.

It was an ambitious program that faced a number of challenges. The RSFQ circuits themselves limited potential computing efficiency. To achieve tremendous speed, RSFQ used resistors to provide electrical biases to the Josephson junctions in order to keep them close to the switching threshold. In experimental RSFQ circuitry with several thousand biased Josephson junctions, the static power dissipation was negligible. But in a petaflop-scale supercomputer, with possibly many billions of such devices, it would have added up to significant power consumption.

The HTMT project ended in 2000. Eight years later, a conventional silicon supercomputer—IBM’s Roadrunner—was touted as the first to reach petaflop operation. It contained nearly 20,000 silicon microprocessors and consumed 2.3 megawatts.

For many researchers working on superconducting electronics, the period around 2000 marked a shift to an entirely different direction: quantum computing. This new direction was inspired by the 1994 work of mathematician Peter Shor, then at Bell Labs, which suggested that a quantum computer could be a powerful cryptanalytical tool, able to rapidly decipher encrypted communications. Soon, projects in superconducting quantum computing and superconducting digital circuitry were being sponsored by the NSA and the U.S. Defense Advanced Research Projects Agency. They were later joined by IARPA, which was created in 2006 by the Office of the Director of National Intelligence to sponsor intelligence-related R&D programs, collaborating across a community that includes the NSA, the Central Intelligence Agency, and the National Geospatial-Intelligence Agency.

Nobody knew how to build a quantum computer, of course, but lots of people had ideas. At IBM and elsewhere, engineers and scientists turned to the mainstays of superconducting electronics—SQUIDs and Josephson junctions—to craft the building blocks. A SQUID exhibits quantum effects under normal operation, and it was fairly straightforward to configure it to operate as a quantum bit, or qubit.

One of the centers of this work was the NSA’s Laboratory for Physical Sciences. Built near the University of Maryland, College Park—“outside the fence” of NSA headquarters in Fort Meade—the laboratory is a space where the NSA and outside researchers can collaborate on work relevant to the agency’s insatiable thirst for computing power.

In the early 2010s, Marc Manheimer was head of quantum computing at the laboratory. As he recently recalled in an interview, he saw an acute need for conventional digital circuits that could physically surround quantum bits in order to control them and correct errors on very short timescales. The easiest way to do this, he thought, would be with superconducting computer elements, which could operate with voltage and current levels that were similar to those of the qubit circuitry they would be controlling. Optical links could be used to connect this cooled-down, hybrid system to the outside world—and to conventional silicon computers.

At the same time, Manheimer says, he became aware of the growing power problem in high-performance silicon computing, for supercomputers as well as the large banks of servers in commercial data centers. “The closer I looked at superconducting logic,” he says, “the more that it became clear that it had value for supercomputing in its own right.”

Manheimer proposed a new direct attack on the superconducting supercomputer. Initially, he encountered skepticism. “There’s this history of failure,” he says. Past pursuers of superconducting supercomputers “had gotten burned…so people were very cautious.” But by early 2013, he says, he had convinced IARPA to fund a multisite industrial and academic R&D program, dubbed the Cryogenic Computing Complexity (C3) program. He moved to IARPA to lead it.

The first phase of C3—its budget is not public—calls for the creation and evaluation of superconducting logic circuits and memory systems. These will be fabricated at MIT Lincoln Laboratory—the same lab where Dudley Buck once worked.

Manheimer says one thing that helped sell his C3 idea was recent progress in the field, which is reflected in IARPA’s selection of “performers,” publicly disclosed in December 2014.

One of those teams is led by the defense giant Northrop Grumman Corp. The company participated in the late 1990s HTMT project, which employed fairly power-hungry RSFQ logic. In 2011, Northrop Grumman’s Quentin Herr reported an exciting alternative, a different form of single-flux-quantum logic called reciprocal quantum logic. RQL replaces RSFQ’s DC resistors with AC inductors, which bias the circuit without constantly drawing power. An RQL circuit, says Northrop Grumman team leader Marc Sherwin, consumes 1/100,000 the power of the best equivalent CMOS circuit and far less power than the equivalent RSFQ circuit.

A similarly energy-efficient logic called ERSFQ has been developed by superconducting electronics manufacturer Hypres, whose CTO, Oleg Mukhanov, is the coinventor of RSFQ. Hypres is working with IBM, which continued its fundamental superconducting device work even after canceling its Josephson-junction supercomputer project and was also chosen to work on logic for the program.

Hypres is also collaborating with a C3 team led by a Raytheon BBN Technologies laboratory that has been active in quantum computing research for several years. There, physicist Thomas Ohki and colleagues have been working on a cryogenic memory system that uses low-power superconducting logic to control, read, and write to high-density, low-power magnetoresistive RAM. This sort of memory is another change for superconducting computing. RSFQ memory cells were fairly large. Today’s more compact nanomagnetic memories, originally developed to help extend Moore’s Law, can also work well at low temperatures.

The world’s most advanced superconducting circuitry uses devices based on niobium. Although such devices operate at temperatures of about 4 kelvins, or 4 degrees above absolute zero, Manheimer says supplying the refrigeration is now a trivial matter. That’s thanks in large part to the multibillion-dollar industry based on magnetic resonance imaging machines, which rely on superconducting electromagnets and high-quality cryogenic refrigerators.

One big question has been how much the energy needed for cooling will increase a superconducting computer’s energy budget. But advocates suggest it might not be much. The power drawn by commercial cryocoolers leaves “considerable room for improvement,” Elie Track and Alan Kadin of the IEEE’s Rebooting Computing initiative recently wrote. Even so, they say, “the power dissipated in a superconducting computer is so small that it remains 100 times more efficient than a comparable silicon computer, even after taking into account the present inefficient cryocooler.”

For now, C3’s focus is on the fundamental components. This first phase, which will run through 2017, aims to demonstrate core components of a computer system: a set of key 64-bit logic circuits capable of running at a 10-GHz clock rate and cryogenic memory arrays with capacities up to about 250 megabytes. If this effort is successful, a second, two-year phase will integrate these components into a working cryogenic computer of as-yet-unspecified size. If that prototype is deemed promising, Manheimer estimates it should be possible to create a true superconducting supercomputer in another 5 to 10 years.

Such a system would be much smaller than CMOS-based supercomputers and require far less power. Manheimer projects that a superconducting supercomputer produced in a follow-up to C3 could run at 100 petaflops and consume 200 kilowatts, including the cryocooling. It would be 1/20 the size of Titan, currently the fastest supercomputer in the United States, but deliver more than five times the performance for 1/40 of the power.
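Those ratios imply a large gap in energy efficiency. The Python sketch below works only from numbers given in this article (100 petaflops, 200 kilowatts, five times the performance, 1/40 of the power, and Tianhe-2's 34 petaflops at 18 megawatts). Titan's own specifications are not assumed, only bounded from the stated ratios, and the function and constant names are hypothetical.

```python
# Energy-efficiency comparison using only figures quoted in the article.

def gigaflops_per_watt(petaflops: float, kilowatts: float) -> float:
    """Convert a performance/power pair to gigaflops per watt."""
    flops = petaflops * 1e15
    watts = kilowatts * 1e3
    return flops / watts / 1e9


PROJECTED = gigaflops_per_watt(petaflops=100.0, kilowatts=200.0)
TIANHE2 = gigaflops_per_watt(petaflops=34.0, kilowatts=18_000.0)

# "More than five times the performance for 1/40 of the power" bounds Titan:
TITAN_MAX_PETAFLOPS = 100.0 / 5      # upper bound on performance
TITAN_POWER_KILOWATTS = 200.0 * 40   # implied power draw
MIN_GAIN_OVER_TITAN = 5 * 40         # lower bound on efficiency ratio

if __name__ == "__main__":
    print(f"Projected machine: {PROJECTED:.0f} gigaflops per watt")
    print(f"Tianhe-2: {TIANHE2:.2f} gigaflops per watt")
    print(f"Titan (implied): under {TITAN_MAX_PETAFLOPS:.0f} petaflops "
          f"at about {TITAN_POWER_KILOWATTS / 1e3:.0f} MW")
    print(f"Efficiency gain over Titan: more than {MIN_GAIN_OVER_TITAN}x")
```

On these figures the projected machine would deliver about 500 gigaflops per watt, compared with just under 2 for Tianhe-2, and more than 200 times the energy efficiency of Titan.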

A supercomputer with those capabilities would obviously represent a big jump. But as before, the fate of superconducting supercomputing strongly depends on what happens with silicon. While an exascale computer made from today’s silicon chips may not be practical, great effort and billions of dollars are now being expended on continuing to shrink silicon transistors as well as on developing on-chip optical links and 3-D stacking. Such technologies could make a big difference, says Thomas Theis, who directs nanoelectronics research at the nonprofit Semiconductor Research Corp. In July 2015, President Barack Obama announced the National Strategic Computing Initiative and called for the creation of an exascale supercomputer. IARPA’s work on alternatives to silicon is part of this initiative, but so is conventional silicon. The mid-2020s has been targeted for the first silicon-based exascale machine. If that goal is met, the arrival of a superconducting supercomputer would likely be pushed out still further.

But it’s too early to count out superconducting computing just yet. Compared with the massive, continuous investment in silicon over the decades, superconducting computing has had meager and intermittent support. Yet even with this subsistence diet, physicists and engineers have produced an impressive string of advances. The support of the C3 program, along with the wider attention of the computing community, could push the technology forward significantly. If all goes well, superconducting computers might finally come in from the cold.

This article appears in the March 2016 print issue as “The NSA’s Frozen Dream.”

About the Author

A historian of science and technology, David C. Brock recently became director of the Center for Software History at the Computer History Museum. A few years back, while looking into the history of microcircuitry, he stumbled across the work of Dudley Buck, a pioneer of speedy cryogenic logic. He wrote about Buck in our April 2014 issue. Here he explores what happened after Buck, including a new effort to build a superconducting computer. This time, he says, the draw is energy efficiency, not performance.