A few years ago, I met one of the previous versions of the REEM robot. REEM was taller and broader than me, and its big, black eyes tracked me as I moved. When I shifted, its stare followed. It watched me with target-locked precision, like a laser into my soul.

I've been working with robots for over eight years now, and REEM was certainly beautifully designed. But something was bugging me about its behavior, and now researchers know what it was.

According to Sean Andrist from the University of Wisconsin–Madison, robots need to look away sometimes. Eye contact provides a basis for human social communication (which is why we seek to implement it in robots), but there's a sweet spot for the amount of time we spend gazing into each other's eyes.

In a conversation, humans don't look at each other 100 percent of the time, says Andrist. When listening, we look at the speaker around 70 percent of the time. On the other hand, speakers only look directly at the other person around 40 percent of the time when talking.

Psychology literature gives at least three reasons why we avert our gaze, Andrist noted in his talk at the 2014 ACM/IEEE Human-Robot Interaction Conference last month. First, we might look away to show that we're thinking, usually by looking upwards. Second, humans display something called intimacy regulation: we look to the side to avoid the negative connotations that come with staring at someone. Finally, there's floor management. We "hold the floor" by looking away during a pause in our speech. In other words, we look away when we want to keep talking but need to take a breath.

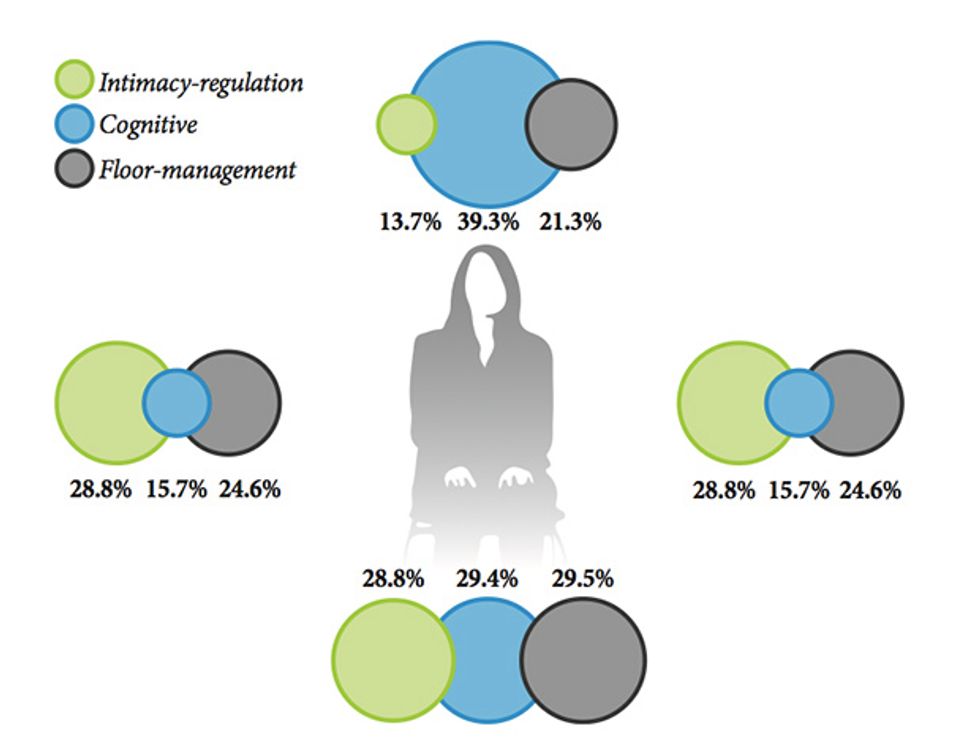

To study these behaviors in detail, Andrist took statistics of gaze aversions between pairs of conversing people:

Combined data from multiple interaction experiments are shown in the diagram: each circle shows why a person's gaze is directed there. For example, the blue “cognitive” circle above the head shows that when we look up, 39.3 percent of the time it’s because we’re thinking.

Andrist used these statistics to build an automatic gaze-shifting program on a NAO robot. NAO used a Kinect to track the faces of the people it spoke with, and occasionally averted its gaze with the same probabilities as humans. For example, it regulated intimacy using small left-right head motions. Andrist used a Kalman filter to blend idle movements (random head motions) with gaze aversions, producing a smooth, lifelike gaze.
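To make the idea concrete, here is a minimal Python sketch of a gaze scheduler, not Andrist's actual code. It uses only the two figures quoted above (listeners look at the speaker about 70 percent of the time, speakers about 40 percent) to decide whether to look at the partner or away, then smooths the commanded head angle with a simple one-dimensional Kalman filter. The names `choose_gaze_target`, `ScalarKalman`, and `simulate`, the 15-degree aversion angle, and the noise settings are all assumptions made for illustration. A real system would also sample how long each aversion lasts rather than deciding independently at every step.

```python
import random

# Share of time spent looking at the partner, taken from the figures quoted
# in the article: ~70% when listening, ~40% when speaking.
MUTUAL_GAZE_FRACTION = {"listening": 0.70, "speaking": 0.40}


def choose_gaze_target(role, rng):
    """Return 'partner' or 'away' for one decision step."""
    return "partner" if rng.random() < MUTUAL_GAZE_FRACTION[role] else "away"


class ScalarKalman:
    """One-dimensional Kalman filter with a constant-position model.

    The noise variances are illustrative assumptions, not values from the study.
    """

    def __init__(self, x0=0.0, p0=1.0, process_var=0.01, meas_var=1.0):
        self.x = x0           # current estimate (head yaw in degrees)
        self.p = p0           # variance of the estimate
        self.q = process_var  # process noise variance
        self.r = meas_var     # measurement noise variance

    def update(self, z):
        self.p += self.q                 # predict: uncertainty grows
        gain = self.p / (self.p + self.r)
        self.x += gain * (z - self.x)    # correct toward the commanded angle
        self.p *= 1.0 - gain
        return self.x


def simulate(role, steps, seed=0, away_yaw_deg=15.0):
    """Return (fraction of steps looking at partner, smoothed yaw per step).

    away_yaw_deg is a hypothetical sideways aversion angle.
    """
    rng = random.Random(seed)
    smoother = ScalarKalman()
    looks_at_partner = 0
    yaws = []
    for _ in range(steps):
        target = choose_gaze_target(role, rng)
        looks_at_partner += target == "partner"
        commanded = 0.0 if target == "partner" else away_yaw_deg
        yaws.append(smoother.update(commanded))
    return looks_at_partner / steps, yaws
```

Calling `simulate("speaking", 1000)` returns the share of steps spent looking at the partner, which should land near 0.40, along with a yaw trajectory that drifts between 0 and 15 degrees instead of snapping between them.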

Here’s an example of the robot using his system:

His results showed that users found NAO to be more intentional, thoughtful, and creative when it used gaze aversions. In addition, it was better at holding the floor when speaking, as shown by the minimal number of interruptions when it paused between statements.

And what happens if a robot averts its gaze too much? Andrist also tested a "bad-timing" condition:

Inappropriate or excess gaze aversion is linked to emotions like embarrassment or disgust, so completely avoiding gaze definitely isn't the way to go.

One surprising finding came when Andrist compared the number of words users said to NAO in response to a personal question. Users didn't appear to be any more comfortable with a gaze-averting robot than with a static-gaze robot (that is, they didn't say more words to it). Andrist says that this is surprising, because his previous experiments using human-like virtual characters showed a significant increase in comfort when gaze aversion was included.

A possible explanation is that the NAO is not by default very intimidating when it looks at you, Andrist suggests. The more lifelike a robot, the more important gaze aversion may be.

Sean Andrist's NAO code for the gaze aversion project is available freely here.

The study “Conversational Gaze Aversion for Humanlike Robots” by Sean Andrist, Xiang Zhi Tan, Michael Gleicher, and Bilge Mutlu of the Department of Computer Sciences, University of Wisconsin–Madison, was presented at the Human-Robot Interaction Conference on March 4, 2014.