What 5G Engineers Can Learn from Radio Interference’s Troubled Past

Radio interference is an old problem, but 5G and other forms of digital radio may tackle it in new ways

The last—and only—radio innovator having no reason to think about interference was Heinrich Hertz, when he fired up the world’s first radio transmitter in 1886. Once he turned on a second one, he created the potential for interference. It’s been a problem ever since.

Indeed, the problem is now acute and could easily get much worse. That’s because 5G mobile data service is on the way, promising as much as gigabit-per-second data connections over short distances. In advance of the 5G rollout, which should begin around 2020, engineers are working through all the usual concerns, including frequency choices, propagation, reliability, and battery life, plus one more: keeping transmissions from millions of very small, very mobile radios from interfering with one another. If these engineers don’t solve this problem, digital services on your phone won’t be much better than they are now.

Over the decades, regulators and engineers have managed to keep radio interference in check using a number of techniques. Here, I will look at some of those past and present methods and also some that engineers may increasingly explore in the future.

The radio interference problem became obvious with early radio’s first killer app: telegraphy at sea. The spark-gap transmitters used at the start of the 20th century occupied the entire spectrum that receivers could hear, effectively putting all users onto one shared channel. A ship’s radioman would simply listen for a break in the traffic and then transmit, much as amateur and citizens-band operators are supposed to do today. That procedure limited interference, but it had shortcomings. On the night of 14 April 1912, the RMS Titanic was so busy handling passengers’ ship-to-shore messages that it rebuffed an attempted transmission from the nearby Californian trying to warn it of icebergs in the vicinity.

Development of the resonant tank circuit early in the last century enabled a transmitter and receiver to communicate on just one specific frequency. This allowed many stations to share the spectrum, each one working at a different spot on the radio dial.

At around the same time, startled Morse-code operators began hearing voices and music among the dots and dashes, heralding the invention of amplitude modulation. Land-based AM radio spread quickly during the 1920s. But success brought problems. Those early radio-broadcasting entrepreneurs used any frequency they wanted, anywhere they wanted, often interfering with other stations on the same or adjacent frequencies. In some cities, no one could reliably be heard.

Several countries soon solved the problem in the same way: with a map and a drawing compass. Governments made it illegal to transmit without a license—and made sure that stations licensed for the same frequency were far enough apart to avoid interference. Because signals in the AM band travel farther at night, less fortunate stations had to cease transmitting at sundown. Some countries also used the licensing process to suppress antigovernment voices and for other nontechnical purposes.

Radio waves ignore national boundaries, of course. Countries quickly found they had to cooperate with their neighbors in assigning frequencies. The International Telegraph Union, founded in 1865 to facilitate cross-border telegraphy, updated its name in 1932 to the International Telecommunication Union (ITU) and became a forum for countries to negotiate on radio interference. Now an agency of the United Nations, the ITU still does that job today. States that have been implacable enemies for generations, whose leaders refuse to speak the other country’s name, nonetheless sit down together and peaceably work out international frequency usages.

The national licensing schemes set up for AM easily incorporated FM and television as they appeared. When radio-frequency transistors led to inexpensive two-way radios in the 1970s, the model required only a small change: The license covered the system’s base station plus all of its mobile transmitters within a specified area. Cellphone licensing still works basically the same way.

But today we live in a very different world, surrounded by countless low-power radio transmitters like the one in your laptop or key fob, which send digital data and operate without anyone having had to take out a license for them. The future will bring many more digital wireless devices. So avoiding radio interference has taken on greater urgency than ever before.

Despite a federal statute requiring that all transmitters be licensed, the U.S. Federal Communications Commission (FCC) decided early on that this law applies only to transmitters whose power is high enough to threaten others with interference. Much of the rest of the world came to agree: A sufficiently low-powered transmitter can operate legally without a license.

Until the 1980s, unlicensed devices proliferated slowly: garage-door openers, analog cordless phones, and a handful of other primitive, flea-powered gadgets. The conservative power limit for this kind of equipment—a small fraction of a watt—protected licensed users against interference and also let a lot of people use unlicensed devices at the same time without interfering with one another.

That was all about to change. One reason was the proliferation of digital signal processors and other microcircuits, which sharply reduced the cost of radios. Another was an FCC engineer named Michael Marcus, who persuaded his bosses to try an experiment—one that was to succeed beyond anyone’s wildest fantasies. Marcus wanted to authorize unlicensed transmitters for data communications at power levels high enough for transmission over tens or even hundreds of meters. When the FCC sought public comment, the response was unanimous from existing spectrum users: Not in my frequency band!

The FCC went ahead anyway in 1985, but it relegated Marcus’s concept to three undesirable “junk bands,” which had been set aside for noncommunications radio-emitting gear (like microwave ovens) as well as amateur radio and a few other purposes. The commission set the power limit at 1 watt, a then unheard-of level for unlicensed devices, high enough to cover a retail store or a floor of an office building. No one at the time could have foreseen the coming impact of low-cost, high-capacity, unlicensed digital radios.

The new category was originally called spread spectrum because the early rules required the signal to take up a larger range of frequencies than is strictly necessary to carry the data. Most other radio signals of that era, by contrast, were restricted to narrow slices of the spectrum. Spread spectrum evolved over the next few decades into today’s Wi-Fi, Bluetooth, ZigBee, and hundreds of lesser-known protocols.

Many of us have a dozen or more of these transmitters literally within arm’s reach, built into our phones, tablets, laptops, e-readers, music players, cameras, cordless phones—almost everything with a battery. The devices’ unlicensed status means anyone can use them anywhere without permission from anybody.

The authors of some specifications had the foresight to build in anti-interference measures. Wi-Fi can automatically select the least congested channel available; if interference still occurs, it downshifts to slower, more robust protocols. Bluetooth, which hops among dozens of frequencies, avoids those showing the heaviest use. ZigBee uses narrow channels, just a couple of megahertz wide, which fit in between other users, a technique that succeeds even in congested environments.
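
The two ideas in the paragraph above, picking the quietest channel and dropping the busiest frequencies from a hop set, can be sketched in a few lines. This is a minimal illustration only, not the actual Wi-Fi or Bluetooth algorithms; the function names, the occupancy figures, and the 0.5 threshold are all assumptions made for the example.

```python
import random

def least_congested(occupancy):
    """Return the channel with the lowest measured occupancy (0.0 to 1.0)."""
    return min(occupancy, key=occupancy.get)

def adaptive_hop_set(occupancy, threshold=0.5, min_channels=2):
    """Return the channels a frequency hopper should keep using.

    Channels at or above the (hypothetical) occupancy threshold are dropped.
    If fewer than min_channels remain, fall back to the quietest ones.
    """
    good = [ch for ch, load in occupancy.items() if load < threshold]
    if len(good) < min_channels:
        good = sorted(occupancy, key=occupancy.get)[:min_channels]
    return sorted(good)

def hop_sequence(channels, hops, seed=0):
    """Pick a pseudo-random sequence of hops from the allowed channels."""
    rng = random.Random(seed)
    return [rng.choice(channels) for _ in range(hops)]

# Hypothetical measurements: fraction of time each channel was busy.
wifi_load = {1: 0.70, 6: 0.20, 11: 0.45}
hopper_load = {0: 0.10, 1: 0.90, 2: 0.30, 3: 0.80}

best = least_congested(wifi_load)          # channel 6 is the quietest
allowed = adaptive_hop_set(hopper_load)    # channels 1 and 3 are dropped
sequence = hop_sequence(allowed, hops=8)   # hops only among channels 0 and 2
```

A real device would refresh the occupancy measurements continually, since a channel that is quiet now may be busy a moment later.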

If you doubt that these anti-interference techniques are effective, log your tablet onto the Wi-Fi in a crowded Starbucks and hook up your Bluetooth keyboard and headphones. Notice that everyone else is doing the same. Then watch the barista turn on the microwave oven, which generates radio energy in the very same frequency band that you and many others are using. Everyone’s devices still function: The Wi-Fi and Bluetooth signals move smoothly aside to accommodate the microwave without anyone so much as noticing.

Some unlicensed devices mitigate interference with a “listen before talk” protocol, which is basically an automated version of the Titanic-era method. Others, like RFID tags and some smart utility meters, simply transmit when they’re ready. Because these devices are low power and transmit in short, widely separated bursts, whatever interference they cause is generally tolerable.
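
As a rough sketch of “listen before talk,” the function below checks whether the channel is busy and, if so, waits a random number of time slots before listening again. The slot model, the parameter names, and the limits on attempts and backoff are assumptions for illustration; real protocols define their own timing rules.

```python
import random

def listen_before_talk(channel_busy, max_attempts=5, max_backoff_slots=8, rng=None):
    """Return the time slot in which transmission starts, or None on giving up.

    channel_busy is a caller-supplied function that takes a slot number and
    returns True if another transmitter is heard in that slot.
    """
    rng = rng or random.Random()
    slot = 0
    for _ in range(max_attempts):
        if not channel_busy(slot):
            return slot  # channel is clear, so transmit now
        # Channel is busy: wait 1 to max_backoff_slots slots, then listen again.
        slot += 1 + rng.randrange(max_backoff_slots)
    return None  # channel never cleared within the allowed attempts

# Hypothetical traffic: another station occupies slots 0 through 9.
start = listen_before_talk(lambda s: s < 10, max_attempts=50, rng=random.Random(1))
```

The random wait matters: if every waiting radio listened again at the same instant, they would all find the channel clear together and collide.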

Even more than mobile phones and other licensed radio applications, unlicensed communications have mushroomed. Fortunately, the power and range of all these unlicensed transmitters have both been dropping, which has helped to make room for more of them. But to keep interference from becoming a dire problem in the future, particularly with the arrival of 5G, engineers will need to find new ways for many different transmitters to share the same airwaves.

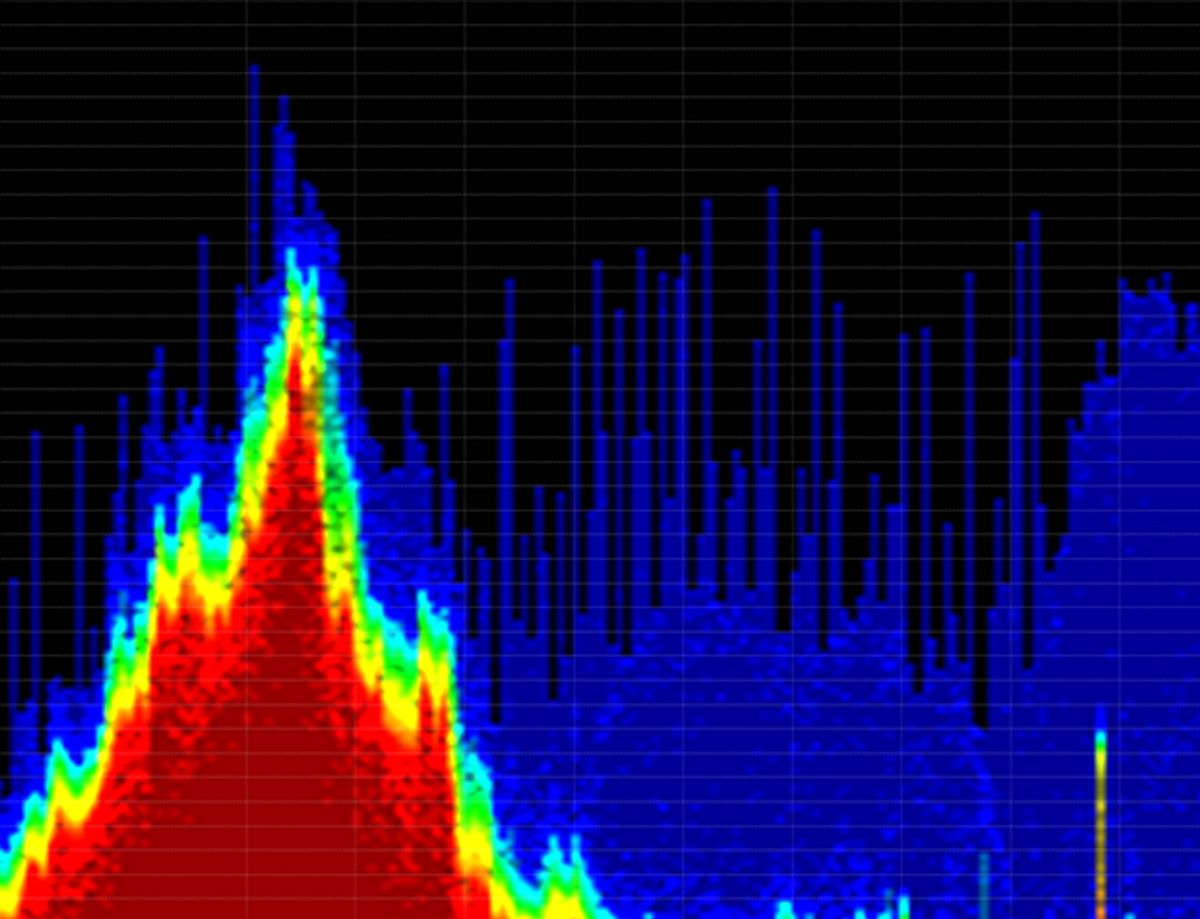

Newcomers to the interference problem sometimes suggest monitoring the spectrum to find vacant frequencies available for use. This makes sense on the surface, but if you dig deeper you’ll discover that it’s surprisingly hard to do.

One of the early attempts to promote such spectrum sensing was the FCC’s “interference temperature” proceeding, a legal rulemaking that the commission launched in 2003. Proponents envisioned a nationwide grid of sensing stations that continuously monitor specified bands and tell radios when and where it is safe to transmit. This concept probably looked good in PowerPoint, but it’s a poor fit for the real world.

Informed commenters pointed out that even very sensitive detectors wouldn’t register much of the activity. They’d routinely miss even strong signals aimed away from the sensor, such as satellite uplinks and fixed microwave links. The detectors might also miss activity in bands carrying low-power signals: GPS and other satellite downlinks, celestial sources of interest to radio astronomers, search-and-rescue beacons, distant TV stations, and more. To reliably detect all of these, a sensing device would have to be situated within the beams of directional transmitters, or, for other kinds of radio signals, be as large and sensitive as the receivers used. The FCC dropped the interference-temperature idea four years later.

The commission suffered a different kind of setback with sensing technology when it opened additional frequencies to the grandiosely named Unlicensed National Information Infrastructure (U-NII), a high-frequency form of Wi-Fi that is also authorized in Europe, Israel, Turkey, and several Asian countries. In the United States, the new U-NII bands—used for wireless routers, wireless LAN connections, and the like—overlapped those used by certain government radars, including airport weather radars, which are of course important for maintaining aviation safety.

Reasonably enough, the FCC required U-NII devices to sense and avoid interfering with the radars. Despite the radars’ strong signals, formulating appropriate rules and test procedures proved unexpectedly difficult. And after those rules were in place, some radars experienced interference anyway, so the FCC published a list of the weather-radar locations and asked U-NII users for help in avoiding them. The interference continued. The FCC tracked down several of the interfering transmitters and found that the software in each had been illegally modified to disable the sensing function. The FCC sanctioned the operators and amended its rules to require better software security and more effective sensing. Getting to that point took more than 10 years and a lot of coffee. There is still a lot of older equipment in the field that can easily be hacked—and that may cause dangerous interference in the future.

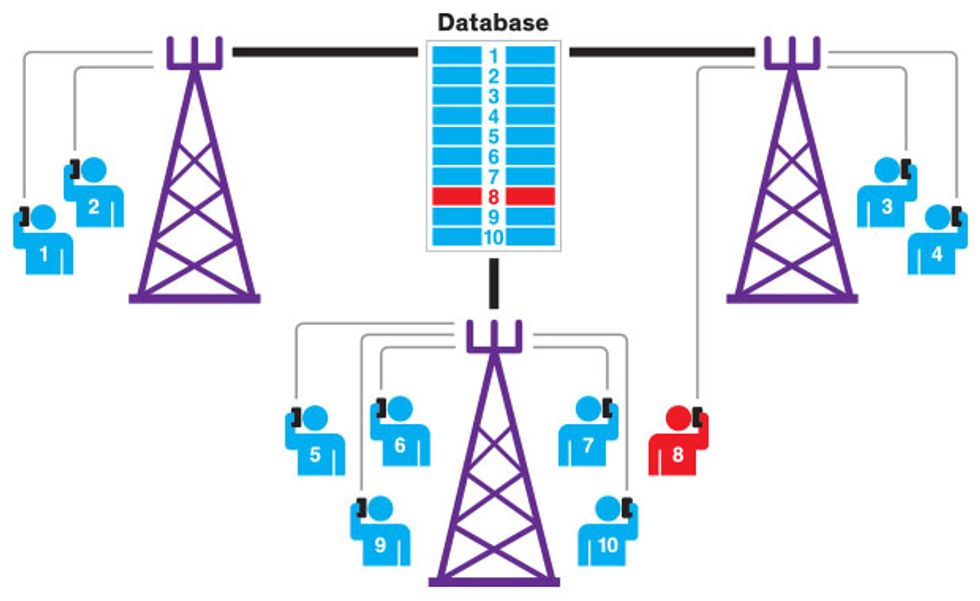

A different means of controlling interference relies on a database of protected-receiver locations and frequencies. This kind of database is key to operations in what’s known as TV white space (TVWS): locally vacant TV frequencies available for use without a license. TVWS communications have been authorized in the United States, Canada, the United Kingdom, and a few African countries. TVWS devices must avoid causing interference to TV stations, wireless microphones, and other protected users operating in the TV bands. U.S. proposals in the early 2000s considered two options for implementing TVWS. The first was to use database lookup to tell a geolocation-equipped TVWS device which channels are free at its location. The second was to ensure that the device had the capability to sniff the spectrum for any activity in a candidate channel. As the TVWS proceeding advanced and the difficulties of spectrum sensing became more apparent, the primary mechanism for avoiding interference shifted to the database.

Most U.S. TVWS devices must consult this national database at least once a day for information about available channels at their locations. (If a device lacks an Internet connection, it must instead consult a nearby device that in turn can access the database.) Equipment that uses just spectrum sensing to avoid interference is allowed, but only at low power and after passing stringent certification tests.
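
The database lookup described above might look something like the following sketch. It assumes each protected user is recorded as a circle around a point, which is a simplification chosen for this example and not a description of the real database. Every name, coordinate, channel number, and radius here is hypothetical.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_KM = 6371.0

def distance_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, using the haversine formula."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def free_channels(device_lat, device_lon, all_channels, protected):
    """Return the channels with no protected user within range of the device."""
    blocked = set()
    for entry in protected:
        d = distance_km(device_lat, device_lon, entry["lat"], entry["lon"])
        if d <= entry["radius_km"]:
            blocked.add(entry["channel"])
    return [ch for ch in all_channels if ch not in blocked]

def needs_refresh(last_check, now, max_age=timedelta(hours=24)):
    """True once the last lookup is at least a day old."""
    return now - last_check >= max_age

# Hypothetical protected stations near Boston and Philadelphia.
protected = [
    {"channel": 21, "lat": 42.36, "lon": -71.06, "radius_km": 100},
    {"channel": 30, "lat": 39.95, "lon": -75.17, "radius_km": 100},
]
available = free_channels(42.36, -71.06, [21, 30, 36], protected)  # device in Boston
```

In this example the Boston device is told to avoid channel 21 but may use channel 30, because the Philadelphia station that uses it is several hundred kilometers away.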

Why was spectrum sensing relegated to a minor role? Suppose you’re using a mobile TVWS device while standing next to a house that sports an outdoor TV antenna on a 5-meter-tall mast. A signal from a far-off television station could well be strong enough to provide an enjoyable picture in the house, yet be too weak for the mobile device to detect. If the mobile unit decides the channel is clear and starts to operate, it will overwhelm the TV signal.

So far the FCC hasn’t certified any sensing-only TVWS equipment as being compliant with its technical requirements, so you can’t buy such equipment yet. The Canadian and U.K. rules are more cautious: They require a database lookup and do not permit equipment to rely on sensing.

The FCC is planning a much more ambitious database for its new Citizens Broadband Radio Service (CBRS), which will operate at 3.55 to 3.7 gigahertz. Although modeled in some respects on TVWS, the CBRS database (which is not yet operational) must work on a timescale of fractional seconds, with devices checking it continually. It will assign frequency slots on the fly while prioritizing among three classes of users: fixed incumbents (including government radars), users who pay for superior access, and nonpaying general users. A network of sensors will locate the radar transmissions, allowing operation closer to the radars than would otherwise be possible. If this combination of a fast database and ongoing sensing can be made to work, it should let many more users than usual share the available spectrum and could become an irresistible model for other countries and other bands.
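
To make the three-class prioritization concrete, here is a minimal sketch of an allocator that serves incumbents first, then paying users, then general users. It is an illustration under stated assumptions, not the CBRS design: the tier labels, the `Request` type, and the idea of handing out whole numbered channels are all hypothetical simplifications.

```python
from dataclasses import dataclass

# Lower rank is served first. The tier names are labels chosen for this sketch.
TIER_RANK = {"incumbent": 0, "priority": 1, "general": 2}

@dataclass
class Request:
    user: str
    tier: str
    channels_wanted: int

def assign_channels(requests, channels):
    """Grant channels in tier order; later tiers get whatever is left.

    sorted() is stable, so requests within a tier keep their arrival order.
    """
    free = list(channels)
    grants = {}
    for req in sorted(requests, key=lambda r: TIER_RANK[r.tier]):
        n = min(req.channels_wanted, len(free))
        grants[req.user] = free[:n]
        free = free[n:]
    return grants

# Hypothetical demand for five channels.
requests = [
    Request("cafe", "general", 3),
    Request("radar", "incumbent", 2),
    Request("carrier", "priority", 2),
]
grants = assign_channels(requests, channels=[1, 2, 3, 4, 5])
```

Here the radar receives channels 1 and 2, the paying carrier receives 3 and 4, and the general user gets only channel 5 of the three it asked for. A working system would have to rerun this kind of assignment continually as radars and users come and go.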

Interference-prevention methods will really be put to the test with the next generation of mobile phones, 5G. Many of the predicted 5G applications will arise in places where large numbers of people congregate: stadiums, shopping malls, urban business districts, hospitals, university campuses, and the like. Having so many devices operating within a small area will challenge conventional methods for preventing interference.

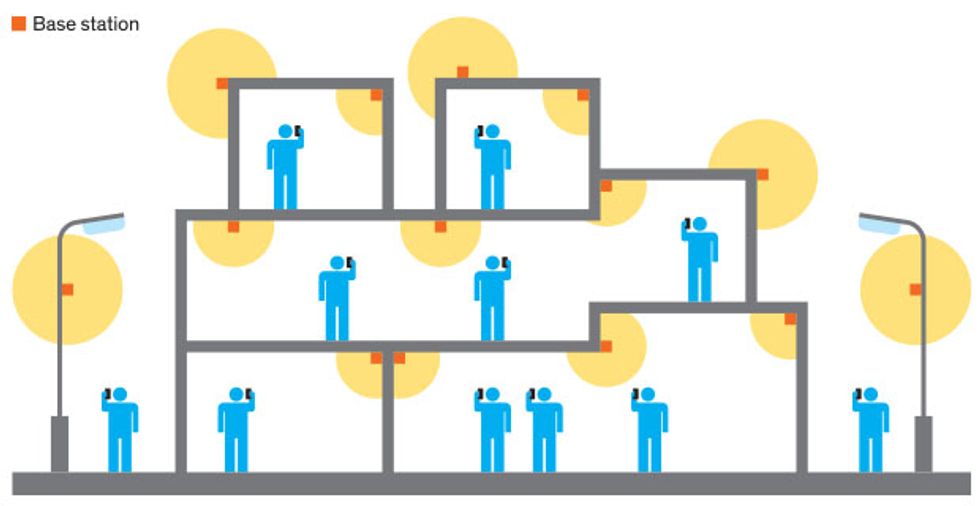

The FCC has a proceeding under way to mark out the foundations of 5G in bands between 24 and 40 GHz. There will be enough bandwidth to carry many times the data that today’s 4G can handle. But at those frequencies, signal propagation becomes an issue. Assuming a conventional base-plus-mobiles setup, every mobile handset will have to be within a few hundred meters of its base, with direct line of sight. Worse, because these signals cannot reliably penetrate building materials, using your 5G device indoors will require indoor base stations, at least one per floor of an office building.

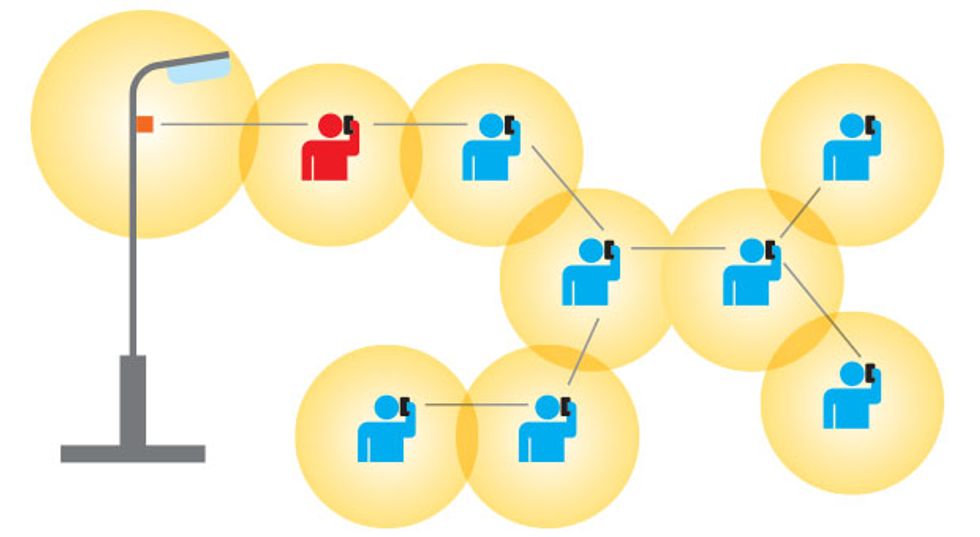

One way to get around these limitations is with an approach called mesh networking, which uses intervening mobile devices to reach a unit that would otherwise be out of range. Mesh-networking protocols such as ZigBee and Thread now connect unlicensed devices. Cities in Afghanistan and Kenya use an open-source mesh network system called FabFi for Internet access. So far, though, the idea has not caught on with wireless carriers. One reason for that lack of interest is that the available bandwidth of the connection goes down with each hop along the mesh. But high data capacity at 5G frequencies should nonetheless allow mesh networks to provide users with satisfactory performance.
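
The drop in bandwidth with each hop can be illustrated with a deliberately simple model. It assumes that every hop in the chain shares one channel and that only one device can transmit at a time, so a path of n hops delivers roughly 1/n of the single-link rate. Real meshes with several radios or channels can do better; the function name and figures below are assumptions for the example.

```python
def end_to_end_rate(link_rate_mbps, hops):
    """Approximate throughput over a chain of hops that share one channel.

    Simplified model: each relay must receive a packet and then resend it
    on the same channel, so the hops take turns and the rate divides by
    the number of hops.
    """
    if hops < 1:
        raise ValueError("a path needs at least one hop")
    return link_rate_mbps / hops

# A hypothetical 1,000 Mb/s link relayed over one to four hops.
rates = [end_to_end_rate(1000, n) for n in range(1, 5)]
```

Under this model a gigabit-per-second link relayed over four hops still delivers about 250 megabits per second, which suggests why a high starting capacity could make the per-hop loss tolerable.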

The attractiveness of mesh networks for 5G means that we might see many more of these radio networks in the future, so it’s worth thinking about new ways to arrange their spectrum management. The centrally managed, nationwide databases used by TVWS and (soon) CBRS are, after all, very 20th century. Both operate in facilities separate from the radio systems they control and must connect to those systems over the Internet. Some authority must establish and maintain them, and at least in the case of CBRS, they require facilities that grow roughly in proportion to the population of devices being coordinated. All of this costs money.

A better approach to managing mesh networks could use the mobile handsets themselves to collectively keep track of their frequencies and locations. Communications would still be organized according to a database, but the information would not be held on one central computer. Rather, each mobile device in the network would automatically lend its hardware and software to form part of the ever-shifting database of information about all mobile units in the area.

When a user seeks to transmit, the handset would first query the collective database for a vacant frequency and then register its own location and frequency so as to obtain protection from later-arriving network participants. When the mobile unit ends its transmission, it would automatically notify its fellows, which would then make that frequency slot locally available. When a user powers down the device or carries it beyond the edge of the network, that device would hand off its component of the database to other handsets.
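
The query, register, release, and hand-off steps just described can be sketched as a small in-memory simulation. This is a toy model under heavy assumptions: it covers a single local area (so device locations are omitted), it ignores networking and conflicting simultaneous requests, and the class names, method names, and frequencies are all hypothetical.

```python
class Handset:
    def __init__(self, name):
        self.name = name
        self.shard = {}  # this handset's share of the records: frequency -> user

class MeshRegistry:
    """Frequency records spread across the handsets currently in the network."""

    def __init__(self, frequencies):
        self.frequencies = list(frequencies)
        self.handsets = {}

    def join(self, name):
        self.handsets[name] = Handset(name)

    def in_use(self):
        """Query every handset's shard and merge the results."""
        used = {}
        for h in self.handsets.values():
            used.update(h.shard)
        return used

    def _holder_for(self, freq):
        # Deterministic choice of which handset stores the record for freq.
        names = sorted(self.handsets)
        return self.handsets[names[self.frequencies.index(freq) % len(names)]]

    def acquire(self, name):
        """Find a vacant frequency and register it to the named handset."""
        if name not in self.handsets:
            raise KeyError(name)
        used = self.in_use()
        for f in self.frequencies:
            if f not in used:
                self._holder_for(f).shard[f] = name
                return f
        return None  # nothing vacant right now

    def release(self, name, freq):
        """Remove the record so the frequency becomes available again."""
        for h in self.handsets.values():
            if h.shard.get(freq) == name:
                del h.shard[freq]

    def leave(self, name):
        """Drop the departing handset's own frequencies; hand off its shard."""
        departing = self.handsets.pop(name)
        for h in self.handsets.values():
            for f in [f for f, user in h.shard.items() if user == name]:
                del h.shard[f]
        for f, user in departing.shard.items():
            if user != name and self.handsets:
                self._holder_for(f).shard[f] = user

# Hypothetical frequencies in MHz.
reg = MeshRegistry([28000, 28100, 28200])
for n in ("a", "b", "c"):
    reg.join(n)
fa = reg.acquire("a")   # first vacant frequency
fb = reg.acquire("b")   # next vacant frequency
reg.leave("a")          # a's frequency is freed as it departs
fc = reg.acquire("c")   # c picks up the frequency a gave up
reg.leave("b")          # b hands the record for c's frequency to c
```

After both departures, the one remaining handset holds the only remaining record, and no central server was involved at any point.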

This approach would have several advantages over a conventional centralized database. There would be no need for separate facilities or management, or for nationwide coverage. A mobile transmitter that needs a frequency in Boston, after all, has no interest in Philadelphia. The concept scales easily: Adding more devices to the network requires no new equipment beyond the mobile units themselves.

A swarm of mobiles working together to collectively operate a database and work harmoniously begins to resemble a science fiction “hive mind,” like the Borg of Star Trek. Of course, science fiction has often presaged reality, so such a system may well come to pass. Fortunately, the goal here is not to conquer the universe but just to keep those silly cat videos playing.

About the Author

Mitchell Lazarus has worked as an electrical engineer, psychology professor, education reformer, educational-TV developer, freelance writer, and most recently, a telecommunications lawyer. He is a partner with the law firm Fletcher, Heald & Hildreth, where he specializes in regulatory approvals for new technologies. Lazarus blogs actively for the firm at CommLawBlog.com.