Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

NASA Swarmathon – April 18-20, 2017 – NASA KSC, Fla., USA

RoboBusiness Europe – April 20-21, 2017 – Delft, Netherlands

RoboGames 2017 – April 21-23, 2017 – Pleasanton, Calif., USA

ICARSC – April 26-30, 2017 – Coimbra, Portugal

AUVSI Xponential – May 8-11, 2017 – Dallas, Texas, USA

AAMAS 2017 – May 8-12, 2017 – Sao Paulo, Brazil

Austech – May 9-12, 2017 – Melbourne, Australia

Innorobo – May 16-18, 2017 – Paris, France

NASA Robotic Mining Competition – May 22-26, 2017 – NASA KSC, Fla., USA

IEEE ICRA – May 29-June 3, 2017 – Singapore

University Rover Challenge – June 1-3, 2017 – Hanksville, Utah, USA

IEEE World Haptics – June 6-9, 2017 – Munich, Germany

NASA SRC Virtual Competition – June 12-16, 2017 – Online

Let us know if you have suggestions for next week, and enjoy today’s videos.

The Japanese Volleyball Association and the University of Tsukuba have developed a seriously intimidating volleyball blocking robot:

There will be more on this at ICRA next month, and we’re hoping for a live demo.

[ New Scientist ]

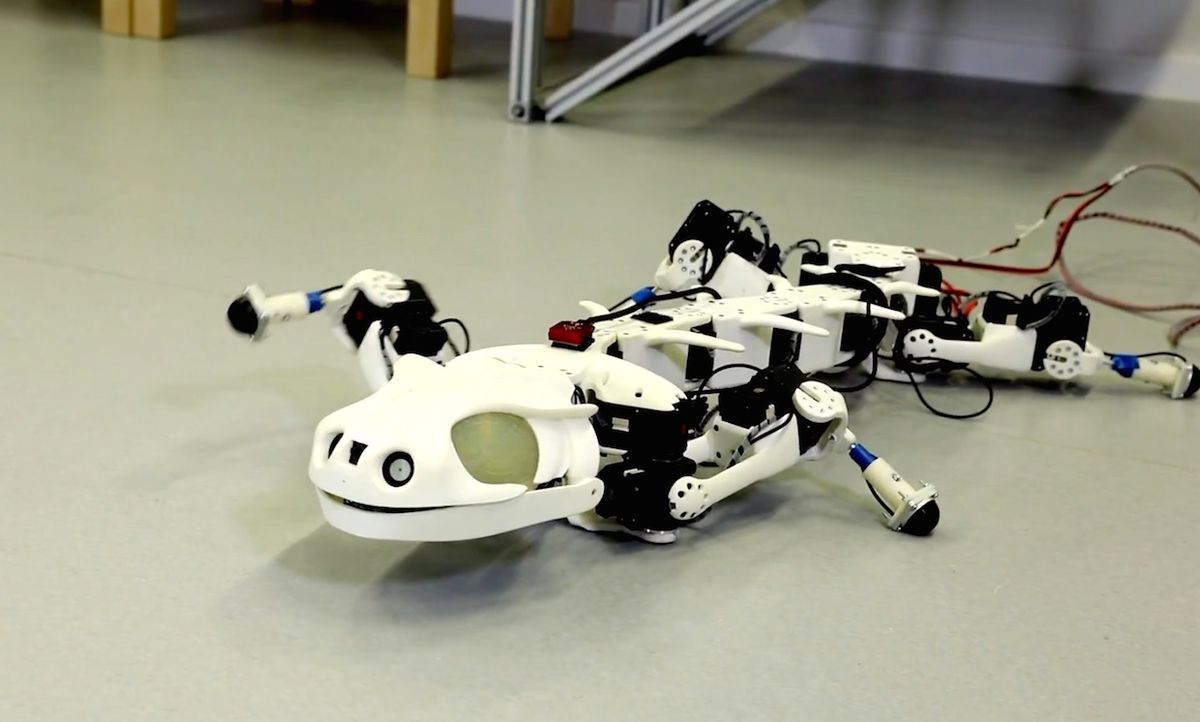

We’ve long been fans of Professor Auke Ijspeert’s bioinspired robot locomotion research at EPFL. Here’s an overview of his lab.

[ EPFL ]

OpenAI has created “the world’s first Spam-detecting AI trained entirely in simulation and deployed on a physical robot.” Trash that Spam!

Nice to see a Fetch robot out in the world doing manipulation tasks and assisting in some top-notch research, too.

[ OpenAI ]

Your Uncanny Valley thing of the week is a Tickle Me Elmo doll with the fur removed:

We post lots of videos about MARLO, but it’s not always obvious from those videos just how advanced the robot is. This video accompanies a paper from Michigan’s Dynamic Legged Locomotion Lab that explains how MARLO is able to achieve “the lowest [cost of transport] of any unsupported bipedal robot tested over rough terrain (at a faster speed than any other bipedal benchmark).”

For more details, the full paper is linked below.
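Cost of transport is the usual yardstick behind that claim: a dimensionless ratio of average power to weight times speed, where lower means more efficient locomotion. The minimal Python sketch below computes it; the function name and the example numbers are hypothetical (they are not MARLO's measured values), and papers differ on whether the power term is electrical or mechanical, so check the linked paper for its exact definition.

```python
# Cost of transport (CoT): a dimensionless measure of locomotion efficiency.
# CoT = P / (m * g * v)
#   P: average power draw (W)  -- electrical vs. mechanical varies by paper
#   m: robot mass (kg)
#   g: gravitational acceleration (m/s^2)
#   v: average forward speed (m/s)

G = 9.81  # m/s^2


def cost_of_transport(power_w: float, mass_kg: float, speed_m_s: float) -> float:
    """Return the dimensionless cost of transport; lower is more efficient."""
    if mass_kg <= 0 or speed_m_s <= 0:
        raise ValueError("mass and speed must be positive")
    return power_w / (mass_kg * G * speed_m_s)


if __name__ == "__main__":
    # Hypothetical numbers for illustration only, not MARLO's data.
    cot = cost_of_transport(power_w=200.0, mass_kg=60.0, speed_m_s=1.0)
    print(f"CoT = {cot:.3f}")
```

Because CoT normalizes by weight and speed, it lets robots of very different sizes be compared on the same scale, which is why it is the standard benchmark for legged robots.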

To demonstrate the capabilities of their Cataglyphis rover (which won a NASA robotics competition a few years ago), researchers at WVU have programmed it to autonomously deploy and retrieve Easter eggs. The next step, we assume, is teaching the robot the most important part of hunting Easter eggs: knocking over small children who are in your way.

[ WVU IRL ]

Thanks, Professor Gu!

This is an interesting package-sorting setup in China. It’s a bit strange that a human needs to be involved here at all, frankly, but it could simply be that humans are still cheaper than robots for some tasks. Not as many tasks as they used to be, though.

Chinese delivery firm Shentong is moving to embrace automation. Orange robots at the company’s sorting stations are able to identify the destination of a package through a code scan, virtually eliminating sorting mistakes. Shentong’s army of robots can sort up to 200,000 packages a day, and are self-charging, meaning they are operational 24/7. The company estimates its robotic sorting system is saving around 70 percent of the costs a human-based sorting line would require.

[ People’s Daily ]

Happy National Robotics Week from Sphero <3

[ Sphero ]

RxRobots programs Nao robots to help children handle extremely difficult situations. This includes time in the hospital, but also things like preparing to testify in court:

[ RxRobots ]

I’m not sure how recent this video of SAFFIR is, but it looks competent at hose handling while walking:

[ Virginia Tech ]

Interesting (and award-winning!) new research on soft dielectric actuators from UCSD:

Recently, dielectric elastomer actuators (DEAs) have gathered interest for soft robotics due to their low cost, light weight, large strain, low power consumption, and high energy density. However, developing reliable, compliant electrodes for DEAs remains an ongoing challenge due to issues with fabrication, uniformity of the conductive layer, and mechanical stiffening of the actuators caused by conductive materials with large Young’s moduli. In this work, we present a method for preparing, patterning, and utilizing conductive fluid electrodes. Further, when we submerse the DEAs in a bath containing a conductive fluid connected to ground, the bath serves as a second electrode, obviating the need for depositing a conductive layer to serve as either of the electrodes required of most DEAs. When we apply a positive electrical potential to the conductive fluid in the actuator with respect to ground, the electric field across the dielectric membrane causes charge carriers in the solution to apply an electrostatic force on the membrane, which compresses the membrane and causes the actuator to deform. We have used this process to develop a tethered submersible robot that can swim in a tank of saltwater at a maximum measured speed of 9.2 mm/s. Since saltwater serves as the electrode, we overcome buoyancy issues that may be a challenge for pneumatically actuated soft robots and traditional, rigid robotics. This research opens the door to low-power underwater robots for search and rescue and environmental monitoring applications.

[ UCSD ]
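To see why stiff electrode materials are a problem, it helps to look at the standard parallel-plate model of a dielectric elastomer actuator: the effective electrostatic pressure on the membrane is p = ε0·εr·(V/z)², and for small strains the membrane thins by roughly s_z = -p/Y. A stiffer stack (larger Young’s modulus Y) therefore deforms less at the same voltage. The Python sketch below assumes that textbook model applies, treating the conductive fluid and the grounded saltwater bath as the two electrodes; the function names and example values are hypothetical and not taken from the UCSD work.

```python
# Textbook parallel-plate (Maxwell stress) model for a dielectric elastomer
# actuator. Assumes a thin, linear-elastic membrane with compliant electrodes
# on both faces (here, conductive fluid inside and grounded saltwater outside).

EPSILON_0 = 8.854e-12  # vacuum permittivity, F/m


def maxwell_pressure(voltage_v: float, thickness_m: float, rel_permittivity: float) -> float:
    """Effective pressure (Pa) compressing the membrane: p = eps0 * eps_r * (V / z)^2."""
    if thickness_m <= 0:
        raise ValueError("membrane thickness must be positive")
    field_v_per_m = voltage_v / thickness_m
    return EPSILON_0 * rel_permittivity * field_v_per_m ** 2


def thickness_strain(pressure_pa: float, youngs_modulus_pa: float) -> float:
    """Small-strain estimate of thickness strain (negative means thinning): s_z = -p / Y."""
    if youngs_modulus_pa <= 0:
        raise ValueError("Young's modulus must be positive")
    return -pressure_pa / youngs_modulus_pa


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    p = maxwell_pressure(voltage_v=5_000.0, thickness_m=100e-6, rel_permittivity=3.0)
    s = thickness_strain(p, youngs_modulus_pa=1.0e6)
    print(f"pressure = {p / 1e3:.1f} kPa, thickness strain = {s:.3f}")
```

Note that pressure scales with the square of voltage, so doubling V quadruples p, while doubling the effective stiffness halves the strain. That trade-off is the motivation for fluid electrodes that add conductivity without adding stiffness.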

Seeing IIT’s HyQ quadruped walking on slopes like these is very impressive:

You can find the paper, “High-slope terrain locomotion for torque-controlled quadruped robots,” at the link below.

[ IIT ]

Well, people are officially running out of interesting things to do with drones.

[ YouTube ]

It’s a re-edit of an old (2009, I think?) video, but this Motoman robot making okonomiyaki gets me every time because it’s sooo delicious looking:

[ Yaskawa ]

Magnus Egerstedt, director of Georgia Tech’s Institute for Robotics and Intelligent Machines (IRIM), describes his views on robotics research and plans for the Robotarium, which is not quite finished yet, but sounds promising anyway:

[ Georgia Tech ]

PAL Robotics interviews Professor Michael Anderson from the University of Hartford about robot ethics:

[ PAL Robotics ]

Oliver Brock from Technische Universität Berlin gave a talk at SoftBank Robotics on operating robots in unstructured environments:

The biggest success story of robotics remains factory automation. But our field is struggling to repeat this success in any other domain, especially in domains we call "unstructured." Among the main culprits for this difficulty have been uncertainty and the high-dimensionality of configuration spaces associated with versatile robots. In my talk, I will argue that our field has found successful solutions for uncertainty and high-dimensionality, as long as they pertain to the robot itself. I will argue that after alleviating these two problems, a new problem has become the next bottleneck: the variability of the environment. On our path towards general robotics, we must focus on handling this variability. This might seem completely obvious, but I believe the most important implications of this realization have been largely ignored. I will present some attempts to address the variability in the environment, including interactive perception and learning with robotic priors. I will also place these solutions in the context of other attempts to address variability, such as big data and deep learning.

The Center for Brains, Minds and Machines at MIT presents a talk by Amnon Shashua, the CTO and Chairman of Mobileye, on “The Convergence of Machine Learning and Artificial Intelligence Towards Enabling Autonomous Driving.”

[ CBMM ]

This week’s CMU RI Seminar is from Sergey Levine, assistant professor at UC Berkeley, on “Deep Robotic Learning.”

Deep learning methods have provided us with remarkably powerful, flexible, and robust solutions in a wide range of passive perception areas: computer vision, speech recognition, and natural language processing. However, active decision-making domains such as robotic control present a number of additional challenges: standard supervised learning methods do not extend readily to robotic decision making, where supervision is difficult to obtain. In this talk, I will discuss experimental results that hint at the potential of deep learning to transform robotic decision making and control, present a number of algorithms and models that can allow us to combine expressive, high-capacity deep models with reinforcement learning and optimal control, and describe some of our recent work on scaling up robotic learning through collective learning with multiple robots.

[ CMU RI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.