Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next few months; here’s what we have so far (send us your events!):

ELROB 2018 – September 24-28, 2018 – Mons, Belgium

RoboBusiness – September 25-27, 2018 – Santa Clara, Calif., USA

ARSO 2018 – September 27-29, 2018 – Genoa, Italy

ROSCon 2018 – September 29-30, 2018 – Madrid, Spain

IROS 2018 – October 1-5, 2018 – Madrid, Spain

Japan Robot Week – October 17-19, 2018 – Tokyo, Japan

ICSR 2018 – November 28-30, 2018 – Qingdao, China

Let us know if you have suggestions for next week, and enjoy today’s videos.

The latest version of the self-solving Rubik’s Cube is adorable in how it tries to throw itself off the table it’s solving itself on:

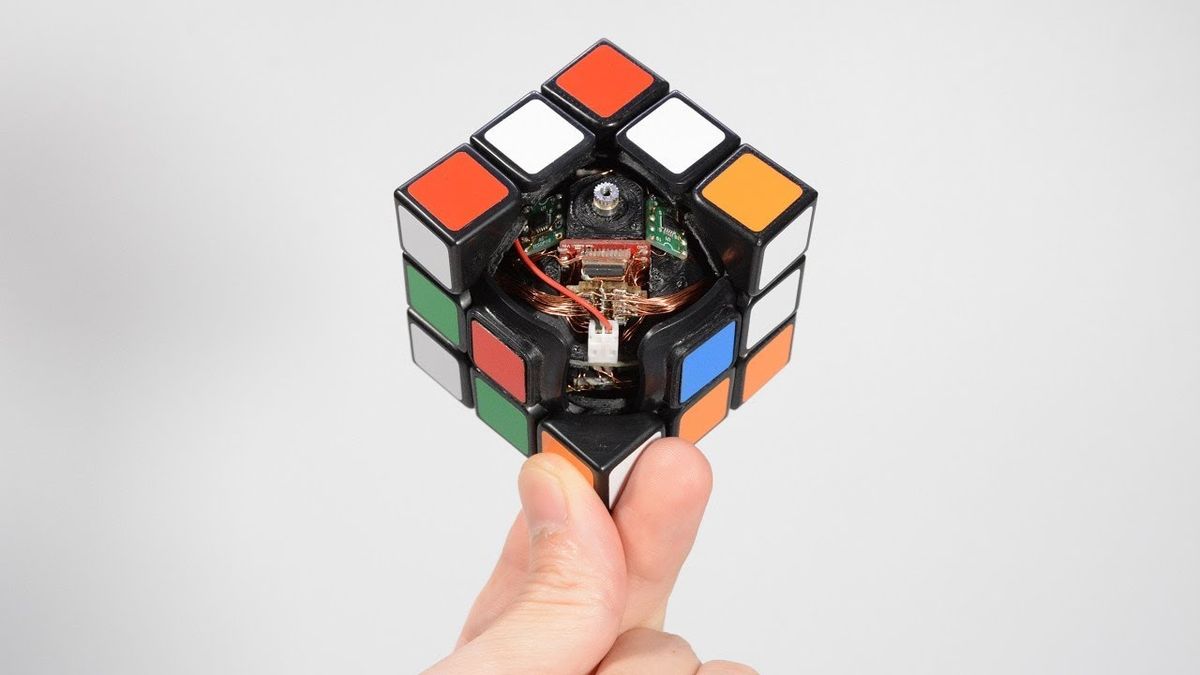

Not exactly an optimized solve, but we’ll forgive it, because that just means we get to watch it for longer. And here’s what it looks like if you’re holding it:

This is from the same folks who brought you the future of telepresence:

[ Human Controller ]

When you think of robotics, you likely think of something rigid, heavy, and built for a specific purpose. New “Robotic Skins” technology developed by Yale researchers flips that notion on its head, allowing users to animate the inanimate and turn everyday objects into robots.

To demonstrate the robotic skins in action, the researchers created a handful of prototypes. These include foam cylinders that move like an inchworm, a shirt-like wearable device designed to correct poor posture, and a device with a gripper that can grasp and move objects.

[ Yale ]

This is a clever bit of robot navigation: Rather than worry about obstacles, just draft behind humans who are going the same way you are, and let them do all the sensing.

We propose a novel navigation system for mobile robots in pedestrian-rich sidewalk environments. We developed a group surfing method which aims to imitate the optimal pedestrian group for bringing the robot closer to its goal. For pedestrian-sparse environments, we propose a sidewalk curb following method. Both approaches are shown in this video.

[ CARIS Lab ]

Some new footage from UMD’s Cassie here, including what looks like some accidental slip recovery that’s pretty impressive. And obligatory falling over at the end.

[ Paper ]

The LittleBot kit by Slant Robotics—which calls it “the world’s most affordable and simple robotics kit”—is now on Kickstarter.

The basic kit is only US $28, but a $79 pledge puts you down for a version with a bunch of sensors that’ll make it way more fun.

[ Kickstarter ]

Thanks Ben!

Last month we posted about mobile robots that could 3D print structures, and they’re now able to move and print at the same time, which is new.

[ NTU CRI ]

Using custom light design boards, ArtBoats glides across the water with changing color strips to create moonlit light paintings. The project, launched by MIT Media Lab PhD student Laura Perovich, aims to light up rivers to excite and engage local communities about their water.

[ MIT Media Lab ]

Rumble, crush and plow into the new UBTECH JIMU Robot BuilderBots Series: Overdrive Kit. With this kit you can create buildable, codable robots like DozerBot and DirtBot or design your own JIMU Robot creation. The fun is extended with the Blockly coding platform, allowing kids ages 8 and up to build and code these robots to perform countless programs and tricks.

[ UBTECH ]

A very Kiva-like pitch for an autonomous robot cart from 6 River Systems:

What’s it like to work with automated warehouse robots? Kind of like having your own personal helper. Meet Chuck, a collaborative mobile robot that works with employees to get the job done faster and better. With 6 River Systems and Chuck, warehouses are doubling or tripling their pick rates, at a fraction of the cost of traditional goods-to-person automation. When you need a better way, follow the leader!

[ 6 River Systems ]

Flying at 28,800 km/h, 400 km above Earth, from the International Space Station, ESA astronaut Alexander Gerst controlled a robot called Justin on 17 August 2018. Justin was stationed at the DLR German Aerospace Center in Oberpfaffenhofen, Germany.

ESA has run multiple experiments from the Space Station with robots to test the network, the control system and the robots on Earth. This is a new area for everybody involved and each aspect needs to be tested. This is the third in a series of SUPVIS-Justin orbital experiments. The first was carried out by ESA astronaut Paolo Nespoli in August 2017.

The SUPVIS-Justin experiment took around four hours in total. This included set-up, software updates and two hours of interaction between Alexander and Justin.

The tests were chosen to enact future scenarios in which astronauts orbiting distant planets and moons can instruct robots to do difficult or dangerous tasks and set up base before landing for further exploration. The experiment fits in ESA’s strategy to prepare for further exploration of our Universe.

[ ESA ]

We wrote about this exosuit when it was undergoing military testing, and Harvard has continued to optimize its performance:

[ Wyss Institute ]

KUKA partner andyRobot (AKA Andy Flessas) has developed a plug-in for industry-leading animation software, Autodesk Maya, that allows KUKA robots to be programmed by anyone who knows how to animate inside Maya. Simply by dragging the 3D model of the robot through space in the virtual world and setting keyframes, a robot program can be created. Called Robot Animator, andyRobot’s Maya plug-in is aimed squarely at creative professionals, but could also find use in other less creative robot programming endeavors. The technology is enabled by KUKA’s EntertainTech, a piece of hardware and software that allows for the robot animation to be turned into a robot program and drive the robot.

[ andyRobot ]

The AEROARMS project is one of those EU Horizon 2020 flagship robotics initiatives with a long-winded website, but “aero” and “arms” is pretty much all you need to know.

In Bavaria, your Münchner weißwurst are palletized by robot.

Since when do sausages come in a can?

[ Kuka ]

Ron Arkin is the guest on this episode of The Interaction Hour, a podcast from GA Tech hosted by Ayanna Howard.

The emergence of artificial intelligence in society has elicited visceral reactions from people the world over, many of whom, thanks to portrayals in popular culture, can’t quite decide whether they believe we are building the future – or destroying it. Are we actually dealing with “killer robots?” Why has the public perception become so polarizing? Can we trust algorithms to make appropriate and trustworthy decisions, or do we risk too much by turning power over to the robots? Professor Ron Arkin, an expert in robotics and roboethics, joins the podcast to discuss.

[ GA Tech ]

We’re still sad about what happened with Kuri, but whether or not it could have been a sustainable commercial product, the vision was certainly there. This SXSW talk from Mayfield CEO Kaijen Hsiao is still worth listening to.

[ Mayfield ]

In this week’s (particularly excellent) CMU RI Seminar, Herman Herman, director of the National Robotics Engineering Center (NREC) at CMU, shares “Lessons Learned from Two Decades of Robotics Development and Thoughts on Where We Go from Here.”

In this talk, Herman Herman will offer lessons learned from developing a variety of robots over the last two decades at the National Robotics Engineering Center. He will also offer his perspective on the future of autonomous robots in various industries, including self-driving cars, material handling and consumer robotics.

[ CMU RI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.