Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

European Robotics Forum – March 22-24, 2017 – Edinburgh, Scotland

NDIA Ground Robotics Conference – March 22-23, 2017 – Springfield, Va., USA

Automate – April 3-6, 2017 – Chicago, Ill., USA

ITU Robot Olympics – April 7-9, 2017 – Istanbul, Turkey

ROS Industrial Consortium – April 7, 2017 – Chicago, Ill., USA

U.S. National Robotics Week – April 8-16, 2017 – USA

NASA Swarmathon – April 18-20, 2017 – NASA KSC, Florida, USA

RoboBusiness Europe – April 20-21, 2017 – Delft, Netherlands

RoboGames 2017 – April 21-23, 2017 – Pleasanton, Calif., USA

ICARSC – April 26-30, 2017 – Coimbra, Portugal

AUVSI Xponential – May 8-11, 2017 – Dallas, Texas, USA

AAMAS 2017 – May 8-12, 2017 – Sao Paulo, Brazil

Austech – May 9-12, 2017 – Melbourne, Australia

Innorobo – May 16-18, 2017 – Paris, France

Let us know if you have suggestions for next week, and enjoy today’s videos.

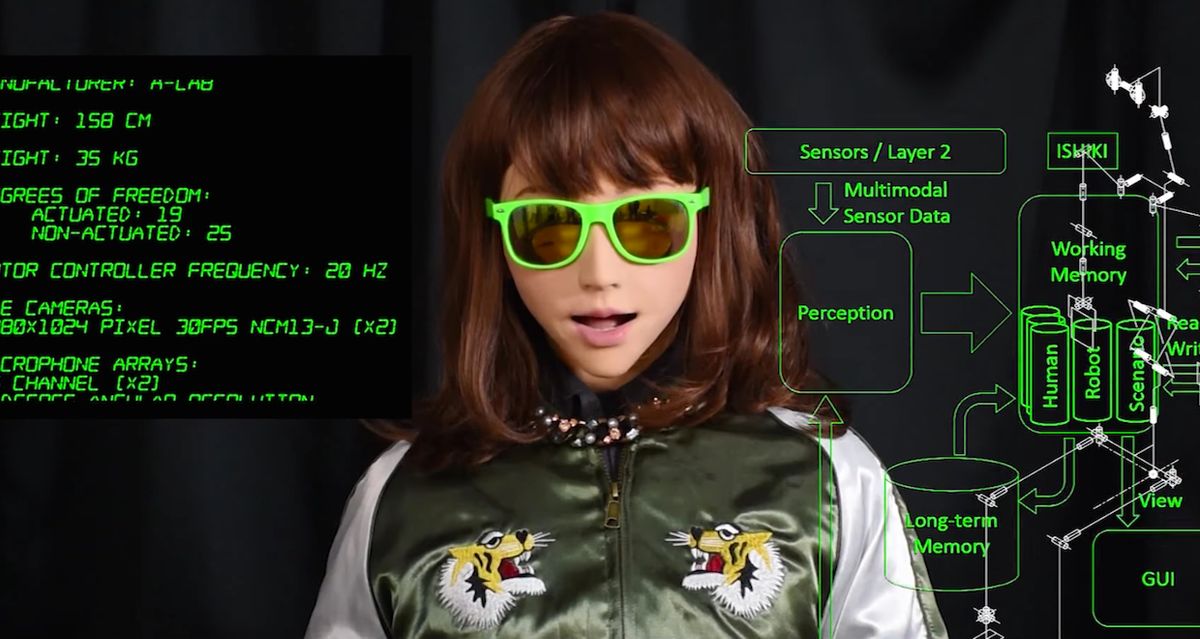

The winner of the best video competition at HRI 2017 was this masterpiece from Professor Hiroshi Ishiguro (aka “The Man Who Made a Copy of Himself”) and colleagues Dylan F. Glas, Malcolm Doering, Phoebe Liu, and Takayuki Kanda from the Advanced Telecommunications Research Institute in Kyoto:

Stay tuned for the live concert tour, and read the paper at the link below.

[ “Robot’s Delight: A Lyrical Exposition on Learning by Imitation from Human-human Interaction” ]

Dreamer’s disembodied head can follow you without blinking. Ever.

And apparently, this is how UT Austin students choose to spend their Saturday nights: Trying to get a robot to poke itself in the eye.

[ UT Austin ]

Combining a UGV with a Fotokite (featured on our Robot Gift Guide) seems like a very safe, reliable, and effective way of inspecting nuclear plants:

An example of an Endeavor PackBot with a tethered Fotokite UAV demonstrated for safe nuclear plant inspection and decommissioning. This is an extension of our AirJoey work where a mother robot carries and supports daughter UAVs. This was funded by the DOE Environmental Management division through a cooperative agreement with Sandia National Labs.

[ CRASAR ]

Sphero is here to wish you a happy St. Patrick’s Day:

[ Sphero ]

RoboBoat 2017 takes place this June in Daytona, Fla.:

[ Roboboat ]

Try to crash this drone, which carries a suite of TeraRanger sensors, into an obstacle. Go on, try it!

[ TeraRanger ]

I’m glad a couple of Sawyers have a job at DHL in the U.K., but for the life of me I can’t figure out what they’re accomplishing:

[ Rethink Robotics ]

Endeavor Robotics, the military and security robot company spun out of iRobot, offers a behind-the-scenes look at how it builds and tests its Made in the USA ground robots:

NXROBO’s BIG-I home robot was part of a demo smart bedroom from Haier:

It’s still a little bit difficult to tell how functional or useful this robot will be in the real world, but I’m liking its design more and more.

[ NXROBO ]

You’ll probably need to turn on subtitles for this video from Pollen Robotics, but it’s worth it:

Meed has been designed as a robot accessible for all. It allows the discovery of robotics and learning to code. This journey will take place among new adventures that you will share with Meed. It is intended for young girls and boys, starting from 10, but also for anyone interested in discovering robotics in a new, entertaining, and poetic way.

Once they figure out what Meed will be and do (you can contribute to this, if you have ideas), they’ll be crowdfunding it, so stay tuned.

[ Pollen Robotics ]

From the University of Zurich:

We propose a novel collaborative transport scheme, in which two quadrotors transport a cable-suspended payload at accelerations that exceed the capabilities of previous collaborative approaches, which make quasi-static assumptions. Furthermore, this is achieved completely without explicit communication between the collaborating robots, making our system robust to communication failures and making consensus on a common reference frame unnecessary. Instead, they only rely on visual and inertial cues obtained from on-board sensors.

They’ll be presenting a paper on this at ICRA 2017 in Singapore.

[ UZH RPG ]

The simplest solution is always the most robust. With this in mind, we designed a four wheeled rover able to easily overcome vertical steps of more than 150% of its ground clearance without any active control. In other words, ROVéo doesn’t need to actively change its shape to overcome obstacles. The extended mobility is purely provided by the mechanical design of its chassis.

[ Rovenso ]

Robots playing games, drumming, serving drinks... I would say that Kuka’s booth at the China International Industry Fair was going overboard, but that might imply that I don’t approve of everything that they were doing:

[ Kuka ]

The Takanishi Lab at Waseda University in Japan has uploaded a pile of old robot videos. There’s all kinds of weird stuff, but here’s a selection of the weirdest:

[ Takanishi Lab ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.