Video Friday is your weekly selection of awesome robotics videos, collected by your seafaring Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

Field Robot Event – June 14-18, 2016 – Haßfurt, Germany

EuroEAP 2016 – June 14-15, 2016 – Copenhagen, Denmark

RSS 2016 – June 18-22, 2016 – Ann Arbor, Mich., USA

European Land Robot Trial – June 20-24, 2016 – Eggendorf, Austria

Automatica 2016 – June 21-25, 2016 – Munich, Germany

ISR 2016 – June 21-22, 2016 – Munich, Germany

ICROM 2016 – June 23-25, 2016 – Singapore

The Rise of Machine Learning – June 24, 2016 – San Francisco, Calif., USA

UK Robotics Week – June 25-July 1, 2016 – United Kingdom

Hamlyn Symposium on Medical Robotics – June 25-28, 2016 – London, England

TAROS 2016 – June 28-30, 2016 – Sheffield, United Kingdom

RoboCup 2016 – June 30-July 4, 2016 – Leipzig, Germany

Amazon Picking Challenge – June 30-July 4, 2016 – Leipzig, Germany

IEEE AIM 2016 – July 12-15, 2016 – Banff, Canada

DLMC 2016 – July 13-15, 2016 – Zurich, Switzerland

ROS Industrial Workshop – July 14-15, 2016 – Singapore

MARSS 2016 – July 18-22, 2016 – Paris, France

IEEE WCCI 2016 – July 25-29, 2016 – Vancouver, Canada

Let us know if you have suggestions for next week, and enjoy today’s videos.

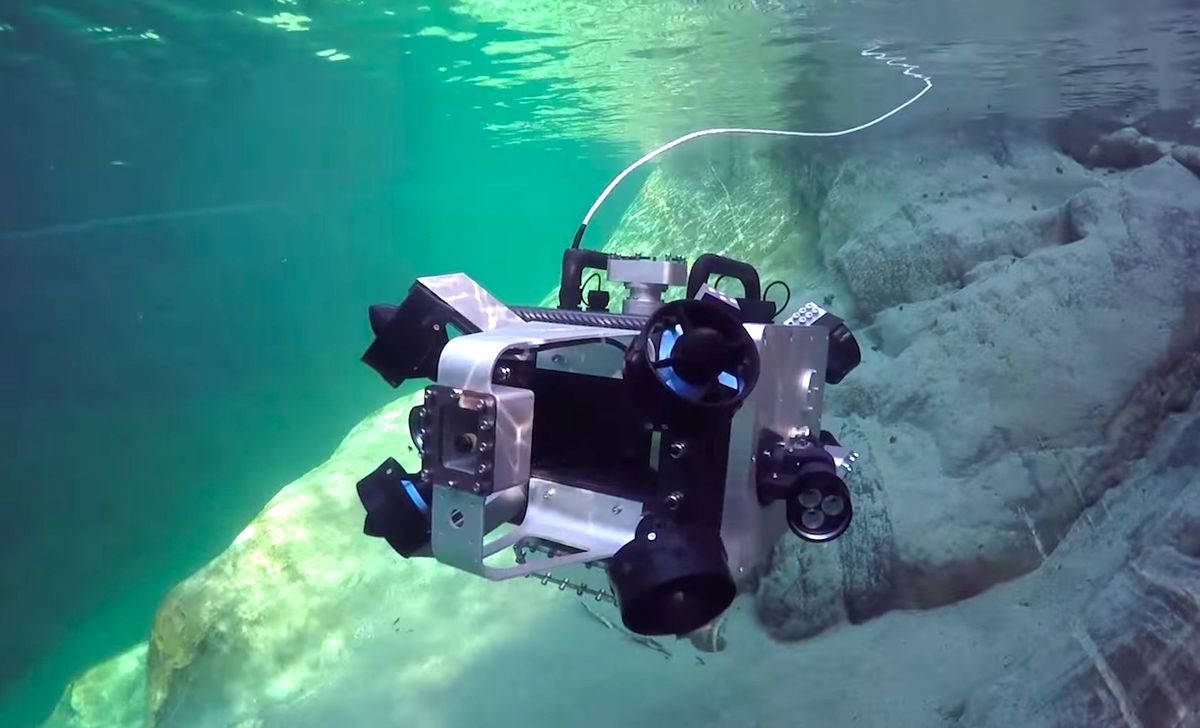

A team from ETH Zurich is working on a nifty little robot submarine called Scubo:

25% of all marine species live in coral reefs whereas coral reefs represent only a small fraction of the ocean. Within the last 30 years half of all corals have died already. However, there are selective measures in restoring coral reefs by cultivating and eventually statically outplanting them on reefs. Yet, in order to do so the corals as well as the reefs have to be measured by divers in a time-consuming process.

Our goal is to build a small and highly agile submersible that makes it possible to scan entire coral reefs. Additionally it should provide an intuitive way to explore foreign waters without any prior technical knowledge. Thereby Scubo is to be the first robot which meets the needs of the research as well as entertainment sectors.

In order to achieve our vision Scubo will be able to move omnidirectional, which means that it can move and turn in every direction. In this manner the object which has to be scanned can be looked at from every desired direction and angle. To obtain an intuitive steering we will implement six on-board cameras that cover the entire space around Scubo.

Yet, Scubo should not be restricted to scanning corals. Via five ports each user can connect his very own sensor or even a high-definition camera. Whether corals in the Caribbean, the shore of Lake Zurich or even a virtual dive in an aquarium - Scubo not only convinces with its captivating technology but also with its modern design. Innovation starts when science meets entertainment.

[ Scubo ]

David Pietrocola from Luvozo let us know about a new research version of their healthcare service robot:

Building on the success of Luvozo’s SAM service robot for healthcare settings, we are excited to announce that we’ve created a research version for university/industry labs, educators, and anyone looking to get moving quickly with a fully-capable, mid-sized indoor mobile robot. We’re a team of roboticists developing products every day to help improve quality of life for older adults, and SAM has become a great platform that we want to share with the research community. The SAM Research Platform is perfect for:

- Autonomous navigation and path planning

- Experimental testing of simulation results

- Multi-robot and cooperative teams

- Human-robot interaction

- New sensor testing and fusion

There’s lots more info on Luvozo’s website, except for how much it costs, but we’re told that it’s “competitive with other research platforms.” Hopefully, we’ll get a closer look at one of these in the very near future, so stay tuned for more.

[ Luvozo ]

Thanks David!

Over the last five years, the U.S. National Science Foundation and the National Robotics Initiative, unveiled by President Barack Obama in 2011, have funded 230 projects in 33 states, with an investment totaling more than $150 million. That’s a lot. Here’s a video to celebrate. And before you get annoyed at the music, it was made by robots. So you can still get annoyed, but get annoyed at the robots, not at the video.

Tax dollars unusually well spent.

[ NRI ]

DHL has successfully run a pilot test including robot technology for collaborative automated order picking in a DHL Supply Chain warehouse in Unna, Germany. The robot called EffiBOT from the French start-up Effidence is a new, fully automated trolley that follows pickers through the warehouse and takes care of most of the physical work. It is specifically designed to work safely with and around people. During the test, two robots supported the pickers by carrying the weight and automatically dropping off the orders once fully loaded. The warehousing staff highly appreciated the option to work hands-free and not having to push or pull heavy carts.

Well, this was inevitable, wasn’t it?

Next up: how to perform open heart surgery using two snake robots and an autonomous chainsaw.

[ Simone Giertz ] + [ Samy Kamkar ]

Dr. Conghui Liang at Nanyang Technological University in Singapore has a couple students, who may also be chipmunks, who put together some cool demos on a Nextage dual-arm robot:

[ RRC ]

The only thing better than a remote control robot rescue boat is a remote control robot rescue boat that you don’t even have to remote control:

EMILY has been upgraded since her deployment in Jan, 2016, assisting with the Syrian boat refugees in Greece, to have navigational autonomy (GPS waypoint). This is part of a project to allow lifeguards to designate where EMILY should go while they directly help people in more urgent distress.

[ EMILY ]

The new Dyson School of Design Engineering at Imperial College London has its first robot, DE NIRO (Design Engineering’s Natural Interaction Robot):

You talkin’ to me?

[ Imperial College ]

I hate getting dragged into “what is a robot” conversations, but if UW wants to tackle it, they can go right ahead:

Yaskawa Motoman is dedicated to inspiring students to become interested in STEM courses and careers in robotics. Hosting student tours provides a great opportunity to share why STEM robotics education is vital to workforce development. Students have the opportunity to learn about industrial robotics and the many career path opportunities that are available.

That’s right, kids: roboticists just have fun all day and are really cool. Also, Vernon is the best.

[ Yaskawa ]

There’s a new droid roaming around Disney’s Star Wars Launch Bay. No info about it yet, but I could believe that it’s fully autonomous: it looks like it’s probably got a shin-height LIDAR, and it’s following a fixed path around a pre-mapped environment, politely avoiding people along the way.

Werner Huber, Manager Highly Automated Driving at BMW Group, explains studies regarding BMW and autonomous driving. The question for BMW is always: How far can we go and how far should we go with automation regarding the promise “sheer driving pleasure”?

[ BMW ]

This is what happens when you feed a neural network a bunch of sci-fi movies and ask it to write you a script, and then you produce that script, and you add Thomas Middleditch:

Are you not entertained?

[ Ars Technica ]

The Campaign to Stop Killer Robots was at the U.N. in Geneva in April to present their views on lethal autonomous weapons. Here are three videos from some of the presenters, and there are more on their YouTube channel. Keep in mind that this is just one viewpoint on this issue, and while it’s certainly a valid one, it’s a complex issue that’s worth considering from different perspectives as well.

[ Campaign to Stop Killer Robots ]

And last, a panel from Microsoft Research: “Progress in AI: Myths, Realities, and Aspirations.”

Moderated by Eric Horvitz, Managing Director, Microsoft Research, and featuring panelists Fei-Fei Li, Associate Professor, Stanford University; Michael Littman, Professor of Computer Science, Brown University; Josh Tenenbaum, Professor, Massachusetts Institute of Technology; Oren Etzioni, Chief Executive Officer, Allen Institute for Artificial Intelligence; and Christopher Bishop, Distinguished Scientist, Microsoft Research.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.