You know how we keep saying that robots are designed for places that are dirty and dangerous? Yeah, we need to get ourselves some robots that can go in and help with the Ebola outbreak. That’s why I got all excited to see something about an Ebola-fighting robot in the news this week. But as it turns out, this thing is totally not a robot, since a human has to wheel it around, and then it just sits there and turns some UV lights on and off for five minutes. No sensing, no reacting to its environment, no autonomy or intelligence. It’s just a dumb machine. This isn’t to say that it’s not effective at what it does; it’s just not a robot.

Sigh.

To make ourselves feel a little bit better, we’ve done what we usually do, which is to fill our Friday with videos of robots that really are robots.

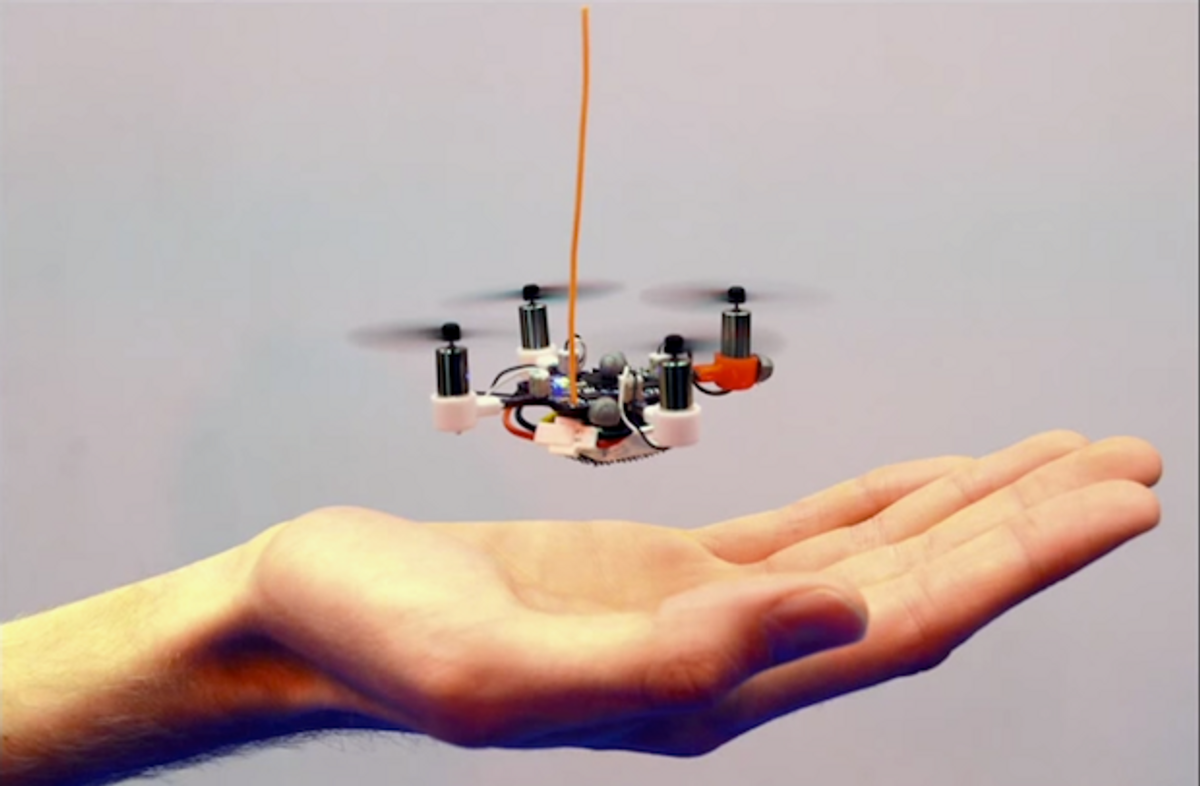

It’s so small! It’s so bouncy! It’s the Pico Quadrotor!

[ Vijay Kumar Lab ]

We’ve held off on posting videos from Team BlackSheep recently, since they do a lot of stuff with drones that’s fairly reckless and (depending on where you are) probably illegal. But this video is worth posting because it illustrates exactly why flying drones near populated areas (be it a village or a city) can be tricky and why you shouldn’t believe the hype about urban drone delivery. See if you can spot the wire that brought the drone down. I barely could, even after replaying it five times.

[ Team BlackSheep ]

iRobot’s new uPoint Multi-Robot Control System swaps out bulky controllers for a streamlined tablet interface that standardizes the operation of all of iRobot’s, um, robots. You get as many touchscreen controls as you can throw your fingers at, along with lots of other features designed to make driving the robots and operating their sensors and manipulators easy and intuitive:

- A virtual joystick that allows users to touch and drag anywhere on the main video feed to steer the robot

- Predictive drive lines that help guide operators through tight spots

- Autonomous driving modes including vector drive to hold a desired heading

- Simplified manipulation with direct control of the arm on a virtual model

- Data sharing from the operator’s controller to other team members or remote observers

- Easy switching on the same tablet between different robots operating in the vicinity

You can also multitask on the Android-based tablet and check your email in the middle of a mission. “Hi, hon, I’m just finishing with an IED. How’s your day going?”
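For the curious, "hold a desired heading" is a classic small control problem. Here's a minimal sketch of how a heading-hold mode could work, assuming a simple proportional controller. This is not iRobot's implementation (they haven't published one that we know of); the function names, the gain, and the turn-rate limit are all made up for illustration.

```python
import math

def wrap_angle(angle_rad):
    """Wrap an angle to the interval [-pi, pi) so the robot turns the short way."""
    return (angle_rad + math.pi) % (2 * math.pi) - math.pi

def heading_hold_turn_rate(desired_heading_rad, current_heading_rad,
                           gain=1.5, max_rate=1.0):
    """Return a turn-rate command (rad/s) that steers toward the desired heading.

    gain and max_rate are hypothetical tuning values, not uPoint parameters.
    """
    # Signed heading error, wrapped so 350 deg vs. 10 deg is -20 deg, not +340 deg.
    error = wrap_angle(desired_heading_rad - current_heading_rad)
    # Proportional control, clamped to what the drivetrain can plausibly do.
    rate = gain * error
    return max(-max_rate, min(max_rate, rate))

if __name__ == "__main__":
    # Robot is pointing at 10 degrees, operator asked for 350 degrees.
    cmd = heading_hold_turn_rate(math.radians(350), math.radians(10))
    print(f"turn rate command: {cmd:.3f} rad/s")
```

The only subtle part is the angle wrapping: without it, a robot asked to go from 10 degrees to 350 degrees would spin almost all the way around instead of nudging 20 degrees the other way.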

[ iRobot ]

The Ishikawa Watanabe Laboratory at the University of Tokyo is famous for combining high-speed vision and robotic manipulation. But now they’re applying the same vision-based strategy to control a biped robot.

Since the launch of the visual-feedback-based bipedal running project in 2009, we have designed a highly coordinated running experiment system, encompassing a 600 fps high-speed camera, real-time feedback controllers, image-processing programs, and high-power actuators. This video provides a brief understanding of how the project has progressed up until now.

[ ACHIRES ]

Helicopters? What do you need helicopters for? If you give a fixed-wing aircraft big enough ailerons, it will helicopter itself for you:

Kuka, take note: if you’re going to make a ping pong robot, for real, it’s going to look something like this:

If you somehow manage to beat this robot, it will climb over the table and laser you in the eyeball. I assume.

Via [ Monotech ]

Apparently there is an annual ladder-climbing competition for robots. Who knew!

Jimmy, the 2013 champion in the FIRA ladder-climbing event held in Malaysia, demonstrates his new, dynamic ladder-climbing technique.

In 2013 the ladder had fixed, evenly-spaced rungs. For the 2014 competition the rungs will be randomly-spaced 10 to 20cm apart. This means we can no longer rely entirely on static motions as we did last year. Instead Jimmy uses his on-board camera to calculate the distance to the next rung while climbing, dynamically calculating where to place his hands and feet.
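The team doesn't say how Jimmy turns camera images into a distance, so take this as one plausible sketch rather than their method: if you know how wide a rung really is, the pinhole camera model gives you range from how wide it looks in the image. The focal length, rung width, and function name below are assumptions for illustration only.

```python
def distance_from_apparent_width(focal_length_px, real_width_m, apparent_width_px):
    """Estimate range to an object of known width using the pinhole camera model.

    distance = focal_length * real_width / apparent_width
    All parameter values used with this function here are hypothetical.
    """
    if apparent_width_px <= 0:
        raise ValueError("apparent width must be positive")
    return focal_length_px * real_width_m / apparent_width_px

if __name__ == "__main__":
    # Assumed numbers: 600 px focal length, 0.30 m wide rung seen as 90 px wide.
    d = distance_from_apparent_width(600.0, 0.30, 90.0)
    print(f"estimated distance to rung: {d:.2f} m")
```

With randomly spaced rungs, an estimate like this would have to be recomputed for every rung, which fits the description of Jimmy working out hand and foot placement as he climbs.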

[ Autonomous Agents Laboratory ]

SenseFly, the Swiss company responsible for the fantastic eBee drone, is working on something new and exciting. Not much in the way of details yet, but we’re asking around, and should have something for you soon, if we can swing it.

[ eXom ]

When I was an undergrad, I didn’t get to play with expensive robots like these. Granted, I was studying astrogeology, not robotics, but still…

Overhead projectors, tracking cameras, and physical robots combine to turn your living room into an active gaming environment:

[ RomoCart ]

After so many drone hoaxes, we don’t know what is real and what is fake and what is an art project anymore.

Artemis is a personal security system that utilizes smart jewelry. If a user triggers the necklace, a request is sent over Bluetooth and the Internet to a security service which calls for police, fire or medical assistance. We often get requests for emergency help that is faster than typical police response times. Enter the *Personal Security Drone*. In this hack we have re-routed the request call and the user's GPS coordinates to automatically initiate the launch of a quadcopter to the user's current location where it begins filming what is occurring. The Personal Security Drone offers a user-controlled, non-violent, preventative security system for urban residents.

[ Artemis ]

And now for something completely different to finish the week: a robot starring in a production of Kafka's The Metamorphosis.

Robot brought to you by Hiroshi Ishiguro, which makes perfect sense.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.