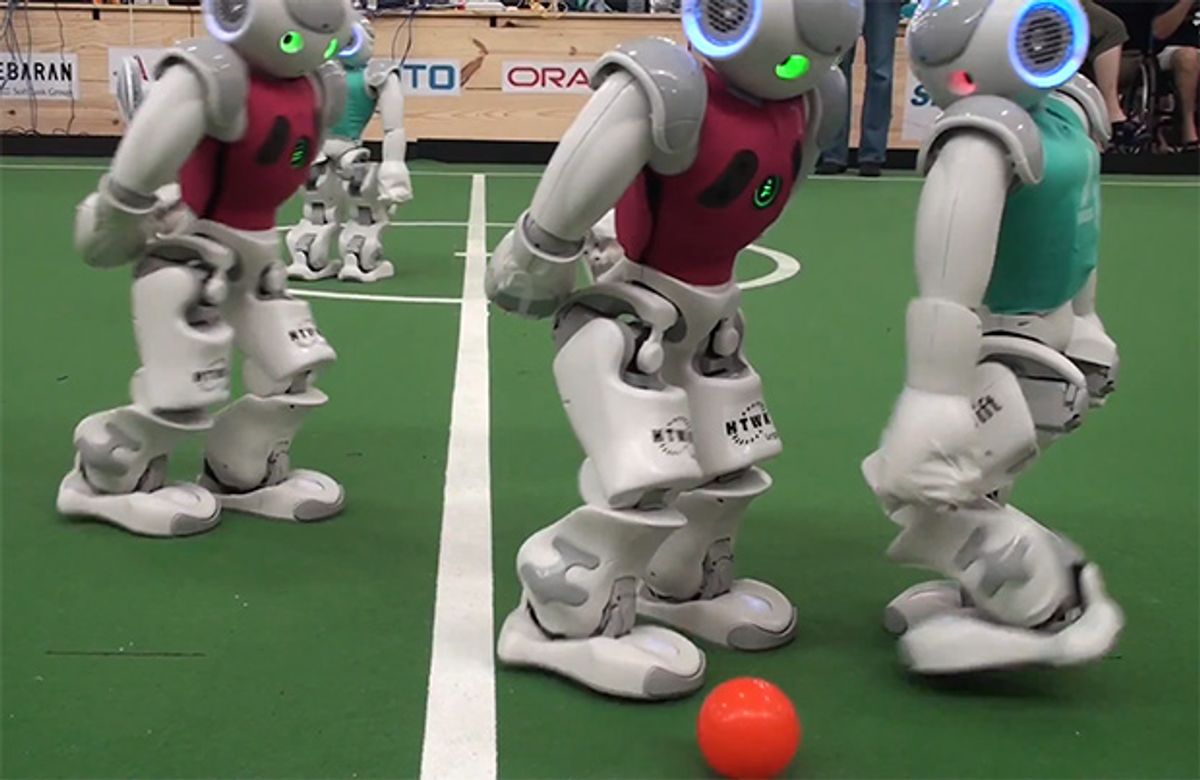

The World Cup may be over, but anyone who stuck around in Brazil for an extra week or two got to experience a robotic version of the same thing. RoboCup isn't quite yet at the level that it's aiming for, which is to (by the middle of this century) field "a team of fully autonomous humanoid robot soccer players [that] shall win a soccer game, complying with the official rules of FIFA, against the winner of the most recent World Cup." As you'll see, these robots have a long way to go, as there's a lot of falling down but zero moaning about it. Someone needs to program NAO to reflexively clutch its ankle.

All that, and more, await you in this week's Video Friday.

As of about 10 seconds ago, Cynthia Breazeal's new social robot for the home, Jibo, was up to $1.155450 million in funding on Kickstarter. That's a lot of robots.

[ Jibo ]

This is one of the most boring robot videos we've had in weeks, and for that, I apologize. But the robot itself is cool: it drills through ice by sucking in cold water, heating it up, and then shooting it out its nose to melt the ice in front of it, slowly creating a channel through which it can descend. Power permitting, it can make it through as much ice as you have patience for, and a design like this may one day be used to explore icy moons like Europa.

[ New Scientist ]

During Rim of the Pacific 2014, the same military exercise that the Boston Dynamics LS3 quadruped was a part of, the U.S. Marines also got to test an autonomous ground vehicle, the Ground Unmanned Support Surrogate (GUSS):

Via [ Gizmodo ]

How many robot arms do you need? N. You need N robot arms, where N is a very, very large number. To control them all, Ben Cohen (who does awesome work) and colleagues from Carnegie Mellon have constructed a planner that can move an object from a start pose to a goal pose using N arms, featuring handoffs between arms:

The work was presented at RSS 2014, and the entire paper is available here.

[ RSS 2014 ]

With N arms, think about how many beers you could pour. For Baxter, N = 2, and that significantly restricts its beer pouring speed:

[ Rethink Robotics ]

Some of the absolute cutest little arms belong to PR-MINI, which almost certainly was not an ultra-secret shrink ray'd PR2 that Willow Garage sold to the U.S. Special Forces for covert operations behind enemy lines:

You've been warned.

[ PR-MINI ]

The goal of this research is to develop an aerial water sampling system that can be quickly and safely deployed to reach varying and hard to access locations, that integrates with existing static sensors, and that is adaptable to a wide range of scientific goals. The capability to obtain quick samples over large areas will lead to a dramatic increase in the understanding of critical natural resources. This research will enable better interactions between non-expert operators and robots by using semi-autonomous systems to detect faults and unknown situations to ensure safety of the operators and environment.

Cool! Or at least, cool when your drone doesn't immediately crash:

Heh. Thanks to NIMBUS Lab for posting their failures as well as their successes.

[ NIMBUS Lab ]

We had some RoboBoat 2014 recaps for you last Friday, and here's a recap of those recaps (focusing on the finals) in case you missed them. Or in case you're really bored right now.

[ RoboBoat ]

Lighting is one of the hardest things to get right in photography, and part of the reason for this is that as a photographer, unless you're in a studio with a bunch of expensive gear, it's frequently impossible to position lights the way you'd like while also being able to take the picture from the angle that you want. How do we solve this? WITH ROBOTS:

We present a new approach to lighting dynamic subjects where an aerial robot equipped with a portable light source lights the subject to automatically achieve a desired lighting effect. We focus on rim lighting, a particularly challenging effect to achieve with dynamic subjects, and allow the photographer to specify a required rim width. Our algorithm processes the images from the photographer's camera and provides necessary motion commands to the aerial robot to achieve the desired rim width in the resulting photographs. We demonstrate a control approach that localizes the aerial robot with reference to the subject and tracks the subject to achieve the necessary motion. Our proof-of-concept results demonstrate the utility of robots in computational lighting.

[ MIT ]

Let's end this week with a whooooole bunch of videos from RoboCup 2014. Most of these are from Tech United Eindhoven, not because we're fans of them (although we are), but because they do an awesome job of filming their competitions and putting them on YouTube. High five, guys! Woo!

Tech United Eindhoven Highlights Day 1:

Tech United Eindhoven Highlights Day 2:

Tech United Eindhoven Highlights Day 3:

Tech United Eindhoven Semifinal Highlights vs. Cambada:

Final: Tech United vs. Water:

Standard Platform Final: rUNSWift vs. HTWK:

Tech United Eindhoven RoboCup@Home Cocktail Challenge:

AMIGO responding to QR code commands:

What's the QR code for "never, ever, ever, ever, ever do the Macarena again"?

And finally, a taste of the future: robots refereeing robots, from Team B-Human:

In all RoboCup soccer leagues that use real robots, refereeing is still performed by humans. However, some of the situations that referees have to decide upon are at least partially already detected by the players themselves. For instance, B-Human's robots estimate where the ball has to reenter the field after it was kicked out, and they also know when the ball is free after a kick-off. Therefore it makes sense to think about which situations could be detected by robots and to start implementing robot referees.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.