Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next few months; here’s what we have so far (send us your events!):

Robotic Arena – January 12, 2019 – Wrocław, Poland

RoboDEX – January 16-18, 2019 – Tokyo, Japan

Let us know if you have suggestions for next week, and enjoy today’s videos.

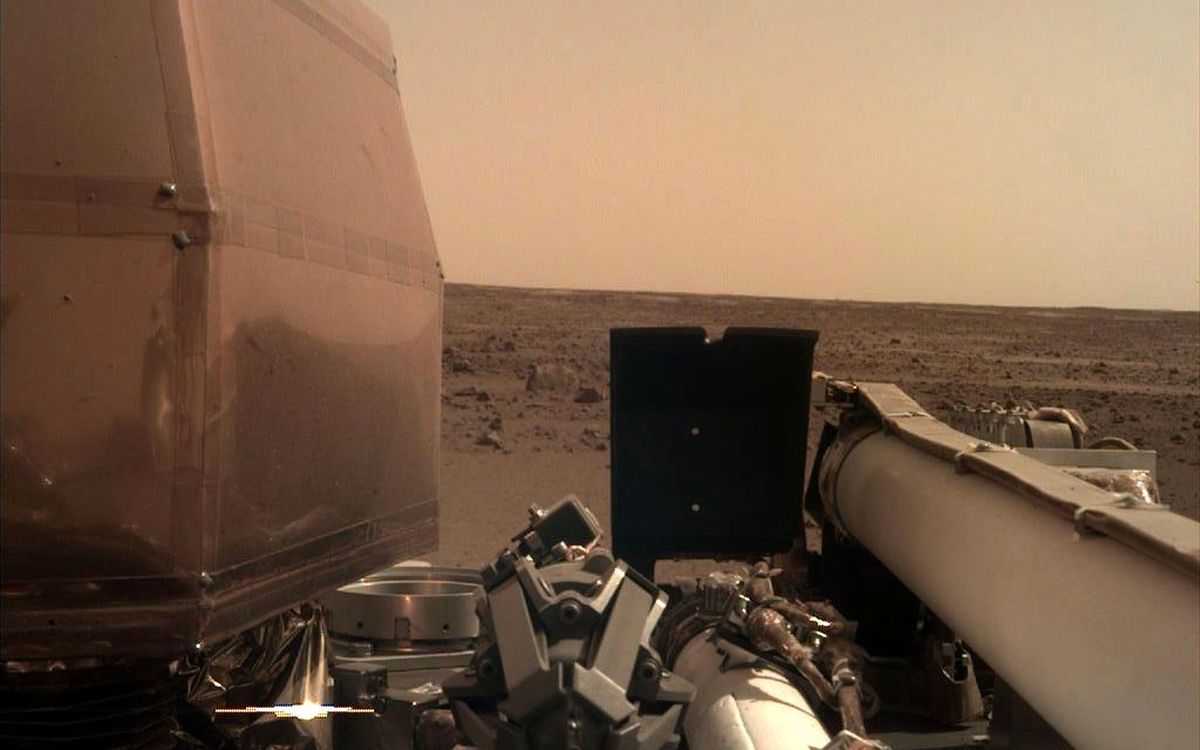

In case you missed it, NASA JPL landed a robot on Mars. They LANDED A ROBOT ON MARS. ON MARS! AGAIN!

[ InSight ]

What Thanksgiving is all about: robot arms and flamethrowers.

Thanks for keeping it real, HMI.

[ HMI ]

I’m sure you were all anxiously wondering why the blog has been a little bit quiet for the last month, and the short answer is that we were in Africa to check out drone stuff, including at Lake Victoria.

Africa is farther ahead on drones than just about anywhere else in the world, and we’ll have lots more for you early next year.

[ LVC ]

We wrote about Roam Robotics’ skiing exoskeleton earlier this year, but you can now sign up to try one out for yourself, either around Tahoe next month or in Park City early next year.

This is quite possibly the first useful exoskeleton that normal people like us will be able to take advantage of.

[ Roam Robotics ]

This impressively edited footage from a 360 camera mounted on top of a Team Blacksheep drone is some wild, wild stuff.

[ Team Blacksheep ]

This is SoftBank’s newest robot. It cleans floors.

[ Robotstart ]

Please consider muting the following video.

As an experienced bagpipe player (seriously), I’m the first to admit that an improperly tuned bagpipe is quite possibly the third worst sound that anyone has ever heard. McBlare needs a tune-up.

And hopefully it gets one before it puts on a performance at Subsurface, a sort of concert festival thing that CMU is putting on deep in a limestone mine, where the acoustics are good and you can’t escape.

[ CMU Subsurface ]

This looks to be a new humanoid robot from JSK Lab called Musashi, a successor to Kengoro. It’s driving what appears to be an unmodified car, which is impressive, and it manages to detect and not hit a human, which is even more impressive.

[ JSK Lab ]

The following is just a rendering, and maybe it should stay that way.

This is causing serious problems for those of us who thought we knew where SpotMini’s face was.

[ Youbionic ] via [ TechCrunch ]

WeRobotics, Tanzania Flying Labs and Agrinfo have been gathering field data in rural Tanzania for the NASA Harvest Consortium’s “Pre-Harvest loss for smallholder farmers” initiative in collaboration with IFPRI and University of Maryland. Data acquisition included 2 rounds of multispectral drone data and 3 rounds of ground truthing data, including data on farm boundaries, crop variety and harvest volumes.

[ WeRobotics ]

Did you know that Fetch has a built-in disco script?

It’s for arm testing and calibration, but Fetch sure does seem like it’s enjoying itself, right?

[ Trenton Schulz ]

Intuition Robotics’ ElliQ has been spending some time beta testing in seniors’ homes in California.

[ ElliQ ]

You might not care about any of the maps that Cassie Blue is making, but two things interest me about this video. The first is that Cassie Blue seems to have some sort of torso now, and the second is that when she blows a capacitor, it’s remarkably similar to a human pulling a muscle, at least visually.

[ Biped Lab ]

Are you lost and alone? Never fear, ANYmal is here! Or will be soon, at least.

ANYmal, you can rescue me anytime.

[ ANYbotics ]

Thanks, Marko!

If you come to our lab, this is what you can see! We pulled a camera out when we welcomed visitors to the lab recently, to create this video for all of you. The video features live demonstrations of our lab as of September 2018.

Researchers have created a new autonomous system, called Autotrans, for transporting objects in unstructured outdoor settings—including anything from harvesting fruit in an orchard to collecting trash from city streets. The system includes both hardware and software components: put them all together, and you’ve got a room-cleaning, trash-collecting, fruit-carrying robot!

I think PLEN Mini might be my spirit robot.

[ PLEN ]

In mid-2020, a NASA spacecraft will poke an asteroid with its robot arm, and that arm just stretched out for the first time in deep space.

[ OSIRIS-REx ] via [ Engadget ]

Acutronic Robotics presents MARA, the first modular cobot with the Robot Operating System (ROS 2.0) running natively in each actuator, sensor, or other component. This works through the H-ROS SoM, the hardware device that makes any robot part compatible and interoperable with others, regardless of its vendor. MARA delivers industrial-grade features such as real-time synchronization and deterministic communication latencies, and will be open for pre-order on December 10, 2018.

[ Acutronic ]

We developed a dynamic human-robot interactive system consisting of a high-speed vision system and a high-speed robot hand. The vision system measures the position and orientation of a board that is manipulated by a human and the robot, and the robot hand then reacts based on that board information. The system can respond to random human motion at high speed and with low latency.

Traditional motion capture systems rely on fixed infrastructure. Why not use drones instead?

[ Paper ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.