Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next few months; here’s what we have so far (send us your events!):

International Symposium on Medical Robotics – March 1-3, 2018 – Atlanta, Ga., USA

HRI 2018 – March 5-8, 2018 – Chicago, Ill., USA

US National Robotics Week – April 7-17, 2018 – United States

Xconomy Robo Madness – April 12, 2018 – Bedford, Mass., USA

NASA Swarmathon – April 17-19, 2018 – Kennedy Space Center, Fla., USA

RoboSoft 2018 – April 24-28, 2018 – Livorno, Italy

ICARSC 2018 – April 25-27, 2018 – Torres Vedras, Portugal

NASA Robotic Mining Competition – May 14-18, 2018 – Kennedy Space Center, Fla., USA

ICRA 2018 – May 21-25, 2018 – Brisbane, Australia

Let us know if you have suggestions for next week, and enjoy today’s videos.

An extra special thank-you to Boston Dynamics this week for posting another video of SpotMini that includes a nice, detailed explanation of what’s actually going on:

A test of SpotMini’s ability to adjust to disturbances as it opens and walks through a door. A person (not shown) drives the robot up to the door, points the hand at the door handle, then gives the ’GO’ command, both at the beginning of the video and again at 42 seconds. The robot proceeds autonomously from these points on, without help from a person. A camera in the hand finds the door handle, cameras on the body determine if the door is open or closed and navigate through the doorway. Software provides locomotion, balance and adjusts behavior when progress gets off track. The ability to tolerate and respond automatically to disturbances like these improves successful operation of the robot. (Note: This testing does not irritate or harm the robot.)

[ Boston Dynamics ]

Are you a cat person and a robot person? Good news, meet OpenCat!

You may have seen Boston Dynamics Dogs and the recently released Sony Aibo. They are super cool but are too expensive to enjoy. I hope to provide some affordable alternatives that have most of their motion capabilities. I’m not saying I can reproduce the precise motions of those robotics giants. I’m just breaking down the barrier from million dollars to hundreds. I don’t expect to send it to battlefield or other challenging realities. I just want to fit this naughty buddy in a clean, smart, yet too quiet house.

[ OpenCat ] via [ Rongzhong Li ]

Thanks Rongzhong!

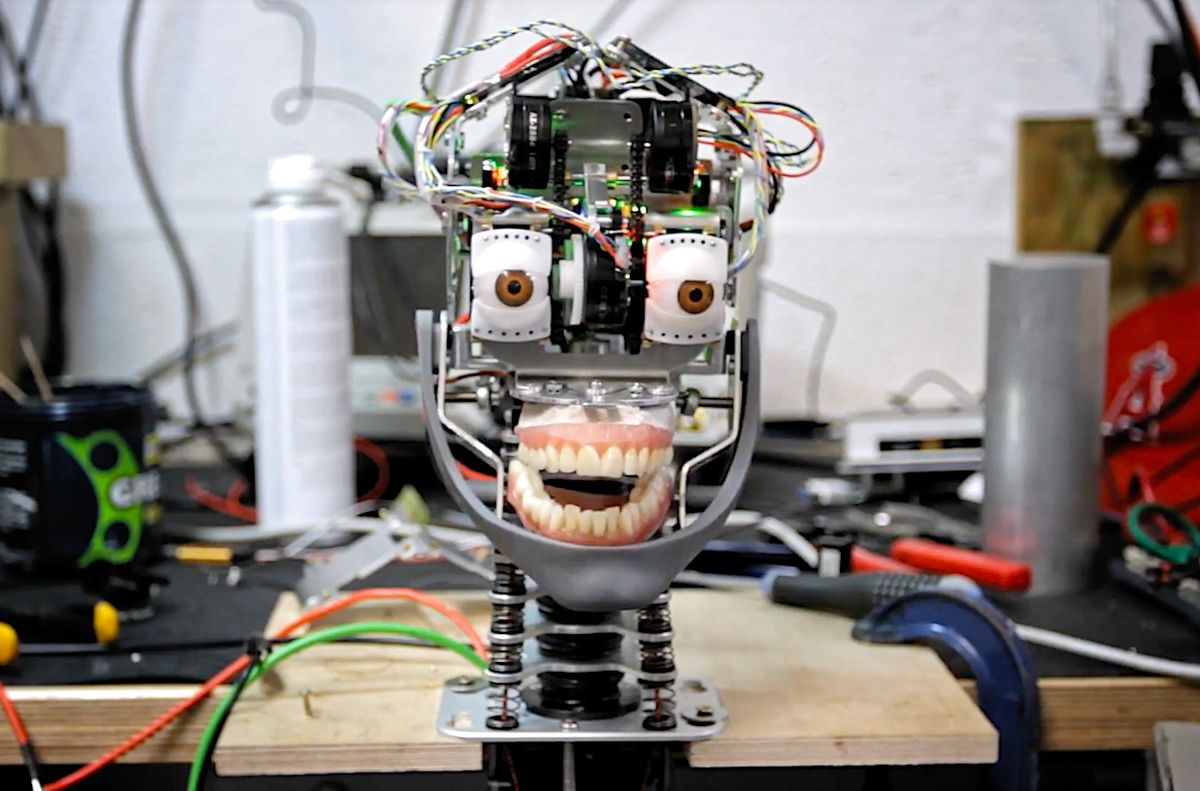

There’s still some Uncanny Valley going on here, but these new Mesmer robots from Engineered Arts are impressive:

We have designed our mechanics to closely mimic human anatomy. A neck with vertebrae that curl and twist. These beauties are more than skin deep. Character animation requires smooth quiet motion. So we developed our own powerful, silent, high torque motors to meet the challenge. With state of the art integrated controllers, every motor has full control of speed, acceleration, torque and position. Motion without compromise.

[ Engineered Arts ]

A 1,000-frames-per-second vision and actuation system helps this robot track and grab tiny ball bearings on moving surfaces:

It is challenging to realize accurate pick-and-place of tiny bearing balls under uncertainties. The uncertainties may be attributed to environmental disturbances as well as to positioning errors of a typical industrial robot. We propose to realize the task by a dynamic compensation robot. It consists of a commercial industrial robot and an add-on module with 2DOF compensation actuators. The former is for fast and coarse global motion realized either by coarse teaching-playback programming or by motion planning with the use of computer vision. The latter is to conduct real-time compensation in a local manner under high-speed visual feedback. In the demonstrations, random disturbances are exerted on the working stage. Along with the main robot conducting coarse global motion, fine positioning is realized by the compensation module under 1000 fps visual feedback.
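For the curious, here is a toy one-axis sketch of the coarse-plus-fine idea described above. It is not the researchers' controller: the fixed arm offset, the sinusoidal stage disturbance, the simple integrating update law, the gain, and the actuator stroke are all assumptions made up for illustration. Only the 1,000 Hz feedback rate comes from the description.

```python
import math


def simulate(duration_s=1.0, rate_hz=1000, gain=0.5, stroke_mm=3.0):
    """Compare tracking error with and without a fine compensation stage.

    All numbers are hypothetical; only the 1000 Hz rate is from the article.
    """
    dt = 1.0 / rate_hz
    steps = int(duration_s * rate_hz)
    coarse_offset_mm = 0.8  # assumed fixed positioning error of the arm
    comp_mm = 0.0           # current offset of the add-on compensation stage
    sq_coarse = 0.0
    sq_fine = 0.0
    for k in range(steps):
        t = k * dt
        # Assumed disturbance: the stage wobbles at 2 Hz with 0.5 mm amplitude.
        target_mm = coarse_offset_mm + 0.5 * math.sin(2.0 * math.pi * 2.0 * t)
        err_coarse = target_mm           # error if only the arm is used
        err_fine = target_mm - comp_mm   # error seen by the high-speed camera
        sq_coarse += err_coarse ** 2
        sq_fine += err_fine ** 2
        # Each frame, move the fine stage a fraction of the measured error,
        # clamped to its limited stroke.
        comp_mm = max(-stroke_mm, min(stroke_mm, comp_mm + gain * err_fine))
    return math.sqrt(sq_coarse / steps), math.sqrt(sq_fine / steps)


coarse_rms, fine_rms = simulate()
print(f"RMS error, arm only: {coarse_rms:.3f} mm")
print(f"RMS error, with compensation: {fine_rms:.3f} mm")
```

Under these assumptions, the fine stage only ever has to correct the small error left over after the arm's coarse move, which is why a short-stroke actuator updated every millisecond can keep up with a disturbance the arm alone cannot.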

I can never get enough of mid-size RoboCup soccer robots showing off their skills:

[ Tech United ]

Kuri visited BuzzFeed, and it’s exactly as BuzzFeed-y as you think it is.

Well, I just learned that I’m definitely not hip enough to be working at BuzzFeed. And I’m okay with that.

[ BuzzFeed Video ]

There aren’t many off-the-shelf bipedal robots out there that you can buy, but PAL Robotics’ Talos is one of them:

TALOS is a high performance humanoid robot standing at 1.75m. PAL Robotics’ humanoid can lift up to 6 kg with one stretched arm, is fully electrical and 100% ROS based.

[ PAL Robotics ]

Thanks Judith!

Genesis Robotics is a Canadian startup developing a new kind of direct-drive high-torque robot actuator called LiveDrive. They’ve been demoing the technology for a while now and it seems pretty interesting (we plan to have an in-depth story about it some time in the next few weeks). They’ve also been spending some of their budget on fancy, highly produced concept videos showing how LiveDrive might be used in the future:

Thanks Cale!

[ Genesis Robotics ]

Meanwhile, students at the University of Koblenz-Landau are spending their time wisely by teaching a PAL Robotics Tiago to fetch them beer:

This is our entry for the NVIDIA Jetson Challenge. Tiago is bringing a beer from the fridge. This video contains complex object segmentation and manipulation tasks. We have developed a convolutional neural network for object segmentation and demonstrate it using an integrated scenario. Computation for path planning and object segmentation is done on a Jetson TX2.

[ NVIDIA Jetson Challenge ] via [ University of Koblenz ]

Oh, so that’s where Reese’s Peanut Butter Cups come from.

[ Reese’s ] via [ Mike Senese ]

Unlike real figure skating, I can handle Cozmo figure skating, because as a tracked robot it’s somewhat less likely to fall over:

I think most of those moves are, by definition, double axels.

Cozmo also tried skeleton bobsled, with the kind of result that you’d probably expect:

[ YouTube ]

Thanks Dominic!

This paper is from last year, but these tiny autonomous drones avoiding obstacles is still impressive work:

Micro Aerial Vehicles (MAVs) are very suitable for flying in indoor environments, but autonomous navigation is challenging due to their strict hardware limitations. This paper presents a highly efficient computer vision algorithm called Edge-FS for the determination of velocity and depth. It runs at 20 Hz on a 4 g stereo camera with an embedded STM32F4 microprocessor (168 MHz, 192 kB) and uses edge distributions to calculate optical flow and stereo disparity. The stereo-based distance estimates are used to scale the optical flow in order to retrieve the drone’s velocity. The velocity and depth measurements are used for fully autonomous flight of a 40 g pocket drone only relying on on-board sensors. This method allows the MAV to control its velocity and avoid obstacles.

[ TU Delft MAVLab ]
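The "scale the optical flow with stereo distance" step in the pocket drone description is easy to picture with a little arithmetic. The sketch below is not the Edge-FS algorithm itself; it only shows the textbook pinhole-camera relations that such a scaling step could rest on, assuming motion parallel to the image plane. The focal length, baseline, disparity, and flow values are hypothetical numbers picked for illustration.

```python
def stereo_depth_m(focal_px, baseline_m, disparity_px):
    """Pinhole stereo relation: depth Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def velocity_from_flow_mps(flow_px_per_s, depth_m, focal_px):
    """Scale image-plane flow to metric lateral velocity: v = Z * flow / f."""
    return depth_m * flow_px_per_s / focal_px


# Hypothetical camera and measurements (not taken from the paper).
focal_px = 150.0       # focal length in pixels
baseline_m = 0.06      # distance between the two cameras
disparity_px = 6.0     # stereo disparity of the scene in front of the drone
flow_px_per_s = 30.0   # measured optical flow

depth = stereo_depth_m(focal_px, baseline_m, disparity_px)
velocity = velocity_from_flow_mps(flow_px_per_s, depth, focal_px)
print(f"depth = {depth:.2f} m, velocity = {velocity:.2f} m/s")
# prints "depth = 1.50 m, velocity = 0.30 m/s"
```

Optical flow alone only gives motion in pixels per second, so the same flow could mean a slow drone near a wall or a fast one far from it. The stereo distance is what resolves that ambiguity.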

Unpacking groceries is a straightforward albeit tedious task: You reach into a bag, feel around for an item, and pull it out. A quick glance will tell you what the item is and where it should be stored. Now engineers from MIT and Princeton University have developed a robotic system that may one day lend a hand with this household chore, as well as assist in other picking and sorting tasks, from organizing products in a warehouse to clearing debris from a disaster zone.

The team’s “pick-and-place” system consists of a standard industrial robotic arm that the researchers outfitted with a custom gripper and suction cup. They developed an “object-agnostic” grasping algorithm that enables the robot to assess a bin of random objects and determine the best way to grip or suction onto an item amid the clutter, without having to know anything about the object before picking it up.

In general, the robot follows a “grasp-first-then-recognize” workflow, which turns out to be an effective sequence compared to other pick-and-place technologies.

[ MIT News ]

Assa Abloy Romania assembles locks, which are then sent to other Assa Abloy factories worldwide, where they’re transformed into finished products. The factory suffered from a huge skills gap in Bucharest, the country’s capital city, where the unemployment rate is low. Difficulty attracting new workers to manufacturing forced an automation revolution.

In-house robotics expertise was built around a group of engineers and technicians in charge of automating processes with new technologies. The team chose the Robotiq 2-Finger Adaptive Gripper and Wrist Camera to pair with a Universal Robots UR5 for a machine tending process. Two parts are positioned by the robot on a fixture and then welded by the machine.

[ Robotiq ]

Thanks David!

NREC’s relationship with Carnegie Mellon University allows the continuous development of ground-breaking technologies. Mostly, those technologies are robots.

Also, if you go to NREC and want the Wi-Fi password, it’s 5CFCFB6G.

[ NREC ]

In a needlessly long 14-minute video, ZMP’s Carrio delivery robots travel along 1.2 km of sidewalks in Japan.

[ Carrio ]

As a research scientist at Google, Margaret Mitchell helps develop computers that can communicate about what they see and understand. She tells a cautionary tale about the gaps, blind spots and biases we subconsciously encode into AI -- and asks us to consider what the technology we create today will mean for tomorrow. "All that we see now is a snapshot in the evolution of artificial intelligence," Mitchell says. "If we want AI to evolve in a way that helps humans, then we need to define the goals and strategies that enable that path now."

[ TED ]

In this week’s episode of Robots in Depth, Per interviews Jana Tumova from KTH.

Jana Tumova talks about formal verification of computer systems and synthesizing controllers from models. We get an introduction to the relatively new, especially when applied to robotics, field of formal verification. Jana talks about the requirements and limits of formal verification and how she feels we are ready to start merging the computer science process with regulatory and business processes. Jana also describes how she worked on an autonomous golf cart in Singapore where the controller was synthesized.

[ Robots in Depth ]

Last week’s CMU RI Seminar comes from Oregon State’s Geoff Hollinger, on “Marine Robotics: Planning, Decision Making, and Learning.”

Underwater gliders, propeller-driven submersibles, and other marine robots are increasingly being tasked with gathering information (e.g., in environmental monitoring, offshore inspection, and coastal surveillance scenarios). However, in most of these scenarios, human operators must carefully plan the mission to ensure completion of the task. Strict human oversight not only makes such deployments expensive and time consuming but also makes some tasks impossible due to the requirement for heavy cognitive loads or reliable communication between the operator and the vehicle. We can mitigate these limitations by making the robotic information gatherers semi-autonomous, where the human provides high-level input to the system and the vehicle fills in the details on how to execute the plan. These capabilities increase the tolerance for operator neglect, reduce deployment cost, and open up new domains for information gathering. In this talk, I will show how a general framework that unifies information theoretic optimization and physical motion planning makes semi-autonomous information gathering feasible in marine environments. I will leverage techniques from stochastic motion planning, adaptive decision making, and deep learning to provide scalable solutions in a diverse set of applications such as underwater inspection, ocean search, and ecological monitoring. The techniques discussed here make it possible for autonomous marine robots to “go where no one has gone before,” allowing for information gathering in environments previously outside the reach of human divers.

[ CMU RI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.