I’m still waiting for an Atlas robot to arrive on my doorstep from Boston Dynamics (since I’m a famous and occasionally handsome Internet journalist, I’m assuming they’re sending me one for free), but some other robotics groups have gotten theirs first. This hopefully means that we’re about to see a huge number of videos show up on YouTube featuring Atlases doing all of the stuff that Boston Dynamics doesn’t want you to see. Like those butt scoots from the simulation challenge. Check out some of the very first videos of Atlas in the wild, and more (more!), because it’s Video Friday.

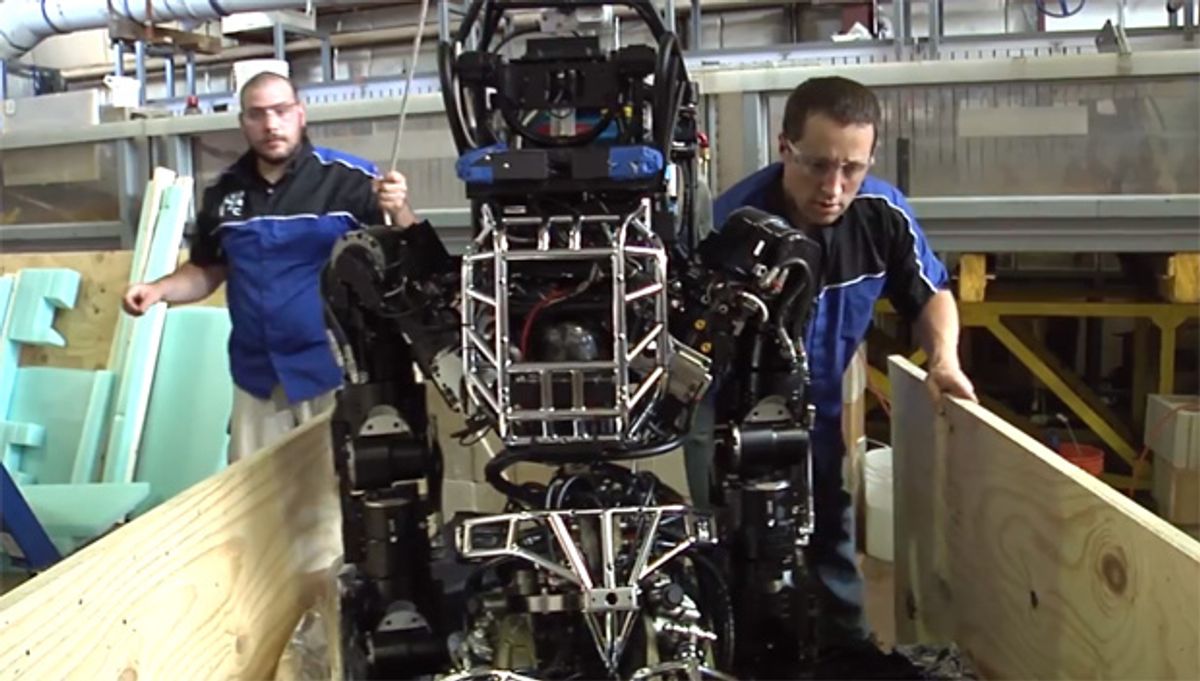

Atlas robots are starting to arrive! Here’s MIT’s:

Best. Present. Ever.

[ MIT CSAIL ]

Meanwhile, Hong Kong University already has its Atlas playing rock, paper, scissors using a Leap Motion controller and a Sandia hand:

[ HKU ]

iRobot has finally finished swallowing Evolution Robotics, and the Mint (which we’re big fans of) has been rebranded as the iRobot Braava.

We’ve confirmed with iRobot that the Braava is mechanically and functionally the same as the Mint, so if you already have a Mint, this re-release doesn’t make your existing robot any less Minty. And now that Evolution is fully integrated, we’re looking forward to some new implementations of the localization technology that makes Mint (and Braava) so smart.

[ iRobot Braava ]

The following video is a dramatization:

The following video is not a dramatization and is what you should use if you want to humiliate some humans at things like this:

[ R2B2 ]

[ Adept Quattro ]

Every robot needs googly eyes. Every single one. This video of RHex frolicking illustrates why. It also illustrates a bunch of other stuff, but GOOGLY EYES.

Every week we take the robots out for a hike, both because it is fun and to make sure the robot and operator are working well. This week we made a fun video that shows off some of RHex’s potential to interact with the world in interesting ways. The story in this video stems from some cool research happening in our lab, where students are using a panning camera mounted on the robot to track objects and landmarks as a method of controlling the motion of the robot. This type of control could lead to the behaviors seen in the above video. Currently the motions of the robot are controlled by a person using a joystick, but imagine how fantastic it would be to have a robot able to track and follow a ball autonomously! The ability to track objects, combined with RHex’s agility over rough terrain, has many applications in search and rescue scenarios, exploration of dangerous environments, and assisting humans in a multitude of tasks.

[ Kod*lab ]

Thanks Aaron!

Not falling off a ball is probably just about exactly as hard as it looks for Thymio II:

Thymio’s little pointy hat is actually a weight that helps stabilize the robot and increases friction between the ball and the wheels. It’s also super cute.

[ Thymio II ]

Here’s five minutes of RoboCup 2013 highlights, featuring UT Austin’s Standard Platform team:

[ Austin Villa ]

In this week’s Rover Report, Curiosity is making tracks towards Mount Sharp while getting mooned.

[ MSL ]

Northrop Grumman has a sort of sizzle reel of the X-47B carrier landing trials. There’s some new footage, but more importantly, it’s MUCH MORE ROCKIN’.

Seriously. That thing is like some sort of CGI space fighter, man. The future is here.

[ X-47B ]

And because there’s no better way to end a week than with a real live killer robot, this is a Terminator T-1. Zero percent CGI, 100 percent awesome, from the Stan Winston School.

Via [ Gizmodo ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.