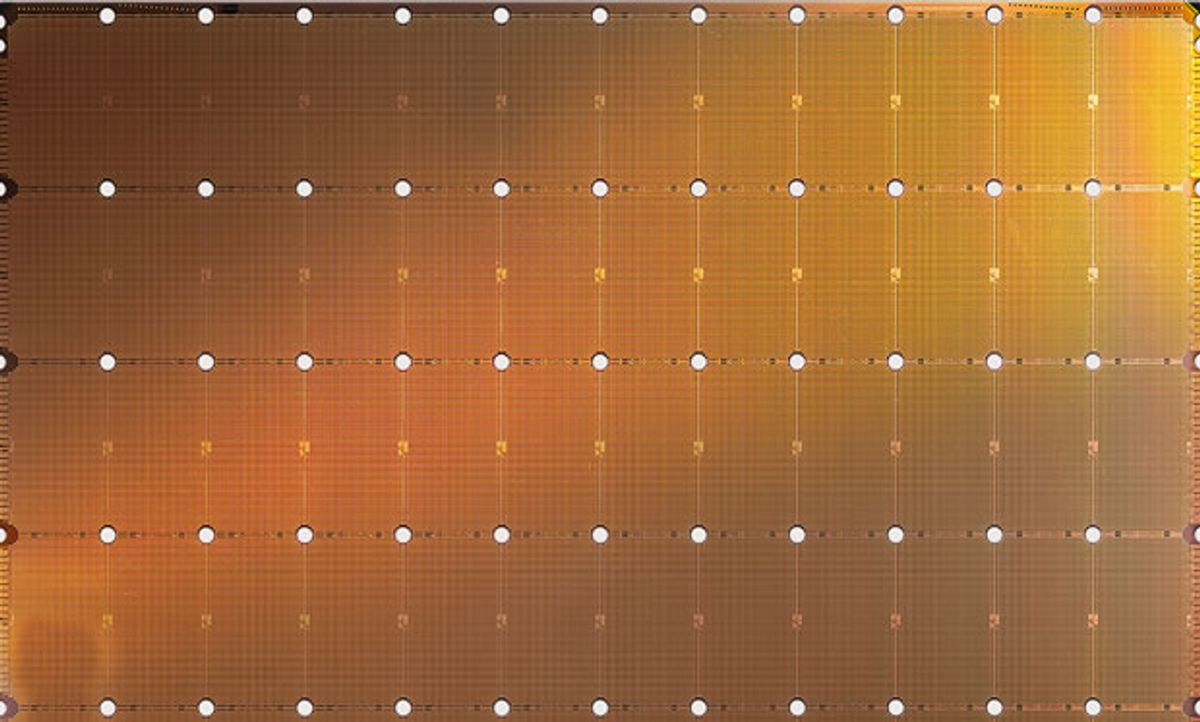

Argonne National Laboratory and Lawrence Livermore National Laboratory will be among the first organizations to install AI computers made from the largest silicon chip ever built. Last month, Cerebras Systems unveiled a 46,225-square-millimeter chip with 1.2 trillion transistors designed to speed the training of neural networks. Today, such training is often done in large data centers using GPU-based servers. Cerebras plans to begin selling computers based on the notebook-size chip in the fourth quarter of this year.

“The opportunity to incorporate the largest and fastest AI chip ever—the Cerebras WSE—into our advanced computing infrastructure will enable us to dramatically accelerate our deep learning research in science, engineering, and health,” Rick Stevens, head of computing at Argonne National Laboratory, said in a press release. “It will allow us to invent and test more algorithms, to more rapidly explore ideas, and to more quickly identify opportunities for scientific progress.”

Argonne and Lawrence Livermore are the first DOE entities to participate in what is expected to be a multi-year, multi-lab partnership. Cerebras plans to expand to other laboratories in the coming months.

Cerebras computers will be integrated into existing supercomputers at the two DOE labs to act as AI accelerators for those machines. In 2021, Argonne plans to become home to the United States’ first exascale computer, named Aurora; it will be capable of more than 1 billion billion calculations per second. Intel and Cray are the leaders on that $500-million project. The national laboratory is already home to Mira, the 24th-most powerful supercomputer in the world, and Theta, the 28th-most powerful. Lawrence Livermore is on track to achieve exascale as well with El Capitan, a $600-million, 1.5-exaflop machine set to go live in late 2022. The lab is also home to the number-two-ranked Sierra supercomputer and the number-10-ranked Lassen.

The U.S. Energy Department established the Artificial Intelligence and Technology Office earlier this month to better take advantage of AI for solving the kinds of problems the U.S. national laboratories tackle.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.