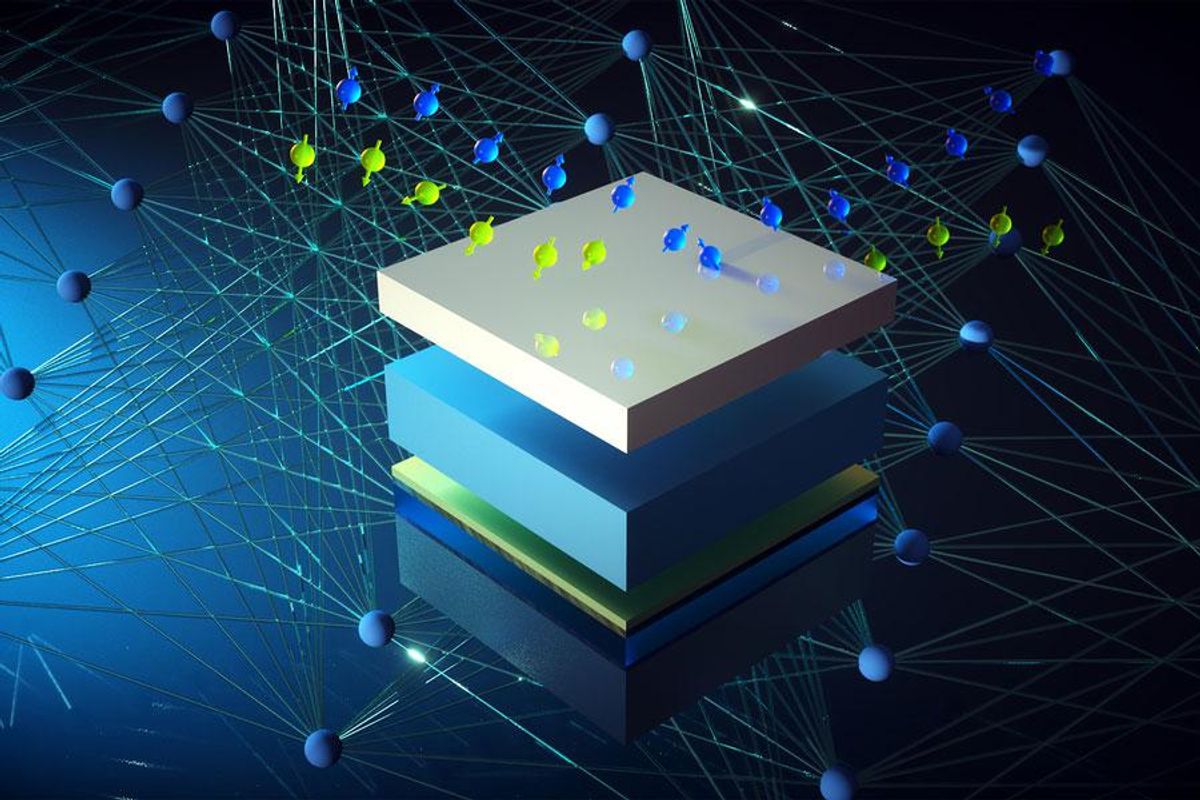

A new AI algorithm promises to drastically cut the time needed to iterate designs of a promising class of materials called topological insulators.

The potential of topological insulators, which have the strange property of being insulators on the inside but conductors on the outside, has transfixed electronics researchers for the past decade. One area of interest has been achieving electronics without dissipation, or loss to heat. For years, the only materials that seemed to offer electronics without resistance were superconductors. However, superconductors lack robustness and are susceptible to the most minute of disturbances.

Topological insulators seemed to offer a reasonable alternative to the fragility of superconductors. However, developing and perfecting a topological insulator means first understanding how a material’s magnetic and nonmagnetic layers interact, including the magnetism induced in the nonmagnetic layer, a phenomenon called the “magnetic proximity effect.” To detect this phenomenon, researchers use a technique known as polarized neutron reflectometry (PNR) to analyze how magnetic structure varies as a function of depth in multilayered materials.

PNR, in other words, is a necessary part of developing topological insulators, but it has also been a substantial bottleneck in exploring and iterating on new candidate materials. Both PNR’s inherent complexity and the vast amounts of data it produces have been a challenge.

Now, however, researchers at MIT have developed an artificial intelligence algorithm that sorts through PNR data and significantly reduces the time needed to analyze it.

“It has reduced the analysis from days to minutes without exaggeration,” said Mingda Li, professor at MIT and the principal researcher in this work. “In traditional methods, people needed to spend time guessing at tens of parameters again and again. With this AI approach there is no need to guess—and it’s painless.”

PNR starts by aiming two polarized neutron beams with opposing spins at a sample. The beams reflect off the sample and are collected on a detector. If a neutron encounters magnetic flux, such as that found inside a magnetic material, its spin state changes, producing different signals from the spin-up and spin-down neutron beams. The magnetic proximity effect can therefore be detected if a thin layer of a normally nonmagnetic material, placed immediately adjacent to a magnetic material, is shown to become magnetized.
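To make the spin-up versus spin-down comparison concrete, here is a minimal Python sketch of the spin asymmetry, a quantity commonly used to compare the two reflected signals: the difference of the two reflectivities divided by their sum. The function name and the reflectivity values are hypothetical and chosen only for illustration; they are not data from the MIT work.

```python
def spin_asymmetry(r_up, r_down):
    """Return (R_up - R_down) / (R_up + R_down) for each measurement point."""
    if len(r_up) != len(r_down):
        raise ValueError("spin-up and spin-down curves must have the same length")
    result = []
    for up, down in zip(r_up, r_down):
        total = up + down
        # Guard against division by zero where no neutrons were detected.
        result.append((up - down) / total if total > 0 else 0.0)
    return result


# Hypothetical reflectivities at three values of momentum transfer.
r_up = [0.90, 0.40, 0.10]
r_down = [0.90, 0.35, 0.06]

asym = spin_asymmetry(r_up, r_down)
print(asym)
```

An asymmetry of zero means the two beams reflected identically at that point, while a nonzero value indicates that the sample's magnetism treated the two spin states differently.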

The PNR signal, as it’s first fed into the AI, is a complex signal that’s difficult to deconvolve. But in doubling the resolution of the signal, the AI is able, essentially, to amplify the proximity-effect component of the signal, thus making the data easier to interpret. In the group’s work, their algorithm could discern proximity-effect properties at length scales of 0.5 nanometer. (The typical spatial extent of the proximity effect, Li said, is on the order of one nanometer, so the AI is able to resolve to the size scales needed.)

The AI method outperforms traditional algorithms, Li said, because it transforms the PNR data into a hidden “latent space” (a simplified but still useful compressed representation of the data) that makes analysis much easier.

To leverage this ability to transform data into latent space, each piece of PNR data is first labeled according to the particular parameters most relevant to the researchers. The algorithm then looks for nuanced links between different data points and amplifies them, in contrast to the conventional method of treating each data point independently.

The MIT researchers built their algorithm using PyTorch, an open-source machine-learning framework.
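As a rough illustration of what mapping data into a latent space looks like in PyTorch, the sketch below trains a tiny autoencoder that compresses 64-point curves into 4 numbers and reconstructs them. Everything here is an assumption made for illustration: the class name, layer sizes, and random placeholder data are hypothetical, and this plain autoencoder is not the MIT group's architecture. In particular, it does not use the parameter labels described above.

```python
import torch
from torch import nn


class CurveAutoencoder(nn.Module):
    """Hypothetical toy model: compress a curve to a small latent vector."""

    def __init__(self, n_points=64, latent_dim=4):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(n_points, 32), nn.ReLU(), nn.Linear(32, latent_dim)
        )
        self.decoder = nn.Sequential(
            nn.Linear(latent_dim, 32), nn.ReLU(), nn.Linear(32, n_points)
        )

    def forward(self, x):
        z = self.encoder(x)          # latent representation
        return self.decoder(z), z    # reconstruction and latent vector


torch.manual_seed(0)
curves = torch.rand(8, 64)  # placeholder data, NOT real PNR measurements

model = CurveAutoencoder()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
loss_fn = nn.MSELoss()

# A few training steps: learn to reconstruct each curve from its latent vector.
for _ in range(5):
    optimizer.zero_grad()
    reconstruction, latent = model(curves)
    loss = loss_fn(reconstruction, curves)
    loss.backward()
    optimizer.step()

print(latent.shape)  # one 4-number latent vector per input curve
```

The idea is that downstream analysis works with the short latent vector rather than the full curve, which is what makes relationships between data points easier to find.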

“We are not an AI group designing things like convolutional neural networks, but the package is powerful enough to be adopted in existing research facilities, like the [U.S.] National Institute of Standards and Technology,” said Li.

In addition to locating the proximity effect in PNR data, Li said, the algorithm can also be used to find other nuanced spectral signals, such as those of the SARS-CoV-2 virus in lipid bilayers (which are also measured by PNR). He also envisions using the algorithm to find materials that can host qubits for quantum computing. “Those are direct applications without need to modify much of [the] codes,” Li added.

In fact, quantum computing, Li said, is the most immediate application for this AI beyond PNR data mining.

“There [have] been some recent controversies in identifying whether some material systems may host qubits,” Li said. “This work will improve the resolvability and help on that.”

Dexter Johnson is a contributing editor at IEEE Spectrum, with a focus on nanotechnology.