We love Microsoft's Kinect 3D sensor, and not just because you can play games with it. At a mere $150, it's a dirt-cheap way to bring depth sensing and 3D vision to robots, and while open-source USB drivers have already made that easy, a forthcoming Windows SDK from Microsoft promises to make it even easier.

Kinect, which is built on hardware from an Israeli company called PrimeSense, works by projecting an infrared laser pattern onto nearby objects. A dedicated IR sensor picks up the reflected pattern to determine distance for each pixel, and that information is then mapped onto an image from a standard RGB camera. What you end up with is an RGBD image, where each pixel has both a color and a distance, which you can then use to map out body positions, gestures, and motion, or even to generate 3D maps. Needless to say, this is an awesome capability to incorporate into a robot, and the cheap price makes it accessible to a huge audience.
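To make the RGBD idea a bit more concrete, here's a minimal Python sketch of how a pixel with a color and a distance can be turned into a colored 3D point using a standard pinhole camera model. This is not Kinect driver code: the intrinsics (`FX`, `FY`, `CX`, `CY`) are illustrative values we're assuming for a 640x480 image, and the function names are hypothetical. A real sensor would need its own calibration.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]
Color = Tuple[int, int, int]

# Assumed pinhole intrinsics for a 640x480 depth image (illustrative only,
# not taken from any official Kinect calibration).
FX = 525.0   # focal length in pixels, x direction
FY = 525.0   # focal length in pixels, y direction
CX = 319.5   # principal point, x
CY = 239.5   # principal point, y


def pixel_to_point(u: float, v: float, depth_m: float,
                   fx: float = FX, fy: float = FY,
                   cx: float = CX, cy: float = CY) -> Point:
    """Back-project pixel (u, v) with a depth in metres to camera-frame XYZ."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def rgbd_to_point_cloud(rgb: List[List[Color]],
                        depth: List[List[float]]) -> List[Tuple[Point, Color]]:
    """Pair every pixel that has a valid depth with its color.

    `rgb` and `depth` are row-major images of the same size; a depth of
    0 (or less) is treated here as "no reading" and skipped.
    """
    cloud = []
    for v, (rgb_row, depth_row) in enumerate(zip(rgb, depth)):
        for u, (color, d) in enumerate(zip(rgb_row, depth_row)):
            if d <= 0:
                continue  # sensor returned no distance for this pixel
            cloud.append((pixel_to_point(u, v, d), color))
    return cloud
```

Feeding every frame through something like `rgbd_to_point_cloud` gives a robot a cloud of colored points in the camera's frame, which is the raw material for the obstacle avoidance and 3D mapping tricks in the list below.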

We've chosen our top 10 favorite examples of how Kinect can be used to make awesome robots. Check them out:

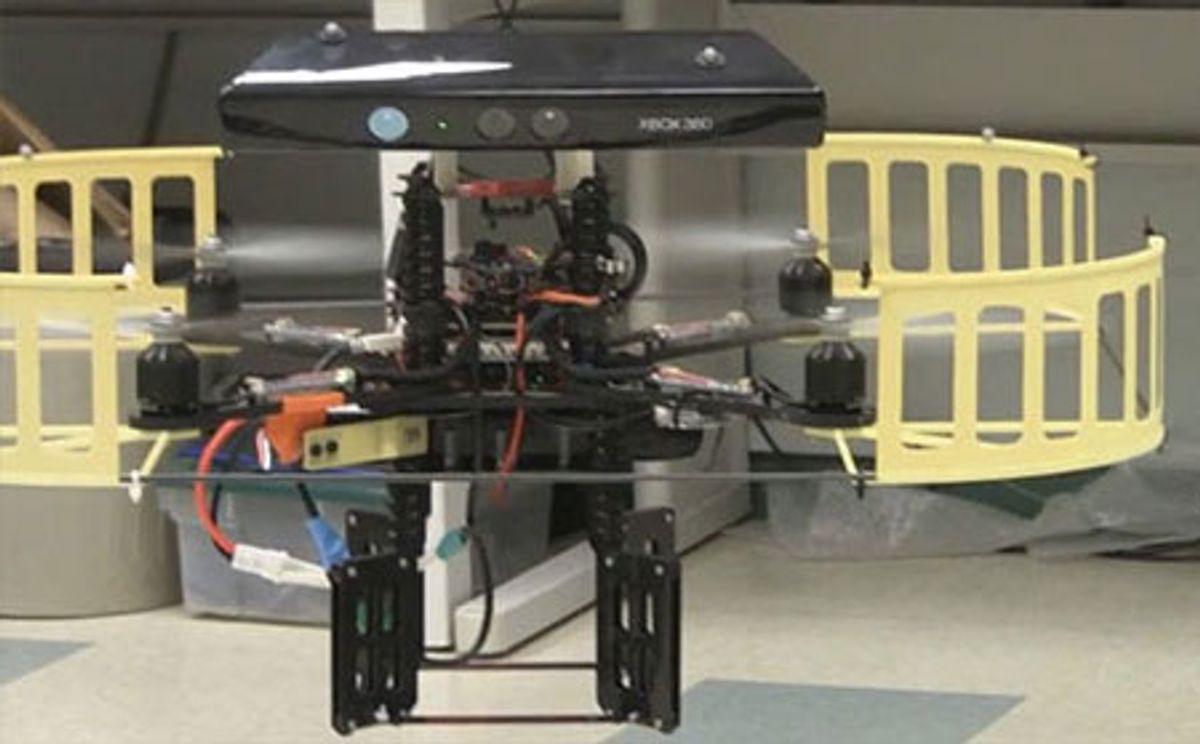

1. Kinect Quadrotor: Bolting a Kinect to the top of a quadrotor creates a robot that can autonomously navigate and avoid obstacles, creating a 3D map as it goes.

2. Hands-free Roomba: Why actually vacuum when you can just pretend to actually vacuum, and then use a Kinect plus a Roomba to do the vacuuming for you?

3. iRobot AVA: iRobot integrated two (two!) Kinect sensors into their AVA not-exactly-telepresence prototype: one to help the robot navigate and another one to detect motion and gestures.

4. Bilibot: The great thing about Kinect is that it can be used to give complex vision to cheap robots, and Bilibot is a DIY platform that gives you mobility, eyes, and a brain in a package that costs just $650.

5. Gesture Surgery: If you've got really, really steady hands, you can now use a Kinect that recognizes hand gestures to control a da Vinci robotic surgical system.

6. PR2 Teleoperation: Willow Garage's PR2 already has 3D depth cameras, so it's kinda funny to see it wearing a Kinect hat. Using ROS, a Kinect sensor can be used to control the robot's sophisticated arms directly.

7. Humanoid Teleoperation: Taylor Veltrop put together this sweet demo showing control over a NAO robot using Kinect and some Wii controllers. Then he gives the robot a banana, and a knife (!).

8. Car Navigation: Back when DARPA hosted their Grand Challenge for autonomous vehicles, robot cars required all kinds of crazy sensor systems to make it down a road. On a slightly smaller scale, all they need now is a single Kinect sensor.

9. Delta Robot: This Kinect-controlled delta robot doesn't seem to work all that well, which makes it pretty funny (and maybe a little scary) to watch.

10. 3D Object Scanning: Robots can use Kinect for mapping environments in 3D, but with enough coverage and precision, you can use it to whip up detailed 3D models of objects (and people) too.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.